In the rapidly evolving landscape of technology, Artificial Intelligence (AI) has moved beyond being a mere tool to becoming the very cornerstone of enterprise operations. Organizations globally are racing to integrate AI, driven by the promise of enhanced efficiency, unprecedented insights, and transformative automation. However, many find themselves grappling with a critical realization: the true hurdle isn’t just the AI models themselves, but the foundational architecture supporting them. Without a robust, well-thought-out architecture, AI implementations often remain fragmented experiments, failing to deliver on their full potential. This article delves into the critical elements of an AI-First architecture, illustrating how successful enterprises are designing their platforms to unlock AI’s true power, moving beyond simply layering AI on top of existing data silos.

The Paradigm Shift: From Data-First to AI-First

For years, the mantra in enterprise technology was “data-first.” Collect all the data, store it, clean it, and then figure out how to use it. While crucial, this approach often treats AI as an afterthought—an application built on top of a data infrastructure not inherently designed for intelligent, autonomous systems. The AI-First paradigm represents a profound shift. It acknowledges that for AI to flourish and drive significant business outcomes, the entire enterprise architecture needs to be re-envisioned with AI at its core. This means building a foundation where intelligence is not an add-on but an intrinsic part of how data is managed, understood, and utilized.

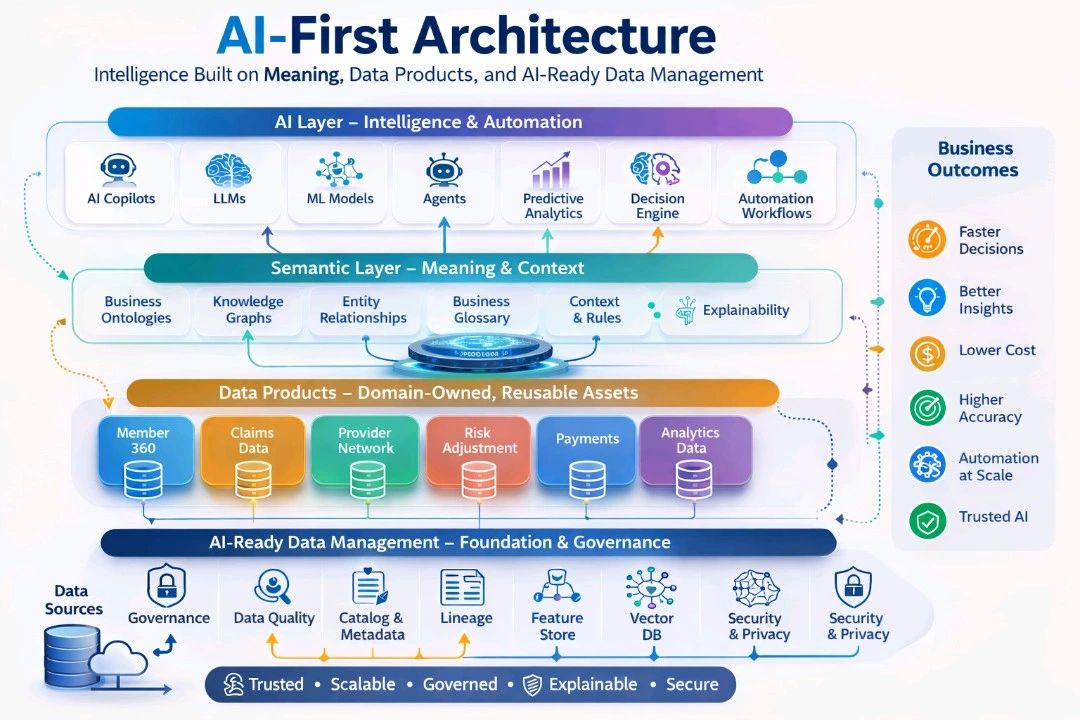

The journey from raw data to impactful business outcomes is no longer a one-way pipeline. The stages below are integrated, and outcomes feed back into the data that starts the next cycle:

Raw Data → Data Products → Semantic Meaning → AI Intelligence → Business Outcomes

This transformation is key to moving beyond AI pilots to achieving true enterprise-wide AI capabilities.

The Pillars of AI-First Architecture

An effective AI-First architecture is built upon four interconnected and interdependent layers. Each layer plays a vital role in ensuring that AI systems are not only performant but also trustworthy, scalable, explainable, and secure.

AI Layer – Intelligence & Automation: The Apex of Autonomy

The pinnacle of the AI-First architecture is the AI Layer, where intelligence and automation converge to drive predictions, insights, and decisive actions. This layer embodies the “action” aspect of agentic AI, closing the loop between perception and execution. It’s where the rubber meets the road, transforming data and semantic understanding into tangible business value.

AI Copilots

AI Copilots are intelligent assistants designed to augment human capabilities. They can range from code-generating copilots for developers to creative writing assistants, data analysis copilots for business users, or even customer support copilots that guide agents through complex interactions. These tools enhance productivity by automating repetitive tasks, providing real-time suggestions, and streamlining workflows, empowering human decision-makers rather than replacing them entirely. They are a prime example of how AI can become embedded in daily operations, driving efficiency and innovation across various functions.

Large Language Models (LLMs)

Large Language Models (LLMs) are at the forefront of generative AI, capable of understanding, generating, and manipulating human language with remarkable fluency. In an AI-First architecture, LLMs are not just for chatbots; they serve as sophisticated reasoning engines. They can be employed for tasks such as summarizing vast amounts of unstructured data, generating reports, translating documents, or even assisting in legal discovery. Their ability to comprehend complex semantic nuances makes them invaluable for extracting meaning from diverse text-based data sources and generating human-like responses.

Machine Learning Models (ML Models)

Beneath the sophistication of LLMs lie traditional Machine Learning (ML) models, which form the backbone for a wide array of predictive and classification tasks. These models are crucial for identifying patterns, forecasting trends, and making informed decisions based on historical data. Examples include fraud detection systems, predictive maintenance algorithms, customer churn prediction, and demand forecasting. The AI-First approach emphasizes the lifecycle management of these models, ensuring they are continuously trained, evaluated, and deployed in a governed manner.

Agents

AI Agents represent a significant leap from passive AI tools to autonomous systems that can plan, act, and learn toward high-level goals. Unlike simple programs, agents can coordinate tools, services, and other agents over time, utilizing memory, feedback, and safety mechanisms. In an enterprise context, this could mean an “Invoice Reconciliation Agent” that automatically matches invoices with purchase orders and payments, or a “Customer Service Agent” that handles routine inquiries and escalates complex cases to human representatives. The rise of agentic AI is fundamentally reshaping how organizations operate, moving from human-centric workflows to agent-orchestrated processes.
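As a rough illustration of the matching step that an "Invoice Reconciliation Agent" might call as one of its tools, here is a minimal sketch. It covers only invoice-to-purchase-order matching, not payments, planning, or learning. The `Invoice` and `PurchaseOrder` classes, the `reconcile` function, and the `tolerance_cents` parameter are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


# Hypothetical record types; real systems would carry many more fields.
@dataclass(frozen=True)
class Invoice:
    invoice_id: str
    po_number: str
    amount_cents: int


@dataclass(frozen=True)
class PurchaseOrder:
    po_number: str
    amount_cents: int


def reconcile(invoices, purchase_orders, tolerance_cents=0):
    """Split invoices into auto-matched IDs and IDs escalated to a human."""
    po_by_number = {po.po_number: po for po in purchase_orders}
    matched, escalated = [], []
    for invoice in invoices:
        po = po_by_number.get(invoice.po_number)
        # Match only when the PO exists and the amounts agree within tolerance.
        if po is not None and abs(po.amount_cents - invoice.amount_cents) <= tolerance_cents:
            matched.append(invoice.invoice_id)
        else:
            escalated.append(invoice.invoice_id)
    return matched, escalated


purchase_orders = [PurchaseOrder("PO-1", 10_000), PurchaseOrder("PO-2", 25_000)]
invoices = [
    Invoice("INV-1", "PO-1", 10_000),  # exact match
    Invoice("INV-2", "PO-2", 24_000),  # amount mismatch
    Invoice("INV-3", "PO-9", 500),     # unknown purchase order
]
matched, escalated = reconcile(invoices, purchase_orders)
```

Routing unmatched invoices to an escalation list rather than guessing mirrors the escalation pattern described above: the routine case is automated and the ambiguous case goes to a person.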

Predictive Analytics

Predictive analytics utilizes statistical algorithms and machine learning techniques to identify the likelihood of future outcomes based on historical data. Within the AI Layer, predictive analytics provides forward-looking insights that enable proactive decision-making. This can include anticipating customer needs, predicting equipment failures, or forecasting market trends. By embedding predictive capabilities directly into operational workflows, organizations can move from reactive responses to anticipatory strategies, gaining a significant competitive edge.

Decision Engine

A decision engine systematizes and automates complex business logic and rules. It acts as the brain that takes the output from ML models, predictive analytics, and even LLMs, and translates them into actionable decisions or recommendations. For instance, a decision engine might automatically approve loan applications based on a credit score prediction from an ML model and a rule set for loan eligibility. This component is critical for scaling automation and ensuring consistency in decision-making across the enterprise, reducing reliance on manual approvals for routine operations.
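The loan example can be sketched as a small rule function that combines a model's predicted credit score with explicit eligibility rules. This is an illustrative sketch only: the thresholds (`min_score`, `max_income_multiple`), the `LoanApplication` fields, and the decision labels are assumptions for the example, not recommended lending policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoanApplication:
    applicant_id: str
    requested_amount: float
    annual_income: float


def decide(application, predicted_credit_score, min_score=680, max_income_multiple=0.5):
    """Return (decision, reason). Thresholds are illustrative assumptions."""
    # Rule 1: the ML model's predicted score must clear a minimum.
    if predicted_credit_score < min_score:
        return "refer_to_human", "predicted credit score below threshold"
    # Rule 2: the requested amount must stay within a share of annual income.
    if application.requested_amount > application.annual_income * max_income_multiple:
        return "refer_to_human", "requested amount exceeds income multiple"
    return "approve", "all rules passed"


approved = decide(LoanApplication("a1", 20_000, 60_000), predicted_credit_score=720)
low_score = decide(LoanApplication("a1", 20_000, 60_000), predicted_credit_score=600)
too_large = decide(LoanApplication("a2", 40_000, 60_000), predicted_credit_score=720)
```

Returning a reason alongside each decision keeps the outcome auditable, and sending failed checks to a human instead of rejecting outright limits automation to the routine cases.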

Automation Workflows

Automation workflows orchestrate the execution of tasks and processes driven by AI. They stitch together various AI components, data services, and legacy systems to create end-to-end automated solutions. This could involve an automated customer onboarding process, a supply chain optimization workflow, or a self-healing IT infrastructure. The AI-First approach emphasizes designing these workflows to be intelligent and adaptive, leveraging AI to dynamically adjust to changing conditions and optimize performance without constant human intervention.

Semantic Layer – Meaning & Context: Bridging the Gap Between Data and Understanding

Beneath the dynamic AI Layer lies the Semantic Layer, a crucial component that imbues raw data with meaning and context, enabling AI systems to “understand” the enterprise rather than merely processing bits and bytes. This layer is especially vital for agentic AI, allowing agents to reason about complex goals and context. Without a rich semantic foundation, AI outputs can be brittle, inconsistent, and difficult to explain.

Business Ontologies

Business ontologies are formal descriptions of concepts and relationships within a specific domain. Think of them as a structured vocabulary that defines the entities, attributes, and relationships relevant to an organization’s operations. For example, in a healthcare context, an ontology would define terms like “Patient,” “Doctor,” “Appointment,” “Diagnosis,” and the relationships between them. This structured knowledge provides a common understanding that AI systems can leverage for consistent interpretation and reasoning across diverse data sources. An “ontology-first” approach is gaining traction, where business concepts are modeled before being mapped to data schemas, improving AI’s traceability and resilience.

Knowledge Graphs

Knowledge graphs extend business ontologies by linking numerous data points and entities through their defined relationships, creating a rich network of interconnected information. While an ontology defines the types of things and their relationships, a knowledge graph populates those types with actual instances and specific connections. For an AI, a knowledge graph provides a powerful way to traverse related information, perform sophisticated queries, and infer new facts, much like a human would connect different pieces of information. This enables AI systems to answer complex questions that require synthesizing information from multiple sources.
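To make the ontology versus knowledge graph distinction concrete, the following sketch stores the ontology as a set of allowed (type, relationship, type) signatures and the knowledge graph as instances checked against it. The `KnowledgeGraph` class, the relationship names, and the identifiers are hypothetical. Production systems typically use a graph database or an RDF store rather than in-memory dictionaries.

```python
from collections import defaultdict

# Ontology: which relationship types are allowed between which entity types.
# Entity types follow the healthcare example; relationship names are assumed.
ONTOLOGY = {
    ("Patient", "has_appointment", "Appointment"),
    ("Appointment", "with_doctor", "Doctor"),
    ("Patient", "has_diagnosis", "Diagnosis"),
}


class KnowledgeGraph:
    """Holds concrete instances and rejects facts the ontology does not allow."""

    def __init__(self, ontology):
        self.ontology = ontology
        self.types = {}                 # entity id -> entity type
        self.edges = defaultdict(list)  # subject id -> [(predicate, object id)]

    def add_entity(self, entity_id, entity_type):
        self.types[entity_id] = entity_type

    def add_fact(self, subject, predicate, obj):
        signature = (self.types[subject], predicate, self.types[obj])
        if signature not in self.ontology:
            raise ValueError(f"{signature} is not allowed by the ontology")
        self.edges[subject].append((predicate, obj))

    def follow(self, start, *predicates):
        """Traverse a chain of relationships starting from one entity."""
        frontier = [start]
        for predicate in predicates:
            frontier = [
                obj
                for node in frontier
                for (pred, obj) in self.edges.get(node, [])
                if pred == predicate
            ]
        return frontier


kg = KnowledgeGraph(ONTOLOGY)
kg.add_entity("patient:1", "Patient")
kg.add_entity("appt:7", "Appointment")
kg.add_entity("doctor:3", "Doctor")
kg.add_fact("patient:1", "has_appointment", "appt:7")
kg.add_fact("appt:7", "with_doctor", "doctor:3")

# "Which doctors is this patient scheduled to see?" is a two-hop traversal.
doctors = kg.follow("patient:1", "has_appointment", "with_doctor")
```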

Entity Relationships

Entity relationships explicitly define how different entities within the enterprise context are connected. This goes beyond simple data linkages to represent the logical and business-level connections. For example, a “Customer” entity might have a “buys” relationship with a “Product” entity, and a “has” relationship with an “Address” entity. Clearly defined entity relationships are fundamental for AI to build a comprehensive understanding of the business landscape, allowing it to perform tasks like personalized recommendations or cross-selling by understanding customer behaviors and preferences.

Business Glossary

A business glossary provides a standardized definition for key business terms and metrics across the organization. It acts as a single source of truth for business language, ensuring that everyone, including AI systems, uses and interprets terms consistently. For instance, defining “Active User” or “Customer Lifetime Value” precisely eliminates ambiguity and reduces errors in AI models and reports. This consistency is paramount for building trusted AI systems and facilitating effective communication between human stakeholders and AI tools.
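One way to keep a term such as "Active User" unambiguous is to store its definition next to the parameters that compute it, so that reports and models share the same logic. The sketch below assumes a definition based on a 30-day login window. The definition, the `GlossaryTerm` class, and the `active_users` function are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class GlossaryTerm:
    name: str
    definition: str
    window_days: int


# Assumed definition for illustration; each organization sets its own.
ACTIVE_USER = GlossaryTerm(
    name="Active User",
    definition="A user with at least one login in the trailing window.",
    window_days=30,
)


def active_users(login_events, as_of, term=ACTIVE_USER):
    """Return the set of user IDs that satisfy the glossary definition."""
    cutoff = as_of - timedelta(days=term.window_days)
    return {user for user, day in login_events if cutoff < day <= as_of}


events = [
    ("u1", date(2024, 5, 20)),
    ("u2", date(2024, 3, 1)),   # outside the 30-day window
    ("u1", date(2024, 5, 28)),
]
result = active_users(events, as_of=date(2024, 5, 31))
```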

Context & Rules

This component involves establishing the specific operational context for AI and defining the business rules that govern its behavior. Context might include the time of day, geographic location, user role, or the specific business process being executed. Rules are explicit guidelines or constraints that AI systems must adhere to. For example, a rule might state that “no discount exceeding 15% can be applied without managerial approval.” By embedding context and rules, AI systems can make decisions that are not only intelligent but also compliant with business policies and sensitive to operational realities. This layer also contributes significantly to the explainability of AI’s actions.
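The discount rule above can be expressed as an explicit check that an AI system calls before acting. This is a minimal sketch: the `Context` fields and the `check_discount` function are hypothetical names, and discounts are represented as fractions (0.15 for 15%).

```python
from dataclasses import dataclass

MAX_UNAPPROVED_DISCOUNT = 0.15  # the 15% limit from the rule above


@dataclass(frozen=True)
class Context:
    # Hypothetical context fields; a real system would carry more.
    business_process: str = "checkout"
    manager_approved: bool = False


def check_discount(discount, context):
    """Return (allowed, reason) for a proposed discount fraction."""
    if not 0 <= discount <= 1:
        raise ValueError("discount must be a fraction between 0 and 1")
    if discount <= MAX_UNAPPROVED_DISCOUNT:
        return True, "within standard limit"
    if context.manager_approved:
        return True, "approved by manager"
    return False, "discount above 15% requires managerial approval"


small = check_discount(0.10, Context())
blocked = check_discount(0.20, Context())
approved = check_discount(0.20, Context(manager_approved=True))
```

Because every result carries a reason string, the same check that enforces the policy also produces the explanation for why an action was allowed or blocked.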

Explainability

Explainability in AI refers to the ability to understand why an AI system made a particular decision or prediction. In the Semantic Layer, explainability is built in by design, not as an afterthought. By providing AI with a clear semantic understanding of the business domain, its decisions can be traced back to specific concepts, relationships, and rules within the knowledge graph and ontology. This transparency is critical for building trust, meeting regulatory requirements (especially in regulated industries), and for human users to audit and refine AI behavior.

Data Products – Domain-Owned, Reusable Assets: The Fuel for Intelligent Systems

Bridging the Semantic Layer and the underlying data infrastructure are Data Products. These are not merely raw datasets; they are domain-owned, curated, and reusable assets designed to serve as reliable, high-quality inputs for AI systems. They represent a shift from centralized, monolithic data lakes to distributed, independently managed data units that adhere to clear contracts and standards.

Member 360

A “Member 360” data product provides a comprehensive, unified view of a customer or member. This aggregates data from various sources such as demographic information, purchase history, interaction logs, support tickets, and web activity into a single, cohesive profile. For AI, this normalized and contextualized view is invaluable for tasks like customer segmentation, personalized marketing, churn prediction, and understanding customer journeys. It eliminates the need for individual AI models to stitch together disparate customer data points, ensuring consistency and accuracy.
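As a simplified sketch of how such a unified profile might be assembled, the function below joins three hypothetical sources on a shared `member_id`. The source shapes and field names are assumptions for illustration. A real Member 360 would also handle identity resolution, conflicting values, and freshness.

```python
def build_member_360(member_id, demographics, purchases, tickets):
    """Combine per-source records into one profile dictionary."""
    profile = {"member_id": member_id, **demographics.get(member_id, {})}

    member_purchases = [p for p in purchases if p["member_id"] == member_id]
    profile["purchase_count"] = len(member_purchases)
    profile["total_spend_cents"] = sum(p["amount_cents"] for p in member_purchases)

    profile["open_tickets"] = sum(
        1 for t in tickets if t["member_id"] == member_id and t["status"] == "open"
    )
    return profile


# Hypothetical source extracts.
demographics = {"m1": {"age_band": "35-44", "region": "EU"}}
purchases = [
    {"member_id": "m1", "amount_cents": 4_000},
    {"member_id": "m1", "amount_cents": 6_000},
    {"member_id": "m2", "amount_cents": 1_000},
]
tickets = [
    {"member_id": "m1", "status": "open"},
    {"member_id": "m1", "status": "closed"},
]

profile = build_member_360("m1", demographics, purchases, tickets)
```

Downstream models then read `profile` instead of re-implementing these joins, which is the consistency benefit described above.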

Claims Data

In industries like insurance or healthcare, “Claims Data” is a crucial data product. This would encompass all information related to a claim: submission details, claim status, associated medical procedures, costs, and outcomes. Standardizing claims data as a data product allows AI systems to perform fraud detection, process claims more efficiently, identify patterns for risk assessment, and improve overall operational workflows. Its structured nature and clear ownership ensure that AI models receive trusted and consistent inputs.

Provider Network

For industries involving service providers, a “Provider Network” data product centralizes information about all affiliated providers. This includes credentials, service offerings, locations, performance metrics, and compliance status. AI can leverage this data product to optimize provider matching for customers, identify gaps in network coverage, manage provider relationships, and even assess the quality of care or service. This domain-specific data product ensures that AI applications built upon it have access to reliable and up-to-date provider information.

Risk Adjustment

“Risk Adjustment” data products are critical in financial services and healthcare for accurately assessing risk profiles. This could involve data on credit scores, health conditions, historical risk events, and demographic factors. By consolidating and standardizing this information, AI models can more accurately calculate risk scores, determine appropriate insurance premiums, or assess lending eligibility. Such data products are vital for ensuring fair and compliant AI decision-making.

Payments

A “Payments” data product consolidates all transaction-related information, including payment methods, transaction amounts, dates, statuses, and associated invoices or orders. This is essential for financial reconciliation, fraud detection, revenue forecasting, and understanding customer spending patterns. AI systems utilizing this data product can automate payment processing, identify suspicious transactions, and provide insights into financial health.

Analytics Data

“Analytics Data” as a data product curates and delivers data specifically prepared for analytical purposes. This might include aggregated sales figures, website traffic metrics, marketing campaign performance, or operational dashboards. While other data products are more transactional, analytics data products are optimized for reporting, business intelligence, and feeding into predictive models that require summarized or pre-processed information. They ensure that AI and human analysts have access to consistent and high-quality data for deriving insights.

AI-Ready Data Management – Foundation & Governance: The Bedrock of Trust

At the very base of the AI-First architecture lies AI-Ready Data Management. This foundational layer is concerned with the holistic governance, quality, accessibility, and security of all enterprise data, making it trusted, scalable, explainable, and secure. Without this robust foundation, even the most advanced AI models and semantic layers will falter due to fragmented or unreliable data. It addresses the challenges of ensuring data truly fuels AI, rather than hindering it.

Data Sources

The journey begins with Data Sources, the origin points of all enterprise data. These are diverse: operational databases (transactional systems, ERP, CRM), external feeds (market data, social media), IoT sensor data, streaming data, and unstructured content (documents, emails, images). The AI-Ready paradigm emphasizes treating these sources not as isolated silos but as integral parts of a unified data ecosystem that can be ingested, processed, and transformed for AI consumption. This requires robust integration strategies to connect and extract data effectively.

Governance

Data Governance is paramount in an AI-First world. It establishes the policies, processes, and responsibilities for managing and protecting data assets. This includes defining data ownership, access controls, data retention policies, and compliance with regulations (like GDPR or HIPAA). Effective governance ensures that AI systems operate within ethical boundaries, use data responsibly, and comply with legal requirements, fostering trust in AI outputs. It is vital for avoiding the “AI Failure Zone,” in which fragmented data infrastructure keeps AI from delivering real business outcomes.

Data Quality

Poor data quality is a leading cause of AI project failures. The AI-Ready foundation prioritizes Data Quality through processes like data profiling, cleansing, standardization, and validation. It ensures that the data fed to AI models is accurate, complete, consistent, and timely. This involves establishing data quality rules, monitoring data health, and implementing remediation processes. High-quality data is non-negotiable for AI to generate reliable insights and make sound decisions.
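Data quality rules of the kind described above can be sketched as simple completeness and range checks over records. The `check_quality` function, the field names, and the allowed range are hypothetical. Dedicated data quality tools add profiling, scheduling, and alerting on top of checks like these.

```python
def check_quality(records, required_fields, valid_ranges):
    """Return a list of (record_index, field, problem) tuples."""
    issues = []
    for index, record in enumerate(records):
        # Completeness: required fields must be present and non-empty.
        for field in required_fields:
            if record.get(field) in (None, ""):
                issues.append((index, field, "missing"))
        # Validity: numeric fields must fall inside their allowed range.
        for field, (low, high) in valid_ranges.items():
            value = record.get(field)
            if value is not None and not (low <= value <= high):
                issues.append((index, field, "out_of_range"))
    return issues


# Hypothetical claims records.
records = [
    {"claim_id": "c1", "amount": 120},
    {"claim_id": "", "amount": -5},
    {"claim_id": "c3"},
]
issues = check_quality(
    records,
    required_fields=["claim_id", "amount"],
    valid_ranges={"amount": (0, 1_000_000)},
)
```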

Catalog & Metadata

A comprehensive Data Catalog and robust Metadata management are essential for discoverability and understanding. The catalog acts as an inventory of all data assets, while metadata provides descriptive information about each asset (e.g., schema, data types, origin, usage, ownership, refresh frequency). For AI, metadata is crucial for understanding the context and lineage of data, helping models interpret data correctly and allowing data scientists to quickly find relevant datasets for their projects. It also aids in compliance and auditing.

Lineage

Data Lineage provides a clear audit trail of data’s journey, from its origin through all transformations and usages, to its final destination in an AI model or report. Understanding data lineage is critical for debugging AI models, ensuring data provenance, and demonstrating compliance. If an AI model produces an unexpected result, tracing its inputs back through the lineage can help identify potential data quality issues or erroneous transformations. This transparency is fundamental for explainable and trustworthy AI.
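Tracing a model's inputs back to their sources amounts to walking a dependency graph upstream. The sketch below assumes lineage is recorded as a mapping from each asset to its direct inputs. The asset names and the `upstream` function are hypothetical.

```python
# Hypothetical lineage records: asset -> the assets it was derived from.
LINEAGE = {
    "churn_model_v3": ["member_360"],
    "member_360": ["crm.customers", "web.clickstream_clean"],
    "web.clickstream_clean": ["web.clickstream_raw"],
}


def upstream(asset, lineage):
    """Return every asset that directly or indirectly feeds `asset`, sorted."""
    seen = set()
    stack = list(lineage.get(asset, []))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(lineage.get(node, []))
    return sorted(seen)


sources = upstream("churn_model_v3", LINEAGE)
```

If the model behaves unexpectedly, `sources` is the list of datasets and transformations to inspect first.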

Feature Store

A Feature Store is a centralized repository for storing, managing, and serving machine learning “features.” Features are the specific, engineered data points that ML models use for training and inference. By providing a consistent and version-controlled source for features, a feature store helps guard against data leakage, keeps feature values consistent between training and production environments, and accelerates model development and deployment. It is a critical component for operationalizing ML models at scale.
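The core idea, a single versioned definition per feature shared by training and serving, can be sketched with an in-memory store. The `FeatureStore` class and its methods are hypothetical and do not represent the API of any particular product. Real feature stores add offline and online storage, point-in-time lookups, and monitoring.

```python
class FeatureStore:
    """Toy in-memory feature store keyed by (feature name, version)."""

    def __init__(self):
        self._definitions = {}  # (name, version) -> function computing the feature
        self._values = {}       # (name, version, entity_id) -> computed value

    def register(self, name, version, fn):
        key = (name, version)
        if key in self._definitions:
            # Definitions are immutable; changing logic requires a new version.
            raise ValueError(f"{key} already registered; bump the version")
        self._definitions[key] = fn

    def materialize(self, name, version, entity_id, raw):
        """Compute and store a feature value from raw data."""
        value = self._definitions[(name, version)](raw)
        self._values[(name, version, entity_id)] = value
        return value

    def get(self, name, version, entity_id):
        """Serve the stored value; training and inference both read from here."""
        return self._values[(name, version, entity_id)]


def average_order_value(raw):
    return sum(raw["orders"]) / len(raw["orders"])


store = FeatureStore()
store.register("avg_order_value", 1, average_order_value)
store.materialize("avg_order_value", 1, "m1", {"orders": [10, 20, 30]})

training_value = store.get("avg_order_value", 1, "m1")
serving_value = store.get("avg_order_value", 1, "m1")
```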

Vector Database

With the rise of large language models and other deep learning techniques, Vector Databases have become an increasingly important part of the AI-Ready foundation. These databases specialize in storing and querying “vector embeddings,” which are numerical representations of complex data (like text, images, or audio) that capture their semantic meaning. Vector databases enable efficient similarity searches, semantic retrieval, and contextual understanding for AI applications, particularly those powered by LLMs and knowledge graphs.
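Similarity search over embeddings can be illustrated with a brute-force cosine similarity ranking. The three-dimensional vectors and document names below are made up for the example; real embeddings come from a model and have hundreds or thousands of dimensions, and vector databases generally rely on approximate nearest-neighbor indexes rather than scanning every vector.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, index, k=2):
    """Return the IDs of the k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query, vector), doc_id) for doc_id, vector in index.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Made-up embeddings for illustration only.
INDEX = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.0],
    "returns_howto": [0.8, 0.2, 0.1],
}

nearest = top_k([1.0, 0.0, 0.0], INDEX, k=2)
```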

Security & Privacy

Data Security and Privacy are paramount considerations across all layers but are foundational here. This involves implementing robust access controls, encryption (at rest and in transit), anonymization techniques, and auditing mechanisms to protect sensitive data from unauthorized access or misuse. For AI, it means ensuring that models are trained and operate on data that is not compromised and that privacy regulations are strictly adhered to. This aspect is continuously reinforced with robust security measures at every touchpoint.
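One common building block here is replacing direct identifiers with keyed hashes before data reaches a training pipeline. The sketch below uses HMAC-SHA256 from the Python standard library. Note that this is pseudonymization rather than full anonymization: anyone holding the key can re-link records. The function names, field names, and the hard-coded key are hypothetical, and a real deployment would load the key from a secrets manager.

```python
import hashlib
import hmac


def pseudonymize(value, secret_key):
    """Return a stable keyed hash of a sensitive value."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record, sensitive_fields, secret_key):
    """Copy a record, replacing sensitive fields with their keyed hashes."""
    return {
        field: pseudonymize(str(value), secret_key) if field in sensitive_fields else value
        for field, value in record.items()
    }


# Placeholder key for illustration; never hard-code real keys.
KEY = b"example-key-not-for-production"

record = {"email": "pat@example.com", "region": "EU", "total_spend_cents": 10_000}
masked = mask_record(record, sensitive_fields={"email"}, secret_key=KEY)
```

Because the hash is deterministic for a given key, the same person maps to the same token across datasets, so joins still work without exposing the raw identifier.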

Moving Beyond “AI on Top of Data”: The Transformative Benefits

By shifting to an AI-First architecture, organizations move beyond fragmented AI prototypes to a robust, scalable, and sustainable AI capability. This architectural shift unlocks a multitude of profound business outcomes:

Faster Decisions

With AI directly integrated into the operational core, supported by rich semantic understanding and high-quality data products, the decision-making cycle dramatically accelerates. AI Copilots provide real-time recommendations, Decision Engines automate routine choices, and Predictive Analytics offer immediate insights, allowing businesses to react, adapt, and innovate at an unprecedented pace.

Better Insights

The combination of the Semantic Layer and AI Layer enables deeper, more sophisticated insights than ever before. AI can reason over vast amounts of data, understand nuanced business contexts through ontologies and knowledge graphs, and uncover hidden patterns that human analysts might miss. This translates into more intelligent market predictions, optimized operational strategies, and a richer understanding of customer behavior.

Lower Cost

Automation workflows driven by AI Agents and Decision Engines reduce manual effort and operational overhead. Streamlined processes, optimized resource allocation from predictive analytics, and increased efficiency across various functions contribute to significant cost savings. Furthermore, by building data products and managing data proactively, organizations reduce the sunk costs associated with poor data quality and fragmented data infrastructure.

Higher Accuracy

AI models trained on trusted, governed, and secure data products, and guided by clear semantic definitions, tend to achieve higher accuracy. The consistency provided by this layered architecture helps reduce bias and errors, making AI outputs more reliable. This accuracy is critical for applications ranging from financial fraud detection to medical diagnostics.

Automation at Scale

The AI-First architecture provides the framework for true automation at scale. AI Agents can autonomously perform complex tasks, and Automation Workflows can orchestrate entire business processes. This moves beyond individual task automation to achieving enterprise-wide operational efficiency, allowing human capital to be reallocated to more strategic and creative endeavors.

Trusted AI

Perhaps the most critical outcome is Trusted AI. By prioritizing governance, data quality, lineage, explainability, and security at every layer, organizations can ensure that their AI systems are not only performant but also ethical, compliant, and transparent. This trust is foundational for user adoption, regulatory acceptance, and overall business confidence in AI-driven initiatives. An AI that cannot be trusted will not be used, regardless of its intelligence.

Operationalizing the AI-First Vision

Implementing an AI-First architecture is not a trivial undertaking. It requires a strategic and phased approach, starting with establishing clear governance and visibility, consolidating shared infrastructure, and then enabling advanced runtime capabilities.

The journey involves:

- Assessing Current State: Understanding existing data infrastructure, AI pilot projects, and organizational capabilities.

- Defining a Semantic Model: Collaboratively developing business ontologies, glossaries, and knowledge graphs that truly reflect the organization’s domain.

- Building Data Product Pipelines: Identifying core business domains and curating high-quality, reusable data products that can feed AI systems.

- Investing in AI-Ready Data Management Tools: Implementing solutions for data governance, quality, cataloging, lineage, feature stores, and vector databases.

- Iterative AI Development: Building AI applications (Copilots, Agents, ML Models) iteratively, ensuring they leverage the underlying semantic and data product layers.

- Establishing AI Governance Frameworks: Defining policies for AI development, deployment, monitoring, and ethical use.

The “AI Failure Zone” often arises when the AI roadmap outpaces the underlying data architecture, leading to fragmented systems where agents, copilots, and chatbots cannot reason over shared, current business context. Overcoming this requires consolidating into a “Contextual Data Layer” that manages meaning, relationships, time, and provenance for AI to function effectively.

The Future is Agentic and Architected

The shift to an AI-First architecture represents one of the most profound changes in enterprise architecture since the advent of cloud computing. It moves organizations from merely deploying AI tools to fundamentally redesigning operations around autonomous systems. This isn’t just about making AI work; it’s about making AI work intelligently, reliably, and ethically across the entire enterprise.

The competitive landscape of tomorrow will be defined not by who has the most AI models, but by who has the most intelligent, integrated, and governed AI architecture. Organizations that embrace this architectural paradigm shift will be the ones that truly unlock the transformative power of AI, driving unprecedented productivity, scalability, and intelligence.

Architecture, in the end, will determine whether AI becomes a mere collection of experiments or a true enterprise capability that reshapes the future.

Do you want to explore how an AI-First architecture can transform your IoT and IIoT initiatives? Are you ready to move beyond pilots and build a truly intelligent, automated, and trusted enterprise?

Contact us today to discuss your AI-First strategy and how iotworlds.com can help you architect your future. Send an email to info@iotworlds.com.