The rapidly evolving landscape of Artificial Intelligence (AI) presents both unprecedented opportunities and significant challenges. As AI systems become more ubiquitous, their impact on individuals, businesses, and society at large intensifies. This heightened influence necessitates robust regulatory frameworks, with the European Union’s AI Act standing as a landmark example. However, as organizations scramble to prepare for these new regulations, a critical distinction often gets blurred: the difference between AI compliance and AI governance. Many mistakenly treat these two concepts as interchangeable, a misconception that can lead to significant risks, operational inefficiencies, and ultimately, severe penalties.

This article delves deep into the fundamental differences between AI governance and AI compliance, explaining why a clear understanding of each is paramount for any organization developing, deploying, or utilizing AI systems, especially those operating within the EU’s regulatory orbit. We will explore how compliance focuses on demonstrating adherence to rules through evidence, while governance establishes the operational systems and processes that ensure responsible AI operation throughout its lifecycle. By clarifying these distinctions, we aim to equip businesses with the insights needed to build robust, defensible, and ultimately, responsible AI practices that not only meet regulatory mandates but also foster trust and drive sustainable innovation.

The EU AI Act and the Imperative for Clarity

The EU AI Act, formally Regulation (EU) 2024/1689, is the world’s first comprehensive legal framework for artificial intelligence. It entered into force on August 1, 2024, and applies in phases. Some provisions are already in force, and most obligations for high-risk AI systems become enforceable from August 2, 2026, so understanding its nuances is critical for Chief Compliance Officers and organizations globally. The Act also has extraterritorial reach. Companies headquartered outside the EU are subject to its requirements when they place AI systems on the EU market or when their systems’ outputs are used in the EU.

The Act adopts a risk-based approach. It classifies AI systems by their potential impact on health, safety, and fundamental rights, and obligations escalate accordingly. Under this tiered approach, organizations must accurately assess their AI systems and implement proportionate measures. Fines can be substantial. For the most serious violations, such as prohibited AI practices, they reach up to €35 million or 7% of worldwide annual turnover, whichever is higher. When setting fines, authorities weigh factors such as the measures an operator took to mitigate harm, so documented compliance efforts also matter once something goes wrong. This financial and reputational exposure highlights the urgent need for a precise and effective approach to regulatory readiness.

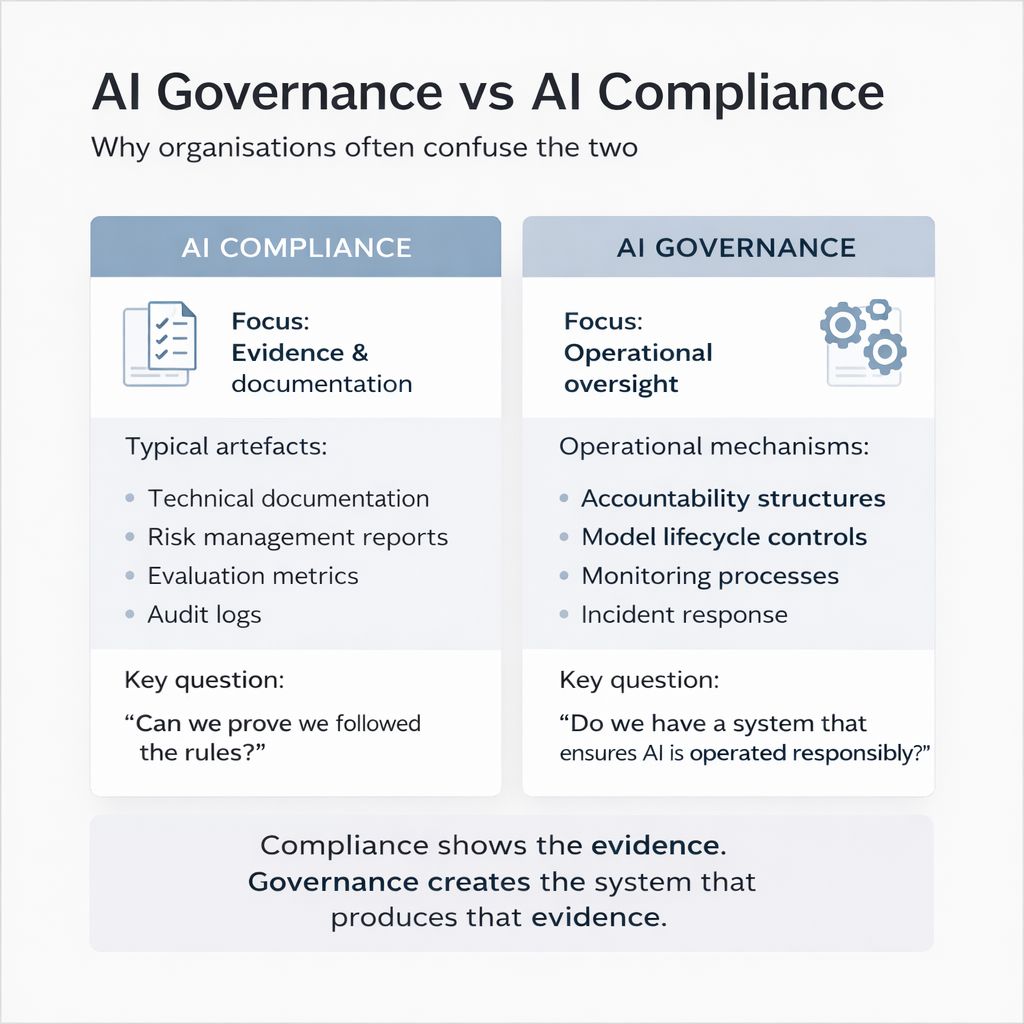

Despite the critical nature of these regulations, a common pitfall emerges: the conflation of AI governance and AI compliance. While these terms are related and interdependent, they represent distinct organizational functions with different objectives and operational mechanisms. A clear understanding of this distinction is not merely academic; it is fundamental to building sustainable, defensible, and responsible AI programs.

Unpacking AI Compliance: The Evidence Trail

AI compliance, at its core, is about demonstrating adherence to specific regulatory requirements. It is an evidence-centric discipline, focused on proving that an organization has followed the rules. Think of it as the “receipts” and “records” that prove due diligence and conformity with legal and ethical mandates.

Focus: Evidence and Documentation

The primary focus of AI compliance is the generation and preservation of tangible evidence and comprehensive documentation. This involves meticulously recording every step taken to meet regulatory obligations. Without this documentation, even if an organization has followed the rules, it cannot prove it in the face of an audit or inquiry.

Typical Artefacts of AI Compliance

To satisfy compliance requirements, organizations must produce and maintain a range of specific documents and records. These “artefacts” serve as the verifiable proof of an organization’s adherence to regulations like the EU AI Act.

Technical Documentation

This includes detailed descriptions of the AI system’s design, development, and functionality. It covers aspects like system architecture, data flow diagrams, models used, and the logic behind critical decision-making processes. For high-risk AI systems under the EU AI Act, the required content is particularly extensive (it is set out in Annex IV) and must be kept up to date throughout the system’s lifecycle.

Risk Management Reports

These reports document the identification, assessment, and mitigation strategies for potential risks associated with the AI system. This includes technical risks (e.g., bias, accuracy issues, security vulnerabilities), social risks (e.g., discrimination, privacy invasion), and ethical risks. These reports demonstrate a proactive approach to understanding and addressing potential harms.

Evaluation Metrics

Documentation of performance metrics, testing methodologies, and validation results is crucial. This includes details of fairness metrics, accuracy scores, robustness assessments, and how these metrics were chosen and applied. It provides empirical evidence of the AI system’s performance against predefined benchmarks and regulatory thresholds.
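The following Python code is a minimal sketch of how evaluation results can become audit-ready evidence. It computes accuracy and a demographic parity difference for binary predictions and stores each value next to the threshold it was judged against. The choice of metrics, the function names, and the threshold values (0.8 and 0.1) are illustrative assumptions. They are not figures taken from the EU AI Act.

```python
from dataclasses import asdict, dataclass


@dataclass
class MetricResult:
    name: str
    value: float
    threshold: float
    higher_is_better: bool

    @property
    def passed(self) -> bool:
        # A metric passes when it lies on the acceptable side of its threshold.
        if self.higher_is_better:
            return self.value >= self.threshold
        return self.value <= self.threshold


def accuracy(y_true, y_pred):
    """Share of predictions that match the ground-truth labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def demographic_parity_difference(y_pred, groups):
    """Largest gap in positive-prediction rate between any two groups."""
    rates = []
    for g in set(groups):
        preds = [p for p, member in zip(y_pred, groups) if member == g]
        rates.append(sum(preds) / len(preds))
    return max(rates) - min(rates)


def evaluate(y_true, y_pred, groups, min_accuracy=0.8, max_parity_gap=0.1):
    """Return evaluation records (value, threshold, pass/fail) ready to archive."""
    results = [
        MetricResult("accuracy", accuracy(y_true, y_pred), min_accuracy, True),
        MetricResult(
            "demographic_parity_difference",
            demographic_parity_difference(y_pred, groups),
            max_parity_gap,
            False,
        ),
    ]
    # asdict() skips properties, so add the pass/fail verdict explicitly.
    return [{**asdict(r), "passed": r.passed} for r in results]
```

An auditor needs to see which benchmark a model was actually held to. Storing the threshold alongside the value, rather than the value alone, makes that visible.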

Audit Logs

Comprehensive audit logs record all significant activities related to the AI system, including data access, model training, deployment changes, and user interactions. When stored in append-only, tamper-evident form, these logs provide a trustworthy historical record. That record is vital for tracing actions, investigating incidents, and demonstrating accountability. It allows auditors to reconstruct events and verify processes.
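One common way to make a log tamper-evident is hash chaining. Each entry stores the hash of its predecessor, so editing any past entry breaks every later link. The sketch below is a hypothetical, in-memory illustration that uses only Python's standard library. A production system would also persist entries and anchor the latest hash somewhere the log's writers cannot alter. Without that anchor, someone able to rewrite the whole chain could recompute every hash.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log where each entry embeds the hash of the previous one."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    def append(self, actor: str, action: str, details: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "details": details,
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash a canonical JSON encoding of every field except the hash itself.
        payload = {k: v for k, v in body.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain was reordered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```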

The Key Question for Compliance

The overarching question that AI compliance seeks to answer is: “Can we prove we followed the rules?” This question emphasizes the objective, verifiable nature of compliance. It’s not enough to intend to follow the rules. One must be able to demonstrate, with verifiable evidence, that they were in fact followed.

Demystifying AI Governance: The System of Oversight

In contrast to compliance’s focus on evidence, AI governance is centered on establishing and maintaining the operational framework that produces that evidence and ensures responsible AI practices are embedded throughout the organization. Governance is about the “how” – how AI systems are managed, controlled, and overseen across their entire lifecycle, from conception to retirement. It’s the engine that drives responsible AI.

Focus: Operational Oversight

The core focus of AI governance is operational oversight. This means setting up the durable, systematic structures, processes, and responsibilities that ensure AI is developed, deployed, and used in a manner consistent with ethical principles, legal requirements, and organizational values. It’s about proactive management rather than reactive documentation.

Operational Mechanisms of AI Governance

Effective AI governance relies on a suite of interconnected operational mechanisms that institutionalize responsible AI practices. These mechanisms provide the continuous control and management required to navigate the complexities of AI development and deployment.

Accountability Structures

These define clear roles, responsibilities, and reporting lines for various aspects of AI development and deployment. This includes identifying who is responsible for data quality, model validation, risk assessments, ethical reviews, and incident response. Clear accountability ensures that there are designated individuals or teams with ownership over different stages of the AI lifecycle, preventing diffuse responsibility.
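Once ownership is recorded in a structured form, accountability structures can be checked mechanically. The sketch below assumes a hypothetical registry that maps each AI system to owners per lifecycle stage. It flags stages nobody owns, and it also flags stages with several owners, following the common RACI convention of a single accountable party.

```python
LIFECYCLE_STAGES = (
    "data_quality",
    "model_validation",
    "risk_assessment",
    "ethical_review",
    "incident_response",
)


def find_accountability_gaps(registry):
    """Flag stages with no accountable owner, or with several competing owners.

    `registry` maps each AI system to {stage: [owner, ...]} (hypothetical format).
    """
    report = {}
    for system, owners in registry.items():
        unowned = [s for s in LIFECYCLE_STAGES if not owners.get(s)]
        shared = [s for s in LIFECYCLE_STAGES if len(owners.get(s) or []) > 1]
        if unowned or shared:
            report[system] = {"unowned": unowned, "shared": shared}
    return report
```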

Model Lifecycle Controls

This refers to the systematic management of AI models from their initial design and data acquisition through training, testing, deployment, monitoring, and eventual decommissioning. It includes robust version control, change management processes, and rigorous validation steps at each stage to ensure models perform as intended and remain compliant.
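The sketch below shows lifecycle controls as a stage-gate state machine. A model may only move along approved transitions, and each move requires named sign-offs. The stage names, transitions, and approval labels are hypothetical placeholders for an organization's own policy.

```python
from enum import Enum


class Stage(Enum):
    DESIGN = "design"
    TRAINING = "training"
    VALIDATION = "validation"
    DEPLOYED = "deployed"
    RETIRED = "retired"


# Hypothetical policy: allowed transitions and the sign-offs each one requires.
TRANSITIONS = {
    (Stage.DESIGN, Stage.TRAINING): {"data_approval"},
    (Stage.TRAINING, Stage.VALIDATION): set(),
    (Stage.VALIDATION, Stage.TRAINING): set(),  # failed validation: retrain
    (Stage.VALIDATION, Stage.DEPLOYED): {"validation_passed", "risk_signoff"},
    (Stage.DEPLOYED, Stage.TRAINING): {"change_request"},
    (Stage.DEPLOYED, Stage.RETIRED): {"retirement_approval"},
}


class GovernedModel:
    def __init__(self, name):
        self.name = name
        self.stage = Stage.DESIGN
        self.history = []  # (from_stage, to_stage, approvals) for every accepted move

    def advance(self, target, approvals=()):
        key = (self.stage, target)
        if key not in TRANSITIONS:
            raise ValueError(f"{self.stage.value} -> {target.value} is not allowed")
        missing = TRANSITIONS[key] - set(approvals)
        if missing:
            raise PermissionError(f"missing approvals: {sorted(missing)}")
        self.history.append((self.stage, target, frozenset(approvals)))
        self.stage = target
```

Every accepted move is appended to `history` together with its approvals. As a result, the control produces its own record of who authorized each promotion.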

Monitoring Processes

Continuous monitoring of AI systems after deployment is a critical governance function. This involves tracking model performance, detecting drift, identifying biases, and observing real-world impacts. Effective monitoring systems trigger alerts when deviations occur, enabling timely intervention and mitigation. This goes beyond mere logging; it’s about active surveillance and performance management.
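Drift detection is one monitoring task that is easy to automate. The sketch below uses the Population Stability Index (PSI), a widely used drift statistic chosen here as one option among several. It compares a feature's live distribution with its training baseline. The equal-width binning and the 0.25 alert threshold follow a common industry rule of thumb. They are assumptions, not regulatory values.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a live sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Equal-width bins over the baseline range; out-of-range values go to edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def check_drift(expected, actual, alert_threshold=0.25):
    psi = population_stability_index(expected, actual)
    return {"psi": psi, "alert": psi >= alert_threshold}
```

In a monitoring job, `check_drift` would run on a schedule for each monitored feature. An alert would then open a ticket for the model's owner.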

Incident Response

A well-defined incident response plan for AI systems is essential. This outlines the procedures for identifying, triaging, investigating, and resolving issues or failures that arise from AI systems (e.g., biased outputs, security breaches, performance degradation). It includes communication protocols, remediation steps, and post-mortems to learn from incidents and prevent recurrence.
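An incident response plan can be encoded so that the process cannot silently skip steps. In this hypothetical sketch, each AI incident moves through fixed statuses and gets a response target based on its severity. It cannot be closed without a written post-mortem. The severity levels and time targets are illustrative internal values, not the reporting timelines the EU AI Act sets for serious incidents.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical internal response targets per severity level.
RESPONSE_TARGETS = {
    "critical": timedelta(hours=4),
    "high": timedelta(hours=24),
    "medium": timedelta(days=3),
    "low": timedelta(days=10),
}
STATUS_FLOW = ("identified", "triaged", "investigating", "resolved", "closed")


@dataclass
class AIIncident:
    system: str
    description: str
    severity: str
    opened_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    status: str = "identified"
    postmortem: str = ""

    def __post_init__(self):
        if self.severity not in RESPONSE_TARGETS:
            raise ValueError(f"unknown severity: {self.severity}")

    @property
    def respond_by(self) -> datetime:
        return self.opened_at + RESPONSE_TARGETS[self.severity]

    def advance(self):
        """Move to the next status; closing requires a written post-mortem."""
        if self.status == "closed":
            raise ValueError("incident is already closed")
        next_status = STATUS_FLOW[STATUS_FLOW.index(self.status) + 1]
        if next_status == "closed" and not self.postmortem.strip():
            raise ValueError("a post-mortem is required before closing")
        self.status = next_status
```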

The Key Question for Governance

The fundamental question that AI governance addresses is: “Do we have a system in place that ensures AI is operated responsibly?” This question highlights the systemic, ongoing nature of governance. It moves beyond individual acts of compliance to the organizational infrastructure designed to keep AI operations consistent, ethical, and lawful.

The Dangerous Illusion of Compliance Without Governance

Many organizations preparing for new regulations, particularly those facing upcoming EU AI Act deadlines, tend to prioritize compliance in isolation. They focus on generating the necessary documentation and evidence without sufficiently investing in the underlying governance structures. This approach, while seemingly efficient in the short term, is fundamentally flawed and creates significant, inherent risks.

Fragmented Documentation

When compliance is pursued without a strong governance framework, documentation often becomes fragmented and inconsistent. Different teams might use varying templates, store information in disparate locations, or generate evidence in an ad-hoc manner. This makes it incredibly difficult to compile a coherent and comprehensive body of evidence for an audit or regulatory inquiry. Auditors require a clear narrative that connects all documentation, and fragmentation hinders this narrative significantly.

Reactive Audit Preparation

Without robust governance, audit preparation typically becomes a frantic, last-minute scramble. Teams are forced to retrospectively gather, organize, and rationalize documents that were not originally created with an integrated compliance strategy in mind. This not only causes immense stress and consumes valuable resources but also increases the likelihood of missing critical pieces of evidence or presenting inconsistent information, which can raise red flags for auditors.

Evidence That Is Difficult to Defend

Even if an organization manages to accumulate a large volume of documentation, its defensibility is compromised without governance. Auditors don’t just look for documents; they look for a clear story of how those documents fit into a systematic approach to responsible AI. Without the overarching context of governance—the defined processes, accountability, and controls—individual pieces of evidence may lack credibility or appear uncoordinated. Regulators will question how the evidence was generated and who was ultimately responsible, issues that governance addresses directly.

Governance Gaps Discovered Too Late

Perhaps the most perilous outcome of neglecting governance in favor of pure compliance is the late discovery of critical operational gaps. An organization might believe it’s compliant because it has a checklist of documents, only to find during an audit (or worse, after an incident) that its actual operational practices do not align with regulatory expectations. For example, a risk management report might exist, but if the underlying risk management process is not adequately integrated with development workflows or lacks clear lines of accountability, the report itself becomes a mere formality, unable to prevent or effectively respond to real-world issues. These gaps, discovered under pressure, can lead to not only non-compliance but also significant real-world harm and reputational damage.

The Symbiotic Relationship: Governance as the Foundation for Defensible Compliance

Rather than opposing forces, AI governance and AI compliance are two sides of the same coin, with governance serving as the essential foundation upon which truly defensible compliance is built.

Governance Creates the System

AI governance is the architectural blueprint and ongoing operational management system for responsible AI. It defines:

- Who is responsible for what (accountability structures).

- How AI systems are developed, deployed, and managed through their entire lifecycle (model lifecycle controls).

- What proactive measures are in place to ensure ongoing safety and performance (monitoring processes).

- How the organization responds when things go wrong (incident response).

This “system” is designed to embed responsible AI practices into the organization’s DNA, ensuring that safety, ethics, and legal adherence are considered at every stage.

Compliance Shows the Evidence

AI compliance then demonstrates that this system is actually working as intended. It collects, organizes, and presents the tangible proof that the governance framework is effective. The technical documentation, risk management reports, evaluation metrics, and audit logs are not just standalone documents; they are the output of a well-governed process. They are the artefacts that confirm the system’s operational integrity and its adherence to regulatory mandates.

The Virtuous Cycle

When governance and compliance work in tandem, they create a virtuous cycle:

- Strong governance ensures that appropriate controls are in place, responsibilities are clear, and systems are continually monitored.

- This robust operational framework naturally generates the necessary evidence and documentation as part of its normal functioning.

- Compliance teams can then efficiently collect, review, and present this organically produced evidence, knowing it is coherent, consistent, and defensible.

- The success of compliance further reinforces the importance of strong governance, leading to continuous improvement and maturity in AI practices.

Without this symbiotic relationship, compliance becomes a burdensome, reactive exercise, always playing catch-up. With it, compliance becomes an integrated, proactive outcome of responsible AI operations.
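A Python decorator can illustrate how a well-governed process produces evidence as a by-product. In this sketch, every call to a governed step automatically records which control it belongs to, who owns it, and whether it succeeded. The control IDs (such as `RM-01`), the owner names, and the in-memory `EVIDENCE` list are hypothetical. A real deployment would write to a durable, access-controlled store.

```python
import functools
from datetime import datetime, timezone

EVIDENCE = []  # hypothetical in-memory store; use a durable, access-controlled one in practice


def governed_step(control_id, owner):
    """Wrap a process step so that each run leaves an evidence record."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            started = datetime.now(timezone.utc).isoformat()
            outcome = "error"  # overwritten only if the step completes
            try:
                result = func(*args, **kwargs)
                outcome = "ok"
                return result
            finally:
                # Runs on success and on failure, so failed steps are recorded too.
                EVIDENCE.append({
                    "control_id": control_id,
                    "owner": owner,
                    "step": func.__name__,
                    "started": started,
                    "outcome": outcome,
                })
        return wrapper
    return decorator


@governed_step(control_id="RM-01", owner="risk-team")
def run_bias_review(model_name):
    # Placeholder for the real review logic.
    return {"model": model_name, "open_findings": 0}
```

With this design, compliance teams never reconstruct evidence by hand. They query records that the governed process wrote as it ran.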

The EU AI Act and the Need for Governance Maturity

As the EU AI Act’s enforcement deadlines loom, the focus shifts from understanding the law to demonstrating readiness. For Chief Compliance Officers, 2026 is the year of execution, when “we are working on it” will no longer be an acceptable answer. Regulators will expect operational controls for data governance, model monitoring, human oversight, documentation, and post-deployment supervision to be in place, tested, and defensible. This calls for governance maturity, not just a pile of documents.

The EU AI Act’s comprehensive nature and stringent requirements necessitate a shift in organizational thinking. It’s not about merely ticking boxes but about fundamentally integrating responsible AI principles into every aspect of an organization’s operations. This is precisely where AI governance shines.

Building Coherent and Defensible Evidence

The primary challenge organizations face is not merely collecting documentation but building the operational governance maturity required to make that evidence coherent and defensible. A fragmented approach, where documentation is generated without a unifying governance strategy, will inevitably fall short.

Consider an organization that has adopted various “Responsible AI” policies but lacks a clear framework for the conformity assessment the EU AI Act requires for high-risk systems. The policies document intent, but the Act requires documented evidence produced through systematic processes. That gap between intent and evidence carries real legal liability, which is why robust governance is urgent.

Moving Beyond Checklists to Systems

Compliance checklists are a starting point, but they are insufficient on their own. The EU AI Act demands a systemic approach. This means:

- Integrating risk management into the AI development lifecycle, not as an afterthought.

- Establishing clear roles and responsibilities from the C-suite down to individual developers.

- Implementing continuous monitoring, not just point-in-time evaluations.

- Developing robust incident response protocols specific to AI failures.

These are all elements of AI governance, and their mature implementation is what will enable organizations to produce compliance evidence that is not only present but also structured, verifiable, and defensible during an audit.

The Competitive Advantage of Proactive Governance

While many organizations view the EU AI Act as a regulatory hurdle, forward-thinking companies recognize that robust AI governance can transform compliance into a competitive advantage. Companies that proactively embrace comprehensive AI governance frameworks aren’t just avoiding fines; they are:

- Reducing operational risks and technical debt: By embedding governance early, organizations prevent costly redesigns and remediation efforts down the line.

- Increasing stakeholder trust and brand value: Demonstrating a commitment to responsible AI builds credibility with customers, partners, and regulators.

- Accelerating AI development cycles: Clear governance frameworks provide guardrails for innovation, allowing development teams to move faster with confidence.

- Enhancing model performance and reliability: Rigorous lifecycle controls and monitoring lead to more accurate, robust, and dependable AI systems.

Accenture highlights that high-performing organizations using AI generate significantly more revenue growth and outperform on customer experience and ESG metrics. These leaders are more likely to be “responsible by design,” meaning they build AI on solid data and AI governance principles across the complete lifecycle. This underscores that effective AI governance is not just a cost of doing business but a strategic investment that drives both compliance and competitive differentiation.

A Three-Layered Approach to AI Governance and Compliance

Navigating the complexities of global AI regulation requires a structured approach. Fernando Lucktemberg proposes a “Three-Layer Stack” for AI governance, which frames how organizations can achieve both compliance and governance maturity. Although the model is presented in terms of AI governance in general, it also shows how governance principles underpin compliance.

Layer 1: Technical Standards and Best Practices (e.g., NIST AI RMF, ISO/IEC 42001)

This foundational layer focuses on the technical implementation and operationalization of responsible AI. Frameworks like the National Institute of Standards and Technology (NIST) AI Risk Management Framework (RMF) or ISO/IEC 42001 provide specific guidance for managing AI risks, ensuring cybersecurity, data privacy, and ethical design at a technical level. These standards often shape the technical documentation, risk management practices, and evaluation metrics that organizations use to meet compliance requirements. This is where engineering teams translate high-level requirements into tangible technical controls.
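The sketch below shows how Layer 1 can translate into concrete controls. It maps a set of internal controls to the four core functions of the NIST AI RMF (GOVERN, MAP, MEASURE, MANAGE) and reports any function left without a supporting control. The function names come from NIST AI RMF 1.0. The control names and their mappings are hypothetical examples.

```python
# The four core functions defined by NIST AI RMF 1.0.
NIST_AI_RMF_FUNCTIONS = ("GOVERN", "MAP", "MEASURE", "MANAGE")

# Hypothetical internal controls and the functions each one supports.
CONTROLS = {
    "AI governance committee charter": {"GOVERN"},
    "AI system inventory and risk tiering": {"MAP"},
    "Fairness and accuracy evaluation": {"MEASURE"},
    "Drift monitoring with alerting": {"MEASURE", "MANAGE"},
}


def coverage(controls):
    """Return controls per function and the list of functions with no control."""
    covered = {
        fn: sorted(name for name, fns in controls.items() if fn in fns)
        for fn in NIST_AI_RMF_FUNCTIONS
    }
    gaps = [fn for fn, names in covered.items() if not names]
    return covered, gaps
```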

Layer 2: Binding Extraterritorial Regulations (e.g., EU AI Act)

The second layer represents the mandatory legal frameworks with enforcement mechanisms and financial penalties. The EU AI Act falls squarely into this category. It translates the principles from Layer 1 into legal obligations and specific conformity assessment requirements. Organizations operating in the EU or targeting EU users must ensure their AI governance structures and corresponding compliance evidence directly address the articles and annexes of the Act. This layer dictates what evidence must be presented to prove adherence.

Layer 3: International Consensus and Principles (e.g., the Bletchley Declaration)

This top layer reflects broader ethical principles, international agreements, and aspirational goals for responsible AI development. While not always directly legally binding, these frameworks influence future regulations and set global expectations for AI. A robust governance strategy will consider these broader principles to future-proof its AI systems and demonstrate leadership in responsible innovation.

A mature AI governance strategy integrates these layers, ensuring that technical standards meet regulatory requirements, which in turn are informed by broader ethical considerations. This layered approach allows for a comprehensive and adaptive response to the evolving AI regulatory landscape.

Implementing a Robust AI Governance Framework: Practical Steps

For organizations looking to move from a reactive compliance mindset to a proactive governance-driven approach, a structured action plan is essential.

1. Conduct an AI Inventory and Risk Assessment

The first step is to comprehensively identify all AI systems in use or in development, and classify them according to a risk typology (e.g., the EU AI Act’s four risk tiers). This assessment should identify potential harms, biases, and compliance gaps. Understanding where your high-risk AI systems are is paramount, as these will require the most stringent governance and compliance measures.
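A first-pass inventory screen can be automated, provided its output is treated as a prompt for legal review rather than a classification. The sketch below assigns each system in a hypothetical inventory to one of the four tiers. It uses simple flags loosely based on the Act's categories. For example, social scoring is prohibited, and employment and creditworthiness assessment appear among the high-risk use cases. The flag names are assumptions, and the Act's actual rules include exceptions that this screen ignores.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = 1
    HIGH = 2
    LIMITED = 3
    MINIMAL = 4


# Hypothetical screening flags, loosely based on the Act's categories.
PROHIBITED_FLAGS = {"social_scoring", "manipulative_techniques"}
HIGH_RISK_FLAGS = {"employment", "creditworthiness", "education",
                   "critical_infrastructure", "law_enforcement"}
TRANSPARENCY_FLAGS = {"chatbot", "synthetic_content"}


def screen(system):
    """Return the most severe tier triggered by a system's flags."""
    flags = set(system.get("flags", []))
    if flags & PROHIBITED_FLAGS:
        return RiskTier.UNACCEPTABLE
    if flags & HIGH_RISK_FLAGS:
        return RiskTier.HIGH
    if flags & TRANSPARENCY_FLAGS:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def triage(inventory):
    """List (name, tier) pairs, most severe first, to prioritize review."""
    return sorted(((s["name"], screen(s)) for s in inventory),
                  key=lambda pair: pair[1].value)
```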

2. Define Accountability Structures

Clearly delineate roles and responsibilities for AI governance. This involves appointing an AI governance committee or a specific individual (e.g., a Chief AI Officer or a designated compliance lead) responsible for overseeing the entire framework. Establish clear lines of reporting and decision-making for AI-related risks and ethical dilemmas. This ensures that “the buck stops somewhere” for responsible AI.

3. Develop Comprehensive AI Policies and Procedures

Create internal policies that translate regulatory requirements and ethical principles into actionable guidelines for AI development, deployment, and use. These should cover:

- Data governance: Policies for data acquisition, quality, privacy, and security.

- Model development and validation: Standards for testing, bias detection, robustness, and performance evaluation.

- Human oversight: Protocols for human intervention and meaningful human control where required.

- Transparency and explainability: Guidelines for documenting AI system logic and communicating its capabilities and limitations.

- Post-market monitoring: Procedures for continuous performance tracking and risk detection.
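Human oversight protocols often reduce to a routing rule that decides which outputs a person must review before they take effect. The sketch below is a minimal illustration. It assumes a hypothetical rule that sends low-confidence predictions and any adverse outcome to a reviewer. The 0.9 confidence floor is an illustrative value an organization would set for itself.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    prediction: str
    confidence: float  # model's confidence in this prediction, between 0 and 1
    adverse: bool      # True if the outcome is unfavourable to the affected person


def route(decision, min_confidence=0.9):
    """Return 'human_review' when a person must confirm the decision, else 'auto'."""
    if decision.adverse or decision.confidence < min_confidence:
        return "human_review"
    return "auto"
```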

4. Implement Robust Model Lifecycle Controls

Embed governance into the entire AI lifecycle. This includes:

- Version control for models and datasets.

- Rigorous documentation requirements at each stage of development.

- Automated testing and validation pipelines that include ethical and fairness checks.

- Secure deployment procedures and change management processes.
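Version control for models and datasets is often implemented by pinning exact artefact contents with cryptographic hashes. The sketch below uses only Python's standard library to write a hypothetical release manifest. The manifest records the SHA-256 of the model file and of each dataset alongside the results of pipeline checks. It blocks release unless every check passed.

```python
import hashlib


def sha256_file(path, chunk_size=1 << 20):
    """Content hash of a file, read in chunks so large artefacts fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(model_path, dataset_paths, checks):
    """Pin exact model and dataset versions next to the results of pipeline checks."""
    return {
        "model": {"path": str(model_path), "sha256": sha256_file(model_path)},
        "datasets": [{"path": str(p), "sha256": sha256_file(p)} for p in dataset_paths],
        "checks": dict(checks),
        # Release only if at least one check ran and every check passed.
        "release_allowed": bool(checks) and all(checks.values()),
    }


def changed_artefacts(manifest):
    """Re-hash every pinned artefact and list those whose content has changed."""
    entries = [manifest["model"], *manifest["datasets"]]
    return [e["path"] for e in entries if sha256_file(e["path"]) != e["sha256"]]
```

Re-hashing later with `changed_artefacts()` shows whether the files an auditor is looking at are the ones that were actually validated and released.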

5. Establish Continuous Monitoring and Incident Response

Deploy tools and processes for continuous monitoring of deployed AI systems. This includes:

- Performance monitoring: Tracking accuracy, drift, and other key metrics.

- Bias detection: Continuous or periodic checks for discriminatory outcomes.

- Security monitoring: Detecting and responding to vulnerabilities or attacks.

- A clear incident response plan: Outlining steps for addressing AI failures, security breaches, and ethical issues, including robust communication plans to stakeholders and regulatory bodies where necessary.

6. Foster a Culture of Responsible AI

Governance is not just about rules; it’s about culture. Provide ongoing training for all relevant staff—developers, product managers, legal teams, and executives—on AI ethics, regulatory requirements, and internal governance policies. Encourage open dialogue, ethical reasoning, and a proactive approach to identifying and mitigating AI risks.

7. Regularly Audit and Adapt

AI governance frameworks are not static. They must be regularly audited internally and potentially by third parties to ensure effectiveness and compliance with evolving regulations. Establish a feedback loop for continuous improvement, adapting policies and procedures based on new technologies, emerging risks, and updated regulatory guidance. This iterative process ensures that the governance framework remains relevant and robust.

Conclusion: Bridging the Gap for Trustworthy AI

The distinction between AI governance and AI compliance is not a semantic nuance but a foundational principle for navigating the future of artificial intelligence. Compliance provides the evidence; governance creates the system that produces that evidence. Ignoring this difference exposes organizations to significant risks, from hefty fines under regulations like the EU AI Act to reputational damage and the erosion of trust.

By prioritizing AI governance, organizations can construct a resilient framework that proactively manages risks, embeds ethical considerations, and systematically generates the comprehensive, defensible evidence required for regulatory compliance. This not only meets legal obligations but also fosters a culture of responsible innovation, builds stakeholder confidence, and ultimately transforms regulatory demands into a distinct competitive advantage. As AI continues its inexorable march into every sector, those who understand and diligently implement robust AI governance will be the leaders shaping a trustworthy and beneficial AI future.

For an in-depth assessment of your AI governance maturity, guidance specific to the EU AI Act, or assistance in developing a comprehensive AI strategy, our experts at IoT Worlds are ready to help. We specialize in transforming complex regulatory challenges into actionable, value-driven solutions. Send an email to info@iotworlds.com to start a conversation about securing your AI future.