Artificial Intelligence (AI) has evolved rapidly from a theoretical concept into an integral component of enterprise operations, bringing substantial gains in innovation and efficiency. That power comes with a commensurate increase in security challenges. As AI systems become more sophisticated and autonomous, traditional perimeter-based security models prove increasingly insufficient. Organizations now face an expanded attack surface, the complexity of managing identity and access across distributed AI microservices, stringent data protection requirements, and the task of defending against AI-powered threats that exploit vulnerabilities at machine speed.

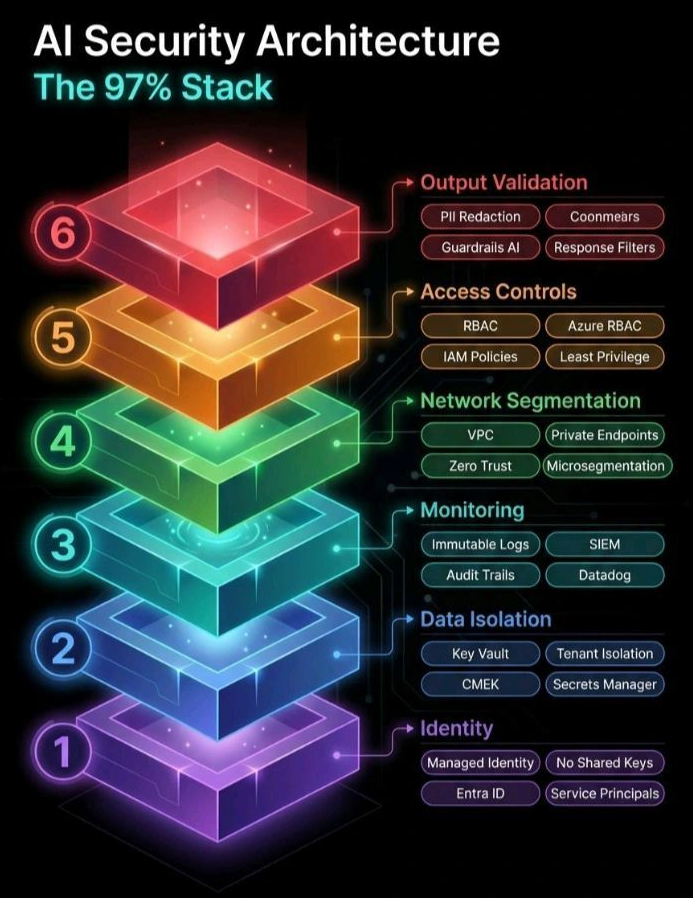

This landscape necessitates a fundamental shift in how we approach security for AI. This article delves into the critical components of a robust AI security architecture, focusing on a layered, defense-in-depth approach – often referred to as “The 97% Stack.” This architectural framework integrates governance, infrastructure, application, and model-level protections across the entire AI lifecycle, ensuring risk reduction and fostering trustworthy AI operations.

The Foundation of Trust: Understanding AI Security Architecture

At its core, AI security architecture refers to a comprehensive, multi-layered strategy designed to protect artificial intelligence systems. It’s not about a single defense but rather a synergistic integration of various controls that collectively safeguard AI from its inception to its ongoing operation. The goal is to build inherent resilience against a spectrum of threats, ranging from data poisoning and model inversion to sophisticated prompt injection attacks and unauthorized access.

The probabilistic nature of AI systems, coupled with natural language as a common control channel, significantly extends the enterprise threat model. Confidentiality, integrity, and availability (the CIA triad) take on new dimensions in AI: data can leak through prompts, tools, or logs; compromised training data can alter model behavior; and “denial of wallet” attacks, in which adversaries trigger expensive inference at scale, can exhaust budgets rather than servers. Moreover, safety emerges as a first-class concern, because guardrails can be bypassed, leading to harmful or reputationally damaging content.
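One way to blunt a “denial of wallet” attack is to check a per-caller spend budget before each inference call. The sketch below is a minimal in-memory illustration, not a production rate limiter; `TokenBudget`, the window length, and the limits are hypothetical names and values chosen for the example.

```python
from collections import defaultdict, deque


class TokenBudget:
    """Sliding-window budget of model tokens per caller (illustrative only)."""

    def __init__(self, max_tokens: int, window_seconds: float):
        self.max_tokens = max_tokens
        self.window_seconds = window_seconds
        # caller id -> deque of (timestamp, tokens) for recent requests
        self._usage = defaultdict(deque)

    def allow(self, caller: str, tokens: int, now: float) -> bool:
        """Record the request and return True if it fits the caller's budget."""
        history = self._usage[caller]
        # Drop entries that have aged out of the window.
        while history and now - history[0][0] >= self.window_seconds:
            history.popleft()
        spent = sum(used for _, used in history)
        if spent + tokens > self.max_tokens:
            return False  # reject before paying for inference
        history.append((now, tokens))
        return True
```

Passing `now` explicitly keeps the sketch deterministic; a real service would likely read a monotonic clock and keep the counters in shared storage.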

The “97% Stack” is a powerful visualization of this defense-in-depth strategy, illustrating six distinct layers, each building upon the last, to create a formidable security posture. We will explore each of these layers in detail, providing a comprehensive understanding of the mechanisms that matter and the protocols emerging to address the unique security challenges of AI.

Layer 1: Identity – The Cornerstone of AI Security

The first and most fundamental layer of any secure AI architecture is robust identity management. Unless you can establish who or what is interacting with your AI systems, every subsequent security measure becomes significantly less effective. In the context of AI, identity extends beyond human users to include services, models, and even individual components communicating with each other. This layer ensures that only authorized entities can access and operate within the AI environment.

Managed Identity for Services

Reliance on static credentials or shared keys introduces significant vulnerabilities. The principle of Managed Identity advocates for assigning unique, automatically managed identities to resources and services within your cloud environment. This eliminates the need for developers to manage credentials, significantly reducing the risk of accidental exposure or compromise.

Think of an AI model running as a service. Instead of manually configuring API keys or passwords for it to access a data store, a managed identity allows the cloud provider to handle the credential rotation and management securely. This not only enhances security but also streamlines development and deployment.
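The pattern can be sketched without any cloud SDK: the service never holds a long-lived secret, and instead asks the platform for a short-lived token and refreshes it before it expires. In the sketch below, `ManagedCredential`, `AccessToken`, and the `fetch` callable are hypothetical stand-ins for whatever identity endpoint the platform provides; they are not a real provider API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class AccessToken:
    value: str
    expires_at: float  # seconds, on the same clock as `now`


class ManagedCredential:
    """Caches a short-lived token and refreshes it shortly before expiry."""

    def __init__(self, fetch: Callable[[float], AccessToken],
                 refresh_margin: float = 60.0):
        # `fetch` is a hypothetical call to the platform's identity endpoint.
        self._fetch = fetch
        self._refresh_margin = refresh_margin
        self._token: Optional[AccessToken] = None

    def get_token(self, now: float) -> str:
        token = self._token
        if token is None or now >= token.expires_at - self._refresh_margin:
            token = self._fetch(now)  # rotation happens here, not in app code
            self._token = token
        return token.value
```

Application code only ever calls `get_token`, so there is no credential to commit to a repository or paste into a configuration file.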

Eliminating Shared Keys

The concept of “No Shared Keys” is a direct extension of managed identity. Shared keys are a notorious source of security breaches. When multiple services or individuals use the same key, a compromise of that key grants access to all associated resources, creating a wide blast radius. By enforcing unique identities for each service and user, and by leveraging managed identity solutions, the risk associated with key compromise is drastically reduced.

Entra ID (Azure Active Directory) and Service Principals

For enterprises operating within the Microsoft Azure ecosystem, platforms like Entra ID (formerly Azure Active Directory) play a pivotal role in establishing and managing identities. Entra ID provides a comprehensive identity and access management (IAM) service that can extend to AI workloads.

Service Principals are a specific type of security identity used by applications, services, and automation tools to access specific Azure resources. They define the policies and permissions for these non-human identities, allowing for fine-grained control over what an AI application can do within the Azure environment. By carefully configuring Service Principals, organizations can adhere to the principle of least privilege, granting only the necessary permissions for an AI service to function.

Layer 2: Data Isolation – Protecting the Crown Jewels

Data is the lifeblood of AI. Training data, inference data, and the sensitive information processed by AI models are often highly confidential, personally identifiable, or proprietary. Consequently, securing this data through robust isolation mechanisms is paramount. This layer focuses on preventing unauthorized access to data, both at rest and in transit, and ensuring its integrity and confidentiality.

Key Vaults and Secure Credential Management

Storing sensitive keys, secrets, and certificates directly within application code or configuration files is a critical security anti-pattern. Dedicated Key Vault services provide a secure, centralized repository for managing cryptographic keys and other secrets. This ensures that sensitive information is encrypted, access is strictly controlled, and rotation policies can be enforced.

For AI systems, this means that connection strings to databases holding training data, API keys for external services, or cryptographic keys used for data encryption are never exposed directly. Instead, the AI application requests these secrets from the Key Vault at runtime, significantly reducing the attack surface.
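A minimal sketch of that runtime lookup follows. It assumes configuration values may contain references of the form `secret://name`, a convention invented here for illustration, and it uses an in-memory `InMemorySecretStore` as a stand-in for a real vault client.

```python
from typing import Mapping, Protocol


class SecretStore(Protocol):
    def get_secret(self, name: str) -> str: ...


class InMemorySecretStore:
    """Test stand-in for a real vault client (hypothetical)."""

    def __init__(self, secrets: Mapping[str, str]):
        self._secrets = dict(secrets)

    def get_secret(self, name: str) -> str:
        return self._secrets[name]  # raises KeyError if the secret is missing


def resolve_config(config: Mapping[str, str], store: SecretStore,
                   prefix: str = "secret://") -> dict:
    """Replace `secret://name` references with values fetched at runtime."""
    resolved = {}
    for key, value in config.items():
        if value.startswith(prefix):
            resolved[key] = store.get_secret(value[len(prefix):])
        else:
            resolved[key] = value
    return resolved
```

The configuration file then carries only the secret's name, so leaking the file does not leak the credential.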

Customer-Managed Encryption Keys (CMEK)

While cloud providers offer default encryption for data at rest, organizations with stringent compliance requirements or enhanced security needs often opt for Customer-Managed Encryption Keys (CMEK). With CMEK, organizations retain control over their encryption keys, adding an extra layer of security and auditability.

In an AI context, this could involve encrypting large datasets used for model training with keys managed by the organization. Even if an attacker were to gain access to the raw storage, the data would remain unintelligible without the CMEK.

Tenant Isolation in Multi-Tenant Environments

Many AI services and platforms operate in multi-tenant cloud environments. In such scenarios, “Tenant Isolation” is crucial. This refers to the architectural and technical measures taken by cloud providers to logically separate the data and resources of different customers (tenants).

For AI, this means ensuring that one organization’s training data, models, and inference requests are completely isolated from another’s. Robust tenant isolation prevents cross-tenant data leakage and ensures that the actions of one tenant do not adversely affect the security or performance of others.
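At the application level, one common way to approximate this is to make the tenant identifier part of every storage key, so that no code path can read a record without naming its tenant. The class below is a simplified, hypothetical illustration of that idea; it does not describe how any particular cloud provider implements isolation.

```python
class TenantScopedStore:
    """Key-value store that namespaces every record by tenant id."""

    def __init__(self):
        self._records = {}  # (tenant_id, key) -> value

    def put(self, tenant_id: str, key: str, value: str) -> None:
        self._records[(tenant_id, key)] = value

    def get(self, tenant_id: str, key: str) -> str:
        try:
            return self._records[(tenant_id, key)]
        except KeyError:
            # Same error whether the key is absent or belongs to another
            # tenant, so callers cannot probe for other tenants' keys.
            raise KeyError(key) from None

    def keys(self, tenant_id: str) -> list:
        return sorted(k for (t, k) in self._records if t == tenant_id)
```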

Secrets Manager

Similar to Key Vaults, Secrets Manager services provide a secure way to store and retrieve credentials, API keys, and other secrets. They often include features like automatic rotation of secrets, fine-grained access control, and integration with other cloud services. This simplifies the management of secrets for AI applications, reducing the operational burden while enhancing security.

Effectively implementing data isolation ensures that even if an attacker manages to penetrate other layers, accessing the core AI data remains a significant hurdle.

Layer 3: Monitoring – Vigilance as a Continuous Defense

Even the most robust security architecture is incomplete without continuous monitoring. The Monitoring layer acts as the eyes and ears of your AI security, providing real-time visibility into system behavior, detecting anomalies, and alerting security teams to potential threats. For AI systems, this vigilance is particularly critical given the dynamic and sometimes unpredictable nature of machine learning models.

Immutable Logs and Audit Trails

At the heart of effective monitoring are “Immutable Logs” and “Audit Trails.” Every action, every access, every change within the AI environment should be meticulously recorded in tamper-proof logs. Immutability ensures that these logs cannot be altered or deleted, providing an undeniable record of events.

Audit trails, on the other hand, provide a chronological record of who accessed what, when, and from where. For AI, this includes logging model invocations, data access patterns, configuration changes, and any abnormal behavior indicative of a security incident. These logs are indispensable for forensic analysis, compliance, and post-incident review.
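One widely used building block for such logs is a hash chain, in which each entry commits to the hash of the entry before it. The sketch below assumes JSON-serializable events and uses illustrative function names. Note that a hash chain makes tampering detectable rather than impossible; actual immutability would still depend on write-once storage or an external anchor for the latest hash.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append_event(log: list, event: dict) -> None:
    """Append `event`, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _digest(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```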

Security Information and Event Management (SIEM)

A “Security Information and Event Management (SIEM)” system is designed to aggregate, correlate, and analyze security data from various sources across an IT infrastructure. For AI security, a SIEM can ingest logs from identity services, data isolation layers, network components, and even the AI applications themselves.

The power of a SIEM lies in its ability to identify patterns and anomalies that might indicate an attack. For instance, a sudden surge in failed login attempts to an AI service, unusual data retrieval patterns, or unexpected model behavior could all be flagged by a SIEM for further investigation.
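The failed-login example can be expressed as a simple correlation rule. This is a toy sketch, not the query language of any real SIEM; the event fields (`type`, `source`, `ts`) and the default threshold are assumptions made for the example.

```python
from collections import defaultdict


def flag_failed_login_bursts(events, threshold=5, window_seconds=60.0):
    """Return sources with at least `threshold` failed logins in any window."""
    failures = defaultdict(list)
    for event in events:
        if event["type"] == "login_failed":
            failures[event["source"]].append(event["ts"])

    flagged = set()
    for source, stamps in failures.items():
        stamps.sort()
        start = 0
        for end, ts in enumerate(stamps):
            # Shrink the window until it spans at most `window_seconds`.
            while ts - stamps[start] > window_seconds:
                start += 1
            if end - start + 1 >= threshold:
                flagged.add(source)
                break
    return flagged
```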

Datadog and Other Observability Platforms

Tools like “Datadog” (and other modern observability platforms) offer comprehensive monitoring capabilities that extend beyond traditional security logging. They provide insights into the performance, health, and behavior of AI applications and infrastructure. This includes metrics, traces, and logs, all correlated to provide a holistic view.

While not exclusively security tools, observability platforms are vital for AI security. They can help detect performance degradation caused by denial-of-service attacks, identify abnormal resource consumption that might indicate a compromised AI agent, or highlight unexpected interactions between AI components that could signal a breach. Their ability to contextualize security events within the broader operational landscape is invaluable.

The monitoring layer enables proactive threat detection and rapid incident response, transforming security from a reactive activity into a resilient, continuous process.

Layer 4: Network Segmentation – Containing the Blast Radius

The distributed nature of modern AI microservices, often built on serverless architectures, can significantly expand the attack surface. A single misconfigured component could provide attackers with a foothold for lateral movement. This makes robust “Network Segmentation” an indispensable layer, designed to logically divide networks into smaller, isolated segments, limiting the scope of potential attacks and preventing unauthorized access between different components of the AI system.

Virtual Private Clouds (VPC)

“Virtual Private Clouds (VPCs)” provide a logically isolated section of a public cloud where organizations can launch their cloud resources in a virtual network that they define. This allows for complete control over the IP address ranges, subnets, route tables, and network gateways.

For AI workloads, a VPC is fundamental for creating a secure, isolated environment for model training, inference, and data storage. By placing different AI components (e.g., data ingestion, model processing, API endpoints) in separate subnets within a VPC, traffic can be controlled and restricted, preventing direct communication between unauthorized components.

Private Endpoints

While VPCs provide overall network isolation, “Private Endpoints” offer a more secure way for services within a VPC to connect to specific public cloud services (like AI model repositories or data lakes) without traversing the public internet. This ensures that all traffic between the VPC and the external service remains within the cloud provider’s private network.

Integrating AI models with data sources often requires connecting to managed services. Private Endpoints remove the need to expose these connections to the public internet, an exposure that carries risk even with strong authentication, thereby enhancing the confidentiality and integrity of data transfers.

Zero Trust Architecture

“Zero Trust” is a security model that operates on the principle of “never trust, always verify.” Instead of establishing a trusted perimeter, Zero Trust assumes that no user, device, or application, inside or outside the network, should be implicitly trusted. Every access request is authenticated, authorized, and continuously validated.

Implementing Zero Trust for AI means that every microservice, every model invocation, and every data access request must be explicitly verified. This not only involves strong authentication but also context-aware authorization based on factors like user role, device posture, location, and the sensitivity of the data being accessed. This significantly elevates the security posture of distributed AI systems.

Microsegmentation

“Microsegmentation” takes network segmentation to an even finer granularity. Instead of segmenting at the subnet level, microsegmentation allows for the creation of isolated security zones for individual workloads, applications, or even specific AI functions. This creates a “default deny” posture, where only explicitly allowed communication pathways are permitted.

For complex AI systems with numerous interdependent microservices, microsegmentation is invaluable. If one microservice is compromised, microsegmentation ensures that the attacker’s ability to move laterally to other services is severely curtailed, effectively containing the potential damage. It’s a critical strategy for minimizing the blast radius of an attack in a serverless, microservices-oriented AI architecture.
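The “default deny” posture can be illustrated as an explicit allowlist of flows between workloads. The service names and ports below are hypothetical; in practice the same idea would be expressed in the policy format of whichever network or service-mesh layer enforces it.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    source: str
    destination: str
    port: int


# Hypothetical allowlist for a small inference pipeline.
ALLOWED_FLOWS = frozenset({
    Flow("api-gateway", "inference", 8443),
    Flow("inference", "feature-store", 5432),
    Flow("inference", "audit-log", 443),
})


def is_allowed(flow: Flow, allowed=ALLOWED_FLOWS) -> bool:
    """Default deny: a flow passes only if it is explicitly listed."""
    return flow in allowed
```

Because the rule is directional, a compromised `inference` workload cannot open a connection back to `api-gateway`, and nothing can reach `feature-store` except `inference`.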

Layer 5: Access Controls – Governing Who Can Do What

Even with robust identity verification and network isolation, it’s essential to define precisely what identified entities are authorized to do within the AI ecosystem. The “Access Controls” layer is dedicated to enforcing permissions, ensuring that users and services only have the necessary privileges to perform their assigned functions. This adheres strictly to the principle of least privilege, a cornerstone of secure systems.

Role-Based Access Control (RBAC)

“Role-Based Access Control (RBAC)” is a foundational access control mechanism where permissions are associated with specific roles, and users or services are then assigned to those roles. Instead of assigning permissions to individual users, which can quickly become unmanageable, RBAC simplifies permission management by centralizing it around job functions.

For AI, this means clearly defining roles such as “AI Data Scientist,” “Model Developer,” “AI Operations Engineer,” or “AI Auditor.” Each role would have a predefined set of permissions – for example, a Data Scientist might have access to specific training data stores and model development environments, while an AI Operations Engineer might have permissions to deploy and monitor models in production, but not to modify the underlying code or access raw sensitive data.
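A minimal sketch of this mapping is shown below. The role names follow the examples above, while the permission strings (such as `training-data:read`) are invented for illustration and do not correspond to any provider's built-in roles.

```python
# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "ai-data-scientist": {"training-data:read", "dev-env:use"},
    "ai-operations-engineer": {"model:deploy", "model:monitor"},
    "ai-auditor": {"audit-log:read"},
}


def permissions_for(roles):
    """Union of the permissions attached to each assigned role."""
    granted = set()
    for role in roles:
        granted |= ROLE_PERMISSIONS.get(role, set())  # unknown roles grant nothing
    return granted


def can(roles, permission: str) -> bool:
    return permission in permissions_for(roles)
```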

Azure RBAC (and Cloud Provider Equivalents)

Cloud providers offer their own implementations of RBAC, seamlessly integrating with their identity and resource management services. “Azure RBAC,” for instance, allows for granular permission assignments on Azure resources at different scopes, from subscriptions down to individual resources.

Leveraging cloud-native RBAC for AI workloads streamlines the management of access policies, ensuring consistency and ease of auditing. It allows organizations to control who can create, train, deploy, and manage AI models, who can access associated data, and who can configure security settings.

IAM Policies (Identity and Access Management)

Beyond RBAC, comprehensive “IAM Policies” provide further granularity and flexibility in defining permissions. These policies are declarative statements that specify what actions are allowed or denied on which resources, under what conditions.

For AI systems, IAM policies can be written to control access to specific API endpoints, to restrict model inference to certain times of day, or to limit data access based on the sensitivity classification of the data. They can enforce conditional access, ensuring that access to critical AI resources is only granted from secure devices or specific network locations.
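To make the evaluation logic concrete, the sketch below implements a simplified policy evaluator: a request is allowed only if at least one Allow statement matches and no Deny statement matches. The statement schema (`effect`, `action`, `resource`, `condition`) is an assumption modeled loosely on common cloud IAM designs, not the exact format or semantics of any one provider.

```python
from fnmatch import fnmatchcase


def evaluate(policies, action, resource, context):
    """Return True only if some Allow matches and no Deny matches."""
    allowed = False
    for stmt in policies:
        if not fnmatchcase(action, stmt["action"]):
            continue
        if not fnmatchcase(resource, stmt["resource"]):
            continue
        # Every condition key must equal the value in the request context.
        condition = stmt.get("condition", {})
        if any(context.get(k) != v for k, v in condition.items()):
            continue
        if stmt["effect"] == "Deny":
            return False  # an explicit deny always wins
        allowed = True
    return allowed  # nothing matched: implicit deny
```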

Principle of Least Privilege

“Least Privilege” is not just a feature but a fundamental security principle that underpins effective access controls. It dictates that users and services should only be granted the minimum permissions necessary to perform their legitimate tasks, and no more.

Applying this to AI means meticulously reviewing and optimizing permissions for every account and service principal involved in the AI lifecycle. For example, a model inference service in production should only have read-only access to the necessary input data and perhaps write access to logging and output data, but never write access to the training data store or model configuration files. Adherence to least privilege significantly reduces the attack surface and minimizes the impact of a compromised credential.
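A permission review of this kind can start from a simple comparison between what a principal has been granted and what it has actually used. The helper below is a rough sketch; the log schema and permission strings are hypothetical, and a real review would also consider how long the observation period needs to be.

```python
def unused_permissions(granted, access_log):
    """Permissions that were granted but never exercised in `access_log`."""
    used = {entry["permission"] for entry in access_log}
    return sorted(set(granted) - used)
```

Anything this returns is a candidate for removal, such as write access to a training data store held by an inference service that never writes to it.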

Layer 6: Output Validation – The Final Frontier

Even after establishing strong identity, isolating data, monitoring activity, segmenting the network, and enforcing stringent access controls, the outputs generated by AI models remain a critical point of vulnerability. The “Output Validation” layer focuses on scrutinizing and filtering AI-generated content before it reaches end-users or downstream systems. This is particularly vital in mitigating risks like prompt injection, data leakage, and the generation of harmful or biased content.

PII Redaction

“PII Redaction” (Personally Identifiable Information Redaction) involves automatically identifying and obfuscating sensitive personal data from AI outputs. AI models, especially large language models, can inadvertently (or maliciously, via prompt injection) regurgitate PII that was present in their training data or provided in a prompt.

Implementing PII redaction ensures that names, addresses, phone numbers, financial information, and other sensitive identifiers are removed or masked before the AI’s response is delivered. This is crucial for compliance with privacy regulations like GDPR and HIPAA, and for protecting user confidentiality.
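As a rough illustration, pattern-based redaction can be sketched with regular expressions. The patterns below are deliberately simple assumptions covering email addresses and US-style phone and Social Security numbers. They will miss many formats and cannot detect names or addresses, which typically require a trained entity recognizer.

```python
import re

# Illustrative patterns only; real deployments need broader coverage.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("PHONE", re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")),
]


def redact_pii(text: str) -> str:
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text
```

In the input `"Reach Jane at jane.doe@example.com or 555-123-4567; SSN 123-45-6789."`, the email, phone number, and SSN are masked, while the name “Jane” passes through untouched, which shows the limit of a regex-only approach.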

Guardrails AI

“Guardrails AI” refers to a set of preventative measures and rules designed to steer an AI model’s behavior within acceptable and safe boundaries. These guardrails are independent of the model itself and act as an external layer of validation and control.

Guardrails can enforce various policies, such as:

- Content Moderation: Preventing the generation of toxic, hateful, or inappropriate content.

- Topic Adherence: Ensuring the AI stays on-topic and does not deviate into unrelated or prohibited subjects.

- Factuality Checks: Integrating mechanisms to cross-reference AI-generated statements with trusted knowledge bases to prevent misinformation.

- Sensitivity Filters: Automatically detecting and blocking responses that touch on sensitive topics that the AI is not authorized to discuss.

Guardrails are particularly important for autonomous AI agents that can execute code, call APIs, and take real-world actions, as their “blast radius of attack” is significantly larger than that of traditional chatbots.
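For agents, one simple external guardrail is to validate every tool call the model proposes before executing it. The sketch below assumes a hypothetical allowlist that maps tool names to the argument names each tool accepts; it is not the API of any specific guardrails library.

```python
# Hypothetical allowlist: tool name -> argument names the tool may receive.
ALLOWED_TOOLS = {
    "search_docs": {"query"},
    "create_ticket": {"title", "body"},
}


def check_tool_call(name: str, arguments: dict):
    """Return (ok, reason) for a tool call proposed by the model."""
    if name not in ALLOWED_TOOLS:
        return False, f"tool '{name}' is not on the allowlist"
    unexpected = set(arguments) - ALLOWED_TOOLS[name]
    if unexpected:
        return False, f"unexpected arguments: {sorted(unexpected)}"
    return True, "ok"
```

Because the check runs outside the model, a successful prompt injection can still only request actions that the allowlist already permits.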

Response Filters

“Response Filters” are a broader category of validation mechanisms applied to AI outputs. These filters can be rule-based, machine-learning-based, or a combination of both, designed to detect and block undesirable content or behavior.

Examples of response filters include:

- Sanitization: Removing or encoding potentially malicious code snippets (e.g., HTML, JavaScript) from AI-generated text that might be rendered in a web application.

- Length Limits: Preventing excessively long or resource-intensive outputs.

- Sentiment Analysis: Flagging outputs with highly negative sentiment that might indicate an AI drift or an attempted attack.

- Keyword Blocking: Preventing the use of specific prohibited keywords or phrases.

These filters act as a last line of defense, catching outputs that might have slipped past earlier security layers or that represent new, emergent threats.
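Three of the filters listed above (keyword blocking, length limits, and sanitization) can be combined in a few lines. The function name, the default limit, and the blocked term are assumptions for illustration; the HTML escaping uses Python's standard `html.escape`.

```python
import html


class BlockedResponse(Exception):
    """Raised when a response must not be delivered at all."""


def filter_response(text: str, max_chars: int = 2000,
                    blocked_terms=("internal-only",)) -> str:
    lowered = text.lower()
    for term in blocked_terms:
        if term.lower() in lowered:
            raise BlockedResponse(f"blocked term: {term}")
    if len(text) > max_chars:
        text = text[:max_chars]  # truncate before escaping
    # Encode HTML metacharacters so the text is safe to render in a page.
    return html.escape(text)
```

Escaping can lengthen the string, so `max_chars` here limits the raw model output rather than the rendered result.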

Coonmears (and other emerging techniques for adversarial robustness)

While “Coonmears” isn’t a universally recognized term in mainstream AI security documentation, its inclusion here likely refers to advanced techniques designed to enhance the “adversarial robustness” of AI models and their outputs. Adversarial attacks are a significant threat to AI integrity, where subtle, carefully crafted inputs can cause a model to make incorrect classifications or generate unexpected outputs.

Techniques in this realm might include:

- Adversarial Training: Training models with adversarially perturbed data to make them more resilient to future attacks.

- Defensive Distillation: A technique to harden models against adversarial examples by training a second model on the softened outputs of an initial model.

- Input Sanitization/Filtering: Employing pre-processing steps to detect and neutralize adversarial perturbations in input data before it reaches the model.

- Output Consistency Checks: Monitoring the consistency and reasonableness of AI outputs over time to detect deviations indicative of an adversary.

The continuous fight against AI-powered threats, which can automate reconnaissance and adapt attacks in real-time, requires a deep understanding and implementation of these advanced adversarial robustness techniques [go.aws].

The AI Execution Gap: From Models to Systems

The discussion often fixates on the AI model itself, as if the neural network were the entire attack surface. The “AI Execution Gap” metaphorically represents the chasm between a technically sound AI model and its secure, reliable deployment within an enterprise system. This gap encompasses all the integration points, data flows, protocols, and trust decisions that constitute the broader AI system.

Beyond Model-Centric Security

Many initial AI security conversations prioritize model-level guardrails and content filters. While these are important components of the Output Validation layer, they are insufficient on their own. Incidents still happen because the security focus is too narrow. A truly secure AI system requires a holistic approach that extends security considerations to the entire substrate on which intelligence operates.

The attack surface of an autonomous AI agent is significantly larger than that of a traditional chatbot. Agents that can execute code, invoke APIs, and modify production systems introduce a larger “blast radius of attack,” making comprehensive system-level security imperative.

The Importance of a Systemic View

Bridging the AI Execution Gap requires a systemic view of security, integrating all six layers of the AI Security Architecture. It means understanding how:

- Identity management (Layer 1) securely provisions credentials for AI services.

- Data isolation (Layer 2) protects sensitive training and inference data throughout its lifecycle.

- Monitoring (Layer 3) provides continuous visibility into the entire AI pipeline for anomaly detection.

- Network segmentation (Layer 4) prevents lateral movement of threats within distributed AI components.

- Access controls (Layer 5) enforce the principle of least privilege for every AI interaction.

- Output validation (Layer 6) acts as a final safeguard against malicious or erroneous AI outputs.

Ignoring a single layer creates a weak link that adversaries can exploit. For example, excellent model-level guardrails are useless if an attacker can compromise a service’s identity (Layer 1) and bypass those guardrails through direct manipulation of the underlying infrastructure.

Building Resilient AI: Governance and Compliance

An effective AI security architecture goes hand-in-hand with robust AI governance. While the security stack provides the technical controls, governance provides the overarching framework, policies, and responsibilities necessary to manage AI risk across its lifecycle.

The AI Governance Model Canvas

Organizations need more than just policy-level AI governance; they need system-level governance tailored to each high-impact AI use case [caio.waiu]. The “AI Governance Model Canvas” is a practical tool for designing this specific governance for each AI system, addressing key questions like:

- What is the AI system allowed (and not allowed) to do?

- Who owns it and who signs off on key decisions?

- Which laws, standards, and internal policies must it follow?

- How will it be monitored, audited, and updated over time?

Integrating the technical controls of the AI security architecture with this governance framework ensures that security measures are aligned with business objectives, regulatory requirements, and ethical considerations.

Meeting Regulatory Demands

The complex regulatory environment, with frameworks like GDPR, HIPAA, PCI-DSS, and SOC 2, demands robust security controls and comprehensive audit trails for AI systems [go.aws]. The layered approach of the AI security architecture directly addresses these demands:

- Data Protection: Data isolation (Layer 2) and PII redaction (Layer 6) are critical for GDPR and HIPAA compliance.

- Access Control: RBAC and least privilege (Layer 5) ensure adherence to security principles required by most compliance frameworks.

- Auditability: Immutable logs and audit trails (Layer 3) provide the necessary evidence for regulatory scrutiny.

- Risk Management: The entire defense-in-depth approach is a proactive risk management strategy, aligning with frameworks like the NIST AI Risk Management Framework [caio.waiu].

By systematically implementing each layer of the AI Security Architecture, organizations can confidently navigate compliance requirements and build trustworthy AI systems.

The Future of AI Security: Adapting to Evolving Threats

The security landscape for AI is constantly evolving. Attackers are increasingly leveraging AI to automate reconnaissance, adapt attacks in real-time, and identify vulnerabilities at machine speed [go.aws]. This necessitates a dynamic and adaptable approach to AI security.

AI-Powered Defenses

Just as adversaries use AI, so too must defenses. Future AI security architectures will increasingly incorporate AI-powered threat detection and response mechanisms. This could involve:

- AI-Enhanced SIEM: Leveraging machine learning within SIEM systems to identify sophisticated attack patterns that evade traditional rule-based detection.

- Behavioral Anomaly Detection: Using AI to learn normal behavior patterns of AI models and systems, flagging any significant deviations as potential threats.

- Automated Incident Response: AI-driven automation to respond to detected threats, such as isolating compromised components or revoking temporary access.
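Behavioral anomaly detection can be sketched in its simplest statistical form: learn a baseline for a metric, then flag values that fall far outside it. The z-score rule and the threshold of 3 below are illustrative assumptions; production systems generally use richer models and account for seasonality.

```python
from statistics import mean, stdev


def is_anomalous(baseline, value, z_threshold=3.0):
    """Flag `value` if it lies more than `z_threshold` standard deviations
    from the mean of `baseline` (e.g. requests per minute for one model)."""
    if len(baseline) < 2:
        return False  # not enough history to judge
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold
```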

Continuous Improvement and Research

No security architecture is ever truly “finished.” Organizations must commit to continuous improvement, research, and adaptation. This includes:

- Staying Abreast of Research: Monitoring the latest academic research and industry reports on AI security threats and mitigation strategies (e.g., adversarial machine learning research, prompt injection defense advancements).

- Regular Audits and Penetration Testing: Proactively testing the AI security architecture for vulnerabilities and weaknesses.

- Security by Design: Integrating security considerations from the very outset of AI system design and development, rather than as an afterthought.

The “97% Stack” provides a powerful mental model and a practical roadmap for building a resilient AI security architecture. By diligently implementing each layer—from identity to output validation—organizations can harness the transformative power of AI with confidence, knowing their intelligent systems are protected by a comprehensive and adaptable defense-in-depth strategy.

Empower Your AI Journey with IoT Worlds

Navigating the complexities of AI security architecture requires specialized expertise. At IoT Worlds, our team of seasoned professionals is dedicated to helping organizations like yours design, implement, and maintain robust, defense-in-depth security strategies for your AI initiatives. Whether you’re grappling with identity management for your AI services, establishing secure data isolation, or needing to implement cutting-edge output validation, we provide tailored consultancy services to bridge the AI Execution Gap and ensure your AI systems are secure, compliant, and resilient.

Don’t let security concerns hinder your AI innovation. Take the proactive step towards a secure AI future.

To learn more about how IoT Worlds can assist with your AI security architecture, email us today at info@iotworlds.com.