In today’s interconnected world, data centers stand as the unsung heroes of our digital lives. From the seamless streaming of your favorite shows to the intricate algorithms powering artificial intelligence, every digital interaction relies on a sophisticated physical infrastructure. Understanding the diverse landscape of data centers is no longer just the domain of IT professionals; it’s a strategic imperative for any business looking to thrive in the digital age.

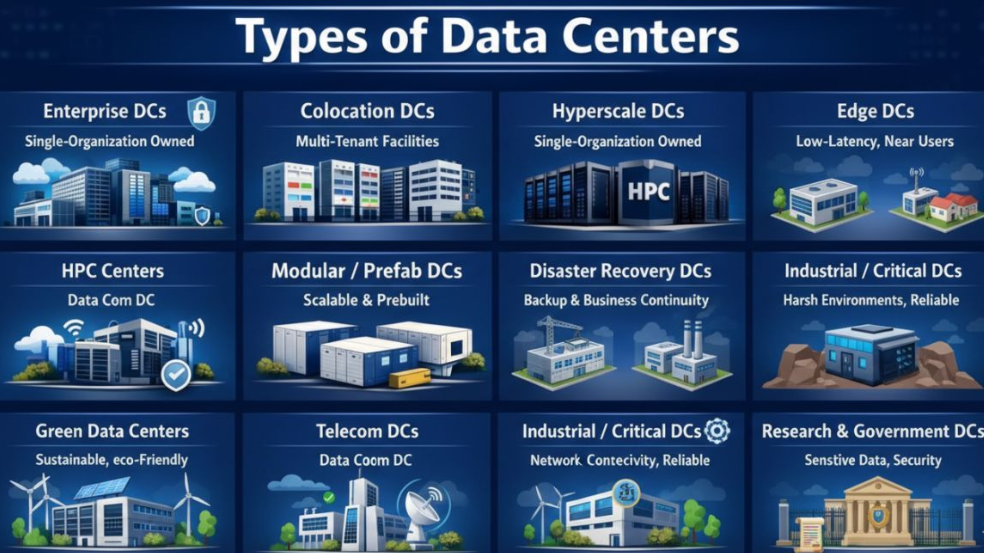

The sheer variety of digital demands means that a one-size-fits-all approach to data infrastructure is simply unfeasible. Different workloads require distinct environments, each optimized for specific performance metrics like speed, security, scalability, or cost-efficiency. This article will delve into 11 core types of data centers, offering a comprehensive guide to their unique characteristics, benefits, and the specific digital needs they address. By understanding these distinctions, organizations can make informed decisions that lay a strong, resilient, and future-ready foundation for their IT operations.

The Foundation: What Exactly is a Data Center?

Before exploring the different types, it is essential to establish a clear understanding of what a data center entails. At its core, a data center is a dedicated physical facility housing critical IT infrastructure such as servers, storage systems, and networking equipment. These components work in harmony to process, store, and transmit vast amounts of data. Beyond the IT gear itself, data centers incorporate sophisticated support infrastructure, including power supply, cooling systems, fire suppression, and physical security measures, all designed to ensure continuous operation and protect valuable digital assets.

Essentially, data centers provide the controlled environment necessary for modern IT operations to function reliably and efficiently. They are the engine rooms of the internet, cloud computing, and virtually every digital service we use daily. The careful design and operation of a data center are paramount for maintaining data integrity, ensuring application performance, and safeguarding against disruptions.

1. Enterprise Data Centers: The Command Center of Your Business

A Dedicated Fortress for Internal Operations

Enterprise Data Centers are the workhorses of many organizations. These facilities are built, owned, and operated by a single company specifically for its internal use, mirroring an in-house IT department on a grander scale. They serve as the central hub for all mission-critical applications, sensitive data, and proprietary systems that are essential to the business’s day-to-day operations.

For industries such as finance, healthcare, or government, where data security and regulatory compliance are paramount, Enterprise DCs offer an unparalleled level of control. Organizations can dictate every aspect of the infrastructure, from hardware specifications and software configurations to security protocols and environmental controls. This level of customization ensures that the data center precisely meets the unique performance, security, and compliance requirements of the business.

Advantages of Ownership and Control

The primary benefit of an Enterprise Data Center is the absolute control it provides. This control extends to:

- Security: Organizations can implement highly specialized physical and cyber security measures tailored to their specific risk profile. This might include multi-factor authentication, biometric access controls, advanced surveillance systems, and dedicated security personnel.

- Customization: Every element, from rack density to cooling solutions and network architecture, can be customized to optimize performance for specific applications and workloads. This is particularly advantageous for highly specialized software or computationally intensive tasks.

- Compliance: Meeting stringent regulatory requirements, such as HIPAA, GDPR, or specific industry standards, is often easier within a fully controlled environment where all processes and data handling can be meticulously audited and managed.

- Performance Optimization: Direct control over hardware and network paths allows for fine-tuned performance optimization, minimizing latency and maximizing throughput for critical applications.

Challenges of Enterprise Data Centers

Despite their advantages, Enterprise Data Centers come with significant challenges. The initial capital expenditure for building such a facility can be substantial, encompassing everything from land acquisition and construction to purchasing and installing all IT and support infrastructure. Furthermore, ongoing operational costs for power, cooling, maintenance, staffing, and security are considerable. Organizations also bear the full responsibility for managing the data center’s lifecycle, including upgrades, disaster recovery planning, and eventual decommissioning. For many businesses, particularly those not in the core business of IT infrastructure, the financial and operational burden can be prohibitive.

2. Colocation Data Centers: Shared Space, Shared Benefits

The Collaborative IT Environment

Colocation Data Centers offer a compelling alternative for organizations that require dedicated hardware but prefer to outsource the management of the physical facility. In a colocation arrangement, a third-party provider owns and operates the building, power, cooling, and network infrastructure, while businesses rent space – typically in racks, cages, or private suites – to house their own servers and networking equipment.

This model allows companies to retain ownership and control over their IT hardware and software stack, often providing a similar level of customization to an Enterprise DC for the IT equipment itself. However, they offload the immense responsibility and cost associated with managing the physical environment to experts. Colocation providers ensure the facility maintains optimal operating conditions, redundancy, and security, allowing clients to focus on their core business operations.

Benefits of the Colocation Model

The appeal of colocation lies in its balance of control and outsourced management:

- Cost-Effectiveness: Reduces capital expenditure significantly, as companies avoid the massive upfront costs of building a data center. Operational costs are also lower due to shared infrastructure and economies of scale enjoyed by the provider.

- Scalability: Businesses can easily scale up or down their physical footprint as their needs evolve, renting more or less space without the complexities of managing a proprietary facility.

- Expert Management: Colocation providers specialize in data center operations, bringing expertise in power management, cooling systems, physical security, and network connectivity. This often translates to higher reliability and uptime.

- Enhanced Connectivity: Many colocation facilities are carrier-neutral, offering access to a wide array of network providers. This provides flexibility, competitive pricing for bandwidth, and improved connectivity options.

- Geographic Diversity: Companies can strategically place their equipment in various colocation facilities to achieve geographic redundancy for disaster recovery purposes, without the need to build multiple separate data centers.

Considerations for Colocation

While beneficial, choosing a colocation provider requires careful consideration. Organizations must evaluate the provider’s security measures, uptime guarantees (Service Level Agreements or SLAs), power redundancy, cooling capabilities, and network options. The “multi-tenant” nature means sharing some infrastructure, so understanding the provider’s operational standards and potential impacts of other tenants is important.
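SLA uptime guarantees are usually stated as a percentage, which can hide how much downtime they actually permit. The sketch below converts an availability percentage into allowed downtime per year. The function name and the 8,760-hour year are illustrative assumptions; real SLAs define their own measurement windows, exclusions (such as scheduled maintenance), and remedies.

```python
# Convert an SLA availability percentage into the downtime it permits.
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; leap years ignored for simplicity (assumption)


def allowed_downtime_hours(availability_pct: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Maximum downtime, in hours, that an availability target allows over a period."""
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability_pct must be between 0 and 100")
    return period_hours * (1.0 - availability_pct / 100.0)


if __name__ == "__main__":
    # Common SLA tiers; each extra "nine" cuts permitted downtime by a factor of ten.
    for target in (99.9, 99.99, 99.999):
        hours = allowed_downtime_hours(target)
        print(f"{target}% uptime -> {hours:.2f} h/year ({hours * 60:.1f} min)")
```

Comparing providers on these absolute figures, rather than on the percentages alone, makes the practical difference between SLA tiers easier to weigh.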

3. Hyperscale Data Centers: The Giants of the Cloud

Massive Scale for Global Cloud Services

Hyperscale Data Centers are the behemoths of the digital landscape, representing the pinnacle of scale, efficiency, and infrastructure investment. These facilities are typically owned and operated by a single large technology company, such as a major cloud service provider (e.g., AWS, Microsoft Azure, Google Cloud) or an internet giant (e.g., Meta). They are designed to support millions of users, enable global-scale services, and power the vast ecosystems of cloud computing and big data.

The defining characteristic of a hyperscale data center is its immense capacity. The largest of these facilities span hundreds of thousands to over a million square feet, house tens of thousands to hundreds of thousands of servers, and draw tens to hundreds of megawatts of power. They are engineered from the ground up for extreme efficiency, reliability, and automated management to handle the enormous and ever-growing demand for digital services.

Engineering for Extreme Performance and Efficiency

Hyperscale data centers are technological marvels, built with several key objectives in mind:

- Unprecedented Scale: They are designed to expand rapidly and efficiently, often utilizing modular designs and standardized components to facilitate quick deployment of new capacity.

- Operational Efficiency: Every aspect is optimized for maximum energy efficiency, from innovative cooling techniques (like liquid cooling) to custom-designed server hardware and sophisticated power distribution systems. This helps manage the colossal operational costs.

- Automation: Extensive automation tools are used for server provisioning, resource allocation, monitoring, and maintenance, minimizing human intervention and maximizing operational speed.

- Global Reach: These facilities are strategically located around the world to ensure low latency and high availability for users across different geographies. They form the backbone of global content delivery networks and cloud platforms.

- Specialized Workloads: They are optimized for highly distributed, large-scale workloads typical of cloud services, AI/ML training, big data analytics, and global web services.

The Backbone of the Digital Economy

Hyperscale data centers are crucial for the modern digital economy. They provide the infrastructure that supports public cloud services, allowing businesses of all sizes to access scalable compute, storage, and networking resources without needing to build their own vast infrastructure. They also enable the development and deployment of advanced technologies like artificial intelligence and machine learning, which require immense computational power. While inaccessible to most individual businesses for direct ownership, their services are consumed ubiquitously through cloud platforms.

4. Edge Data Centers: Bringing Compute Closer to the Action

Low Latency for Real-Time Applications

Edge Data Centers represent a paradigm shift in data processing, moving compute resources away from centralized facilities and closer to the source of data generation and consumption – the “edge” of the network. This proximity dramatically reduces latency, making them indispensable for applications that require real-time processing and immediate responsiveness.

The rise of the Internet of Things (IoT), autonomous vehicles, smart cities, and augmented reality has created an explosion of data that often cannot wait for round trips to distant centralized data centers. Edge DCs are smaller, distributed facilities designed to handle this localized processing, filtering, and analysis of data, sending only essential information back to larger, centralized cloud or hyperscale data centers.

Key Characteristics and Benefits of Edge DCs

Edge Data Centers are defined by their location and purpose:

- Low Latency: By placing compute resources geographically closer to end-users or IoT devices, edge DCs drastically reduce the time it takes for data to travel, crucial for real-time applications.

- Bandwidth Optimization: They process data locally, reducing the amount of raw data that needs to be transmitted over wide area networks (WANs). This conserves bandwidth and lowers data transfer costs.

- Localized Processing: Enables immediate actions based on local data without dependence on a central cloud. This is critical for functions like autonomous driving, industrial automation, and real-time anomaly detection.

- Enhanced Reliability: Distributed edge deployments can offer higher resilience. If one edge data center goes offline, local services might still be maintained by other nearby edge nodes or failover to a central facility.

- Security at the Edge: Initial data processing and anonymization at the edge can enhance security and privacy by reducing the transmission of sensitive raw data.
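The bandwidth-optimization and localized-processing points above can be illustrated with a small sketch of what an edge node might do with one window of sensor data: reduce it to a compact summary, raise an alert locally, and forward only the summary upstream. The record fields, the alert rule, and the JSON size comparison are hypothetical choices for illustration, not a standard edge protocol.

```python
import json
from dataclasses import asdict, dataclass
from statistics import mean


@dataclass
class WindowSummary:
    """Compact record an edge node might forward instead of raw readings (hypothetical format)."""
    sensor_id: str
    count: int
    minimum: float
    maximum: float
    average: float
    alert: bool  # decided locally, so the edge can act without a round trip to the cloud


def summarize_window(sensor_id: str, readings: list[float], alert_threshold: float) -> WindowSummary:
    """Reduce one time window of raw sensor readings to a single summary record."""
    if not readings:
        raise ValueError("readings must not be empty")
    peak = max(readings)
    return WindowSummary(
        sensor_id=sensor_id,
        count=len(readings),
        minimum=min(readings),
        maximum=peak,
        average=mean(readings),
        alert=peak > alert_threshold,
    )


def json_size_bytes(payload) -> int:
    """Size of the JSON encoding, used here as a rough proxy for WAN transfer volume."""
    return len(json.dumps(payload).encode("utf-8"))


if __name__ == "__main__":
    # Hypothetical one-minute window: 600 temperature samples taken every 100 ms.
    raw = [20.0 + 0.01 * i for i in range(600)]
    summary = summarize_window("temp-sensor-01", raw, alert_threshold=30.0)
    print("raw payload bytes:    ", json_size_bytes(raw))
    print("summary payload bytes:", json_size_bytes(asdict(summary)))
    print("local alert raised:   ", summary.alert)
```

The trade-off is that the central facility no longer sees every sample, so what to keep at the edge and what to forward is a per-application design decision.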

Use Cases for Edge Computing

Edge DCs are vital for:

- IoT Deployments: Processing data from thousands or millions of sensors in smart factories, agricultural sites, or urban environments.

- Smart Cities: Managing traffic lights, public safety cameras, environmental sensors, and smart utilities.

- Autonomous Systems: Providing real-time decision-making capabilities for autonomous vehicles, drones, and robots.

- Augmented/Virtual Reality (AR/VR): Delivering immersive experiences that require instantaneous rendering and interaction.

- Content Delivery Networks (CDNs): Caching content closer to users to reduce load times for websites and streaming services.

5. High-Performance Computing (HPC) Centers: The Powerhouses of Discovery

Engineered for Computational Intensity

High-Performance Computing (HPC) Centers are specialized data centers engineered to tackle the most computationally demanding tasks imaginable. Unlike general-purpose data centers, HPC centers are designed from the ground up for extreme processing power, often featuring supercomputers, massive parallel processing capabilities, and advanced cooling systems to manage the intense heat generated by thousands of powerful processors.

These facilities are the engines behind scientific discovery, complex simulations, and cutting-edge research. They power everything from weather forecasting and genetic sequencing to financial modeling and artificial intelligence training. The focus in an HPC center is not just on uptime, but on maximizing raw computational throughput and minimizing the time it takes to complete complex calculations.

Core Components and Applications of HPC

HPC centers are distinct due to their specialized architecture:

- Supercomputers: Comprising thousands of interconnected processors (CPUs and GPUs) working in parallel to solve complex problems far faster than conventional systems.

- Specialized Interconnects: High-speed, low-latency networks are critical to ensure that data can move efficiently between the vast number of processing units, avoiding bottlenecks.

- Advanced Cooling: The dense packing of powerful processors generates immense heat, necessitating sophisticated cooling solutions like liquid cooling or innovative air management systems to maintain optimal operating temperatures.

- Massive Storage: HPC tasks often involve analyzing and generating petabytes of data, requiring ultra-fast, high-capacity storage solutions that can keep pace with the computational speed.

- Specific Workloads:

  - Scientific Research: Performing simulations for climate change, astrophysics, molecular dynamics, and drug discovery.

  - AI/Machine Learning: Training large-scale neural networks for deep learning models.

  - Financial Modeling: Running complex simulations for risk analysis, algorithmic trading, and quantitative finance.

  - Engineering & Manufacturing: Designing and simulating complex products, from aerospace components to automotive crash tests.

  - Big Data Analytics: Processing and analyzing colossal datasets that would overwhelm conventional systems.
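How much faster parallel hardware makes a job depends on how much of that job can actually run in parallel. Amdahl's law, a standard model that is not taken from this article, captures this for a fixed-size problem. The sketch below assumes perfect parallel scaling and ignores communication overhead, so it gives an upper bound. Gustafson's law offers a more optimistic view for workloads that grow with the machine.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Ideal speedup of a fixed-size job under Amdahl's law.

    parallel_fraction is the share of single-processor run time that can be spread
    across processors; the remainder runs serially no matter how many are added.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


if __name__ == "__main__":
    # Hypothetical job that is 95% parallelizable: speedup approaches, but never exceeds, 1 / 0.05 = 20.
    for n in (1, 16, 1024, 100_000):
        print(f"{n:>7} processors -> {amdahl_speedup(0.95, n):.2f}x")
```

The serial share quickly dominates, which is one reason HPC centers invest heavily in fast interconnects and in software that keeps the non-parallel portion of a workload as small as possible.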

The Role in Innovation

HPC centers are pivotal to innovation across virtually all scientific and industrial sectors. They enable breakthroughs that would be impossible with standard computing resources, driving progress in fields critical to human advancement and economic competitiveness. Their specialized nature means they are often found in universities, national laboratories, and large research-driven corporations.

6. Modular / Prefabricated Data Centers: Scalability on Demand

Rapid Deployment and Flexible Expansion

Modular or Prefabricated Data Centers represent a shift from traditional stick-built construction to a more agile, industrialized approach. These data centers are built off-site in factory-controlled environments, often as self-contained units or modules that include all necessary IT and support infrastructure (racks, power, cooling, fire suppression). Once constructed, these modules are transported to the deployment site and assembled, significantly reducing construction time and complexity.

This approach offers unparalleled flexibility and scalability, allowing organizations to expand their data center capacity rapidly as demand grows, without the long lead times and high upfront costs associated with traditional builds. They can range from single-rack units to multiple interconnected modules forming a much larger facility.

Advantages of Modular Data Center Design

The benefits of modular DCs are centered around speed, cost, and adaptability:

- Speed of Deployment: Factory assembly and pre-integration mean these data centers can be deployed much faster—weeks or months, compared to years for traditional builds.

- Scalability: Capacity can be expanded precisely as needed by adding more modules. This “pay-as-you-grow” model prevents over-provisioning and optimizes capital expenditure.

- Cost-Effectiveness: Reduced on-site labor, faster deployment, and improved quality control from factory manufacturing can lead to lower overall costs compared to traditional construction.

- Consistency and Quality: Because modules are built in controlled factory environments, they benefit from tighter quality control and more consistent construction than on-site builds.

- Environmental Suitability: They can be deployed in remote or harsh environments where traditional construction is challenging or impractical.

- Energy Efficiency: Often designed with high energy efficiency in mind, leveraging optimized cooling and power distribution within the self-contained modules.
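The "pay-as-you-grow" idea amounts to simple capacity arithmetic: install only as many modules as the current demand requires, then add more as the forecast rises. The sketch below assumes identical modules with a hypothetical 300 kW IT capacity each and a made-up yearly demand forecast. It ignores lead times and redundancy unless spare modules are requested explicitly.

```python
import math


def modules_needed(demand_kw: float, module_capacity_kw: float, spare_modules: int = 0) -> int:
    """Smallest number of identical modules that covers the IT load, plus optional spares."""
    if module_capacity_kw <= 0:
        raise ValueError("module_capacity_kw must be positive")
    if demand_kw < 0:
        raise ValueError("demand_kw must be non-negative")
    return math.ceil(demand_kw / module_capacity_kw) + spare_modules


def build_schedule(forecast_kw: list[float], module_capacity_kw: float) -> list[int]:
    """Modules to add in each period so installed capacity keeps pace with forecast demand."""
    installed = 0
    additions = []
    for demand in forecast_kw:
        required = modules_needed(demand, module_capacity_kw)
        add = max(0, required - installed)  # never remove modules when demand dips
        installed += add
        additions.append(add)
    return additions


if __name__ == "__main__":
    # Hypothetical yearly IT demand forecast (kW) and 300 kW modules.
    forecast = [250, 600, 600, 1300]
    print("modules added per year:", build_schedule(forecast, module_capacity_kw=300))
```

Compared with building for the final 1,300 kW on day one, capital is committed in steps that track actual demand, which is the over-provisioning benefit described above.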

Applications and Future Trends

Modular DCs are ideal for:

- Edge Deployments: Quickly establishing compute resources in remote locations or closer to data sources.

- Temporary Capacity: Providing temporary or swing capacity during traditional data center renovations or expansions.

- Specific Projects: Deploying dedicated infrastructure for temporary or specific high-performance computing projects.

- Disaster Recovery: Rapidly deploying backup infrastructure in the event of a primary site failure.

The trend towards modularity is growing, driven by the need for faster time-to-market, greater agility, and sustainable practices in data center development.

7. Disaster Recovery Data Centers: Ensuring Business Continuity

Redundancy for Uninterrupted Operations

Disaster Recovery Data Centers (DRDCs) are specialized facilities designed with a singular, critical purpose: to ensure business continuity and minimize downtime in the event of a catastrophic failure at a primary data center. These centers are built for redundancy, acting as a backup site to which critical data, applications, and systems can fail over and continue operating when the main facility is compromised by natural disasters, cyberattacks, power outages, or other unforeseen incidents.

The essence of a DRDC lies in its ability to replicate the functionality of the primary data center, allowing an organization to quickly restore operations with minimal data loss and service interruption. This is achieved through robust data replication technologies, redundant network connectivity, and ample capacity to take over primary workloads.

Key Features of Effective DRDCs

Effective Disaster Recovery Data Centers incorporate several critical elements:

- Geographic Separation: DRDCs are typically located a significant distance from the primary data center to avoid being affected by the same regional disaster. However, the distance must be balanced with the need for low-latency data replication.

- Data Replication: Continuous or near-continuous replication of data from the primary site to the DRDC is essential. This can involve synchronous replication for zero data loss (RPO of zero) for mission-critical applications, or asynchronous replication for applications that can tolerate a small amount of data loss.

- Redundant Infrastructure: The DRDC itself is built with high levels of redundancy for power, cooling, and network connectivity, ensuring its own resilience.

- Recovery Point Objective (RPO) & Recovery Time Objective (RTO): Organizations define specific RPOs (how much data loss is acceptable) and RTOs (how quickly systems must be restored) which dictate the design and technology choices for the DRDC.

- Testing and Validation: Regular and rigorous testing of the disaster recovery plan and the DRDC’s capabilities is crucial to ensure it functions as expected during an actual crisis.

- Hot, Warm, or Cold Sites: DRDCs can be categorized by their readiness:

  - Hot Sites: Fully equipped with hardware and actively running, capable of immediate failover with minimal downtime and data loss.

  - Warm Sites: Have hardware in place but require some configuration and data restoration, leading to slightly longer recovery times.

  - Cold Sites: Basic infrastructure (power, cooling) available, but hardware and data must be transported and set up, resulting in the longest recovery times.
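The tension between geographic separation and replication latency comes down to physics. With synchronous replication, every write waits for the DR site to acknowledge it, which costs at least one network round trip. The sketch below uses the common approximation that light in optical fiber travels about 200 km per millisecond and treats asynchronous replication lag as the data at risk. The route lengths and RPO values are hypothetical, and real deployments add switching, protocol, and storage delays on top of this lower bound.

```python
FIBER_KM_PER_MS = 200.0  # approximation: light in optical fiber covers roughly 200 km per millisecond


def sync_write_penalty_ms(route_km: float) -> float:
    """Lower bound on the latency synchronous replication adds to each write.

    The primary must wait for the DR site to acknowledge, which costs at least one
    round trip over the fiber route. Switching, protocol, and storage delays are
    ignored, so real-world penalties are higher.
    """
    if route_km < 0:
        raise ValueError("route_km must be non-negative")
    return 2.0 * route_km / FIBER_KM_PER_MS


def async_meets_rpo(replication_lag_s: float, rpo_s: float) -> bool:
    """With asynchronous replication, data at risk is roughly the current replication lag."""
    return replication_lag_s <= rpo_s


if __name__ == "__main__":
    # Hypothetical fiber route lengths between a primary site and candidate DR sites.
    for km in (50, 500, 2000):
        print(f"{km:>5} km route -> at least {sync_write_penalty_ms(km):.1f} ms added per write")
    print("30 s lag within a 60 s RPO:", async_meets_rpo(30, 60))
```

This is why zero-RPO synchronous replication is usually limited to relatively nearby sites, while distant DR sites typically rely on asynchronous replication with a non-zero RPO.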

Maintaining Trust and Operations

Investing in a robust DRDC is a fundamental aspect of risk management and maintaining customer trust. For many industries, regulatory bodies mandate comprehensive disaster recovery plans, making DRDCs a compliance necessity. They provide peace of mind that an organization’s digital assets and services can withstand unexpected disruptions.

8. Industrial / Mission-Critical Data Centers: Unyielding Reliability in Harsh Environments

Designed for Extremes and Absolute Uptime

Industrial or Mission-Critical Data Centers are a specialized breed, built to operate reliably and continuously in some of the most challenging and often unforgiving environments. These facilities are distinct from typical enterprise or colocation centers due to their extreme resilience, robust physical construction, and specialized environmental controls designed to withstand harsh industrial conditions, from extreme temperatures and vibrations to electromagnetic interference and dust.

These data centers are vital for sectors where any downtime can have severe consequences, including not only financial losses but also risks to human safety, environmental damage, or critical infrastructure failure. They are the backbone of operations in energy production, large-scale manufacturing, transportation systems, and utility grids.

Characteristics for Unwavering Performance

The design and operation of Industrial/Mission-Critical DCs prioritize reliability above almost all else:

- Hardened Construction: Built to withstand physical impacts, extreme weather events, seismic activity, and often designed to be blast-resistant.

- Environmental Control: Employ sophisticated filtration systems to protect against dust, chemicals, and pollutants common in industrial settings. They also manage temperature and humidity across a wider range of external conditions.

- Electromagnetic Shielding: Designed to mitigate electromagnetic interference (EMI) that can disrupt sensitive electronic equipment, common in environments with heavy machinery.

- Redundant Systems: Feature multiple layers of redundancy for power (generators, UPSs, multiple utility feeds), cooling, and network connectivity to guarantee continuous operation.

- Cyber-Physical Security: Strong integration of physical security measures with cybersecurity protocols to protect against both physical intrusion and cyber threats, recognizing the potential impact on physical systems.

- Long-Life Cycle Equipment: Often utilize ruggedized industrial-grade IT equipment and infrastructure components designed for extended lifespans and reliability in demanding conditions.

- Remote Monitoring and Management: Advanced monitoring and telemetry systems enable remote management and predictive maintenance, crucial for facilities located in often isolated industrial sites.
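Why multiple layers of redundancy matter can be shown with basic availability arithmetic. Redundant paths in parallel fail only if all of them fail at once, while stages in series (for example, utility feed, then UPS) must all be working. The sketch below assumes failures are independent, which real facilities violate through common-mode failures such as a shared fuel supply or a single fire zone, so treat its results as optimistic. The availability figures are hypothetical.

```python
def parallel_availability(path_availability: float, paths: int) -> float:
    """Availability when any one of several identical, independent paths is enough.

    The system is down only if every path is down at the same time: 1 - (1 - a) ** n.
    """
    if not 0.0 <= path_availability <= 1.0:
        raise ValueError("path_availability must be in [0, 1]")
    if paths < 1:
        raise ValueError("paths must be at least 1")
    return 1.0 - (1.0 - path_availability) ** paths


def series_availability(*stages: float) -> float:
    """Availability of a chain in which every stage must be up (e.g. feed -> UPS -> PDU)."""
    result = 1.0
    for a in stages:
        if not 0.0 <= a <= 1.0:
            raise ValueError("each availability must be in [0, 1]")
        result *= a
    return result


if __name__ == "__main__":
    # Hypothetical figures: each utility feed is up 99% of the time, the UPS stage 99.9%.
    feeds = parallel_availability(0.99, paths=2)
    overall = series_availability(feeds, 0.999)
    print(f"two feeds in parallel: {feeds:.6f}")
    print(f"feeds then UPS:        {overall:.6f}")
```

The series result shows that adding redundancy in one stage helps only up to the point where another, non-redundant stage becomes the weakest link.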

Where Reliability is Non-Negotiable

Industrial/Mission-Critical Data Centers are essential for:

- Oil & Gas: Managing SCADA systems for pipelines, offshore drilling platforms, and refineries.

- Manufacturing: Controlling automated production lines, robotics, and quality control systems in smart factories.

- Utilities: Operating power grids, water treatment plants, and other public utilities.

- Transportation: Managing railway signaling, air traffic control, and port logistics.

These data centers highlight the critical link between digital infrastructure and the physical world, emphasizing that for certain operations, the digital world is the physical world.

9. Green Data Centers: Powering the Future Responsibly

Sustainability at the Core of Design and Operation

Green Data Centers represent a significant and growing trend in the industry, driven by increasing environmental concerns, rising energy costs, and regulatory pressures. These facilities are specifically designed, built, and operated with a primary focus on minimizing their environmental impact throughout their lifecycle. This commitment to sustainability goes beyond mere compliance; it’s an inherent part of their operational philosophy.

The digital world consumes enormous amounts of energy, primarily for powering IT equipment and cooling systems. Green data centers actively seek to reduce their carbon footprint by optimizing energy efficiency, leveraging renewable energy sources, minimizing water consumption, and reducing waste.

Pillars of a Green Data Center

Several approaches define a green data center:

- Energy Efficiency:

  - Low PUE (Power Usage Effectiveness): A key metric for green data centers. PUE is total facility energy divided by the energy delivered to IT equipment, so the goal is a value as close to 1.0 as possible, indicating that nearly all power goes to IT equipment rather than to overhead such as cooling.

  - Efficient Cooling: Employing advanced cooling techniques such as free cooling (using outside air), liquid cooling, hot/cold aisle containment, and optimizing airflow management to reduce reliance on energy-intensive chillers.

  - Energy-Efficient Hardware: Utilizing servers, storage, and networking equipment designed for lower power consumption.

  - DC Power Distribution: Exploring Direct Current (DC) power distribution in the data center to reduce conversion losses from AC power.

- Renewable Energy Sources: Sourcing power from renewable energy, such as solar, wind, and hydroelectric plants, either directly or through renewable energy credits (RECs) and power purchase agreements (PPAs).

- Water Conservation: Implementing water-efficient cooling systems, such as closed-loop systems, or exploring adiabatic cooling to minimize water usage.

- Waste Reduction and Recycling: Practicing responsible disposal and recycling of outdated IT equipment and facility materials.

- Sustainable Building Materials: Using environmentally friendly, recycled, or locally sourced materials in the construction of the facility.

- Software Optimization: Utilizing virtualization and efficient resource management software to maximize hardware utilization and minimize idle power consumption.
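PUE is a simple ratio, and computing it makes clear why lower is better. The sketch below divides total facility energy by IT equipment energy and also reports the share of energy lost to overhead. The meter readings are hypothetical annual figures; in practice PUE is measured over a defined period, and how the IT load is metered affects the result.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / energy delivered to IT equipment."""
    if it_equipment_kwh <= 0:
        raise ValueError("it_equipment_kwh must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be lower than IT equipment energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_fraction(pue_value: float) -> float:
    """Share of total energy that goes to non-IT overhead such as cooling and power losses."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue_value


if __name__ == "__main__":
    # Hypothetical annual meter readings for two facilities with the same IT load.
    it_kwh = 10_000_000
    for name, total_kwh in (("legacy site", 18_000_000), ("efficient site", 11_000_000)):
        value = pue(total_kwh, it_kwh)
        print(f"{name}: PUE {value:.2f}, overhead {overhead_fraction(value):.0%} of total energy")
```

A PUE of 1.0 would mean zero overhead; every step above it represents energy spent on cooling, power conversion, and other facility loads rather than on computing.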

The Business Case for Green

Beyond environmental stewardship, green data centers offer tangible business benefits. Lower energy consumption leads to significant operational cost savings. They also enhance an organization’s brand reputation, attracting environmentally conscious customers and investors. Moreover, as regulations regarding carbon emissions and energy consumption tighten, operating green data centers provides a proactive approach to compliance.

The concept of a green data center is not just a niche; it is becoming an increasingly integral part of mainstream data center design and operation, reflecting a growing global imperative for sustainable technology.

10. Telecom Data Centers: Orchestrating Digital Communications

The Hubs of Connectivity

Telecom Data Centers are the specialized facilities at the heart of our communication networks, owned and operated by telecommunications service providers. These data centers are uniquely designed to manage the immense volume of network traffic, switching, routing, and processing that enables seamless voice, video, and data communication across regions and globally. They are indispensable for the functioning of cellular networks, internet service providers (ISPs), and enterprise communication platforms.

Unlike traditional data centers focused primarily on compute and storage, Telecom DCs place a paramount emphasis on network connectivity, bandwidth, and low latency across wide area networks. They house the core network equipment, such as routers, switches, and optical transmission systems, along with the servers required to run network management applications and deliver telecom services.

Core Functions and Specializations

Telecom Data Centers are characterized by their network-centric design:

- Network Core & Edge Functions: Hosting critical network infrastructure that forms the backbone of communication networks, including core routers, switches, and gateways to other networks. They also facilitate edge network functions closer to subscribers.

- High Bandwidth & Low Latency: Engineered for extremely high bandwidth capabilities and minimal latency to ensure rapid data transfer for real-time communication services like VoIP, video conferencing, and 5G networks.

- Interconnection and Peering: Although owned by a single telecom provider, many of these facilities also serve as interconnection points, peering with other carriers and content providers to facilitate global data exchange.

- Redundant Network Connectivity: Features extensive redundant fiber optic connections and diverse network paths to ensure uninterrupted service even in the face of fiber cuts or network failures.

- Specialized Equipment: House telecom-specific equipment that is often different from standard IT servers, designed for network processing and signaling.

- Geographic Distribution: Strategically distributed across regions to provide localized access points for subscribers and manage traffic efficiently.

- Support for Emerging Technologies: Crucial for the rollout of 5G, IoT connectivity platforms, and other next-generation communication services.

Powering Our Connected World

Telecom data centers are the silent enablers of our connected world. Without them, our mobile phones wouldn’t connect, our internet wouldn’t function, and the global flow of information would grind to a halt. Their continuous operation and advanced network capabilities are fundamental to modern society and commerce.

11. Research & Government Data Centers: Security, Compliance, and Discovery

Guardians of Sensitive Data and National Infrastructure

Research and Government Data Centers are highly specialized facilities designed to meet extraordinary requirements for security, compliance, and often, high-performance computing for scientific discovery or national defense. These data centers handle some of the most sensitive, classified, and strategically important data in existence, necessitating stringent protocols that far exceed those of typical commercial data centers.

These facilities serve a broad range of public sector needs, from safeguarding citizen data and national security information to powering advanced scientific research, meteorological forecasting, and defense applications. The emphasis is on maintaining the highest levels of data integrity, confidentiality, and availability, often within highly regulated frameworks.

Defining Characteristics and Objectives

Research and Government Data Centers are distinguished by their intense focus on protection and specific mission requirements:

- Stringent Security: Implement multi-layered physical and cyber security measures, often compliant with standards for handling classified information. These include restricted access zones, advanced surveillance, strong encryption using government-validated cryptographic modules, and continuous threat monitoring.

- Rigorous Compliance: Adhere to strict regulatory frameworks and government mandates, such as FISMA, FedRAMP, NIST standards, and other specific national security directives. Auditing and accountability are paramount.

- High-Performance Computing (HPC) Capabilities: Many government and research facilities are also HPC centers, powering supercomputers for simulations in defense, intelligence, climate science, and fundamental research.

- Long-Term Data Archiving: Often responsible for the long-term archiving and preservation of vast quantities of historical, scientific, or census data.

- Geographic Distribution and Redundancy: Multiple secure sites are often used to ensure geographic diversity and build in redundancy for critical national infrastructure.

- Specialized Staff: Operations are typically managed by highly vetted and specialized personnel with security clearances.

- Custom Infrastructure: Facilities are often custom-built and heavily reinforced, designed for resilience against a wide array of threats, both physical and cyber.

Diverse Applications within Public Service

Research and Government Data Centers are critical for:

- National Security & Defense: Supporting intelligence agencies, military operations, and defense research.

- Scientific & Academic Research: Powering university research, national laboratories, space exploration, and medical advancements.

- Public Services: Managing citizen records, tax data, social security systems, and other essential government services.

- Weather & Climate Forecasting: Running complex atmospheric and oceanic models.

- Emergency Services: Supporting critical communication and data processing for disaster response and public safety.

The integrity and continuous operation of these data centers are fundamental to national stability, public well-being, and pushing the boundaries of human knowledge.

The Strategic Importance of Data Center Selection

The digital landscape is a vast and intricate ecosystem, and the choice of data center infrastructure is a pivotal strategic decision that affects every facet of an organization's operations. As the preceding sections show, no two workloads are identical, and consequently no single data center type can optimally serve all needs. Deliberately selecting the right data center model, or combination of models, directly influences an organization's competitive advantage, operational efficiency, and long-term sustainability.

Aligning Infrastructure with Business Objectives

Selecting a data center begins with a thorough understanding of an organization's core business objectives and the specific demands of its applications and data. Key considerations include:

- Performance Requirements: Does the application demand ultra-low latency (e.g., financial trading, edge AI), or can it tolerate higher latency (e.g., batch processing, archival storage)?

- Scalability Needs: Is rapid, on-demand scaling essential for fluctuating workloads, or is growth more predictable and linear?

- Security Posture: What level of physical and cyber security is mandated by the nature of the data and regulatory compliance?

- Compliance Landscape: Which industry-specific or governmental regulations must be met (e.g., HIPAA, GDPR, PCI DSS, FISMA)?

- Cost Efficiency: Balancing capital expenditure (CapEx) against operational expenditure (OpEx), and understanding the total cost of ownership (TCO) for different models.

- Disaster Recovery and Business Continuity: What are the acceptable Recovery Point Objective (RPO, the maximum tolerable data loss, measured in time) and Recovery Time Objective (RTO, the maximum tolerable time to restore service)?

- Sustainability Goals: Does the organization have environmental mandates to meet, and how important is a reduced carbon footprint?

- Geographic Presence: Is proximity to users or specific markets critical?
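Two of these considerations, cost and recovery objectives, reduce to simple arithmetic that is worth doing early. The Python sketch below is illustrative only: the option names and every cost figure are hypothetical placeholders, the TCO formula is a deliberately simple undiscounted sum of CapEx plus yearly OpEx (a real analysis would add discounting, hardware refresh cycles, and migration costs), and the RPO check assumes periodic backups, where a failure just before the next backup loses up to one full backup interval of data.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    """Hypothetical cost profile for one data center option (illustrative figures only)."""
    name: str
    capex: float        # up-front spend (facility build-out, hardware)
    annual_opex: float  # recurring yearly spend (power, staff, rack fees, subscriptions)

    def tco(self, years: int) -> float:
        """Simple, undiscounted total cost of ownership over a planning horizon."""
        return self.capex + self.annual_opex * years


def meets_rpo(backup_interval_min: float, rpo_min: float) -> bool:
    """Assumes periodic backups: worst-case data loss equals one full backup
    interval, so the interval must not exceed the RPO."""
    return backup_interval_min <= rpo_min


def meets_rto(restore_time_min: float, rto_min: float) -> bool:
    """Service must be restored within the RTO."""
    return restore_time_min <= rto_min


if __name__ == "__main__":
    # Hypothetical numbers for illustration only; substitute real quotes.
    options = [
        CostModel("Enterprise (owned)", capex=2_000_000, annual_opex=300_000),
        CostModel("Colocation", capex=400_000, annual_opex=550_000),
        CostModel("Hyperscale cloud", capex=0, annual_opex=750_000),
    ]
    for years in (3, 7):
        for opt in options:
            print(f"{years}y {opt.name}: {opt.tco(years):,.0f}")

    # An hourly backup against a 15-minute RPO, and a 30-minute restore against a 4-hour RTO.
    print("RPO met:", meets_rpo(backup_interval_min=60, rpo_min=15))
    print("RTO met:", meets_rto(restore_time_min=30, rto_min=240))
```

With these made-up figures, colocation is cheapest over a 3-year horizon (2,050,000, versus 2,250,000 for cloud and 2,900,000 for the owned facility), while the owned enterprise facility is cheapest over 7 years (4,100,000, versus 4,250,000 and 5,250,000). The specific numbers do not matter; the point is that the planning horizon can flip the answer, and that an hourly backup cannot satisfy a 15-minute RPO however fast the restore is.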

Beyond Individual Workloads: Hybrid and Multi-Cloud Strategies

In today’s complex IT environments, it is increasingly rare for a single organization to rely exclusively on one type of data center. Many businesses adopt hybrid or multi-cloud strategies, leveraging the strengths of different models to create a resilient, flexible, and optimized infrastructure ecosystem. For example:

- A financial institution might use an Enterprise Data Center for core banking systems requiring maximum security and control, while leveraging a Colocation Data Center for secondary applications, and utilizing Hyperscale Cloud for scalable, less sensitive workloads like customer-facing web applications.

- A manufacturing company might deploy Industrial/Mission-Critical Data Centers on the factory floor for real-time automation, complement them with Edge Data Centers for local IoT processing, and send aggregated data to a Hyperscale Cloud for advanced analytics and AI training.

- A government agency might utilize highly secure Research & Government Data Centers for classified information, while distributing less sensitive public-facing services across various Colocation or even Green Data Centers that meet specific sustainability mandates.

This strategic blending of data center types allows organizations to optimize for cost, performance, and risk across their entire digital estate.

The Interplay with Emerging Technologies

The evolution of data centers is intrinsically linked to the emergence of new technologies. The explosion of Artificial Intelligence, Machine Learning, and the Internet of Things is not only reshaping existing data center models but also driving the creation of new specialized facilities.

- AI/ML: These technologies demand immense computational power, driving the need for more HPC Centers and specialized hardware within Hyperscale Data Centers (e.g., GPU farms).

- IoT: The proliferation of billions of connected devices necessitates the growth of Edge Data Centers to process data closer to the source, reducing latency and bandwidth strain.

- 5G: The rollout of 5G networks is heavily reliant on Telecom Data Centers and Edge Data Centers to deliver the promised low latency and high bandwidth capabilities.

- Sustainability: Growing awareness and regulatory pressure are accelerating the adoption of Green Data Center best practices across all types of facilities.
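The latency benefit that Edge and Telecom Data Centers bring to IoT and 5G is partly a matter of physics: light in optical fiber travels at roughly two-thirds of its vacuum speed, or about 200 km per millisecond. The Python sketch below turns that approximation into a lower bound on round-trip propagation delay. The site labels and distances are hypothetical, and real latency will be higher because fiber routes are rarely straight and switching, queuing, and processing all add time.

```python
# Approximate speed of light in optical fiber: about 2/3 of c, i.e. ~200 km per ms.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float, path_stretch: float = 1.0) -> float:
    """Lower bound on round-trip propagation delay over fiber.

    path_stretch > 1 models fiber routes longer than the straight-line distance.
    Switching, queuing, and server processing time are ignored.
    """
    if distance_km < 0 or path_stretch < 1.0:
        raise ValueError("distance_km must be >= 0 and path_stretch must be >= 1")
    return 2 * distance_km * path_stretch / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical sites at different distances from the user or device.
    sites = [("Edge site", 50), ("Regional hyperscale", 1_000), ("Distant region", 2_500)]
    for label, km in sites:
        print(f"{label:>20}: >= {min_round_trip_ms(km):.1f} ms")
```

With straight-line paths, the three hypothetical sites have lower bounds of 0.5 ms, 10.0 ms, and 25.0 ms respectively. No amount of server performance can recover the extra milliseconds of a distant site, which is why latency-sensitive processing is pushed toward the edge.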

Understanding these interdependencies is crucial for future-proofing IT infrastructure investments.

The Digital Performance Imperative

In an era where digital performance often translates directly into competitive advantage, the decision-making process around data center infrastructure is no longer confined to technical teams; it is a critical business decision. The wrong data center strategy can lead to inflated costs, performance bottlenecks, security vulnerabilities, and an inability to scale or innovate. Conversely, a well-conceived strategy can unlock new opportunities, enhance operational resilience, and provide a formidable foundation for growth.

Investing in a deep understanding of the diverse types of data centers available, their respective strengths and weaknesses, and how they align with specific business needs is paramount. This strategic clarity empowers organizations to build an IT backbone that is not only robust and reliable but also agile and adaptable to the ever-changing demands of the digital world. Ultimately, it reinforces that infrastructure is not just an IT concern but a non-negotiable component of business success.

Unlock Your Optimal Data Center Strategy with IoT Worlds

Navigating the complex landscape of data center types and building an IT infrastructure that truly aligns with your strategic goals can be a daunting challenge. From ensuring the security of sensitive data in an Enterprise Data Center to optimizing low-latency edge deployments or integrating sustainable practices in a Green Data Center, each decision carries significant implications for your operations and competitive standing.

At IoT Worlds, we specialize in transforming this complexity into clarity and actionable strategies. Our expert consultants possess deep knowledge across all 11 types of data centers, combined with an understanding of emerging technologies and industry best practices. We can help your organization:

- Assess Your Current Infrastructure: Gain a clear understanding of your existing data center footprint and identify areas for optimization.

- Define Your Requirements: Meticulously analyze your workloads, performance needs, security mandates, and compliance obligations.

- Design a Tailored Strategy: Develop a customized data center strategy that incorporates the optimal blend of Enterprise, Colocation, Hyperscale, Edge, HPC, Modular, Disaster Recovery, Industrial/Mission-Critical, Green, Telecom, and Research & Government data center models to meet your unique business objectives.

- Optimize for Performance and Cost: Leverage our expertise to ensure your infrastructure delivers maximum performance at the most efficient cost.

- Future-Proof Your Operations: Build a scalable, resilient, and sustainable data center roadmap that can adapt to technological advancements and evolving business demands.

Don’t let guesswork define your digital foundation. Partner with IoT Worlds to ensure your data center strategy is a true enabler of your business success.

To learn more about how IoT Worlds can help you select, design, and optimize your data center infrastructure, send an email to info@iotworlds.com today. Let us empower your organization to make informed, strategic decisions that drive efficiency, resilience, and innovation in the digital world.