The manufacturing landscape is undergoing a radical transformation, fueled by the promises of Industry 4.0. Factories are no longer isolated silos of production but increasingly interconnected, intelligent ecosystems. At the heart of this evolution lies data – vast quantities of it, generated at unprecedented rates and from diverse sources. However, the sheer volume and variety of this data present a critical challenge: choosing the right data architecture to harness its full potential.

Too often, manufacturers fall into the trap of attempting a “one-size-fits-all” approach, pushing all data into a single platform. This monolithic strategy, while seemingly straightforward, is a significant impediment to achieving the true benefits of Industry 4.0, including faster AI adoption, scalable digital twins, real-time operational intelligence, and resilient manufacturing ecosystems.

The reality of modern manufacturing is that it generates fundamentally different categories of data, each possessing unique characteristics concerning structure, velocity, and analytical requirements. To truly unlock the power of Industry 4.0, manufacturing leaders must adopt a strategic approach to data architecture, recognizing that specialized data platforms, coexisting within a hybrid architecture, are essential for supporting both real-time operations and long-term strategic intelligence.

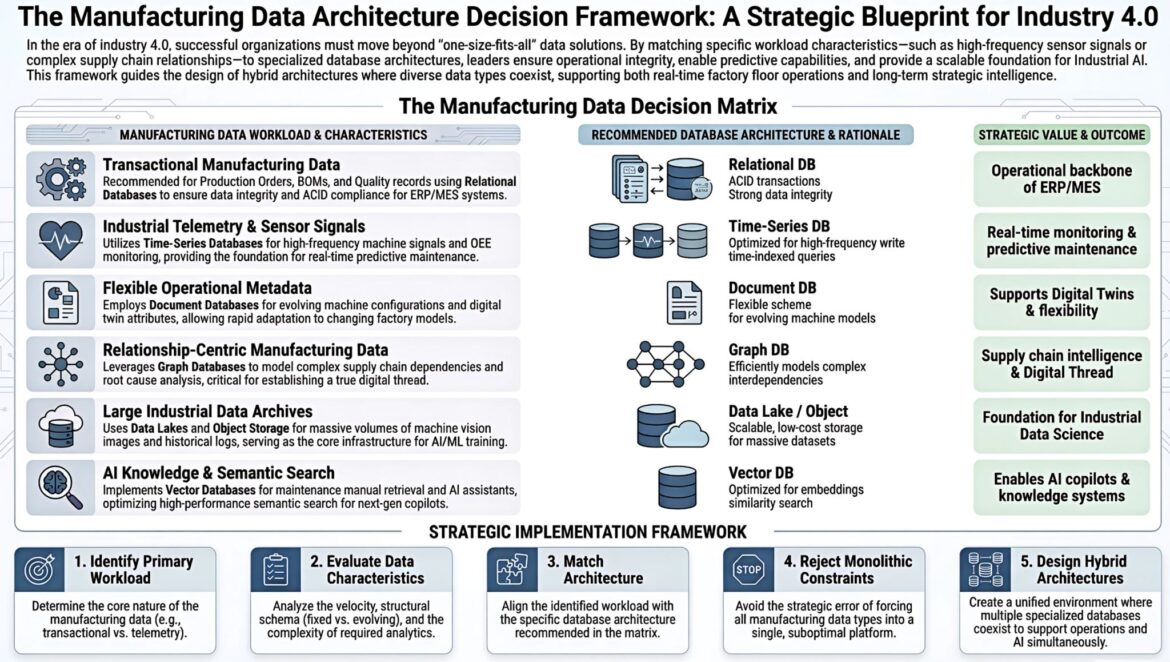

This article introduces “The Manufacturing Data Architecture Decision Framework,” a strategic blueprint designed to guide manufacturing leaders in navigating the complexities of data management in an AI-driven, highly connected global environment. By understanding the core manufacturing data workloads, evaluating their characteristics, and matching them with the appropriate database architectures, organizations can build robust, scalable, and intelligent manufacturing operations.

For decades, manufacturing systems were characterized by isolated automation islands. Data historians, SCADA systems, human-machine interfaces (HMIs), programmable logic controllers (PLCs), and distributed control systems (DCS) often operated independently, each with its own proprietary databases and communication protocols. This architecture was adequate when optimization was confined to individual machines or specific production lines.

However, the ambitions of Industry 4.0 extend far beyond local improvements. They demand:

- Seamless integration with enterprise resource planning (ERP), manufacturing execution systems (MES), product lifecycle management (PLM), and supply chain management (SCM) systems.

- Advanced capabilities like predictive and prescriptive maintenance.

- Cross-plant analytics and benchmarking for continuous improvement.

- End-to-end visibility, from machine sensors to boardroom key performance indicators (KPIs).

Without a well-defined reference architecture, every new Industry 4.0 initiative risks becoming a bespoke, one-off integration project. This leads to increased project time, higher costs, and a higher risk of failure. A strategic approach to data architecture provides governance for security, performance, and compliance, offers reusable building blocks, and fosters a shared language among operational technology (OT), information technology (IT), and data teams.

Understanding the Six Core Manufacturing Data Workloads

The first step in building a successful Industry 4.0 data architecture is to clearly define the different types of data workloads generated within a manufacturing environment. Each workload has distinct characteristics that dictate the most suitable database architecture. The framework identifies six core manufacturing data workloads:

1. Transactional Manufacturing Data

Transactional data forms the bedrock of any manufacturing operation. It encompasses the structured, high-integrity information essential for running the business.

Characteristics of Transactional Manufacturing Data

- High Integrity and ACID Compliance: This data requires strict adherence to ACID (Atomicity, Consistency, Isolation, Durability) properties to ensure reliability, especially for financial and operational records.

- Structured Schema: Data typically conforms to a predefined schema, ensuring consistency and ease of querying.

- Frequent Read/Write Operations: ERP and MES systems constantly update and retrieve this data for order processing, inventory management, and production scheduling.

- Examples: Production orders, bills of material (BOMs), quality control records, inventory levels, customer orders, supplier information, financial transactions.

Recommended Database Architecture and Rationale

- Relational Databases (RDBMS): RDBMS are the traditional and still highly effective choice for transactional data. Their robust capabilities for maintaining data integrity through ACID transactions, enforcing relationships between data sets, and supporting complex queries make them ideal for ERP and MES systems. Examples include PostgreSQL, MySQL, SQL Server, and Oracle.
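To make the ACID requirement concrete, here is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for a production RDBMS. The table names, part number, and the `issue_material` helper are hypothetical; the point is only that the issue record and the stock deduction either both succeed or are both rolled back.

```python
import sqlite3


def issue_material(conn: sqlite3.Connection, order_id: str, part: str, qty: int) -> bool:
    """Record a material issue and deduct stock as one atomic unit."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute(
                "INSERT INTO material_issues (order_id, part, qty) VALUES (?, ?, ?)",
                (order_id, part, qty),
            )
            cur = conn.execute(
                "UPDATE inventory SET on_hand = on_hand - ? "
                "WHERE part = ? AND on_hand >= ?",
                (qty, part, qty),
            )
            if cur.rowcount != 1:
                # Not enough stock: raising here also undoes the INSERT above.
                raise ValueError(f"insufficient stock for {part}")
        return True
    except ValueError:
        return False


# Hypothetical schema and seed data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE inventory (part TEXT PRIMARY KEY, on_hand INTEGER NOT NULL)")
conn.execute("CREATE TABLE material_issues (order_id TEXT, part TEXT, qty INTEGER)")
conn.execute("INSERT INTO inventory VALUES ('BRACKET-A', 100)")
conn.commit()

ok = issue_material(conn, "PO-1001", "BRACKET-A", 40)         # succeeds
rejected = issue_material(conn, "PO-1002", "BRACKET-A", 500)  # rolled back
```

After both calls, stock stands at 60 and only the first order has an issue record. The rejected request leaves no partial trace, which is the behavior ERP and MES records depend on.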

Strategic Value and Outcome

- Operational Backbone of ERP/MES: Relational databases provide the reliable and consistent data foundation necessary for the smooth operation of core enterprise systems, enabling efficient planning, execution, and reporting of manufacturing processes.

2. Industrial Telemetry & Sensor Signals

The rise of the Industrial Internet of Things (IIoT) has led to an explosion of telemetry and sensor data. This data provides real-time insights into machine performance and operational conditions.

Characteristics of Industrial Telemetry & Sensor Signals

- High Volume and Velocity: Sensors often generate data points every second or even faster, leading to massive streams of continuous data.

- Time-Indexed: Every data point is associated with a timestamp, making time-based analysis critical.

- Append-Only: New readings are written as new records; existing records are rarely updated or deleted.

- Examples: Temperature readings, pressure levels, vibration data, machine RPM, energy consumption, OEE (Overall Equipment Effectiveness) metrics, motor currents.

Recommended Database Architecture and Rationale

- Time-Series Databases (TSDB): TSDBs are purpose-built for handling high-volume, time-indexed data efficiently. They are optimized for writing large amounts of new data points frequently and for performing time-based queries (e.g., retrieving data for a specific time range, calculating averages over time windows). Their specialized indexing and compression techniques make them superior to relational databases for this workload. Examples include InfluxDB, TimescaleDB, and Prometheus.
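The time-window query mentioned above can be illustrated in plain Python. This is only a sketch of the logic a TSDB runs natively and far more efficiently. The `window_averages` helper and the sample spindle temperatures are made up for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from statistics import mean


def window_averages(readings, window_seconds=60):
    """Group (timestamp, value) pairs into fixed windows and average each one."""
    buckets = defaultdict(list)
    for ts, value in readings:
        epoch = int(ts.timestamp())
        bucket_start = epoch - (epoch % window_seconds)  # floor to window start
        buckets[bucket_start].append(value)
    return {
        datetime.fromtimestamp(start, tz=timezone.utc): mean(values)
        for start, values in sorted(buckets.items())
    }


start = datetime(2024, 1, 1, 8, 0, 0, tzinfo=timezone.utc)
# Hypothetical spindle temperature samples, one every 20 seconds.
readings = [(start + timedelta(seconds=20 * i), 70.0 + i) for i in range(6)]

averages = window_averages(readings)
for window_start, avg in averages.items():
    print(window_start.isoformat(), avg)
```

Six raw samples collapse into two one-minute averages (71.0 and 74.0). A time-series database applies the same idea to millions of points using time-partitioned storage and compression.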

Strategic Value and Outcome

- Real-time Monitoring & Predictive Maintenance: Time-series data underpins real-time dashboards for operational visibility and is crucial for developing predictive maintenance models that can identify potential equipment failures before they occur. This ultimately reduces downtime and maintenance costs.

3. Flexible Operational Metadata

Modern manufacturing environments are dynamic. Machine configurations change, product lines evolve, and digital twins require flexible models to reflect these changes.

Characteristics of Flexible Operational Metadata

- Evolving Schema: Data structures may not be fixed and can change frequently as new attributes are added or existing ones are modified.

- Semi-structured or Unstructured Data: Often includes JSON, XML, or other flexible formats that do not conform to a rigid tabular structure.

- Rapid Adaptation: The ability to quickly update and query this metadata is essential for maintaining accurate digital representations of physical assets.

- Examples: Machine configurations, digital twin attributes, product variations, process parameters, instruction sets for flexible manufacturing cells.

Recommended Database Architecture and Rationale

- Document Databases (NoSQL): Document databases, such as MongoDB or Couchbase, are ideal for handling flexible, semi-structured data. They store data in document-like structures (e.g., JSON), allowing for schema evolution without requiring complex migrations. This flexibility is crucial for digital twins that need to adapt to changing factory models and for agile manufacturing processes.
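A small sketch of what schema evolution looks like in practice, using plain Python dictionaries in place of a document database. The machine documents and the `get_path` and `find` helpers are hypothetical. The second machine carries a `coolant` attribute that the first lacks, and no migration is needed to store or query it.

```python
# Two machine configuration documents; the second was added later and
# carries an extra nested attribute the first one does not have.
machine_docs = [
    {"machine_id": "CNC-01", "type": "mill", "spindle": {"max_rpm": 12000}},
    {
        "machine_id": "CNC-02",
        "type": "mill",
        "spindle": {"max_rpm": 18000},
        "coolant": {"type": "through-spindle", "pressure_bar": 70},
    },
]


def get_path(doc, dotted_path, default=None):
    """Read a nested field such as 'spindle.max_rpm'; return default if absent."""
    current = doc
    for key in dotted_path.split("."):
        if not isinstance(current, dict) or key not in current:
            return default
        current = current[key]
    return current


def find(docs, dotted_path, predicate):
    """Return documents whose field exists and satisfies the predicate."""
    matches = []
    for doc in docs:
        value = get_path(doc, dotted_path)
        if value is not None and predicate(value):
            matches.append(doc)
    return matches


high_speed = find(machine_docs, "spindle.max_rpm", lambda rpm: rpm > 15000)
with_coolant = find(machine_docs, "coolant.pressure_bar", lambda p: p >= 50)
```

Documents that lack a field are simply skipped by the query rather than breaking it, which is the property that lets digital twin models grow attribute by attribute.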

Strategic Value and Outcome

- Supports Digital Twins & Flexibility: Document databases provide the agility needed to maintain dynamic digital twin models and adapt to evolving machine configurations, enabling responsive and flexible manufacturing operations.

4. Relationship-Centric Manufacturing Data

In complex manufacturing and supply chain environments, understanding the relationships between entities is as critical as the data itself.

Characteristics of Relationship-Centric Manufacturing Data

- Interconnected Entities: Data points are often highly dependent on other data points, representing complex relationships.

- Graph Traversal: Queries frequently involve navigating through multiple layers of relationships to uncover insights.

- Examples: Supply chain dependencies (suppliers, components, products), root cause analysis (linking machine failures to specific components or processes), process flows, bill of materials (BOM) explosions.

Recommended Database Architecture and Rationale

- Graph Databases: Graph databases, like Neo4j or Amazon Neptune, are specifically designed to store and query relationships efficiently. They represent data as nodes (entities) and edges (relationships), making it intuitive to model and traverse complex interdependencies. This architecture is invaluable for tasks such as identifying critical paths in a supply chain or tracing the origin of a product defect.
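The node-and-edge model can be sketched with a plain adjacency list and a breadth-first traversal. The supplier, component, and product names below are invented, and a graph database would express the same question as a declarative traversal query instead of hand-written code.

```python
from collections import deque

# Hypothetical dependency graph: each key feeds into the items in its list.
supplies = {
    "Supplier-X": ["Bearing-6204"],
    "Supplier-Y": ["Housing-H2"],
    "Bearing-6204": ["Motor-M1", "Gearbox-G7"],
    "Housing-H2": ["Gearbox-G7"],
    "Motor-M1": ["Conveyor-C3"],
    "Gearbox-G7": ["Conveyor-C3", "Mixer-MX9"],
}


def downstream(graph, start):
    """Breadth-first traversal: everything that depends on `start`."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        node = queue.popleft()
        for dependent in graph.get(node, []):
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order


# Which components and products are exposed if Supplier-X is disrupted?
impacted = downstream(supplies, "Supplier-X")
print(impacted)
```

Answering the same question in a relational schema typically needs recursive joins whose cost grows with depth. That is why multi-hop questions like this one are the usual argument for a graph store.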

Strategic Value and Outcome

- Supply Chain Intelligence & Digital Thread: Graph databases enable powerful supply chain analytics, helping identify bottlenecks, predict disruptions, and optimize logistics. They are also fundamental for establishing a true digital thread, providing end-to-end traceability of products and processes.

5. Large Industrial Data Archives

Modern manufacturing generates petabytes of historical data, including machine vision images, video streams, log files, and other unstructured or semi-structured data, essential for AI/ML training and long-term analysis.

Characteristics of Large Industrial Data Archives

- Massive Volume: Data sets can grow to enormous sizes (terabytes to petabytes), requiring cost-effective storage solutions.

- Diverse Formats: Can include images, video, audio, unstructured text logs, and other binary data.

- Infrequent Individual Access: Individual archive items are rarely read during real-time operations, but bulk reads for analytics and AI model training are common.

- Examples: Machine vision images for quality inspection, video recordings of assembly lines, historical sensor data (after initial processing), detailed machine logs, CAD files, engineering documents.

Recommended Database Architecture and Rationale

- Data Lakes / Object Storage: Data lakes, often built on object storage solutions like Amazon S3, Google Cloud Storage, or Azure Blob Storage, provide scalable, low-cost storage for massive and diverse datasets. They can store data in its raw format, making it suitable for future analytical needs, even if those needs are not yet fully defined. This forms the essential foundation for big data analytics and AI/ML training.
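Raw storage stays usable only if objects can be found again later, so data lakes usually rely on a predictable key layout. The sketch below assumes a `key=value` partitioned naming convention, which is common practice but not something the framework prescribes. The `object_key` helper and all names in it are hypothetical.

```python
from datetime import datetime, timezone
from pathlib import PurePosixPath


def object_key(site, line, data_type, captured_at, filename):
    """Build a partitioned object key so bulk reads can filter by prefix."""
    ts = captured_at.astimezone(timezone.utc)  # normalize to UTC before partitioning
    return str(
        PurePosixPath(
            "raw",
            f"site={site}",
            f"line={line}",
            f"type={data_type}",
            f"year={ts:%Y}",
            f"month={ts:%m}",
            f"day={ts:%d}",
            filename,
        )
    )


key = object_key(
    "plant-a",
    "line-3",
    "vision",
    datetime(2024, 5, 17, 14, 30, tzinfo=timezone.utc),
    "frame_000123.png",
)
print(key)
# raw/site=plant-a/line=line-3/type=vision/year=2024/month=05/day=17/frame_000123.png
```

With a layout like this, a training job that needs one line's vision images for one month can list a single prefix instead of scanning the whole archive.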

Strategic Value and Outcome

- Foundation for Industrial Data Science: Data lakes and object storage form the core infrastructure for advanced industrial data science initiatives, enabling the training of sophisticated AI and machine learning models for anomaly detection, predictive analytics, and process optimization.

6. AI Knowledge & Semantic Search

As AI moves to the forefront of Industry 4.0, there is a growing need for systems that can store and retrieve information based on meaning and context, powering AI assistants and knowledge systems.

Characteristics of AI Knowledge & Semantic Search

- Semantic Similarity: The primary access pattern is based on the meaning or conceptual similarity of data rather than exact keyword matches.

- Embeddings: Data is often represented as high-dimensional numerical vectors (embeddings) generated by machine learning models.

- High-Performance Search: Requires fast similarity searches across large sets of these embeddings.

- Examples: Maintenance manuals, standard operating procedures, expert system knowledge bases, design specifications, customer feedback, and incident reports, all encoded as embeddings so that AI copilots can retrieve them by meaning.

Recommended Database Architecture and Rationale

- Vector Databases: Vector databases are a relatively new class of databases specifically optimized for storing and querying vector embeddings. They enable fast similarity searches, which are fundamental for semantic search, recommendation systems, and AI-powered knowledge retrieval. They are becoming critical infrastructure for building next-generation AI copilots and intelligent assistants in manufacturing. Examples include Pinecone, Weaviate, and Milvus.
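Similarity search itself is simple to state. The sketch below ranks documents by cosine similarity using brute force over toy three-dimensional vectors. The titles and numbers are invented, real embeddings come from a machine learning model and have hundreds or thousands of dimensions, and vector databases typically replace the full scan with an approximate nearest-neighbor index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical document titles with made-up 3-dimensional "embeddings".
documents = {
    "Pump bearing replacement procedure": [0.9, 0.1, 0.0],
    "Conveyor belt tensioning guide": [0.1, 0.9, 0.1],
    "Lockout/tagout safety checklist": [0.0, 0.2, 0.9],
}


def top_k(query_vec, docs, k=2):
    """Return the k document titles most similar to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), title) for title, vec in docs.items()]
    scored.sort(reverse=True)
    return [title for _, title in scored[:k]]


# Stand-in for the embedding of a question such as "how do I fix a noisy pump?"
results = top_k([0.8, 0.2, 0.1], documents, k=2)
print(results)
```

The query shares no keywords with the stored titles; ranking depends only on how close the vectors are, which is what distinguishes semantic search from exact keyword matching.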

Strategic Value and Outcome

- Enables AI Copilots & Knowledge Systems: Vector databases are essential for deploying intelligent AI assistants that can understand natural language queries, retrieve relevant information from vast knowledge bases, and support human operators with contextual insights, thereby enhancing decision-making and operational efficiency.

Strategic Implementation Framework for Leaders

Having understood the diverse data workloads, the next crucial step for manufacturing leaders is to implement a strategic framework for their data architecture. This framework ensures that the right data is managed by the right system, ultimately driving maximum value from Industry 4.0 investments.

1. Identify the Primary Workload

The journey begins with a clear understanding of the core nature of the data being handled. This is arguably the most critical step, as mischaracterizing a workload will lead to suboptimal architectural choices.

Focus on Intent and Use Case

It’s essential to look beyond the raw data itself and consider its primary purpose and how it will be used. For example, while sensor data might eventually be archived, its primary workload is high-frequency telemetry for real-time monitoring.

Ask Key Questions

- What is the main objective of collecting this data? (e.g., controlling a machine, tracking an order, analyzing long-term trends, training an AI model).

- Who are the primary users of this data, and what are their immediate needs?

- What happens if this data is unavailable or inconsistent?

- Is this data primarily structured, semi-structured, or unstructured?

By clearly defining the primary workload, organizations can avoid the common pitfall of trying to make a single database fit all possible needs, which inevitably leads to compromises in performance, scalability, and functionality.

2. Evaluate Data Characteristics

Once the primary workload is identified, the next step is to rigorously analyze its specific characteristics. This deep dive provides the necessary information to select the most appropriate database technology.

Key Characteristics to Evaluate

- Velocity: How frequently is data generated, ingested, and queried? Is it a continuous stream of high-frequency events (e.g., sensor data), or is it updated periodically (e.g., transactional data)? High-velocity data demands architectures optimized for rapid writes and reads.

- Structural Schema (Fixed vs. Evolving): Is the data structure rigid and unlikely to change (e.g., relational tables for ERP), or is it flexible and prone to evolution (e.g., metadata for digital twins)? Fixed schemas benefit from relational databases, while evolving schemas are better suited for document stores.

- Data Volume: How much data will be stored? Is it gigabytes, terabytes, or petabytes? This impacts storage costs, retrieval times, and the choice of scalable solutions like data lakes.

- Complexity of Required Analytics: What kind of analysis will be performed on this data? Simple aggregations, complex joins, time-series analysis, graph traversals, or semantic similarity searches? The nature of the analytics directly influences the database’s querying capabilities.

- Consistency Requirements: What level of data consistency is needed? Strong consistency (ACID) for transactional data, or eventual consistency for large-scale, high-velocity data?

- Latency Requirements: How quickly does the data need to be available for use and analysis? Real-time operational data has much stricter latency requirements than archived historical data.

This comprehensive evaluation ensures that the chosen architecture aligns with the technical demands of the data, preventing performance bottlenecks and scalability issues down the line.
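The evaluation can be captured as a simple checklist in code. The sketch below is one possible encoding of the six workload-to-architecture pairings described earlier; the `WorkloadProfile` fields and the `recommend` function are hypothetical simplifications, and a real assessment would also weigh volume, latency, and cost.

```python
from dataclasses import dataclass


@dataclass
class WorkloadProfile:
    """Yes/no answers gathered while evaluating a workload (simplified)."""
    name: str
    needs_acid: bool = False
    time_indexed_append_only: bool = False
    evolving_schema: bool = False
    relationship_traversal: bool = False
    bulk_unstructured_archive: bool = False
    semantic_similarity_search: bool = False


def recommend(profile: WorkloadProfile) -> list[str]:
    """Map each characteristic that applies to its matching architecture."""
    rules = [
        (profile.needs_acid, "relational database"),
        (profile.time_indexed_append_only, "time-series database"),
        (profile.evolving_schema, "document database"),
        (profile.relationship_traversal, "graph database"),
        (profile.bulk_unstructured_archive, "data lake / object storage"),
        (profile.semantic_similarity_search, "vector database"),
    ]
    return [architecture for applies, architecture in rules if applies]


vibration = WorkloadProfile("vibration monitoring", time_indexed_append_only=True)
mixed = WorkloadProfile(
    "line telemetry with financial reporting",
    needs_acid=True,
    time_indexed_append_only=True,
)

print(recommend(vibration))  # ['time-series database']
print(recommend(mixed))      # ['relational database', 'time-series database']
```

A profile that matches more than one rule is a signal that the workload should be split across specialized stores rather than forced into one.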

3. Match the Right Architecture

With a clear understanding of the workload and its characteristics, organizations can now align these requirements with the database architectures recommended for each of the six workloads above, which together form the Manufacturing Data Decision Matrix.

Avoiding “Flavor of the Month” Pitfalls

It’s crucial to select technologies based on their inherent strengths and how they address the evaluated data characteristics, rather than simply adopting the latest trend. Each database type (Relational, Time-Series, Document, Graph, Data Lake/Object, Vector) is optimized for specific use cases, and leveraging these optimizations is key to building an efficient and powerful data architecture.

Iterative Matching Process

This step is often iterative. For example, if a workload primarily involves sensor data but also requires occasional complex financial reporting, it might necessitate a combination of a time-series database for real-time telemetry and a relational database for financial transactions, with robust data integration between them.

4. Reject Monolithic Data Strategies

A recurring observation among industry practitioners is that many Industrial IoT deployments fail to generate meaningful ROI not because of technology limitations, but because the architecture prioritizes connectivity over financial outcomes and leaves data stranded in silos. Forcing all manufacturing data types into a single, suboptimal platform is a closely related strategic error, and a pervasive one, that hinders true digital transformation.

Why Monolithic Fails

- Compromised Performance: A single database attempting to handle high-velocity sensor data, complex relational transactions, and flexible document metadata will inevitably struggle. Performance will suffer across the board.

- Increased Complexity: While seemingly simpler initially, trying to contort one technology to meet diverse needs often results in complex workarounds, brittle integrations, and higher maintenance costs.

- Limited Scalability: Different data types scale differently. A monolithic approach creates bottlenecks where one workload’s scalability limits the entire system.

- Suboptimal Analytics: Tools designed for one type of data (e.g., SQL for relational data) are inefficient or even incapable of performing advanced analytics on other types of data (e.g., graph traversals or semantic search).

- ROI Killer: Data silos, often a byproduct of monolithic strategies, are the single biggest ROI killer in IIoT deployments. When architecture is driven by connectivity rather than outcomes, systems collect data without generating value.

Embracing specialized databases for specialized workloads is not about adding complexity; it’s about embracing efficiency and unlocking the full potential of each data type.

5. Design Hybrid Data Architectures

The ultimate goal of this framework is to guide organizations towards designing hybrid data architectures. This involves creating a unified environment where multiple specialized databases coexist, working in concert to support both real-time operational needs and advanced AI/ML capabilities simultaneously.

Principles of Hybrid Architectures

- Data Integration and Orchestration: While specialized databases handle specific workloads, robust data integration layers are crucial. Technologies like message brokers (e.g., Kafka, Redpanda) and data virtualization platforms can act as central data hubs, decoupling producers from consumers and enabling scalable real-time data ingestion and distribution. The Unified Namespace (UNS) architecture is emerging as a powerful approach to eliminate data silos and accelerate ROI by creating a single source of truth for operational data.

- Edge-to-Cloud Continuum: Data processing and storage don’t have to happen solely in the cloud. Edge-first architectures reduce latency, lower cloud bandwidth costs, and enhance the responsiveness of critical industrial processes. Hybrid architectures intelligently distribute workloads between the edge, on-premises, and cloud environments.

- Data Governance and Security: A unified approach to data governance, security, and compliance across all specialized databases is paramount. This includes consistent access controls, data encryption, and auditing capabilities.

- Abstraction Layers: Implementing abstraction layers over the underlying databases allows applications to interact with data in a consistent manner, regardless of the storage technology. This promotes modularity and reduces coupling.

- The Three Pillars of IIoT: Connectivity, data collection and analysis, and automation and control form the foundation. Hybrid architectures enable robust connectivity for industrial devices (e.g., using protocol adapters like Apache PLC4X for legacy systems), efficient data collection and analysis across diverse data types, and intelligent automation fueled by insights.
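The abstraction-layer principle can be sketched as a thin routing interface. Everything here is hypothetical: `Store`, `InMemoryStore`, and `DataRouter` are illustrative names, and the in-memory lists stand in for real database adapters.

```python
from typing import Any, Protocol


class Store(Protocol):
    """Minimal interface every backing-store adapter is assumed to offer."""

    def write(self, record: dict[str, Any]) -> None: ...


class InMemoryStore:
    """Stand-in for a real adapter (relational, time-series, document, ...)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.records: list[dict[str, Any]] = []

    def write(self, record: dict[str, Any]) -> None:
        self.records.append(record)


class DataRouter:
    """Routes each record to the store registered for its workload type."""

    def __init__(self, routes: dict[str, Store]) -> None:
        self._routes = routes

    def publish(self, workload: str, record: dict[str, Any]) -> None:
        if workload not in self._routes:
            raise ValueError(f"no store registered for workload {workload!r}")
        self._routes[workload].write(record)


telemetry_store = InMemoryStore("time-series")
orders_store = InMemoryStore("relational")
router = DataRouter({"telemetry": telemetry_store, "transactional": orders_store})

router.publish("telemetry", {"asset": "PUMP-7", "ts": 1714000000, "vibration_mm_s": 2.4})
router.publish("transactional", {"order_id": "PO-1001", "qty": 250})
```

Applications call `publish` without knowing which technology sits behind each workload, so a store can be swapped by changing the routing table rather than the callers.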

By meticulously designing hybrid data architectures, manufacturing organizations can overcome the limitations of monolithic systems and create agile, intelligent, and resilient operations capable of truly harnessing the power of Industry 4.0.

The Critical Role of AI Readiness and Data Integration

The promise of Industry 4.0 often conjures images of factories humming with AI-powered robots and seamless automation. However, the path to AI readiness is paved with well-structured data. As the number of connected IoT devices grows from 18.8 billion in 2024 to an estimated 40 billion by 2030, the infrastructure decisions made today will determine who captures value from this growth.

AI Amplifies, Not Resolves, Inconsistency

A common misconception is that AI can magically resolve data inconsistencies. In reality, AI amplifies inconsistencies. If the input data is incomplete, time-misaligned, or lacks context, the AI outputs, while polished, may be unreliable. This erodes trust and makes validation difficult. Therefore, a robust data architecture that ensures data reliability, consistency, and contextual richness is a prerequisite for successful AI adoption.
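One practical consequence is to check data before it reaches a model. The sketch below flags the three problems named above (missing values, irregular timing, and missing context) in a batch of samples. The field names, tolerance, and the `find_quality_issues` helper are assumptions made for illustration.

```python
def find_quality_issues(samples, expected_interval_s, required_context, tolerance_s=0.5):
    """Return (index, description) pairs for samples that look unreliable."""
    issues = []
    for i, sample in enumerate(samples):
        # Incomplete: the reading itself is missing.
        if sample.get("value") is None:
            issues.append((i, "missing value"))
        # Lacks context: required descriptive fields are absent or empty.
        missing = [key for key in required_context if not sample.get(key)]
        if missing:
            issues.append((i, f"missing context: {', '.join(missing)}"))
        # Time-misaligned: spacing deviates from the expected sampling interval.
        if i > 0:
            gap = sample["ts"] - samples[i - 1]["ts"]
            if abs(gap - expected_interval_s) > tolerance_s:
                issues.append((i, f"irregular interval: {gap:.1f}s"))
    return issues


# Hypothetical pump temperature samples (ts in seconds).
samples = [
    {"ts": 0.0, "value": 71.2, "asset": "PUMP-7", "unit": "degC"},
    {"ts": 10.0, "value": None, "asset": "PUMP-7", "unit": "degC"},
    {"ts": 35.0, "value": 72.0, "asset": "PUMP-7"},
]

issues = find_quality_issues(samples, expected_interval_s=10.0, required_context=("asset", "unit"))
for index, description in issues:
    print(index, description)
```

A gate like this does not fix the data, but it keeps unreliable inputs from being silently turned into confident-looking outputs.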

Bridging the IT/OT Divide

Historically, operational technology (OT) and information technology (IT) systems operated in separate domains, leading to significant integration gaps and data silos. OT systems, focused on real-time control and safety, often used proprietary protocols and isolated networks. IT systems, managing business operations and enterprise data, were designed for different concerns.

The Manufacturing Data Architecture Decision Framework inherently addresses this IT/OT integration challenge by:

- Recognizing Specialized Needs: Acknowledging that OT data (like telemetry) has different requirements than IT data (like transactional records).

- Promoting Interoperability: Encouraging the use of modern data platforms and integration patterns (like message brokers and unified namespaces) that can bridge these historical divides.

- Fostering Collaboration: Providing a shared language and framework for IT and OT teams to collaborate on data strategy, ensuring security, change control, and ownership are managed holistically.

Without a repeatable pattern for publishing operational data across the IT/OT boundary, cross-system capabilities break down and performance across the plant network remains uneven. Investing in proper data architecture ensures that data moves reliably and carries the correct context across systems and sites.

The Power of the Unified Namespace (UNS) Architecture

The Unified Namespace (UNS) is emerging as a critical architectural pattern for eliminating data silos and creating a single, contextualized source of truth for industrial data. By integrating various data sources into a hierarchical, event-driven model, UNS allows data from different specialized databases to be contextualized and accessed uniformly. This significantly improves data portability, consistency, and reusability, directly contributing to higher project success rates and improved operational efficiency.

The integration of data from relational databases, time-series stores, document databases, graph databases, data lakes, and vector databases into a cohesive UNS allows for richer contextualization, enabling AI models to access a more complete and accurate view of the manufacturing environment.
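The hierarchical, event-driven model can be sketched as a tiny in-memory publish/subscribe hub. This assumes MQTT-style topic paths and wildcards (`+` for one level, `#` for everything below), which is a common way to implement a UNS but is not specified by the framework. The topic names, `Namespace` class, and the simplified `topic_matches` function are hypothetical.

```python
def topic_matches(pattern, topic):
    """Simplified MQTT-style match: '+' is one level, '#' is the rest."""
    pattern_parts = pattern.split("/")
    topic_parts = topic.split("/")
    for i, part in enumerate(pattern_parts):
        if part == "#":
            return True
        if i >= len(topic_parts):
            return False
        if part != "+" and part != topic_parts[i]:
            return False
    return len(pattern_parts) == len(topic_parts)


class Namespace:
    """In-memory stand-in for a broker hosting the unified namespace."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, pattern, handler):
        self._subscribers.append((pattern, handler))

    def publish(self, topic, payload):
        for pattern, handler in self._subscribers:
            if topic_matches(pattern, topic):
                handler(topic, payload)


uns = Namespace()
historian, dashboard = [], []

# A historian archives everything from one plant; a dashboard watches one tag.
uns.subscribe("acme/plant-a/#", lambda topic, payload: historian.append((topic, payload)))
uns.subscribe(
    "acme/+/packaging/line-3/filler/temperature",
    lambda topic, payload: dashboard.append(payload),
)

uns.publish("acme/plant-a/packaging/line-3/filler/temperature", {"value": 71.5, "unit": "degC"})
uns.publish("acme/plant-a/machining/cnc-01/spindle/rpm", {"value": 11800})
```

Both consumers receive data without the publishing devices knowing they exist. New stores, such as a time-series database or a data lake writer, can be added as further subscribers without touching the producers.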

Measuring Success: ROI and Project Outcomes

The strategic implementation of a hybrid data architecture directly impacts the return on investment (ROI) of Industry 4.0 initiatives. While many deployments focus on connectivity, truly successful projects prioritize financial outcomes.

Organizations that adopt structured IIoT deployment frameworks, such as the one described here, consistently achieve higher project success rates, often between 80% and 90%, and realize significant improvements in operational efficiency, typically in the range of 25% to 40%.

Tangible Benefits and ROI

- Positive ROI within 12-24 Months: For critical asset monitoring, initial investments in the range of $200K to $800K commonly generate $1M to $3M in annual operational improvements within this timeframe.

- Reduced Development Time and Risk: Reusable building blocks and a shared understanding of the architecture significantly reduce project time and the inherent risks associated with complex integration efforts.

- Faster AI Adoption: By providing clean, contextualized data from specialized sources, hybrid architectures accelerate the development, training, and deployment of AI models, leading to quicker realization of AI-driven benefits.

- Scalable Digital Twins: Flexible operational metadata management and robust data lakes enable the creation and evolution of highly accurate and scalable digital twins, which are crucial for simulation, optimization, and predictive analytics.

- Real-time Operational Intelligence: Optimized time-series and relational databases ensure that operational data is processed and presented in real-time, allowing for immediate corrective actions and proactive decision-making.

- Resilient Manufacturing Ecosystems: Graph databases provide deep insights into supply chain dependencies, enhancing resilience and enabling faster root cause analysis, while data archives support continuous improvement and future innovation.

The Future Belongs to Architecturally Intelligent Manufacturers

The era of “one-size-fits-all” data solutions in manufacturing is over. As Industry 4.0 continues its relentless advance, the success of digital transformation initiatives will increasingly hinge on the strategic decisions made regarding data architecture. Factors that contribute to Industry 4.0 success include strong governance for security, performance, and compliance, and reusable building blocks for development. The complexity of managing diverse data types, from high-velocity sensor signals to intricate supply chain relationships and specialized AI knowledge bases, demands a sophisticated, hybrid approach.

Manufacturing leaders who embrace “The Manufacturing Data Architecture Decision Framework” will not only navigate this complexity but will also gain a formidable competitive advantage. By treating data platforms strategically and meticulously matching workloads with the right architectures, organizations will unlock:

- Faster AI adoption: Empowering intelligent automation, predictive capabilities, and advanced decision support.

- Scalable digital twins: Enabling comprehensive virtual representations of physical assets and processes for simulation and optimization.

- Real-time operational intelligence: Providing immediate, actionable insights to optimize production, quality, and maintenance.

- Resilient manufacturing ecosystems: Building robust supply chains and operational models capable of withstanding disruptions and adapting to change.

- Reduced total cost of ownership: By utilizing purpose-built databases that are inherently more efficient for their specific workloads.

The future of smart manufacturing belongs to those organizations that understand a simple, yet profound, principle: The right data architecture determines the success of Industry 4.0. It’s not merely about deploying new technology; it’s about designing the foundational data infrastructure that allows those technologies to thrive and generate measurable value.

Ready to architect your manufacturing future?

Navigating the complexities of Industrial IoT and robust data architectures can be challenging. At IoT Worlds, we specialize in guiding manufacturing leaders through this transformative journey, helping you design and implement data strategies that deliver tangible ROI and propel your Industry 4.0 initiatives forward.

For a strategic consultation on optimizing your manufacturing data architecture and unlocking the full potential of your operations, reach out to us today.

Email us at info@iotworlds.com to start the conversation.