For decades, the bedrock of our digital world has been the data center. These colossal, meticulously engineered facilities have served as the silent engines behind enterprise applications, transactional databases, and predictable compute workloads. They were built for general efficiency, sequential processing, and broad connectivity, which suited the demands of the pre-AI era. However, a seismic shift is underway, irrevocably altering the landscape of digital infrastructure. The advent of artificial intelligence (AI), particularly the deep learning models and large language models (LLMs) that define our current technological epoch, has rendered this traditional architecture fundamentally obsolete for industrial-scale intelligence production.

Many organizations, unfortunately, find themselves at a critical juncture, attempting to power the next generation of industrial intelligence with infrastructure designed for a bygone era. This “AI on top of data” strategy—retrofitting AI workloads onto existing, ill-suited data centers—is not merely inefficient; it’s a strategic blind spot that threatens to stifle innovation and competitiveness. The truth is, AI systems operate on an entirely different set of principles: massive parallel computation, high-throughput data pipelines, and ultra-low latency communication across thousands of specialized processors. These demands necessitate a complete rethinking of data center design, moving from a repository for information to a dynamic factory for intelligence.

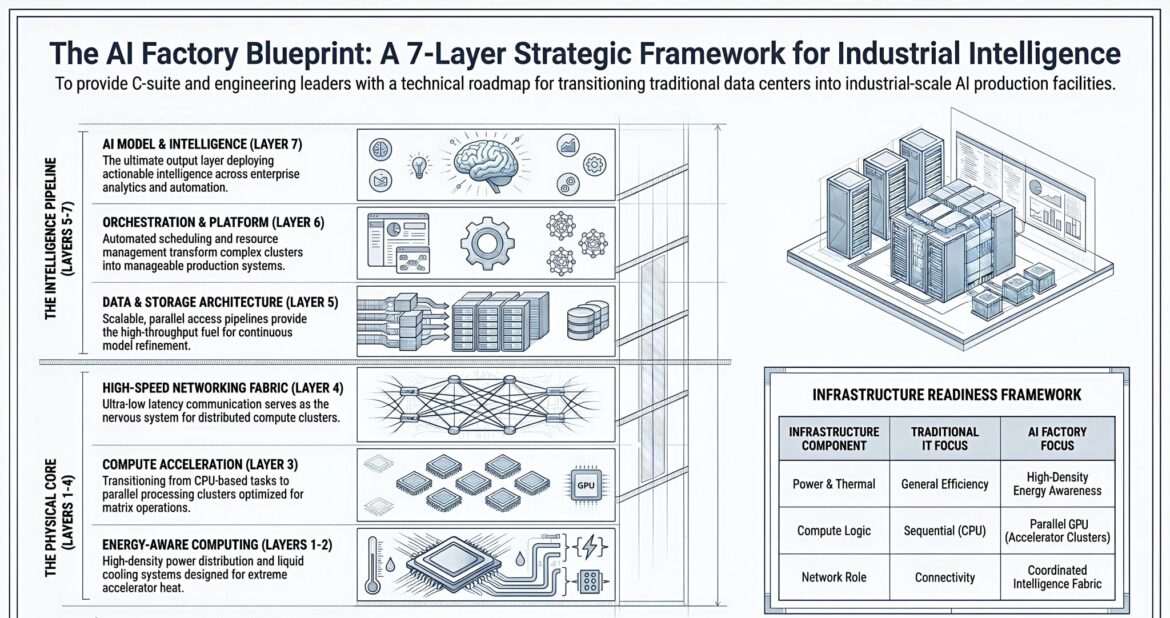

The future of industrial intelligence lies not in patching up old systems but in building entirely new paradigms. It demands the rise of the AI Factory, an infrastructure environment meticulously engineered to manufacture intelligence at an industrial scale, much as traditional factories produce physical goods. This article will delve into why traditional data center architecture is technically extinct for AI workloads, introducing the AI Factory Blueprint: A 7-Layer Strategic Framework for Industrial Intelligence. This framework provides C-suite executives and engineering leaders with a clear technical roadmap for transitioning towards these intelligence production facilities, ensuring they are equipped to harness the true power of AI.

The Paradigm Shift: From Data Center to AI Factory

For two decades, data centers were conceived as sophisticated hotels for servers—flexible for human management but increasingly inefficient for the specialized demands of modern AI workloads. As of early 2026, this paradigm has definitively collapsed. The fundamental purpose of these facilities has transformed. Instead of simply storing and serving data, they are now designed to ingest raw data, refine it, and produce intelligence. Companies are no longer just running applications; they are manufacturing intelligence itself, operating what NVIDIA CEO Jensen Huang aptly termed “AI factories”.

This transformation is driven by exploding demand for frontier model training and massive-scale inference, giving rise to an “AI-Native Design” philosophy. These are no longer mere IT facilities; they are purpose-built AI Factories, an infrastructure revolution that converts electrons into digital intelligence. The scale of the shift is staggering: a traditional enterprise data center with 500 racks at 5-10 kW each might total 2.5-5 MW of IT power, whereas 500 racks of NVIDIA GB300 NVL72 systems can demand 65-70 MW, or roughly 130-140 kW per rack. The engineering required to power, cool, and network such densities has rendered much of the existing global data center stock functionally obsolete for AI workloads.
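The comparison above is simple multiplication, sketched below. The 130-140 kW per-rack value is back-calculated from the article's own 65-70 MW total for 500 racks; it is an assumption for illustration, not a figure taken from a vendor specification.

```python
def facility_it_power_mw(racks: int, kw_per_rack: float) -> float:
    """Total IT load in megawatts for a given rack count and per-rack draw in kW."""
    return racks * kw_per_rack / 1000.0


def implied_kw_per_rack(total_mw: float, racks: int) -> float:
    """Back out the average per-rack draw (kW) from a facility-level total (MW)."""
    return total_mw * 1000.0 / racks


RACKS = 500
TRAD_KW_PER_RACK = (5, 10)  # traditional enterprise racks (range from the text)

trad_low, trad_high = (facility_it_power_mw(RACKS, kw) for kw in TRAD_KW_PER_RACK)

# Per-rack draw implied by the text's 65-70 MW total for 500 AI racks (assumption).
ai_low, ai_high = implied_kw_per_rack(65, RACKS), implied_kw_per_rack(70, RACKS)

print(f"Traditional facility: {trad_low:.1f}-{trad_high:.1f} MW")
print(f"Implied AI rack draw: {ai_low:.0f}-{ai_high:.0f} kW per rack")
```

Working backwards from facility totals like this is a quick sanity check on any density claim before committing to electrical designs.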

Defining the AI Factory

An AI Factory is not merely a larger data center; it’s a fundamentally different beast. It is an infrastructure environment explicitly designed for the industrial-scale production of intelligence. The analogy to a manufacturing plant is not just a metaphor; it’s an operating model. Just as a factory takes raw materials and transforms them into finished goods, an AI Factory ingests data, energy, and compute power as its primary inputs. Through a complex sequence of processes involving specialized compute, high-bandwidth storage, and low-latency networking, it transforms these inputs into actionable intelligence, which then powers operations, automation, and decision systems.

The core distinction lies in its specialized purpose. These factories are dense, orchestrated systems where thousands of GPUs, specialized processors, high-bandwidth storage, and low-latency networking function as a single, cohesive organism. Every stage of the AI lifecycle—from data ingestion and training to inference and monitoring—is handled under one roof, moving away from disparate silos towards a truly integrated production pipeline. This transition from general-purpose to purpose-built infrastructure is not optional; it’s a commercial imperative driven by the need to train models with trillions of parameters.

The Implosion of Traditional Data Center Assumptions

The transformation from traditional data centers to AI Factories fundamentally alters several long-held infrastructure assumptions:

- Power & Thermal Design: Traditional data centers prioritize general efficiency; AI Factories demand high-density energy awareness. An AI rack can easily demand 60 kW or more, sometimes exceeding 100 kW, compared to a typical 5-10 kW for traditional racks. This dramatic increase challenges existing electrical infrastructure and necessitates advanced cooling solutions.

- Compute Architectures: The focus shifts from sequential, CPU-based processing to parallel processing on clusters of GPUs and other accelerators. CPUs excel at sequential tasks, while AI training thrives on the massive parallelism of GPUs and specialized AI accelerators.

- Networking Role: Networking evolves from basic connectivity into a coordinated intelligence fabric. Traditional networks, optimized for client-server communication, lack the high-bandwidth, low-latency, east-west connectivity that AI workloads need to exchange data constantly and rapidly between GPUs, storage, and compute nodes.

The crisis for AI factories, as described by Nithin, is that our old ways of connecting compute components have reached their limits. GPUs, acting like “stadiums of thousands of workers,” require a massive, continuous supply of data every microsecond. If the memory is too far away or the CPU too slow, these expensive hardware resources sit idle, starved for information. This has led to the deployment of specialized platforms such as NVIDIA's Blackwell B200 and Rubin architectures, which are not just faster chips but new ways of packing the “manager, workforce, and memory into a single tight space”.

The Physical Core: Layers 1-4 of the AI Factory Blueprint

The foundational layers of an AI Factory blueprint constitute its “Physical Core.” These layers address the fundamental physics and engineering challenges of concentrating and managing immense computational power to drive intelligence production. Without a robust and purpose-built physical core, the promises of AI at scale remain just that – promises.

Energy-Aware Computing (Layers 1-2): Powering the Intelligence Engine

The first and arguably most critical foundational shift in AI Factory architecture is in its approach to power and thermal management. Traditional data centers focused on general efficiency, aiming to reduce overall power consumption while maintaining operational stability. In contrast, AI Factories demand high-density energy awareness. This is not merely about providing more power; it’s about intelligent, localized, and ultra-efficient distribution and cooling of unprecedented energy concentrations.

The Wattage Wake-Up Call

The computational intensity of modern AI hardware, particularly GPUs and specialized accelerators, results in extraordinarily high power consumption. While a traditional data center rack might draw 5-10 kW, an AI rack can easily demand 60 kW or more, often exceeding 100 kW. That is roughly a sixfold to twentyfold increase in power density. Electrical infrastructure that was never designed for such concentrated loads is largely unsuitable, and retrofitting it is often cost-prohibitive and complex, leading to underutilized capacity or an outright inability to host cutting-edge AI hardware.

The demand for power is so intense that an AI factory must concentrate the electricity of a small city into a single room to keep its “thinking synchronized”. Hyperscalers’ capital expenditure (CAPEX) is projected to exceed $600 billion in 2026, a 36% year-over-year increase, largely driven by the demands of AI infrastructure. This underscores the scale of investment required to power these new intelligence engines.

The Cooling Conundrum: From Air to Liquid

With extreme power density comes extreme heat. The thermal output of high-density AI hardware quickly overwhelms conventional air-cooling systems such as computer room air conditioners and air handlers (CRACs/CRAHs). This leads to dangerous hotspots, reduced hardware lifespan, and increased risk of operational failure. The AI revolution necessitates a thermodynamic revolution.
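A back-of-the-envelope heat balance shows why air runs out of headroom. The sketch below applies the steady-state relation Q = m_dot * c_p * dT to one rack. It assumes a 100 kW rack, textbook property values for air and water near room temperature, and illustrative coolant temperature rises (15 K for air, 10 K for water); none of these numbers come from the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    specific_heat_j_per_kg_k: float  # c_p
    density_kg_per_m3: float


AIR = Coolant("air", 1005.0, 1.2)        # approximate, near room temperature
WATER = Coolant("water", 4186.0, 997.0)  # approximate, near room temperature


def volumetric_flow_m3_per_s(heat_w: float, coolant: Coolant, delta_t_k: float) -> float:
    """Flow needed to carry heat_w away with a coolant temperature rise of delta_t_k.

    Mass flow comes from Q = m_dot * c_p * dT; dividing by density gives volume flow.
    """
    mass_flow_kg_per_s = heat_w / (coolant.specific_heat_j_per_kg_k * delta_t_k)
    return mass_flow_kg_per_s / coolant.density_kg_per_m3


RACK_HEAT_W = 100_000  # assumed 100 kW rack; nearly all electrical power ends up as heat

air = volumetric_flow_m3_per_s(RACK_HEAT_W, AIR, delta_t_k=15)
water = volumetric_flow_m3_per_s(RACK_HEAT_W, WATER, delta_t_k=10)

print(f"Air:   {air:.2f} m^3/s of airflow")
print(f"Water: {water * 1000 * 60:.0f} L/min of coolant")
print(f"Air needs roughly {air / water:,.0f}x the volume of water")
```

The gap of more than three orders of magnitude in volume flow is the physical reason fans and raised floors stop scaling while a modest coolant loop does not.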

The solution lies in advanced cooling technologies, primarily direct-to-chip liquid cooling or immersion cooling. These systems remove heat directly at its source, preventing thermal runaway and allowing high-performance components to run at sustained load. Liquid cooling is no longer a niche solution; it is becoming the benchmark. Liquid-cooled facilities can reach a PUE (Power Usage Effectiveness, total facility power divided by IT equipment power) of around 1.10, compared with roughly 1.6 for traditional air-cooled facilities, meaning far less energy is spent on overhead such as cooling. Hyperscale adoption of liquid cooling is surging, rising from 18% in 2021 to 52% in 2026. Implementing these cooling solutions in older facilities frequently requires complete overhauls, which highlights the need for purpose-built AI factories.
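To see what the PUE gap means in practice, the sketch below converts the two PUE values from the text into non-IT overhead for a facility. The 10 MW IT load and constant year-round operation are assumptions for illustration.

```python
HOURS_PER_YEAR = 8760


def total_facility_power_mw(it_load_mw: float, pue: float) -> float:
    """PUE = total facility power / IT equipment power, so total = IT load * PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue


def overhead_mw(it_load_mw: float, pue: float) -> float:
    """Power spent on cooling, power conversion, and other non-IT loads."""
    return total_facility_power_mw(it_load_mw, pue) - it_load_mw


IT_LOAD_MW = 10.0  # hypothetical IT load, assumed constant all year

air = overhead_mw(IT_LOAD_MW, 1.6)
liquid = overhead_mw(IT_LOAD_MW, 1.10)
saved_mwh_per_year = (air - liquid) * HOURS_PER_YEAR

print(f"Overhead at PUE 1.6:  {air:.1f} MW")
print(f"Overhead at PUE 1.10: {liquid:.1f} MW")
print(f"Energy avoided: {saved_mwh_per_year:,.0f} MWh per year")
```

Because overhead is (PUE - 1) times the IT load, the saving grows linearly with facility size, which is why it matters most at AI Factory scale.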

The ability to manage and dissipate heat effectively is directly tied to an AI factory’s operational efficiency and hardware longevity. Without robust energy-aware computing at its core, an AI factory cannot perform its primary function.

Compute Acceleration (Layer 3): The Parallel Processing Imperative

Layer 3 of the AI Factory Blueprint represents the heart of its intelligence production capability: Compute Acceleration. This layer signifies the fundamental transition from CPU-based tasks to parallel processing clusters, specifically optimized for the matrix operations that underpin modern AI.

Beyond the CPU: The Rise of the GPU

Traditional data centers relied predominantly on CPUs, which are excellent at sequential task processing. However, AI training, particularly deep learning, demands massive parallel processing. This is where GPUs and specialized AI accelerators (like TPUs or ASICs) become indispensable. These processors, featuring thousands of cores, are far more efficient for AI workloads than traditional CPUs.

Think of a CPU as a brilliant manager handling tasks one by one, while a GPU is like a stadium of thousands of workers performing mathematical operations in unison. A single AI server rack can house dozens of these powerful accelerators, a stark contrast to older CPU-centric architectures. This shift to GPU-centric acceleration is not merely a component upgrade; it’s a complete architectural reorientation.
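The “stadium of workers” analogy rests on one property: in a matrix multiply, each output element is an independent dot product, so all of them can be computed at once. The sketch below contrasts a one-at-a-time loop with a version that hands every output element to a pool of workers. It illustrates the decomposition only: CPython threads are limited by the global interpreter lock, so this will not run faster, whereas a GPU executes thousands of such tasks simultaneously in hardware.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product
from typing import List, Tuple

Matrix = List[List[float]]


def matmul_sequential(a: Matrix, b: Matrix) -> Matrix:
    """CPU-style: compute output elements one after another."""
    n, k, m = len(a), len(b), len(b[0])
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
            for i in range(n)]


def matmul_parallel(a: Matrix, b: Matrix, workers: int = 8) -> Matrix:
    """Accelerator-style decomposition: every output element is an independent task."""
    n, k, m = len(a), len(b), len(b[0])

    def cell(ij: Tuple[int, int]) -> float:
        i, j = ij
        return sum(a[i][p] * b[p][j] for p in range(k))

    with ThreadPoolExecutor(max_workers=workers) as pool:
        flat = list(pool.map(cell, product(range(n), range(m))))
    # pool.map returns results in input order, so reshape row by row.
    return [flat[i * m:(i + 1) * m] for i in range(n)]


print(matmul_parallel([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

No task depends on any other, so the only limit on parallelism is how many workers the hardware provides, which is exactly where thousands of GPU cores pay off.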

The Crisis of Distance: Memory Walls and Accelerator Architectures

The speed and parallelism of GPUs create a critical bottleneck: the “crisis of distance,” or the “memory wall.” In traditional computing, RAM located inches from the processor was acceptable. For a modern AI factory, those few inches become a debilitating distance: even though signals travel at a sizable fraction of the speed of light, the latency and limited bandwidth of board-level memory links mean data cannot reach a continuously hungry GPU fast enough to keep it busy. The result is extremely expensive hardware sitting idle, waiting for information.
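The memory wall can be quantified with the roofline model, a standard performance model that the article does not itself invoke: attainable throughput is the lesser of peak compute and memory bandwidth multiplied by arithmetic intensity (FLOPs per byte moved). The sketch assumes a hypothetical accelerator with 1,000 TFLOP/s and 8 TB/s; these are round illustrative numbers, not a specification for B200 or any other product.

```python
PEAK_FLOPS = 1.0e15      # hypothetical peak: 1,000 TFLOP/s
MEM_BANDWIDTH = 8.0e12   # hypothetical memory bandwidth: 8 TB/s
BYTES_PER_ELEM = 2       # 16-bit values (fp16/bf16)


def attainable_flops(intensity: float) -> float:
    """Roofline: capped by peak compute or by bandwidth * FLOPs-per-byte."""
    return min(PEAK_FLOPS, MEM_BANDWIDTH * intensity)


def matvec_intensity(n: int) -> float:
    """n x n matrix times a vector: each weight is read once for one multiply-add."""
    flops = 2 * n * n
    bytes_moved = BYTES_PER_ELEM * (n * n + 2 * n)  # matrix + input + output vectors
    return flops / bytes_moved


def matmul_intensity(n: int) -> float:
    """n x n times n x n, assuming each operand is read once and the result written once."""
    flops = 2 * n ** 3
    bytes_moved = BYTES_PER_ELEM * 3 * n * n
    return flops / bytes_moved


ridge = PEAK_FLOPS / MEM_BANDWIDTH  # FLOPs/byte needed before compute becomes the limit
for label, intensity in [("matrix-vector", matvec_intensity(8192)),
                         ("matrix-matrix", matmul_intensity(8192))]:
    share = attainable_flops(intensity) / PEAK_FLOPS
    print(f"{label}: {intensity:.1f} FLOP/byte -> {share:.1%} of peak (ridge {ridge:.0f})")
```

Low-intensity work such as matrix-vector products is bound by memory bandwidth and leaves most of the compute idle, which is why packaging HBM next to the GPU matters as much as raw FLOPs.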

To overcome this, AI Factories deploy specialized platforms that tightly integrate the “manager” (CPU), “workforce” (GPU), and memory into a single, cohesive unit. Architectures like NVIDIA’s Blackwell B200 and Rubin are designed to pack these components closer together, minimizing latency and maximizing throughput. This involves innovative packaging technologies, such as Chip-on-Wafer-on-Substrate (CoWoS) and High Bandwidth Memory (HBM), which allow memory and processing units to communicate over extremely short, high-speed interfaces. The goal is to ensure that the GPU stadium is always full and performing at peak capacity.

High-Speed Networking Fabric (Layer 4): The Nervous System of Intelligence

Layer 4, the High-Speed Networking Fabric, serves as the nervous system of the AI Factory. Its role is to provide ultra-low latency communication, ensuring that all distributed compute clusters can communicate seamlessly and efficiently, functioning as a single, massive computational unit.

The Data Superhighway: Bandwidth and Latency for AI

AI models are voraciously data-hungry. They require constant, high-speed data exchange between GPUs, between different compute nodes, and with storage systems. Traditional networks, optimized for client-server communication and north-south traffic (between clients and servers), simply cannot cope with the demands of AI. AI workloads generate immense “east-west” traffic—communication between servers and accelerators within the data center.

This necessitates a networking fabric characterized by:

- Ultra-High Bandwidth: To move massive datasets rapidly between different components.

- Ultra-Low Latency: To ensure that data arrives precisely when needed, preventing expensive accelerators from becoming idle. Even microsecond delays can accumulate into significant performance bottlenecks.

- Massive Scalability: To connect thousands of GPUs and accelerators into a unified cluster.
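The claim that microsecond delays accumulate can be made concrete with the standard alpha-beta cost model for a ring all-reduce, one common way GPUs exchange gradients during training. The per-hop latency, link bandwidth, and message size below are hypothetical round numbers.

```python
def ring_allreduce_time_s(num_gpus: int, message_bytes: float,
                          latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Alpha-beta estimate for a ring all-reduce across num_gpus.

    The ring runs 2(N-1) sequential steps; each step pays the per-message
    latency (alpha) and moves message_bytes / N over each link (beta).
    """
    n = num_gpus
    if n < 2:
        return 0.0
    latency_term = 2 * (n - 1) * latency_s
    bandwidth_term = 2 * (n - 1) / n * message_bytes / bandwidth_bytes_per_s
    return latency_term + bandwidth_term


ALPHA_S = 5e-6           # hypothetical per-hop latency: 5 microseconds
LINK_BYTES_PER_S = 50e9  # hypothetical link bandwidth: 400 Gb/s = 50 GB/s
MESSAGE_BYTES = 1e6      # hypothetical 1 MB gradient bucket

for gpus in (8, 64, 1024):
    t = ring_allreduce_time_s(gpus, MESSAGE_BYTES, ALPHA_S, LINK_BYTES_PER_S)
    latency_share = 2 * (gpus - 1) * ALPHA_S / t
    print(f"{gpus:>5} GPUs: {t * 1e6:9.1f} us total, {latency_share:.0%} from latency")
```

The bandwidth term barely changes with cluster size, but the latency term grows with every GPU added to the ring, so at thousands of GPUs a few microseconds per hop dominate. Real collective libraries mitigate this with tree and hierarchical algorithms.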

The “network war” between technologies like Ultra Ethernet and InfiniBand highlights the intense focus on developing optimal connectivity solutions for AI. These technologies are designed to create direct, high-speed communication pathways, effectively eliminating the “crisis of distance” that plagues traditional networking approaches for AI workloads.

Coordinated Intelligence Fabric

The network in an AI Factory moves beyond mere connectivity; it becomes a coordinated intelligence fabric. It’s not just about pipes and cables; it’s about intelligent routing, congestion management, and quality of service (QoS) tailored for AI workloads. This fabric is crucial for tightly integrated systems where compute, networking, and security must move in lockstep. It ensures that training data can be rapidly distributed to thousands of GPUs, intermediate results aggregated with minimal delay, and model parameters synchronized across the entire cluster. Without such a fabric, even the most powerful accelerators will operate in isolation, failing to unlock their collective potential.
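Parameter synchronization of this kind is commonly done with collective operations such as all-reduce. The pure-Python simulation below walks through ring all-reduce (a reduce-scatter followed by an all-gather) over in-memory lists. The algorithm is an assumption chosen for illustration, since the article does not name one; on real fabrics this runs inside communication libraries such as NCCL over NVLink, InfiniBand, or Ethernet.

```python
from typing import List


def ring_allreduce(buffers: List[List[float]]) -> List[List[float]]:
    """Simulate ring all-reduce (sum) across len(buffers) workers.

    Reduce-scatter: in each of N-1 steps, every worker passes one chunk to its
    right-hand neighbour, which adds it to its own copy. Afterwards worker i
    holds the fully summed chunk (i + 1) % N.
    All-gather: in N-1 more steps the finished chunks travel around the ring,
    overwriting stale copies, until every worker holds the full result.
    """
    n = len(buffers)
    length = len(buffers[0])
    if any(len(b) != length for b in buffers):
        raise ValueError("all workers must hold buffers of the same length")
    bounds = [(c * length // n, (c + 1) * length // n) for c in range(n)]
    data = [list(b) for b in buffers]  # each worker's local copy

    def step(chunk_for_worker, combine):
        # Snapshot every outgoing chunk first so all sends happen "simultaneously".
        sends = []
        for i in range(n):
            lo, hi = bounds[chunk_for_worker(i)]
            sends.append(((i + 1) % n, lo, data[i][lo:hi]))
        for dst, lo, payload in sends:
            for k, value in enumerate(payload):
                data[dst][lo + k] = combine(data[dst][lo + k], value)

    for s in range(n - 1):  # reduce-scatter: accumulate partial sums
        step(lambda i: (i - s) % n, lambda mine, incoming: mine + incoming)
    for s in range(n - 1):  # all-gather: overwrite with the finished chunk
        step(lambda i: (i + 1 - s) % n, lambda mine, incoming: incoming)
    return data


workers = [[1, 2, 3, 4, 5, 6], [10, 20, 30, 40, 50, 60], [100, 200, 300, 400, 500, 600]]
print(ring_allreduce(workers))
```

Each link carries only 1/N of the buffer per step, so no single connection becomes a hotspot; the trade-off is 2(N-1) sequential steps, which is why per-hop latency on the fabric matters so much.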

The Intelligence Pipeline: Layers 5-7 for AI Production

Beyond the physical core, the AI Factory Blueprint extends to the “Intelligence Pipeline.” These upper layers (Layers 5-7) transform raw computational power into actionable intelligence, governing how data is managed, how complex systems are orchestrated, and ultimately, how AI models deliver tangible value.

Data & Storage Architecture (Layer 5): Fueling Continuous Model Refinement

Layer 5, the Data & Storage Architecture, provides the high-throughput fuel for continuous model refinement, ensuring that the AI Factory always has access to the massive datasets it needs to train, validate, and improve its intelligence.

Massive Datasets and Parallel Access

AI models, especially large language models and other deep learning architectures, are incredibly data-hungry. They thrive on vast quantities of high-quality data. Traditional storage architectures, often optimized for transactional databases and sequential file access, are simply inadequate for the demands of the AI Factory.

The key requirements for AI storage are:

- Scalability: The ability to store petabytes, and even exabytes, of data.

- Parallel Access Pipelines: Unlike traditional systems that might access one file at a time, AI training often requires thousands of compute nodes to simultaneously access different parts of the same dataset, or entirely different datasets, with extremely high bandwidth. This necessitates storage systems designed for massive parallel I/O.

- High Throughput: The ability to deliver data to the compute accelerators at speeds that can keep pace with their insatiable demand, preventing I/O bottlenecks that would leave expensive GPUs idle.

- Low Latency: While throughput is critical for large data transfers, low latency is equally important for metadata operations and quick access to smaller, frequently used data segments.
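The throughput requirement above can be turned into a first sizing estimate: aggregate read demand is GPUs times samples per second per GPU times bytes per sample. The GPU count, per-GPU ingest rate, sample size, and per-node storage bandwidth below are hypothetical, and the sketch ignores checkpoint writes, metadata load, and caching.

```python
import math


def required_read_gb_per_s(num_gpus: int, samples_per_s_per_gpu: float,
                           bytes_per_sample: float) -> float:
    """Aggregate read bandwidth the storage tier must sustain, in GB/s."""
    return num_gpus * samples_per_s_per_gpu * bytes_per_sample / 1e9


def storage_nodes_needed(required_gb_per_s: float, gb_per_s_per_node: float,
                         headroom: float = 1.0) -> int:
    """Nodes needed to meet demand, optionally padded by a headroom factor (e.g. 1.3)."""
    return math.ceil(required_gb_per_s * headroom / gb_per_s_per_node)


demand = required_read_gb_per_s(num_gpus=1024, samples_per_s_per_gpu=2000,
                                bytes_per_sample=150_000)
print(f"Sustained read demand: {demand:.1f} GB/s")
print(f"Storage nodes at 20 GB/s each: {storage_nodes_needed(demand, 20)}")
print(f"With 30% headroom: {storage_nodes_needed(demand, 20, headroom=1.3)}")
```

If the storage tier falls short of this figure, the shortfall shows up directly as idle accelerator time.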

This layer often involves distributed file systems, object storage, and specialized data lakes optimized for AI workloads. Technologies like NVMe-oF (NVMe over Fabrics) are becoming crucial to minimize latency between compute and storage. The storage architecture isn’t just a repository; it’s an active component of the intelligence production line, continually feeding the computational engines.

Data Governance and Lifecycle Management for AI

Beyond raw technical specifications, an effective Data & Storage Architecture for an AI Factory also encompasses robust data governance, versioning, and lifecycle management. Given the iterative nature of AI model training, the ability to track different versions of datasets, manage data provenance, and ensure data quality is paramount. Data must be readily available, cleaned, and organized in formats conducive to efficient model training. This includes strategies for data ingestion, transformation, curation, and archiving, ensuring that the “raw materials” for intelligence manufacturing are always of the highest standard.
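One common way to implement dataset versioning and provenance is content addressing: hash every file, then hash the sorted manifest to obtain a version ID that changes whenever any file is added, removed, or modified. The sketch below uses in-memory bytes in place of real files; the function names are hypothetical and do not represent the API of any particular data-versioning tool.

```python
import hashlib
import json
from typing import Dict, List


def build_manifest(files: Dict[str, bytes]) -> Dict[str, str]:
    """Map each file path to the SHA-256 digest of its contents."""
    return {path: hashlib.sha256(blob).hexdigest() for path, blob in sorted(files.items())}


def dataset_version(manifest: Dict[str, str]) -> str:
    """Derive a short version ID; changing, adding, or removing any file changes it."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:16]


def diff_manifests(old: Dict[str, str], new: Dict[str, str]) -> Dict[str, List[str]]:
    """Report which files were added, removed, or changed between two versions."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(p for p in old.keys() & new.keys() if old[p] != new[p]),
    }


v1_files = {"train/part-0.jsonl": b'{"text": "hello"}\n',
            "train/part-1.jsonl": b'{"text": "world"}\n'}
v2_files = {**v1_files,
            "train/part-1.jsonl": b'{"text": "world!"}\n',
            "train/part-2.jsonl": b'{"text": "new"}\n'}

v1, v2 = build_manifest(v1_files), build_manifest(v2_files)
print(dataset_version(v1), dataset_version(v2))
print(diff_manifests(v1, v2))
```

Recording the version ID alongside each trained model ties every model back to the exact data it was trained on.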

Orchestration & Platform Layer (Layer 6): Managing the Complex AI Production Line

Layer 6, the Orchestration & Platform Layer, is where the immense complexity of an AI Factory is transformed into manageable production systems. This layer provides automated scheduling and resource management, turning disparate clusters of accelerators, storage, and networking into a cohesive, intelligent manufacturing operation.

Automated Scheduling and Resource Management

An AI Factory is a dynamic, highly utilized environment. Workloads for training, inference, and fine-tuning are constantly competing for resources. Manual management of such a complex system is impossible. This layer introduces:

- Automated Scheduling: Intelligently allocates compute, networking, and storage resources to specific AI jobs based on priorities, resource availability, and completion deadlines.

- Resource Management: Monitors the health and utilization of all components, dynamically scaling resources up or down as needed, and reconfiguring the factory layout to optimize for different workloads. This ensures maximum utilization of expensive AI hardware and prevents bottlenecks.

- Workload Orchestration: Manages the entire lifecycle of AI jobs, from data preparation to model deployment, ensuring that all dependencies are met and workflows execute smoothly.
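A minimal sketch of the scheduling idea, assuming a single pool of interchangeable GPUs: jobs are admitted greedily by priority, with earlier deadlines breaking ties, and a job starts only when its whole GPU request fits. The job names and sizes are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Job:
    name: str
    priority: int       # higher number = more important
    deadline_h: float   # hours until the result is needed
    gpus: int           # GPUs the job needs before it can start


def schedule(jobs: List[Job], free_gpus: int) -> Tuple[List[str], List[str], int]:
    """Greedy admission: highest priority first, earliest deadline breaks ties.

    A job starts only if its whole GPU request fits, since a distributed training
    job generally cannot begin on a partial allocation. Returns the admitted job
    names, the queued job names, and the GPUs left idle.
    """
    admitted, queued = [], []
    for job in sorted(jobs, key=lambda j: (-j.priority, j.deadline_h)):
        if job.gpus <= free_gpus:
            admitted.append(job.name)
            free_gpus -= job.gpus
        else:
            queued.append(job.name)
    return admitted, queued, free_gpus


jobs = [
    Job("llm-pretrain", priority=3, deadline_h=72, gpus=512),
    Job("vision-train", priority=2, deadline_h=24, gpus=256),
    Job("fraud-finetune", priority=2, deadline_h=6, gpus=64),
    Job("nightly-eval", priority=1, deadline_h=12, gpus=32),
]
admitted, queued, idle = schedule(jobs, free_gpus=640)
print("running:", admitted)
print("queued: ", queued)
print("idle GPUs:", idle)
```

Letting a small, low-priority job run in capacity that a larger job cannot use is a crude form of backfilling. Production schedulers such as Slurm add reservations so large jobs are not starved, along with preemption and fair-share policies.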

This layer is often powered by sophisticated platform software, container orchestration systems (like Kubernetes with specialized AI extensions), and machine learning operations (MLOps) platforms. These tools provide the necessary abstraction and automation to allow data scientists and engineers to focus on AI development rather than infrastructure plumbing.
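Kubernetes exposes GPUs to pods as an extended resource; with NVIDIA's device plugin (or GPU Operator) installed, the resource name is `nvidia.com/gpu`. The sketch below builds a minimal Pod manifest in Python and prints it as JSON, a format `kubectl apply -f` accepts. The pod name, container image, and command are hypothetical placeholders.

```python
import json
from typing import Any, Dict, List


def gpu_training_pod(name: str, image: str, gpus: int, command: List[str]) -> Dict[str, Any]:
    """Minimal Pod manifest that asks the scheduler for whole GPUs.

    Extended resources such as nvidia.com/gpu are requested under `limits`;
    the pod is only placed on a node with that many unallocated GPUs.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"workload": "training"}},
        "spec": {
            "restartPolicy": "Never",
            "containers": [{
                "name": "trainer",
                "image": image,
                "command": command,
                "resources": {"limits": {"nvidia.com/gpu": gpus}},
            }],
        },
    }


manifest = gpu_training_pod(
    name="llm-finetune-001",                         # hypothetical
    image="registry.example.com/ai/trainer:latest",  # hypothetical
    gpus=8,
    command=["python", "train.py"],                  # hypothetical entry point
)
print(json.dumps(manifest, indent=2))
```

Saved to a file, the output could be submitted with `kubectl apply -f pod.json`. Multi-node training usually needs a higher-level job controller on top of bare Pods so that all workers start together.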

Transforming Clusters into Manageable Production Systems

Without an effective Orchestration & Platform Layer, the AI Factory would be a collection of powerful but uncoordinated machines. This layer binds them together, enabling the creation of “modular, repeatable, and secure environments designed to turn data into intelligence”. It ensures that the various components—compute engines, the network as a conveyor system, and security as guardrails—work together seamlessly to create consistent outcomes.

This layer is also critical for coping with the short lifecycle of AI hardware, which can be under five years and implies rapid depreciation. Efficient orchestration allows new hardware to be integrated, workloads to be migrated, and upgrades to be phased in without disrupting ongoing intelligence production.

AI Model & Intelligence Layer (Layer 7): Deploying Actionable Insights

Layer 7, the AI Model & Intelligence Layer, represents the ultimate output of the AI Factory. This is where trained models are deployed, transforming raw data and computational effort into actionable intelligence that drives enterprise analytics and automation.

From Raw Data to Actionable Insights

This layer is the culmination of all the underlying infrastructure. It houses the trained AI models—the “finished products” of the factory. These models are then integrated into various enterprise systems, applications, and decision-making processes. This includes:

- AI Model Deployment: Putting trained models into production, making them accessible via APIs or integrating them directly into applications.

- Inference at Scale: Running these models to generate predictions, classifications, recommendations, or content in real-time or batch processes. This demands significant computational resources for efficient, low-latency inference.

- Continuous Learning and Improvement: Monitoring the performance of deployed models, gathering new data, and feeding it back into the training pipeline (Layers 5-6) for continuous refinement and adaptation. This feedback loop is essential for maintaining the relevance and accuracy of AI intelligence.

- Integration with Enterprise Systems: Ensuring that the intelligence generated by the AI models can be seamlessly consumed by existing enterprise analytics platforms, business intelligence tools, and automation systems.
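For inference at scale, Little's law (average requests in flight = arrival rate × average latency) gives a quick first estimate of serving capacity. The traffic, latency, per-replica concurrency, and 70% utilization target below are hypothetical.

```python
import math


def in_flight_requests(arrival_rate_per_s: float, mean_latency_s: float) -> float:
    """Little's law: L = lambda * W (steady-state averages)."""
    return arrival_rate_per_s * mean_latency_s


def replicas_needed(arrival_rate_per_s: float, mean_latency_s: float,
                    max_concurrency_per_replica: int,
                    target_utilization: float = 0.7) -> int:
    """Model-server replicas needed so average load stays under the utilization target."""
    concurrency = in_flight_requests(arrival_rate_per_s, mean_latency_s)
    usable_slots = max_concurrency_per_replica * target_utilization
    return math.ceil(concurrency / usable_slots)


RATE = 2000        # hypothetical: 2,000 requests per second
LATENCY_S = 0.25   # hypothetical: 250 ms average end-to-end latency
PER_REPLICA = 32   # hypothetical: concurrent requests one replica can batch

print(f"Requests in flight: {in_flight_requests(RATE, LATENCY_S):.0f}")
print(f"Replicas needed:    {replicas_needed(RATE, LATENCY_S, PER_REPLICA)}")
```

Little's law describes averages only; traffic bursts and tail-latency targets call for extra margin, which the utilization target loosely stands in for.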

The output from this layer is not just data; it’s “digital intelligence”—the most valuable commodity of the 21st century. This intelligence powers a wide array of applications, from predictive maintenance in manufacturing to personalized customer experiences, supply chain optimization, and automated decision-making.

The Strategic Imperative of Intelligence Production

The AI Model & Intelligence Layer highlights the fundamental shift in organizational strategy. Companies are no longer just using AI; they are manufacturing intelligence. This demands a product-centric view of AI, where models are treated as critical intellectual property that delivers measurable business value. The ability to rapidly train, deploy, and refine AI models is a core competitive differentiator.

This layer directly supports the vision of companies “operating giant AI factories” that “manufacture intelligence,” as articulated by Jensen Huang. It moves AI from an experimental research endeavor to a commercial imperative, where the ability to generate intelligence at scale defines progress and reshapes the competitive landscape for enterprises and governments alike.

The Strategic Imperative: Why Leaders Must Act Now

The transition from traditional data centers to AI Factories is not merely a technical upgrade; it’s a strategic imperative that C-suite executives and engineering leaders can no longer afford to ignore. Those who cling to outdated infrastructure risk falling irrevocably behind in an era where intelligence production dictates market leadership and societal progress.

The Accelerating Pace of AI Innovation

The pace of AI innovation is unprecedented. The development of generative and agentic models has shifted the definition of progress. Training models with trillions of parameters is no longer an academic exercise but a commercial necessity. This acceleration has profound consequences for infrastructure. The traditional benchmarks of computing are no longer sufficient to capture what is at stake. The very definition of computing has changed: “The data center has stopped being a warehouse of servers. It has become the computer”.

The rapid depreciation cycle of AI hardware, often less than five years, further emphasizes the need for flexible, purpose-built infrastructure that can adapt to continuous advancements. Investment in AI Factory infrastructure ensures that organizations can leverage the latest innovations without being constrained by legacy systems.

Strategic Risks: Stranded Assets and Missed Opportunities

Organizations attempting to run modern AI workloads on archaic infrastructure face significant strategic risks:

- Stranded Assets: Investing further in traditional data center infrastructure for AI purposes can quickly lead to stranded assets. These facilities will prove incapable of supporting the power, cooling, and networking demands of cutting-edge AI hardware, rendering them obsolete for the most valuable workloads.

- Underutilization and Inefficiency: Even if AI hardware can be partially deployed, it will operate at a fraction of its potential due to bottlenecks in power, cooling, or networking. This represents an enormous waste of capital and compute resources.

- Delayed Innovation and Competitive Disadvantage: The inability to efficiently train, deploy, and scale AI models will directly impact an organization’s ability to innovate, develop new products and services, and compete effectively in an AI-driven economy. Competitors building true AI Factories will achieve faster iteration cycles, superior model performance, and ultimately, greater market share.

- Operational Instability: Overloading traditional data centers with AI demands can lead to system instability, thermal shutdowns, and reduced hardware lifespan, resulting in costly downtime and maintenance.
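The underutilization risk compounds with the short hardware lifecycle: depreciation accrues every hour whether or not a GPU is busy, so low utilization inflates the cost of every productive GPU-hour. The sketch below assumes straight-line depreciation, a hypothetical $40,000 all-in cost per GPU slot, and a four-year useful life.

```python
HOURS_PER_YEAR = 8760


def cost_per_useful_gpu_hour(capex_per_gpu: float, useful_life_years: float,
                             utilization: float, opex_per_gpu_hour: float = 0.0) -> float:
    """Straight-line depreciation plus hourly opex, spread over the hours actually used.

    Costs accrue every hour whether or not the GPU is busy, so dividing by
    utilization gives the effective price of each productive GPU-hour.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    depreciation_per_hour = capex_per_gpu / (useful_life_years * HOURS_PER_YEAR)
    return (depreciation_per_hour + opex_per_gpu_hour) / utilization


CAPEX_PER_GPU = 40_000  # hypothetical all-in cost per GPU slot, USD
LIFE_YEARS = 4          # hypothetical, within a sub-five-year hardware cycle

for util in (0.9, 0.6, 0.4):
    cost = cost_per_useful_gpu_hour(CAPEX_PER_GPU, LIFE_YEARS, util)
    print(f"utilization {util:.0%}: ${cost:.2f} per productive GPU-hour")
```

Dropping from 90% to 40% utilization multiplies the effective cost by 2.25, the same factor whatever capital cost is assumed.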

These risks are not theoretical; they are tangible threats to organizations that fail to evolve their infrastructure strategy. The “AI-first” world demands “AI-first” infrastructure.

The Investment Race: Building the Future of Intelligence

The race to build AI Factories is already well underway. NVIDIA alone had deployed over 100 AI factories worldwide as of January 2025, a number that continues to grow monthly. Every major hyperscaler, sovereign government, and forward-thinking enterprise is either racing to build new facilities or undertaking massive retrofits to support this new class of computing.

This global surge in investment underscores the critical importance of AI infrastructure. Hyperscaler CAPEX, projected to exceed $600 billion in 2026 with a 36% year-over-year increase, directly reflects this accelerated investment in AI capability. Nations like Indonesia are even pursuing “Sovereign AI” ambitions, recognizing that national AI capabilities are intrinsically linked to owning and operating AI Factory infrastructure.

The insights and applications derived from these factories are becoming the new industrial engines of innovation. Organizations that prioritize this shift are positioning themselves not just to participate in the AI era but to lead it.

The Verdict: Infrastructure Is the Product

The core message is clear: infrastructure is no longer merely an IT overhead; it is a strategic product. For modern AI, the factory itself becomes the computer. The efficiency, scalability, and performance of an organization’s AI Factory directly correlate with its ability to produce intelligence, innovate, and achieve its strategic objectives.

The analogy of manufacturing is not accidental. Manufacturing transformed the world by building factories for physical production. The next transformation will be driven by organizations building factories for intelligence. This requires a shift in mindset from running general workloads to operating agile, intelligent production pipelines that consume vast amounts of data, generate unpredictable demands on infrastructure, and require seamless coordination across compute, networking, and security.

The AI Factory Blueprint provides a clear roadmap for this transition. It guides C-suite and engineering leaders to understand the fundamental changes required at every layer, from energy-aware computing at the physical core to the intelligent deployment of AI models at the pipeline’s end. Embracing this framework is not about optional optimization; it’s about essential re-architecture for survival and leadership in the intelligence age.

The complexities of designing, building, and optimizing an AI Factory are immense, requiring specialized expertise in high-density power, advanced cooling, high-speed networking, distributed storage, and sophisticated orchestration platforms. These are not trivial undertakings, and the cost of getting it wrong can be catastrophic, leading to massive capital expenditure without the desired intelligence output.

Are you prepared to navigate this strategic transformation? Does your organization have a clear roadmap for transitioning from traditional data centers to industrial-scale AI production facilities? The future of your industrial intelligence hinges on these critical infrastructure decisions.

At IoT Worlds, we specialize in guiding enterprises through this complex landscape. Our experts understand the intricacies of the 7-Layer AI Factory Architecture Framework, from implementing energy-aware computing solutions to architecting high-speed networking fabrics and deploying scalable orchestration platforms. We help C-suite and engineering leaders avoid the strategic blind spots, build future-proof infrastructure, and unlock the full potential of AI for their operations, automation, and decision systems.

Don’t let outdated infrastructure hinder your journey into the intelligence era. Partner with IoT Worlds to design and implement your cutting-edge AI Factory Blueprint.

Contact us today to discuss how we can help you build your industrial intelligence powerhouse. Send an email to info@iotworlds.com and let’s start manufacturing your future.