In the rapidly evolving landscape of artificial intelligence, the conversation is shifting. While accuracy and performance once dominated discussions, a new, more profound question is taking center stage: “Can we explain why the system made this decision?” This question is fundamental to the successful integration of AI into enterprise decision workflows, where outcomes can have significant financial, operational, and human impact. The answer lies in the robust implementation of Explainable AI (XAI) architecture.

This guide explains why XAI matters, covers its core principles, and shows how a well-designed XAI architecture fosters trust, ensures transparency, and supports responsible AI adoption across industries.

The Paradigm Shift: From Black Box to Trustworthy AI

For decades, the pursuit of higher accuracy in machine learning models often came at the cost of interpretability. Complex algorithms, like deep neural networks, excelled at pattern recognition and prediction but operated as “black boxes,” making it nearly impossible for humans to understand their internal reasoning. While acceptable for certain applications, this opacity becomes a significant liability when AI systems influence critical decisions in areas such as:

- Supply chain planning: Optimizing logistics, predicting demand fluctuations, and managing inventory.

- Financial forecasting: Assessing market trends, predicting stock movements, and managing investment portfolios.

- Risk and compliance monitoring: Detecting fraud, identifying anomalies in financial transactions, and ensuring regulatory adherence.

- Product quality and warranty predictions: Anticipating potential product failures and improving manufacturing processes.

- Workforce planning and hiring recommendations: Optimizing human capital allocation and assisting in talent acquisition.

In these contexts, where decisions can affect millions of dollars, operational stability, or people’s careers, a mere prediction is insufficient. Leaders require confidence that the AI system is not only accurate but also using the right inputs, following a transparent reasoning path, and producing outcomes that can be validated, challenged, and improved. The absence of this transparency erodes trust, hinders adoption, and exposes organizations to significant risks. This is precisely why the focus has shifted from simply powerful AI to trustworthy AI.

The Core Questions of Explainable AI

At its essence, Explainable AI is designed to address three fundamental questions:

- Why did the model make this prediction? This goes beyond simply stating the outcome; it involves uncovering the underlying logic and factors that led to a specific decision.

- Which inputs influenced the outcome the most? Understanding feature importance helps identify the key drivers behind a prediction, enabling validation and potentially revealing biases or unexpected correlations.

- What evidence supports the result? This refers to providing tangible data points or contextual information that substantiates the AI’s conclusion, moving beyond abstract correlations to concrete justification.

By answering these questions, XAI transforms AI from an inscrutable oracle into a collaborative assistant, fostering greater understanding, accountability, and confidence among users and stakeholders.

Understanding the Explainable AI Architecture

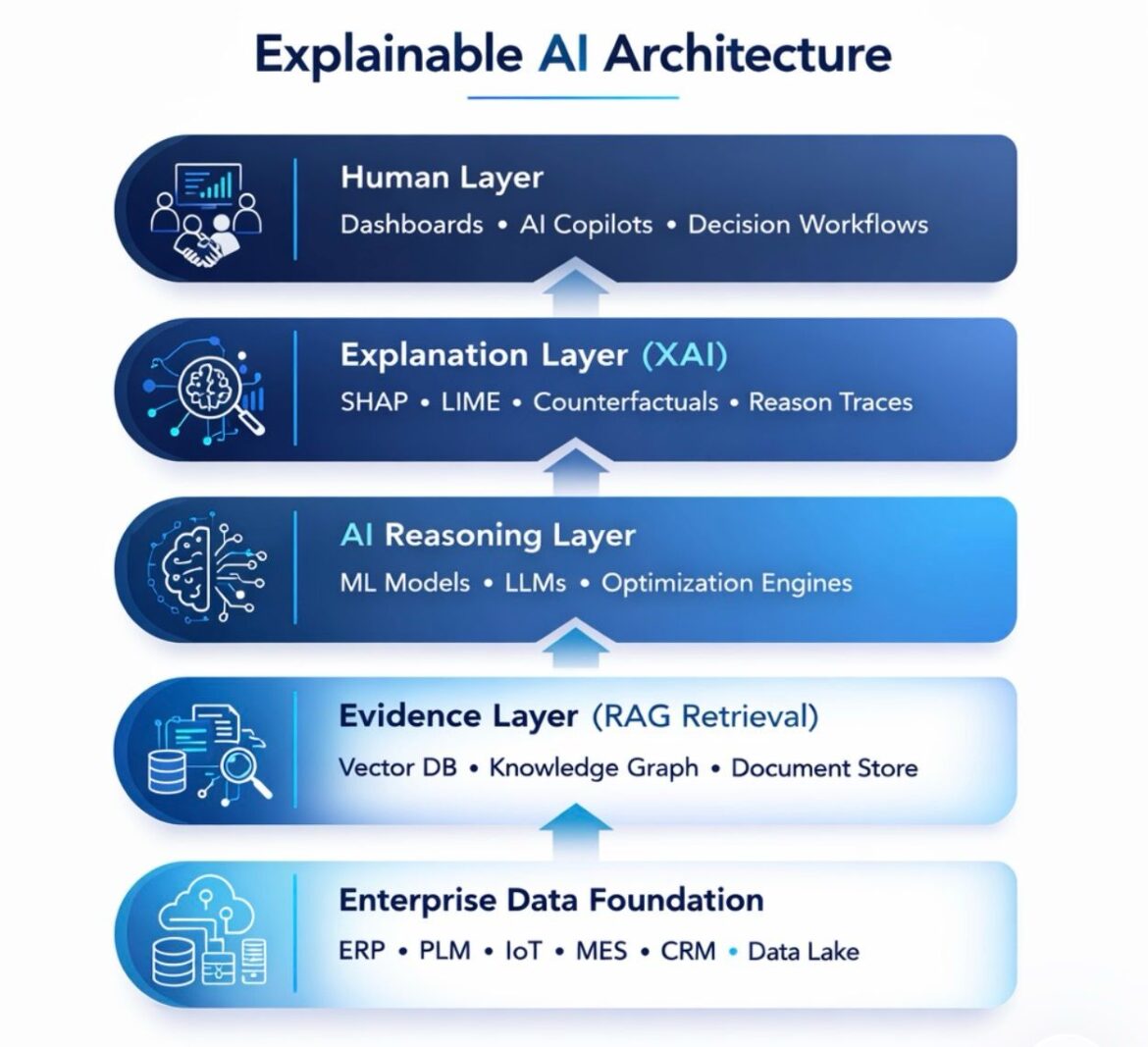

A practical way to conceptualize the integration of XAI into enterprise systems is through a layered architecture. This framework illustrates how various components interact to deliver transparent and auditable AI decisions. The visual representation highlights five distinct, yet interconnected, layers:

- Human Layer

- Explanation Layer (XAI)

- AI Reasoning Layer

- Evidence Layer (RAG Retrieval)

- Enterprise Data Foundation

Each layer plays a crucial role in enabling a truly explainable and trustworthy AI system, working in conjunction to address the core questions of XAI.

The Enterprise Data Foundation: The Bedrock of AI Systems

At the very bottom of the Explainable AI architecture lies the Enterprise Data Foundation. This foundational layer is the comprehensive repository and management system for all organizational data. Its robustness and integrity are paramount, as the quality and accessibility of data directly impact the performance and explainability of any AI system built upon it.

This layer typically encompasses a wide array of enterprise systems and data storage solutions, including:

- ERP (Enterprise Resource Planning): Systems managing core business processes like finance, human resources, manufacturing, and supply chain. Data from ERP systems can include sales figures, inventory levels, production schedules, and employee records.

- PLM (Product Lifecycle Management): Systems managing the entire lifecycle of a product from conception, design, and manufacturing to service and disposal. This data can be crucial for product quality predictions and warranty analysis.

- IoT (Internet of Things): Data streams from connected devices, sensors, and operational technology (OT) in industrial environments. IoT data often provides real-time insights into machine performance, environmental conditions, and asset utilization, critical for predictive maintenance and operational optimization.

- MES (Manufacturing Execution Systems): Systems that monitor and control work-in-process on the factory floor. MES data offers granular insights into production efficiency, material usage, and quality control, which feed into supply chain and product quality models.

- CRM (Customer Relationship Management): Systems managing customer interactions and data throughout the customer lifecycle. CRM data is vital for understanding customer behavior, predicting churn, and personalizing experiences.

- Data Lake: A vast repository that stores raw data in its native format, often used for big data analytics and machine learning. This provides a flexible and scalable storage solution for diverse data types, acting as a staging area for data before it is processed and structured for specific AI applications.

The Enterprise Data Foundation is not merely a collection of data sources; it’s a strategically designed ecosystem that ensures data quality, consistency, and accessibility. Without a well-governed and integrated data foundation, the subsequent layers of the XAI architecture would lack the necessary fuel to operate effectively and reliably. This layer is crucial because the explanations generated by XAI are only as good as the data they are derived from.

The Importance of Data Governance and Quality

The sheer volume and diversity of data within the Enterprise Data Foundation necessitate robust data governance practices. This includes:

- Data Lineage: Tracking the origin and transformations of data as it moves through various systems.

- Data Security: Implementing measures to protect sensitive information from unauthorized access or breaches.

- Data Quality Management: Ensuring accuracy, completeness, consistency, and timeliness of data. Poor data quality can lead to biased models and misleading explanations.

- Data Integration: Harmonizing data from disparate sources into a unified and usable format.

Consider the example of a fraud detection model. If the underlying transaction data in the Enterprise Data Foundation is incomplete or contains errors, the model’s predictions could be flawed, and the explanations generated by the XAI layer would be unreliable. Therefore, investing in a strong data foundation is not just a prerequisite for AI, but a cornerstone of trustworthy AI.
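The fraud detection example can be made concrete with a small pre-scoring quality gate. This is only a sketch: the field names in `REQUIRED_FIELDS`, the `QualityReport` type, and the sample rows are assumptions made for illustration, not part of any particular platform.

```python
from dataclasses import dataclass

# Hypothetical schema: these field names are assumptions for illustration.
REQUIRED_FIELDS = ("transaction_id", "amount", "timestamp")


@dataclass
class QualityReport:
    total: int
    incomplete: int
    invalid_amount: int

    @property
    def usable(self) -> bool:
        """True only when every record passed both checks."""
        return self.incomplete == 0 and self.invalid_amount == 0


def check_transactions(rows: list[dict]) -> QualityReport:
    """Count records that are incomplete or carry an implausible amount."""
    incomplete = 0
    invalid_amount = 0
    for row in rows:
        # Completeness: every required field must be present and non-empty.
        if any(row.get(name) in (None, "") for name in REQUIRED_FIELDS):
            incomplete += 1
            continue
        # Validity: amounts must be numeric and non-negative.
        amount = row["amount"]
        if not isinstance(amount, (int, float)) or amount < 0:
            invalid_amount += 1
    return QualityReport(len(rows), incomplete, invalid_amount)


# Made-up sample records.
sample = [
    {"transaction_id": "t1", "amount": 120.0, "timestamp": "2026-03-14T10:30:15Z"},
    {"transaction_id": "t2", "amount": None, "timestamp": "2026-03-14T10:31:02Z"},
    {"transaction_id": "t3", "amount": -5, "timestamp": "2026-03-14T10:32:40Z"},
]
report = check_transactions(sample)
print(report, "usable:", report.usable)
```

A gate like this would typically run before both training and inference, so that an explanation is never generated from records already known to be defective.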

The Evidence Layer: Grounding AI in Retrieved Context

Building upon the Enterprise Data Foundation, the Evidence Layer (RAG Retrieval) provides the context and verifiable information needed to support AI decisions. RAG, or Retrieval-Augmented Generation, pairs a generative model with a retrieval step that pulls factual information from a knowledge base at query time. This layer enhances explainability by grounding the AI’s reasoning in concrete evidence rather than relying solely on patterns learned from training data.

The Evidence Layer typically leverages several technologies to store, organize, and retrieve relevant information:

- Vector DB (Vector Database): These databases store data as numerical vectors, allowing for efficient similarity searches. When a query is initiated, the system can quickly find semantically similar documents or data points, providing highly relevant context for the AI model. For instance, if an AI model is making a recommendation, a vector database can retrieve similar past successful recommendations and their underlying rationale.

- Knowledge Graph: A structured representation of knowledge that connects entities, concepts, and events through relationships. Knowledge graphs enable the AI system to understand complex relationships between different pieces of information, providing a richer context for decision-making. For example, a knowledge graph could link a specific product defect to its manufacturing facility, supplier, and related quality control reports.

- Document Store: A database optimized for storing and retrieving semi-structured data, such as documents, articles, PDFs, and web pages. This allows the AI to access and cite specific textual evidence to support its claims. When a loan application is rejected, for instance, the document store could provide excerpts from credit policy documents or relevant financial regulations as supporting evidence.

The primary function of the Evidence Layer is to act as an intelligent information retrieval system that feeds specific, verifiable data points to the AI Reasoning Layer and subsequently to the Explanation Layer. This mitigates the “hallucination” problem often associated with generative AI models and provides concrete references for human users.
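The retrieval step can be sketched in a few lines. The example below ranks documents by cosine similarity and returns their identifiers so they can be cited. The three-dimensional embeddings, document ids, and texts are invented for illustration; a real system would compute embeddings with a model and store them in a vector database.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str                    # citable identifier
    text: str
    embedding: tuple[float, ...]   # toy vector; normally produced by a model


def cosine_similarity(a, b) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_embedding, store, top_k: int = 2):
    """Return the top_k (score, document) pairs, most similar first."""
    scored = [(cosine_similarity(query_embedding, d.embedding), d) for d in store]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Hypothetical document store with made-up content.
STORE = [
    Document("credit-policy-4.2", "Debt-to-income limits for unsecured loans.", (0.9, 0.1, 0.0)),
    Document("warranty-guide-1.1", "Warranty claim handling procedure.", (0.0, 0.2, 0.9)),
    Document("credit-policy-2.1", "Minimum credit history requirements.", (0.7, 0.6, 0.1)),
]

query = (1.0, 0.2, 0.0)  # stand-in embedding for a loan-rejection question
results = retrieve(query, STORE)
cited_ids = [doc.doc_id for _, doc in results]
print(cited_ids)
```

Returning identifiers alongside scores is the design choice that matters here: the downstream layers can attach `cited_ids` to the decision instead of an unattributed summary.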

How RAG Enhances Explainability

The integration of RAG in the Evidence Layer is a game-changer for XAI. It directly addresses the question, “What evidence supports the result?” Instead of a black-box model simply outputting a prediction, the RAG mechanism allows the system to:

- Cite Sources: Provide direct links or excerpts from documents, reports, or policies that justify a particular decision.

- Offer Contextual Understanding: Introduce relevant background information that might not have been explicitly part of the initial model input but is crucial for human comprehension.

- Reduce Ambiguity: Presenting concrete evidence lowers the likelihood that users misinterpret the AI’s rationale.

For an AI system recommending a specific medical treatment, the Evidence Layer could retrieve relevant clinical trial data, patient medical history, and medical guidelines to support the recommendation. This capability is pivotal for building trust, especially in high-stakes domains where verifiable evidence is paramount.

The AI Reasoning Layer: The Engine of Decision-Making

Positioned above the Evidence Layer, the AI Reasoning Layer is where the core computational intelligence resides. This layer is responsible for processing data from the Enterprise Data Foundation, leveraging context from the Evidence Layer, and generating predictions, classifications, or optimizations. It embodies the technical prowess of the AI system, employing various models and engines to arrive at its conclusions.

Key components of the AI Reasoning Layer include:

- ML Models (Machine Learning Models): This encompasses a vast array of algorithms, from traditional statistical models (e.g., Logistic Regression, Support Vector Machines) to more complex architectures (e.g., Random Forests, Gradient Boosting Machines). These models are trained on historical data to identify patterns and make predictions. For example, an ML model might predict customer churn based on past behavior or identify fraudulent transactions.

- LLMs (Large Language Models): Generative AI models like GPT, used for natural language understanding, generation, summarization, and complex reasoning tasks. LLMs are increasingly being integrated into enterprise AI for tasks like generating summaries of reports, answering complex queries, and even assisting with code generation. In the context of decision-making, an LLM might analyze unstructured text data (e.g., customer feedback, legal documents) to inform a decision.

- Optimization Engines: Algorithms designed to find the best possible solution from a set of alternatives, often under various constraints. These are critical for tasks like supply chain optimization, resource allocation, and scheduling. For example, an optimization engine might determine the most efficient delivery routes or the optimal allocation of manufacturing resources.

The AI Reasoning Layer is where the “decision” is made. However, at this stage, the decision might still be a black box to human users. The output is typically a prediction, a recommendation, or an optimized plan. The challenge, and the purpose of the subsequent layers, is to make how this decision was reached understandable.

Bridging Data, Logic, and Predictions

The AI Reasoning Layer acts as the brain of the operation, taking raw and contextualized information and translating it into actionable insights. It constantly interacts with:

- Enterprise Data Foundation: To ingest features, labels, and training data necessary for model learning and inference.

- Evidence Layer: To retrieve specific facts, rules, or document excerpts that can inform or validate its reasoning, especially for LLM-based systems utilizing RAG.

The performance and reliability of this layer are crucial. Organizations frequently use platforms like Amazon SageMaker to host their XAI models, ensuring scalability and secure execution. However, even with highly accurate models, the “why” remains an outstanding question until the next layer comes into play.

The Explanation Layer (XAI): Unveiling the AI’s Inner Workings

The Explanation Layer (XAI) is where the magic of interpretability truly happens. This layer takes the output from the AI Reasoning Layer and translates its complex internal workings into human-understandable explanations. This is where the questions “Why did the model make this prediction?” and “Which inputs influenced the outcome the most?” are directly addressed.

This layer leverages a variety of specialized techniques and tools, often categorized as either model-agnostic or model-specific:

- SHAP (SHapley Additive exPlanations): A widely used method that quantifies the contribution of each feature to a specific prediction. SHAP values are based on Shapley values from cooperative game theory, so the contributions are consistent and sum to the difference between the prediction and a baseline. Kernel SHAP is model-agnostic, while variants such as Tree SHAP are optimized for specific model families. For instance, in a loan application, SHAP could show that a high debt-to-income ratio and a short credit history significantly contributed to a rejection.

- LIME (Local Interpretable Model-agnostic Explanations): Another popular model-agnostic technique that explains individual predictions by creating a simpler, interpretable model around the local vicinity of the prediction. LIME can highlight specific words in a text classification task or pixels in an image recognition task that were most influential.

- Counterfactuals: These explanations address the question, “What would have had to be different for a different outcome to occur?” For example, if a loan was denied, a counterfactual explanation might state, “If your credit score were 50 points higher, your loan would have been approved.” This provides actionable insights for users.

- Reason Traces: For certain AI systems, especially rules-based or symbolic AI, reason traces can provide a step-by-step breakdown of the logical path taken to reach a decision. This is akin to a debug log, showing the specific rules fired and data points considered at each stage.

The Explanation Layer is crucial for transforming opaque AI decisions into transparent insights. It helps stakeholders understand the specific factors influencing a decision and provides a basis for validating or challenging the AI’s output.
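To illustrate the idea behind feature attribution, the sketch below computes exact Shapley values for a toy risk score by enumerating every feature coalition. It does not use the `shap` library, and the `risk_score` function, its weights, and the applicant values are assumptions chosen for illustration. Exact enumeration grows exponentially with the number of features, which is why practical tools approximate it.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance: dict, baseline: dict) -> dict:
    """Exact Shapley values of `instance` relative to `baseline`.

    Features outside a coalition take their baseline value.
    """
    features = list(instance)
    n = len(features)

    def value(coalition: set) -> float:
        mixed = {f: (instance[f] if f in coalition else baseline[f]) for f in features}
        return predict(mixed)

    contributions = {}
    for feature in features:
        others = [f for f in features if f != feature]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {feature}) - value(coalition))
        contributions[feature] = total
    return contributions


def risk_score(applicant: dict) -> float:
    """Hypothetical linear risk model; higher means riskier."""
    return 2.0 * applicant["debt_to_income"] - 0.1 * applicant["credit_history_years"]


applicant = {"debt_to_income": 0.6, "credit_history_years": 2.0}
baseline = {"debt_to_income": 0.3, "credit_history_years": 10.0}  # assumed typical applicant

contributions = shapley_values(risk_score, applicant, baseline)
print(contributions)
```

The contributions add up to the gap between the applicant's score and the baseline score, which is the property that makes such attributions easy to present as "what pushed this prediction up or down."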

Model-Agnostic vs. Model-Specific Techniques

While both approaches exist, model-agnostic methods like SHAP and LIME are often preferred in production environments. This is because they can be applied to any black-box model, regardless of its underlying architecture. This versatility is vital in dynamic AI systems where models are frequently updated, retrained, or even swapped out. Model-specific methods, while sometimes offering deeper insights for a particular model type, require re-engineering if the model changes.

The Explanation Layer acts as an interpreter, translating complex mathematical operations and learned patterns into narratives and visualizations that humans can readily grasp. Without this layer, the insights from the AI Reasoning Layer would remain largely inaccessible and untrustworthy.
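A model-agnostic technique only needs to call the model, never to inspect it. The sketch below searches for a simple counterfactual by nudging one feature until the decision flips. The `approve_loan` rule, its thresholds, and the applicant values are hypothetical, and the single-feature search is a simplification of what dedicated counterfactual methods do.

```python
from typing import Callable, Optional


def find_counterfactual(
    predict: Callable[[dict], bool],
    instance: dict,
    feature: str,
    step: float,
    max_steps: int = 100,
) -> Optional[dict]:
    """Increase `feature` by `step` until the prediction changes.

    Returns the modified copy of `instance`, or None if no flip occurs
    within `max_steps`. The model is treated as a black box.
    """
    original = predict(instance)
    candidate = dict(instance)  # work on a copy; leave the input untouched
    for _ in range(max_steps):
        candidate[feature] += step
        if predict(candidate) != original:
            return candidate
    return None


def approve_loan(applicant: dict) -> bool:
    """Hypothetical stand-in for any opaque model."""
    return applicant["credit_score"] >= 680 and applicant["debt_to_income"] <= 0.4


applicant = {"credit_score": 630, "debt_to_income": 0.35}
counterfactual = find_counterfactual(approve_loan, applicant, "credit_score", step=10)

if counterfactual is not None:
    delta = counterfactual["credit_score"] - applicant["credit_score"]
    print(f"If your credit score were {delta} points higher, your loan would have been approved.")
```

Because `find_counterfactual` depends only on the `predict` callable, swapping the underlying model requires no change to the explanation code.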

The Human Layer: Empowering Decision-Makers

At the pinnacle of the Explainable AI architecture stands the Human Layer. This is where the explained AI insights are presented to human users in an intuitive and actionable format. This layer facilitates interaction, allows for human oversight, and ultimately empowers decision-makers to leverage AI effectively and responsibly.

The Human Layer integrates various interfaces and tools to enable seamless engagement with the AI system:

- Dashboards: Visual summaries of AI performance, key metrics, and aggregated explanations. Dashboards allow users to monitor the overall behavior of AI models, identify trends, and spot potential issues. They can display feature importances averaged across many predictions, showing which factors generally drive the model’s decisions.

- AI Copilots: Intelligent assistants that work alongside human users, providing real-time explanations, recommendations, and insights within existing workflows. An AI copilot might suggest next best actions in a CRM system and explain the rationale behind those suggestions.

- Decision Workflows: Integrating AI explanations directly into the business processes where decisions are made. This ensures that users have the necessary context and justification at the point of action. For example, in a loan approval workflow, the system would present not just the approval/rejection decision, but also the top influential factors and supporting evidence.

The goal of the Human Layer is to make AI transparent, controllable, and trustworthy for the end-user. It’s about building “trust architecture” for the next generation of enterprise decision systems.
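One way to picture what a decision workflow receives is a small payload that bundles the decision, the most influential factors, and the cited evidence. The `DecisionCard` type, its fields, and the sample values below are assumptions for illustration rather than a prescribed interface.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionCard:
    decision: str
    contributions: dict[str, float]                    # feature -> signed contribution
    evidence: list[str] = field(default_factory=list)  # ids of cited sources

    def top_factors(self, k: int = 3) -> list[tuple[str, float]]:
        """The k features with the largest absolute contribution."""
        ranked = sorted(self.contributions.items(), key=lambda item: abs(item[1]), reverse=True)
        return ranked[:k]

    def render(self) -> str:
        """Plain-text summary suitable for a workflow screen or log."""
        lines = [f"Decision: {self.decision}", "Top factors:"]
        for name, value in self.top_factors():
            lines.append(f"  {name}: {value:+.2f}")
        lines.append("Evidence: " + (", ".join(self.evidence) or "none retrieved"))
        return "\n".join(lines)


# Made-up values for a rejected loan application.
card = DecisionCard(
    decision="rejected",
    contributions={
        "debt_to_income": 0.6,
        "credit_history_years": 0.8,
        "num_accounts": -0.1,
        "region": 0.02,
    },
    evidence=["credit-policy-4.2"],
)
print(card.render())
```

Limiting the display to a few top factors keeps the explanation readable at the point of action, while the full contribution set remains available for audit.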

Fostering Trust and Collaboration

The Human Layer is critical for establishing trust and encouraging adoption of AI systems. When users can see why an AI made a particular decision, they are more likely to trust its outputs, question its assumptions, and learn from its insights. This fosters a collaborative environment where humans and AI can work together more effectively.

Transparency in this layer is not merely a “nice-to-have” feature; it’s a necessity for debugging, regulatory compliance, and driving continuous improvement. If an AI system consistently makes errors in a specific scenario, the explanations provided in the Human Layer can help experts identify the root cause, leading to model adjustments or data improvements. In a web front end, for example, React-based UI components can visualize feature importance alongside predictions to enhance user understanding.

Why Explainable AI Matters: A Business Imperative

The shift towards Explainable AI is not merely a technical advancement; it’s a business imperative driven by regulatory pressures, ethical considerations, and the practical challenges of deploying AI in real-world environments.

Regulatory Compliance and Governance

As AI systems become more pervasive, regulators worldwide are implementing stricter guidelines regarding transparency, fairness, and accountability. The EU’s GDPR (General Data Protection Regulation) restricts decisions based solely on automated processing and is widely read as supporting a “right to explanation,” and emerging AI acts add further transparency obligations. Without XAI, organizations face significant compliance risks and potential legal repercussions.

- AI Model Audits: Regulators and internal compliance teams require the ability to audit AI decisions, tracing them back to their inputs and underlying logic. XAI provides the necessary audit trails and justification.

- Bias Detection and Mitigation: Explanations can help uncover and quantify biases in AI models, which is crucial for ethical deployment, especially in sensitive areas like hiring, lending, or healthcare.

- Accountability: XAI clarifies responsibility. If a model makes a wrong decision, explanations help pinpoint whether the issue lies in the data, the model logic, or the interpretation. This is fundamental for establishing accountability.

Enhanced Trust and User Adoption

Perhaps the most significant benefit of XAI is its ability to build and sustain trust among users and stakeholders. When individuals understand how an AI system arrives at its conclusions, they are more likely to accept its recommendations and integrate it into their daily operations.

- Reduced Resistance: A lack of transparency can lead to suspicion and resistance among employees and customers. XAI demystifies AI, fostering acceptance.

- Empowered Users: Knowing the “why” empowers users to challenge an AI’s decision when it seems incorrect, providing valuable feedback for improvement.

- Improved Human-AI Collaboration: When humans understand AI’s reasoning, they can collaborate more effectively, combining their domain expertise with the AI’s analytical power. This is particularly relevant for AI copilots and decision support systems.

Debugging, Validation, and Continuous Improvement

For engineers and data scientists, XAI is an invaluable tool for debugging, validating, and continuously improving AI models.

- Debugging Black-Box Models: When a model performs unexpectedly, explanations can help identify faulty features, data leakage, or incorrect assumptions that are difficult to spot otherwise. This moves beyond accuracy metrics to show whether the model’s logic matches the business logic it is meant to capture.

- Model Validation: Explanations allow domain experts to validate whether the AI is reasoning based on sound principles, rather than spurious correlations. For example, in a medical diagnosis model, an explanation might confirm that the AI is using clinically relevant symptoms rather than irrelevant patient identifiers.

- Feature Engineering Insights: Understanding feature importance can guide data scientists in refining existing features or creating new ones, leading to more robust and accurate models.

- Model Monitoring: Explanations can be used to monitor model drift and ensure that the AI continues to make decisions based on expected factors over time.
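The monitoring idea in the last item can be sketched by comparing each feature's share of total attribution between a reference window and a current window. The function names, the 0.15 threshold, and the attribution values are assumptions for illustration; the attributions themselves would come from whichever explanation method is in use.

```python
def importance_shares(attributions: list[dict]) -> dict:
    """Each feature's share of the total absolute attribution in a window."""
    totals: dict = {}
    for row in attributions:
        for name, value in row.items():
            totals[name] = totals.get(name, 0.0) + abs(value)
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {name: 0.0 for name in totals}
    return {name: value / grand_total for name, value in totals.items()}


def drifted_features(reference: list[dict], current: list[dict], threshold: float = 0.15) -> list:
    """Features whose importance share moved by more than `threshold`."""
    ref = importance_shares(reference)
    cur = importance_shares(current)
    names = set(ref) | set(cur)
    return sorted(n for n in names if abs(cur.get(n, 0.0) - ref.get(n, 0.0)) > threshold)


# Made-up per-prediction attributions for a fraud model.
reference_window = [{"amount": 0.6, "location": 0.3, "hour": 0.1}]
current_window = [{"amount": 0.2, "location": 0.3, "hour": 0.5}]

flagged = drifted_features(reference_window, current_window)
print(flagged)
```

A flagged feature does not prove the model is wrong; it signals that the model is now relying on different factors than it did at validation time and deserves review.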

Operational Resilience and Risk Management

In production environments, XAI acts as a critical risk management tool. It enables organizations to anticipate, identify, and mitigate risks associated with AI deployment.

- Reconstructing Decisions: In case of an anomaly or error, XAI, combined with traceability, allows for the complete reconstruction of an AI decision, including the data used, model version, and the internal reasoning process.

- Managing High-Impact Decisions: For critical applications like financial transactions, medical diagnoses, or automated vehicle control, explainability is a non-negotiable requirement for managing the inherent risks.

- Preventing “Shadow AI”: When official AI systems are opaque, users may resort to unapproved, less reliable methods. XAI promotes the use of vetted, transparent AI.

Traceability: The Indispensable Companion to Explainability

While closely related, explainability and traceability address different, yet complementary, aspects of trustworthy AI. Traceability focuses on documenting and reconstructing the complete lifecycle of an AI decision, addressing the question, “What happened during this decision?”

Distinguishing Explainability and Traceability

- Explainability (Why?): Provides insight into why an AI system made a specific output. It focuses on the model’s reasoning and feature contributions.

- Traceability (What?): Documents what led to a decision. It focuses on the data flow, model versions, execution context, and specific timestamps.

Consider a fraud detection model that flags a transaction.

- Explainability would tell you: “The transaction was flagged because it occurred in a new geographic location, involved an unusually high amount, and happened outside normal business hours. The highest contributing factor was the unusual amount.”

- Traceability would tell you: “This transaction was processed by `FraudModel_v2.3` at `2026-03-14 10:30:15 UTC` using input data from `customer_transactions_2026-03-14.csv`. The model was deployed by `John Doe` on `2026-03-01`. Initial data preprocessing steps included `min-max scaling` and `one-hot encoding`.”
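The two views can be stored together as one audit record per decision. The sketch below reuses the values from the fraud example; the `DecisionTrace` type, its field names, the `decision_id`, and the contribution numbers are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionTrace:
    decision_id: str
    model_version: str
    scored_at: str                   # ISO 8601 timestamp, UTC
    input_source: str
    deployed_by: str
    deployed_on: str
    preprocessing: tuple[str, ...]
    explanation: dict                # feature -> contribution (the "why")


def to_audit_line(trace: DecisionTrace) -> str:
    """Serialize a trace as one JSON line with stable key order."""
    return json.dumps(asdict(trace), sort_keys=True)


trace = DecisionTrace(
    decision_id="txn-0001",          # hypothetical identifier
    model_version="FraudModel_v2.3",
    scored_at="2026-03-14T10:30:15+00:00",
    input_source="customer_transactions_2026-03-14.csv",
    deployed_by="John Doe",
    deployed_on="2026-03-01",
    preprocessing=("min-max scaling", "one-hot encoding"),
    # Illustrative contribution values, not real model output.
    explanation={"amount": 0.55, "new_location": 0.30, "outside_business_hours": 0.15},
)

audit_line = to_audit_line(trace)
print(audit_line)
```

Keeping the record immutable (`frozen=True`) and serializing it with sorted keys makes each line easy to append to a log and to compare during an audit.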

Architecting for Traceability

A robust XAI architecture inherently supports traceability through its layered design:

- Enterprise Data Foundation: Ensures that input data is versioned, timestamped, and audited. `DynamoDB` and `S3` manage data and model storage while `ElastiCache` handles caching.

- Evidence Layer: Documents the specific contextual information retrieved to support a decision.

- AI Reasoning Layer: Records the exact model version, hyperparameters, and any specific configurations used for an inference. `SageMaker` ensures that the XAI models are properly hosted.

- Explanation Layer: Stores the generated explanations themselves, linked to the specific decision.

- Observability and Security: Observability tools like `CloudWatch` and `X-Ray` ensure that every step of the process is monitored and logged. Security measures like `IAM`, `VPC`, and `KMS` protect the integrity of the data and the system configuration. `CloudFront` and `API Gateway` with `WAF` securely handle client access.

Combined, explainability and traceability create a comprehensive system for AI governance, regulatory compliance, and effective problem-solving. This is what makes an intelligent system a trustworthy one.

Implementing Explainable AI Architecture in Practice

Building a production-ready Explainable AI architecture requires a strategic approach, moving beyond theoretical concepts to practical implementation.

Key Architectural Principles

Several principles guide the successful implementation of XAI:

- Layered Isolation: As depicted in the architecture, isolating concerns into distinct layers (data, compute, explainability, presentation) promotes modularity, maintainability, and scalability.

- Transparency by Design: Integrate explainability from the initial design phase, rather than attempting to bolt it on as an afterthought.

- Scalability: The architecture must be able to handle increasing data volumes, model complexities, and user demands. Cloud-native platforms like AWS, with services such as `SageMaker`, `DynamoDB`, and `S3`, are often leveraged for this purpose.

- Security: Implement robust security measures across all layers to protect sensitive data and prevent unauthorized access or manipulation.

- Observability: Comprehensive monitoring and logging (`CloudWatch`, `X-Ray`) are essential to track system performance, identify issues, and ensure auditability.

- User-Centric Design: The Human Layer must be designed with the end-user in mind, providing explanations in a clear, concise, and actionable format.

- Continuous Feedback Loop: Establish mechanisms for users to provide feedback on explanations, enabling iterative improvement of both the explanations and the underlying AI models.

A Roadmap for Implementation

Organizations can follow a structured roadmap to implement Explainable AI architecture:

- Assess Business Needs and High-Impact Use Cases: Identify areas where AI decisions have significant business, ethical, or regulatory implications. Prioritize these use cases for XAI implementation.

- Strengthen the Enterprise Data Foundation: Invest in data governance, data quality initiatives, and robust data integration strategies. Ensure that data is reliable, accessible, and properly cataloged.

- Establish the Evidence Layer: Develop or integrate systems for knowledge management, such as vector databases, knowledge graphs, and document stores. Define how contextual information will be retrieved and utilized by the AI Reasoning Layer.

- Integrate XAI into the AI Reasoning Layer: Select appropriate XAI techniques (SHAP, LIME, counterfactuals) based on model types and explanation requirements. Integrate these techniques into the model deployment pipeline, using platforms like `SageMaker` for hosting and execution.

- Design the Human Layer Interfaces: Develop user-friendly dashboards, AI copilots, and decision workflows that effectively present explanations. Collaborate with business users and subject matter experts to ensure the explanations are meaningful and actionable.

- Implement Observability and Security: Deploy comprehensive monitoring, logging, and security solutions across the entire architecture. Ensure auditability and compliance with relevant regulations.

- Pilot and Iterate: Begin with pilot projects, gather feedback from users, and iteratively refine the XAI system. Continuously evaluate the effectiveness of explanations and the overall trustworthiness of the AI.

- Training and Adoption: Educate users about how to interpret explanations and interact with the XAI system. Foster a culture of responsible AI use.

By following this roadmap, organizations can transition from experimental AI models to building trustworthy AI systems that meet ethical, regulatory, and operational expectations.

The Future of Enterprise AI: Human-Centric and Trustworthy

The evolution of AI in the enterprise is inexorably moving towards a human-centric paradigm. The power of AI is maximized not when it operates autonomously in a black box, but when it augments human intelligence, assists in complex decision-making, and can clearly articulate its reasoning. Explainable AI architecture is the blueprint for this future.

As AI continues to deeply integrate into critical business functions, its ability to explain itself will no longer be a competitive advantage but a foundational requirement. Organizations that proactively build transparent, auditable, and understandable AI systems will be the ones that succeed in scaling AI responsibly, building lasting trust with their stakeholders, and driving sustainable innovation. The journey towards trustworthy AI is a continuous one, demanding ongoing vigilance, technological adaptation, and a strong commitment to ethical principles.

The architectural layers—from the foundational Enterprise Data Foundation to the user-facing Human Layer—collectively ensure that AI does not become a liability, but rather a powerful, trusted tool that enhances human capabilities and organizational outcomes. By prioritizing clear explanations, verifiable evidence, and robust traceability, businesses can unlock the full potential of AI while mitigating its inherent risks.
