The landscape of robotics is undergoing a profound transformation. As robots become more sophisticated and autonomous, the need for advanced artificial intelligence (AI) to govern their decision-making processes has never been more critical. For years, the robotics community has grappled with the challenge of integrating complex AI logic into existing frameworks, often contorting tools to fit purposes for which they were never truly designed. This article delves into the innovative OM1 architecture, a modular AI runtime that promises to redefine how robots perceive, reason, and act, offering a dedicated layer for intelligent decision-making that seamlessly complements the robust communication capabilities of ROS2.

The Foundational Role of ROS2: Communication, Not Cognition

To truly appreciate the significance of OM1, it’s essential to first understand the bedrock upon which much of modern robotics is built: ROS2 (Robot Operating System 2). ROS2 is an invaluable framework, a cornerstone for developing sophisticated robotic applications. It excels at what it was designed to do: facilitate efficient and reliable data exchange between various robotic components.

ROS2’s Core Strengths: A Data-Centric Paradigm

At its heart, ROS2 is a communication middleware. It provides a standardized way for different software modules, known as “nodes,” to communicate with each other. This communication is orchestrated through several well-defined mechanisms:

- Publish/Subscribe (Pub/Sub): This asynchronous messaging pattern allows nodes to broadcast data (publish) to a topic, and other nodes can receive this data by subscribing to that topic. Think of it as a bulletin board where information is posted for anyone interested to read. For example, a camera node might publish image data, and a vision processing node might subscribe to that topic to receive and analyze those images.

- Services: Services offer a request/reply mechanism. A client node sends a request to a service server node, which performs a short operation and sends back a response. This suits quick operations where a prompt result is expected, such as querying a node’s current state or switching a sensor between modes. Longer-running operations, such as moving a robot arm to a target position and waiting for completion, are better modeled as actions.

- Actions: Actions are designed for long-running, pre-emptable tasks that provide feedback mid-execution. They combine aspects of both services and topics. A client sends a goal to an action server, which then provides continuous feedback on the progress of the goal and allows the client to cancel the operation if needed. This is crucial for tasks like autonomous navigation, where the robot needs to report its progress and potentially be interrupted.

These communication primitives make ROS2 exceptionally good at moving data around. Sensor readings, motor commands, navigation waypoints – all are efficiently transmitted and received across the robot’s ecosystem. This data flow is fundamental to a robot’s operation, enabling various subsystems to work in concert.
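To make the three patterns concrete, here is a minimal in-process sketch in plain Python. It is deliberately not the rclpy API: `ToyBus`, `navigate`, and the topic and service names used with them are hypothetical, and a real ROS2 system adds discovery, serialization, and quality-of-service settings that this toy ignores.

```python
from collections import defaultdict
from typing import Callable, Iterator


class ToyBus:
    """In-process stand-in for a middleware. This is NOT the rclpy API."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)  # topic -> list of callbacks
        self._services = {}                    # service name -> handler

    # Publish/subscribe: one-to-many; the publisher expects no reply.
    def subscribe(self, topic: str, callback: Callable[[object], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: object) -> None:
        for callback in self._subscribers[topic]:
            callback(message)

    # Service: one request produces exactly one response.
    def advertise(self, name: str, handler: Callable[[object], object]) -> None:
        self._services[name] = handler

    def call(self, name: str, request: object) -> object:
        return self._services[name](request)


def navigate(goal_m: float, step_m: float = 1.0) -> Iterator[float]:
    """Action-style task: yields the distance remaining after each step.

    The caller can stop iterating at any time, which models cancellation.
    """
    remaining = goal_m
    while remaining > 0:
        remaining = max(0.0, remaining - step_m)
        yield remaining
```

A camera node would call `publish("/camera/image", frame)` without knowing who listens, a client would call `call("/battery/query", None)` and get one answer back, and a navigation client would loop over `navigate(3.0)` to receive progress until the goal is reached or it decides to stop.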

The Deliberate Absence of AI Decision-Making in ROS2

Crucially, ROS2 was never intended to be an AI framework. It was not designed with an inherent opinion on how a robot should “think” or “reason.” Its mandate is to provide the infrastructure for communication, leaving the complex tasks of intelligence and decision-making to other layers. This deliberate design choice has been a strength, allowing ROS2 to remain lightweight, flexible, and robust as a communication layer, empowering developers to integrate their preferred AI techniques.

However, this strength has also presented a unique challenge. As the demand for more intelligent and autonomous robots grew, developers found themselves attempting to shoehorn sophisticated AI decision-making logic into ROS2 nodes.

The Limitations of Traditional Approaches within ROS2

For years, the robotics community has improvised, attempting to imbue ROS2 nodes with higher-level intelligence. This often involved:

- State Machines: These are computational models that define a system’s behavior based on a finite number of states and transitions between these states. While effective for simple, well-defined behaviors, state machines can quickly become unwieldy and difficult to manage for complex, dynamic scenarios.

- Behavior Trees: Behavior trees offer a more modular and hierarchical approach to controlling robot behavior. They build complex behaviors by combining simpler ones and are often used for tasks like navigation, manipulation, and interaction. While an improvement over raw state machines, integrating generative AI reasoning directly into behavior tree nodes often feels like an unnatural fit.

- LangChain Nodes: The emergence of large language models (LLMs) has led to attempts to integrate frameworks like LangChain directly into ROS2 nodes. While LangChain provides powerful tools for chaining together LLM calls and other data sources, embedding it directly within the ROS2 communication paradigm can feel akin to forcing a square peg into a round hole. The asynchronous, message-passing nature of ROS2 doesn’t always align seamlessly with the sequential, reasoning-driven nature of LLM interactions.
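The scaling problem with state machines is easy to see in a small table-driven sketch. The states and event names below are hypothetical and chosen only for illustration; the point is that every situation the robot should handle needs its own explicit row.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    MOVING = auto()
    BLOCKED = auto()


# (current state, event) -> next state.
# Every new situation the robot must handle adds rows to this table.
TRANSITIONS = {
    (State.IDLE, "goal_received"): State.MOVING,
    (State.MOVING, "obstacle_detected"): State.BLOCKED,
    (State.MOVING, "goal_reached"): State.IDLE,
    (State.BLOCKED, "path_clear"): State.MOVING,
}


def step(state: State, event: str) -> State:
    """Return the next state; events the table does not cover are ignored."""
    return TRANSITIONS.get((state, event), state)
```

An open-ended event such as `"human_points_at_chair"` simply falls through: `step(State.BLOCKED, "human_points_at_chair")` leaves the robot in `BLOCKED`, because nobody wrote a row for it.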

The common thread among these approaches is that they are adaptations, not native solutions, for AI decision-making within the ROS2 ecosystem. ROS2’s strength lies in its opinionated stance on communication, not on cognition. This leaves a significant void: Where, precisely, in the robot’s software stack does the “what should the robot do now?” logic truly belong?

Introducing OM1: A Dedicated AI Reasoning Layer

This is where OM1 enters the picture, proposing a fundamental shift in how we architect intelligent robotic systems. OM1 is a modular AI runtime designed to sit above the ROS2 stack, providing a dedicated layer for AI logic and decision-making. It acknowledges ROS2’s prowess in data movement but asserts that the complex task of reasoning and action planning requires its own distinct and purpose-built environment.

The Genesis of OM1: An Overdue Question

The very existence of OM1 stems from an overdue question: Do we need a dedicated layer above ROS2 for AI logic? That 965 teams have customized OM1 for their own hardware, far more than a demo-only repository would typically attract, suggests the answer is “yes.” This level of adoption indicates that many robotics developers see the need for a more native home for AI decision-making.

OM1’s Core Philosophy: Modularity and Multimodality

OM1’s design philosophy is rooted in modularity and multimodality. It’s not a monolithic AI solution but rather a flexible framework that can integrate various AI components and process diverse types of sensory data.

- Modular AI Runtime: OM1 provides a structured environment for AI modules to operate, allowing developers to plug in and combine different AI models and algorithms as needed. This modularity ensures flexibility and adaptability, critical for the ever-evolving field of AI.

- Multimodal Input: One of OM1’s key strengths is its ability to natively handle multimodal input. Robots interact with the world through a rich tapestry of sensors: cameras for vision, microphones for sound, LiDAR for 3D mapping, GPS for location, and internal sensors for battery and system state. OM1 is designed to ingest and process all this diverse data, providing a holistic understanding of the robot’s environment. This is a significant departure from approaches that often treat each sensor stream in isolation.

- Python-Native: OM1 is built with Python as its native language. This is a strategic choice, leveraging Python’s extensive ecosystem of AI and machine learning libraries, its ease of use, and its popularity among developers. This makes OM1 accessible and facilitates rapid prototyping and deployment of AI solutions.

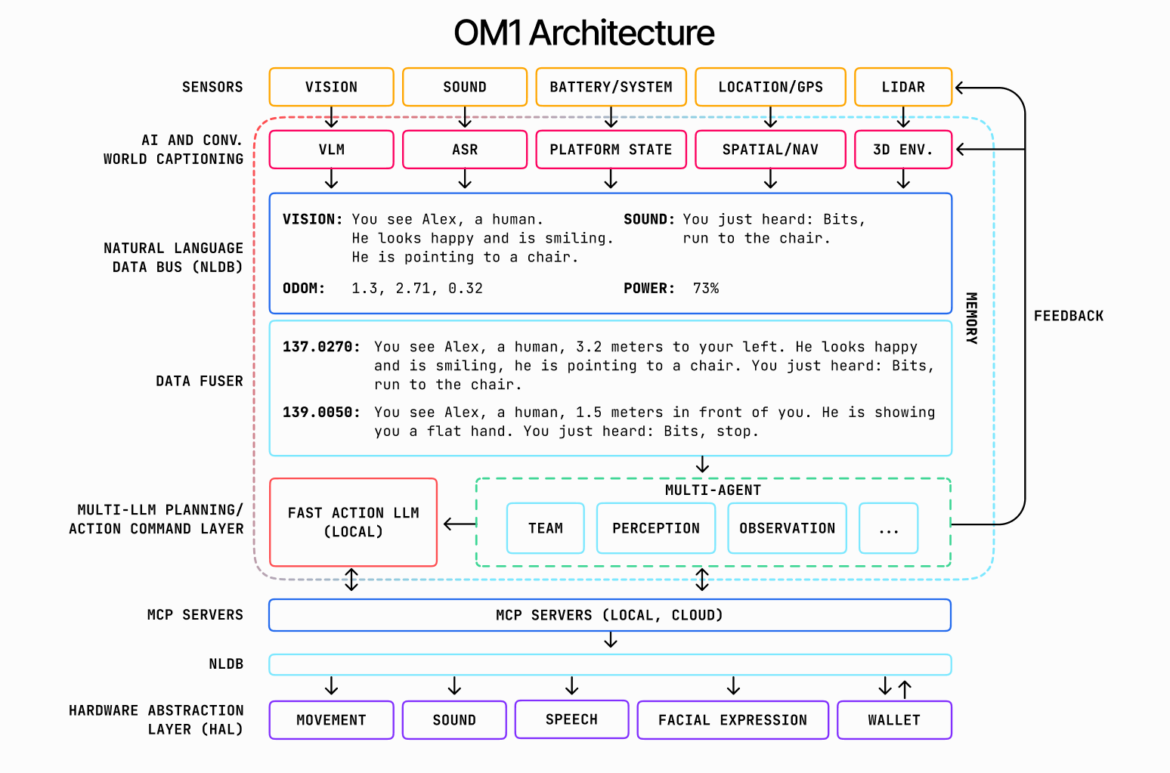

Deconstructing the OM1 Architecture: A Journey from Perception to Action

Let’s delve deeper into the architectural components of OM1, understanding how it transforms raw sensor data into intelligent actions.

Sensors and Pre-processing: The Gates to Perception

At the very foundation of any intelligent system are its sensors. OM1 first receives raw data from various sensors, abstracting away the hardware specifics. This raw data then flows into initial AI and conversational processing layers.

- Vision Data and VLM (Vision-Language Models): Camera feeds, providing rich visual information, are processed by Vision-Language Models (VLMs). VLMs are powerful AI models that can understand and generate human-like language based on visual input. They perform tasks like object recognition, scene understanding, and even generating descriptive captions for what the robot “sees.” For instance, a VLM might identify “Alex, a human,” describe his emotional state (“looks happy and is smiling”), and note his actions (“pointing to a chair”).

- Sound Data and ASR (Automatic Speech Recognition): Audio input from microphones is fed into Automatic Speech Recognition (ASR) systems. ASR converts spoken language into text, allowing the robot to understand verbal commands. For example, the ASR might detect “Bits, run to the chair,” identifying a command directed at the robot.

- Platform State: Data from internal sensors provides crucial information about the robot’s operational status, such as battery level, motor speeds, and other system diagnostics. This “platform state” is vital for the robot to understand its own capabilities and limitations.

- Location/GPS and Spatial/Nav: GPS data, combined with other localization techniques, provides the robot’s global position. This is then integrated with spatial and navigation modules to create and maintain a representation of the robot’s immediate environment and its intended path.

- LiDAR and 3D Environment: LiDAR (Light Detection and Ranging) sensors generate detailed 3D point clouds of the surrounding environment. This data is processed to build a comprehensive 3D map, identify obstacles, and understand the geometry of the robot’s workspace.

The Natural Language Data Bus (NLDB): A Unified Representation of Reality

A crucial innovation within OM1 is the Natural Language Data Bus (NLDB). This is not merely a communication channel; it’s a mechanism for homogenizing diverse sensor inputs into a unified, human-readable format. Instead of dealing with disparate data types (pixel arrays, audio waveforms, point clouds), the NLDB translates these into natural language descriptions.

Imagine the output from the VLM: “You see Alex, a human. He looks happy and is smiling. He is pointing to a chair.” This is a concise, descriptive interpretation of complex visual data. Similarly, the ASR might generate: “You just heard: Bits, run to the chair.” The NLDB acts as an intelligent interpreter, transforming raw sensor measurements into a stream of textual observations. This unification dramatically simplifies the subsequent reasoning layers, as they no longer need to directly parse raw sensor data but can instead operate on abstract, semantic representations.
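A rough sketch of the idea, using the example sentences above: each perception module emits a plain-text observation rather than its raw data type. The `Observation` class and the two helper functions are hypothetical names invented for this illustration and are not taken from the OM1 codebase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    source: str       # which input produced it, e.g. "vision" or "audio"
    text: str         # the natural language sentence placed on the bus
    timestamp: float  # seconds, from whatever clock the robot uses


def describe_vision(label: str, kind: str, details: list, t: float) -> Observation:
    """Turn structured VLM output into one descriptive sentence block."""
    sentence = f"You see {label}, a {kind}. " + " ".join(details)
    return Observation("vision", sentence.strip(), t)


def describe_speech(transcript: str, t: float) -> Observation:
    """Wrap an ASR transcript in the phrasing used on the bus."""
    return Observation("audio", f"You just heard: {transcript}", t)
```

Downstream code then only ever sees strings such as `"You just heard: Bits, run to the chair."`, regardless of whether the original signal was a pixel array or a waveform.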

The Data Fuser: Weaving a Coherent Narrative

The next stage in OM1’s processing pipeline is the Data Fuser. The NLDB provides individual observations, but these observations need to be integrated and contextualized to form a coherent understanding of the world. The Data Fuser takes these discrete natural language snippets and combines them, often with temporal and spatial information, to create a richer, more comprehensive narrative.

For example, individual observations like “You see Alex, a human, 3.2 meters to your left. He looks happy and is smiling, he is pointing to a chair” and “You just heard: Bits, run to the chair” are fused. This fusion not only provides a spatial context (Alex is 3.2 meters to the left) but also creates a more complete picture of the current situation and the command given. As time progresses, the Data Fuser continuously updates this understanding, integrating new information and refining the robot’s internal “world model.” This stream of fused, contextualized observations is then passed to the higher-level reasoning modules.
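One simple way to picture this fusion step is a function that keeps only recent snippets, orders them by time, and joins them into a single block of text. This is a sketch under assumptions: `Snippet`, `fuse`, and the two-second window are hypothetical, and the article does not specify how OM1 actually windows or weights its inputs.

```python
from typing import NamedTuple


class Snippet(NamedTuple):
    timestamp: float  # seconds
    source: str       # e.g. "vision", "audio"
    text: str         # natural language observation


def fuse(snippets: list, now: float, window_s: float = 2.0) -> str:
    """Merge snippets from the last `window_s` seconds, oldest first."""
    recent = [s for s in snippets if now - s.timestamp <= window_s]
    recent.sort(key=lambda s: s.timestamp)
    return "\n".join(f"[{s.source}] {s.text}" for s in recent)
```

Given a vision snippet at t=10.1, an audio snippet at t=10.4, and a stale snippet at t=3.0, calling `fuse(snippets, now=10.5)` returns the first two in chronological order and drops the stale one.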

Memory: The Robot’s Internal Chronicle

Integral to the Data Fuser and the subsequent reasoning layers is the concept of Memory. OM1 includes a robust memory component that stores past fused observations, commands, and actions. This memory serves as the robot’s internal chronicle, allowing it to maintain context, learn from past experiences, and develop a more sophisticated understanding of its environment over time. Feedback from actions also feeds back into this memory, creating a continuous learning loop. This is critical for tasks requiring persistence, long-term planning, and adaptation.
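As a minimal illustration of the bookkeeping involved, the sketch below keeps a bounded history of text entries and returns the most recent ones for use as context. `EpisodicMemory` is a hypothetical name, and a real memory component would likely add persistence and retrieval by relevance, which are omitted here.

```python
from collections import deque


class EpisodicMemory:
    """Bounded history: the oldest entries are dropped once capacity is hit."""

    def __init__(self, capacity: int = 100) -> None:
        self._entries = deque(maxlen=capacity)

    def remember(self, entry: str) -> None:
        self._entries.append(entry)

    def recall(self, last_n: int) -> list:
        """Return up to `last_n` most recent entries, oldest first."""
        if last_n <= 0:
            return []
        return list(self._entries)[-last_n:]
```

Fused observations, issued commands, and action feedback can all be stored through `remember`, and the planner can prepend `recall(5)` to its input to keep short-term context.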

Multi-LLM Planning/Action Command Layer: The Brain of the Robot

This is where the true AI decision-making power of OM1 resides. The Multi-LLM Planning/Action Command Layer is responsible for processing the fused multimodal input and determining what the robot should do next. This layer leverages the power of multiple Large Language Models (LLMs) to perform complex reasoning, planning, and action generation.

- Fast Action LLM (Local): For immediate, reactive decisions, OM1 employs a “Fast Action LLM” that operates locally. This LLM is optimized for quick inference and rapid response, enabling the robot to react swiftly to dynamic changes in its environment or urgent commands. This local LLM can handle a significant portion of the robot’s real-time decision-making, ensuring low latency and responsiveness.

- Multi-Agent System: Beyond instantaneous reactions, OM1 introduces a sophisticated “Multi-Agent” system. This system allows for more complex, deliberative reasoning and planning, often engaging multiple specialized AI agents working collaboratively. These agents can include:

  - Team Agent: Responsible for coordinating the robot’s actions within a larger team of robots or human collaborators.

  - Perception Agent: Focused on refining and interpreting perceptual information, providing deeper insights from the fused data.

  - Observation Agent: Dedicated to generating new questions or hypotheses based on current observations, driving further exploration or information gathering.

  - Other Specialized Agents: The modular nature of OM1 allows for the integration of various other specialized agents, each tailored for specific reasoning tasks, such as task planning, resource allocation, or ethical considerations.

The Multi-LLM Planning/Action Command Layer, with its combination of fast, local LLMs and a collaborative multi-agent system, acts as the robot’s brain, translating abstract understanding into concrete action plans. Feedback from the execution of these plans is crucial and is fed back into the memory and the perception layers, allowing the system to learn and adapt over time.
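The split between a fast reactive path and a slower deliberative path can be sketched as a small router. Everything here is an assumption made for illustration: the keyword check stands in for whatever urgency signal a real system would use, and the two stub functions stand in for actual model calls. The article does not describe how OM1 routes between its planners.

```python
from typing import Callable

Planner = Callable[[str], str]

# Illustrative keywords only; a real urgency signal would be richer.
URGENT_MARKERS = ("stop", "obstacle", "collision")


def is_urgent(fused_text: str) -> bool:
    lowered = fused_text.lower()
    return any(marker in lowered for marker in URGENT_MARKERS)


def decide(fused_text: str, fast: Planner, deliberative: Planner) -> str:
    """Send urgent input to the fast planner, everything else to the slow one."""
    planner = fast if is_urgent(fused_text) else deliberative
    return planner(fused_text)


# Stand-ins for model calls; a real system would query an LLM here.
def fast_stub(_text: str) -> str:
    return "stop"


def deliberative_stub(text: str) -> str:
    return "plan: move to chair" if "chair" in text else "plan: wait"
```

With these stubs, an input mentioning an obstacle is answered immediately with `"stop"`, while the spoken command about the chair goes to the deliberative path and yields a plan.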

MCP Servers: Scaling Intelligence (Local and Cloud)

The computational demands of running advanced LLMs and multi-agent systems can be substantial. OM1 addresses this through the utilization of MCP (Modular Compute Platform) Servers. These servers, which can be deployed both locally on the robot and in the cloud, provide the necessary computational horsepower to execute the complex AI models in the planning and action layers.

- Local MCP Servers: For latency-critical tasks and situations where cloud connectivity is limited or unreliable, local MCP servers ensure that essential AI reasoning can occur on-device. This is crucial for real-time control and maintaining autonomy.

- Cloud MCP Servers: For more computationally intensive tasks, deep learning model training, or scenarios requiring access to vast datasets, cloud-based MCP servers offer scalable resources. This hybrid approach provides flexibility, allowing developers to optimize for performance, cost, and connectivity as needed.

The ability to seamlessly leverage both local and cloud compute resources for AI processing is a significant advantage of the OM1 architecture, enabling robots to operate in diverse environments and handle varying levels of computational complexity. The NLDB also interfaces with MCP Servers to distribute information for processing.
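The local-versus-cloud trade-off described above reduces, in its simplest form, to a check against connectivity and a latency budget. The function name and the 400 ms round-trip figure below are placeholders invented for this sketch, not measurements or OM1 settings.

```python
def choose_backend(
    latency_budget_ms: float,
    online: bool,
    cloud_round_trip_ms: float = 400.0,  # placeholder value, not a measurement
) -> str:
    """Use the cloud only when it is reachable and fast enough for the task."""
    if online and cloud_round_trip_ms <= latency_budget_ms:
        return "cloud"
    return "local"
```

A reflex-like decision with a 50 ms budget stays on the robot, a two-second planning task can go to the cloud, and any task falls back to local processing when connectivity is lost.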

Hardware Abstraction Layer (HAL): Bridging AI to Actuation

Finally, the outcomes of the AI reasoning and planning layers need to be translated into physical actions. This is the responsibility of the Hardware Abstraction Layer (HAL). The HAL provides a standardized interface for the AI system to control various robotic actuators and peripherals, abstracting away the specifics of the underlying hardware. This separation of concerns ensures that the AI logic remains independent of the robot’s physical configuration, making OM1 highly portable across different robotic platforms.

The HAL enables the AI system to generate commands for:

- Movement: Controlling motors for locomotion (wheels, legs) and manipulation (arms).

- Sound: Generating alerts, tones, or other non-speech audio output.

- Speech: Producing synthesized speech for verbal communication with humans.

- Facial Expression: Controlling actuators for expressive robot faces, enhancing human-robot interaction.

- Wallet: Interfacing with secure payment systems for transactional capabilities, enabling robots to perform tasks like making purchases or receiving payments.

In the architecture diagram, the bidirectional arrows connecting the HAL to the MCP Servers (Local, Cloud) signify that the HAL not only receives commands from the AI layers but can also report whether those commands succeeded or failed, further enriching the robot’s internal state and informing future planning.
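The abstraction can be pictured as a dispatcher that maps command names to interchangeable actuator objects and returns a success flag as feedback. This is a sketch under assumptions: `Actuator`, `LoggingActuator`, `Hal`, and the command names are hypothetical and do not reflect OM1's real interfaces.

```python
from typing import Protocol


class Actuator(Protocol):
    """Anything that can carry out a command and report success."""

    def execute(self, argument: str) -> bool: ...


class LoggingActuator:
    """Fake actuator that records what it was asked to do."""

    def __init__(self) -> None:
        self.log = []

    def execute(self, argument: str) -> bool:
        self.log.append(argument)
        return True


class Hal:
    """Routes named commands to whichever actuator is registered for them."""

    def __init__(self, actuators: dict) -> None:
        self._actuators = actuators

    def dispatch(self, command: str, argument: str) -> bool:
        """Return the actuator's result, or False for unknown commands."""
        actuator = self._actuators.get(command)
        if actuator is None:
            return False
        return actuator.execute(argument)
```

Because the planner only ever calls `dispatch("move", ...)` or `dispatch("speak", ...)`, swapping a wheeled base for a legged one means registering a different actuator object, with no change to the reasoning code. The boolean result is the feedback signal that flows back to planning.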

The Paradigm Shift: Why OM1 is Necessary

The argument for OM1 is compelling. It addresses a fundamental architectural gap in robotics that has been evident for years.

ROS2’s Data Flow vs. OM1’s Reasoning Flow

The core distinction between ROS2 and OM1 can be summarized as:

- ROS2: Optimized for data flow. Its strength lies in efficiently moving sensor data, control commands, and other messages between disparate nodes. It’s a highly effective communication highway.

- OM1: Optimized for reasoning flow. It’s the intelligent decision-maker that sits atop that highway, interpreting the data, deliberating on potential actions, and issuing high-level commands.

By providing a dedicated, Python-native layer for AI logic, OM1 liberates ROS2 to focus on its core strength of robust communication. This separation of concerns leads to a cleaner, more modular, and ultimately more scalable architecture for intelligent robots.

Overcoming the “Squeezing AI into ROS2 Nodes” Problem

The traditional approach of “squeezing AI decision-making into ROS2 nodes” has always felt like a workaround. State machines and behavior trees, while useful for deterministic behaviors, struggle with the nuances of generative AI reasoning. LangChain nodes, while powerful, often feel grafted onto a system not designed for their intrinsic logic.

OM1, by contrast, provides a native home for these sophisticated AI techniques. It offers an environment where LLMs, multi-agent systems, and multimodal fusion can operate in a coherent and integrated manner, without the architectural compromises previously necessitated by ROS2-centric designs.

The Benefits of a Dedicated AI Layer

The advantages of adopting an OM1-like architecture are numerous:

- Increased Modularity: AI components can be developed, tested, and updated independently of the ROS2 communication layer, leading to more robust and maintainable systems.

- Enhanced Scalability: The ability to leverage both local and cloud-based MCP servers allows for dynamic scaling of AI compute resources, adapting to the complexity of tasks and environmental conditions.

- Improved Maintainability: Clear separation of concerns simplifies debugging and troubleshooting. Issues in AI logic are isolated from communication problems.

- Faster Development Cycles: Python-native development with access to a rich AI/ML ecosystem accelerates the development and iteration of intelligent behaviors.

- Richer Multimodal Understanding: OM1’s native support for fusing diverse sensor inputs into a unified natural language representation leads to a more comprehensive and contextualized understanding of the robot’s world.

- More Sophisticated Reasoning: Dedicated LLM and multi-agent layers enable more complex planning, deliberation, and adaptive behaviors that are difficult to achieve within traditional ROS2 nodes.

- Future-Proofing: As AI capabilities rapidly evolve, a dedicated AI layer like OM1 can more easily integrate new models, algorithms, and paradigms without requiring a complete overhaul of the foundational communication infrastructure.

OM1 in Action: Real-World Implications

To illustrate the profound impact of OM1, consider a few real-world scenarios:

Autonomous Logistics Robot

Imagine a logistics robot operating in a complex warehouse environment.

- ROS2’s Role: ROS2 would handle the communication of LiDAR data for mapping, motor commands for navigation, and sensor readings from obstacle detection systems.

- OM1’s Role: OM1 would receive multimodal input (vision of new inventory, sound of a human worker, commands from a central system). Its VLMs would identify boxes and labels. Its ASR would process voice commands like “Move this pallet to loading dock 3.” The Data Fuser would combine these inputs into a coherent understanding: “Human worker Alex is asking to move a pallet identified as ‘Product XYZ’ to loading dock 3, which is currently clear based on LiDAR data.” The Multi-LLM Planning layer would then determine the optimal path, considering factors like traffic in the warehouse, the robot’s current battery level, and the urgency of the task. It would then issue high-level commands to the HAL, such as “Navigate to loading dock 3,” ensuring collision avoidance throughout the journey. If an unexpected obstacle appears, the Fast Action LLM would quickly issue a “stop” command, while the multi-agent system re-plans.

Interactive Service Robot

Consider a service robot assisting customers in a retail store.

- ROS2’s Role: ROS2 would manage communication for facial recognition data, voice synthesis, and movement commands to follow customers or navigate aisles.

- OM1’s Role: OM1 would process customer queries using ASR (“Where are the sneakers?”). Its VLMs would analyze customer facial expressions and gestures to infer mood or intent. The Data Fuser would combine these: “Customer appears frustrated, asking for sneakers, currently standing near apparel.” The Multi-LLM Planning layer would then access product inventory information, determine the precise location of sneakers, and generate a polite, helpful response for the Speech HAL: “Certainly! The sneakers are in aisle 7, just past the fitting rooms. Would you like me to lead the way?” The planning layer would also consider the customer’s apparent frustration and prioritize a quick, clear response. It could even propose upselling opportunities based on stored knowledge of customer preferences (e.g., “We also have a new line of running shoes you might be interested in”).

Advanced Manufacturing Robot

Picture a robotic arm on an assembly line performing intricate tasks.

- ROS2’s Role: ROS2 would handle the high-frequency communication of joint angles, force sensor data during manipulation, and camera feeds for precise part placement.

- OM1’s Role: OM1 would continuously monitor the assembly process through vision and tactile sensors. Its VLMs would detect anomalies in part alignment or identify missing components. The Data Fuser would integrate these observations, potentially flagging a deviation from the expected assembly sequence. The Multi-LLM Planning layer would then diagnose the issue (e.g., “Part A is misaligned by 2mm, requiring corrective action”) and generate a series of precise robotic movements through the HAL to rectify the misalignment, potentially learning from similar past errors stored in its memory. This allows for intelligent error recovery and quality control beyond pre-programmed routines.

In each of these scenarios, OM1 elevates the robot’s capabilities from merely executing pre-programmed routines to understanding, reasoning, and adapting to dynamic, real-world situations.

The Future of Robotics: OM1 and Beyond

The introduction of OM1 marks a significant milestone in the evolution of robotics. It formalizes a much-needed architectural pattern, providing a robust and flexible framework for integrating advanced AI into robotic systems. The high number of forks and ongoing customization efforts indicate that OM1 is not just a concept but a practical, impactful solution that is resonating deeply within the robotics development community.

As AI models continue to advance, especially in areas like multimodal understanding, generative AI, and multi-agent systems, a dedicated reasoning layer like OM1 will become indispensable. It paves the way for robots that can not only move and manipulate but also truly understand their environment, engage in natural human-robot interaction, learn from experience, and autonomously make complex decisions in dynamic and unpredictable settings.

The question “Where in your stack do you put the ‘what should the robot do now’ logic?” now has a compelling answer: it belongs in OM1, a dedicated, modular AI runtime that empowers robots to not just communicate data, but to comprehend, reason, and act intelligently in the real world. This architectural shift will unlock unprecedented levels of autonomy and capability in the next generation of robotic applications, driving innovation across industries and transforming how we interact with intelligent machines.
