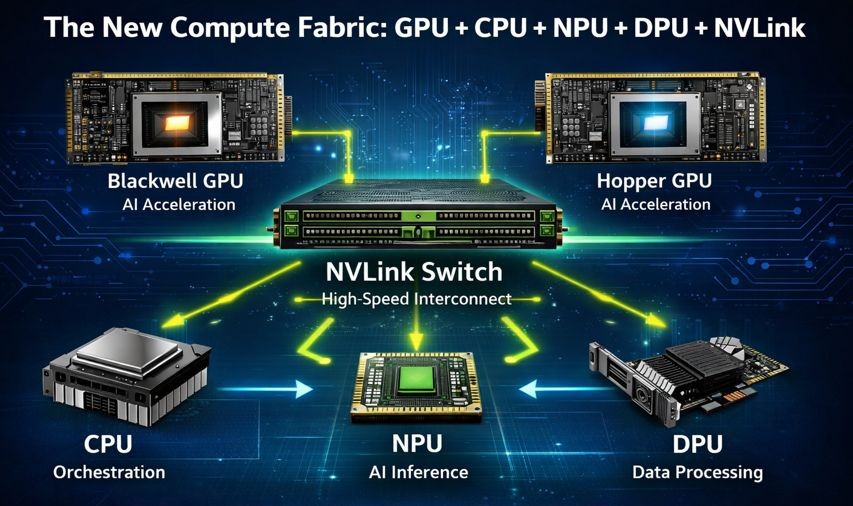

The landscape of modern computing is undergoing a profound transformation. What was once a relatively uniform field dominated by the Central Processing Unit (CPU) has evolved into a sophisticated, heterogeneous ecosystem. Today, Artificial Intelligence (AI) workloads, in particular, demand an unprecedented level of computational power and specialized processing capabilities. This demand has given rise to a new compute fabric, where various processors, each with its unique strengths, collaborate seamlessly to unlock intelligence at scale. This article explores the critical components of this new fabric—GPUs, CPUs, NPUs, DPUs, and NVLink Switches—and examines how their synergistic interaction is shaping the future of AI, High-Performance Computing (HPC), and enterprise workloads.

The Evolution of Compute: From Monolithic to Heterogeneous Architectures

For decades, the CPU reigned supreme as the workhorse of nearly all computing tasks. Its versatility and ability to handle a broad range of instructions made it indispensable. However, as computational demands escalated, especially with the advent of AI and big data, the limitations of a CPU-centric model became apparent. A CPU devotes much of its silicon to a relatively small number of powerful cores tuned for fast, largely sequential execution, so it runs out of throughput on the massively parallel arithmetic that dominates machine learning. This spurred the development of specialized accelerators designed to offload specific types of computation and speed them up dramatically.

The Rise of Specialized Processors

The journey towards a heterogeneous compute fabric began with the recognition that different types of computational problems benefit from different architectural approaches. While the CPU excels at complex control flows and general-purpose tasks, its efficiency diminishes when faced with highly parallel, computationally intensive operations. This realization led to the emergence of dedicated accelerators, each engineered to address specific computational bottlenecks. The continued evolution of these specialized processors has culminated in the sophisticated compute fabrics we see today, capable of handling the most demanding AI workloads.

Understanding the Heterogeneous Fabric

A heterogeneous compute fabric is an architecture where multiple types of processors, each optimized for different kinds of computations, work together. Instead of relying on a single, general-purpose chip, this approach leverages the strengths of diverse processing units to achieve optimal performance and efficiency for complex workloads. This paradigm shift is particularly crucial for AI, where training large models and performing inference at scale require a delicate balance of massive parallelism, low-latency data movement, and efficient resource orchestration.

The Powerhouse: GPU (Blackwell / Hopper)

At the heart of the new compute fabric, especially for AI, lies the Graphics Processing Unit (GPU). Initially designed for rendering images and video in gaming, GPUs have evolved into powerful parallel processing machines, making them ideally suited for the mathematical operations inherent in AI algorithms.

The Architecture of AI Acceleration

Modern data center GPUs such as NVIDIA’s Blackwell and Hopper architectures are designed with AI acceleration as a primary goal. They contain thousands of simpler cores that can perform many calculations simultaneously. This massive parallelism is precisely what AI training and inference demand, particularly for large neural networks and generative AI models.

Key Characteristics of GPUs for AI

- Massive Parallelism: GPUs excel at performing many similar computations concurrently. This is fundamental to matrix multiplications and other linear algebra operations that underpin deep learning.

- High Memory Bandwidth: AI models often require access to vast amounts of data. GPUs are equipped with high-bandwidth memory (HBM) that can quickly feed data to their processing cores, preventing bottlenecks.

- Specialized AI Cores: Modern GPUs include dedicated Tensor Cores (in NVIDIA’s case) or similar units that are specifically designed to accelerate mixed-precision matrix operations, further boosting performance for AI workloads.

- Scalability: GPUs can be interconnected to form powerful clusters, allowing for the training of even the largest and most complex AI models.
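The first and third points above can be illustrated together. In a matrix product, every output element is an independent dot product, which is why the work spreads so naturally across thousands of GPU cores; and Tensor Cores commonly take low-precision inputs (such as FP16) while accumulating results in higher precision. The pure-Python sketch below models only that arithmetic pattern: it rounds inputs to FP16 using the standard `struct` module's half-precision `'e'` format and accumulates in a Python float (64-bit here, standing in for the wider accumulator). The function names are hypothetical, and nothing here actually runs on a GPU.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a number to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def dot_fp16_inputs(row, col):
    """One output element: FP16-rounded inputs, wide (Python float) accumulator."""
    acc = 0.0
    for a, b in zip(row, col):
        acc += to_fp16(a) * to_fp16(b)
    return acc

def matmul_mixed(A, B):
    """C = A @ B, built from independent (i, j) dot products.

    No (i, j) task reads another task's result, so on a GPU each one
    could be assigned to a different core (or tile) and run concurrently.
    """
    cols = list(zip(*B))  # columns of B
    tasks = [(i, j) for i in range(len(A)) for j in range(len(cols))]
    results = {(i, j): dot_fp16_inputs(A[i], cols[j]) for i, j in tasks}
    return [[results[(i, j)] for j in range(len(cols))] for i in range(len(A))]

print(matmul_mixed([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19.0, 22.0], [43.0, 50.0]]
print(to_fp16(0.1))  # 0.0999755859375 (FP16 cannot represent 0.1 exactly)
```

Small integers are exact in FP16, so the first product is unaffected; the second line shows the rounding that low-precision inputs introduce, which is why the accumulator is kept wider.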

GPU’s Role in Training and Inference

In the context of AI, GPUs play a dual role:

AI Training

Training AI models, especially large language models (LLMs) and generative AI, involves feeding vast datasets to a neural network, adjusting millions or even billions of parameters, and iteratively refining the model’s performance. This process is incredibly computationally intensive and requires immense parallel processing power, which GPUs provide. A single training run for a large AI model can take days or even weeks on a powerful GPU cluster. The advancements in GPU technology, such as the Blackwell and Hopper architectures, continually push the boundaries of what’s possible in AI model development.
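The "adjust parameters, then refine iteratively" loop described above is, at its core, gradient descent. The sketch below fits a hypothetical two-parameter model (y = w·x + b) to toy data in plain Python. A real LLM training run repeats the same forward pass, gradient computation, and update over billions of parameters and far larger datasets, which is why it is spread across GPU clusters. The learning rate and epoch count are illustrative choices, not recommendations.

```python
def train_linear(xs, ys, lr=0.05, epochs=2000):
    """Fit y ≈ w * x + b by full-batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: predictions with the current parameters.
        preds = [w * x + b for x in xs]
        # Backward pass: gradients of MSE = mean((pred - y)^2).
        grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
        # Update: step each parameter against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Toy data generated from y = 2x + 1.
w, b = train_linear([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])
print(round(w, 3), round(b, 3))  # 2.0 1.0
```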

AI Inference

Once an AI model is trained, it needs to be deployed to make predictions or generate outputs. This process is known as inference. While generally less computationally demanding than training, inference at scale still requires significant processing power, especially for real-time applications. GPUs run inference efficiently, so deployed models can respond quickly. Their ability to handle multiple inference requests concurrently makes them well suited to cloud-based AI services and edge AI applications.
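Serving systems commonly exploit that concurrency through batching: requests that arrive close together are grouped so the model runs once per batch, turning many matrix-vector products into one matrix-matrix product that keeps the GPU busy. The sketch below shows only the grouping logic, with a hypothetical `max_batch` limit and a toy linear "model". Production servers also cap how long a request may wait for its batch to fill, which is omitted here.

```python
from typing import List

Vector = List[float]

def model_forward(weights: List[Vector], batch: List[Vector]) -> List[Vector]:
    """Hypothetical toy model: one linear layer applied to a whole batch.

    On a GPU this would be a single matrix-matrix multiply over the batch.
    """
    return [[sum(w * x for w, x in zip(row, item)) for row in weights] for item in batch]

def serve(weights: List[Vector], requests: List[Vector], max_batch: int = 4):
    """Group queued requests into batches of at most max_batch and run each batch once.

    Returns (outputs in request order, number of model invocations).
    """
    outputs: List[Vector] = []
    calls = 0
    for start in range(0, len(requests), max_batch):
        batch = requests[start:start + max_batch]
        outputs.extend(model_forward(weights, batch))
        calls += 1
    return outputs, calls

weights = [[1.0, 0.0], [0.5, 0.5]]               # 2 outputs, 2 inputs
requests = [[float(i), 1.0] for i in range(10)]  # 10 concurrent requests
outputs, calls = serve(weights, requests, max_batch=4)
print(calls)       # 3 model invocations instead of 10
print(outputs[3])  # [3.0, 2.0]
```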

The Orchestrator: CPU

While GPUs handle the heavy lifting of AI arithmetic, the Central Processing Unit (CPU) remains an indispensable component of the compute fabric. It acts as the orchestrator, managing overall system operations, handling general-purpose tasks, and ensuring smooth communication between various specialized processors.

The General-Purpose Workhorse

The CPU is designed for flexibility and sequential processing. It excels at a wide variety of tasks that don’t benefit from massive parallelism, including operating system functions, application logic, and database management. In a heterogeneous environment, the CPU takes on crucial roles that ensure the efficient operation of the entire system.

Key Functions of the CPU in the New Compute Fabric

- General-Purpose Compute: The CPU handles all the tasks that aren’t specifically optimized for other processors. This includes running the operating system, managing system resources, and executing general application code.

- Orchestration: The CPU is responsible for orchestrating the overall workload, distributing tasks to the appropriate specialized processors (GPUs, NPUs, DPUs), and managing their execution. It ensures that data flows efficiently between components and that resources are allocated optimally.

- Control Plane Tasks: In data centers, CPUs are critical for control plane operations, managing network configurations, security policies, and storage access. They provide the foundational intelligence for the entire infrastructure.

- Data Pre- and Post-Processing: While DPUs handle large-scale data movement, CPUs often perform preliminary data processing, formatting, and post-processing steps before data is fed to a GPU or NPU for AI computation or after results are generated. This ensures data is in the correct format and ready for the next stage of processing.

- Managing I/O Operations: CPUs manage input/output (I/O) operations, interacting with storage devices, network interfaces, and other peripherals. This is crucial for retrieving data for AI models and storing their outputs.
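The orchestration and pre/post-processing roles above can be sketched as a small dispatch loop. In this hypothetical example the CPU normalizes raw input, looks up which processor type should handle each task, hands the task off, and formats the result. The routing table, task kinds, and the Python functions standing in for GPU, NPU, and DPU work are all illustrative assumptions; a real system would make framework or driver calls at those points and would run the hand-offs asynchronously.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Task:
    kind: str         # hypothetical task kinds: "train", "infer", "transfer"
    raw: List[float]  # unprocessed input data

# Hypothetical routing table: which processor type handles which kind of task.
ROUTES: Dict[str, str] = {"train": "GPU", "infer": "NPU", "transfer": "DPU"}

# Stand-ins for accelerator work; real code would call a framework or driver here.
DEVICES: Dict[str, Callable[[List[float]], float]] = {
    "GPU": lambda xs: sum(x * x for x in xs),  # pretend "training" kernel
    "NPU": lambda xs: max(xs),                 # pretend "inference" kernel
    "DPU": lambda xs: float(len(xs)),          # pretend "transfer": items moved
}

def preprocess(raw: List[float]) -> List[float]:
    """CPU step: scale inputs into [0, 1] so every device receives one format."""
    lo, hi = min(raw), max(raw)
    span = (hi - lo) or 1.0  # avoid dividing by zero for constant input
    return [(x - lo) / span for x in raw]

def postprocess(device: str, value: float) -> str:
    """CPU step: turn a raw device result into a readable record."""
    return f"{device}: {value:.2f}"

def orchestrate(tasks: List[Task]) -> List[str]:
    results = []
    for task in tasks:
        device = ROUTES.get(task.kind, "CPU")  # unknown kinds stay on the CPU
        run = DEVICES.get(device, sum)         # CPU fallback: plain Python sum
        results.append(postprocess(device, run(preprocess(task.raw))))
    return results

print(orchestrate([Task("train", [2.0, 4.0, 6.0]),
                   Task("infer", [1.0, 3.0]),
                   Task("log", [5.0, 5.0])]))
# ['GPU: 1.25', 'NPU: 1.00', 'CPU: 0.00']
```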

Complementary, Not Competitive

It’s important to view the CPU not as a competitor to specialized accelerators but as a complementary component. Its strengths lie in its versatility and ability to manage complex workflows, making it the brain of the entire compute system. Without the CPU’s orchestrating capabilities, the specialized processors would operate in isolation, hindering the overall efficiency and intelligence of the data center.

The Inference Specialist: NPU (Neural Processing Unit)

As AI deployments grow, especially at the edge and for high-volume inference tasks, the need for even more specialized and power-efficient processing units has emerged. This is where the Neural Processing Unit (NPU) comes into play. NPUs are purpose-built accelerators designed for deep learning inference at scale, often with a focus on energy efficiency.

Designed for Deep Learning Inference

Unlike GPUs, which are powerful but can be power-intensive, NPUs are typically optimized for a narrower set of neural network operations and for low power draw. Their architecture prioritizes efficiency and throughput for inference tasks, making them ideal for scenarios where trained models need to be deployed and run with minimal latency and power usage.

Advantages of NPUs

- Power Efficiency: NPUs are designed to perform deep learning inference with significantly lower power consumption compared to general-purpose GPUs. This makes them suitable for edge devices, IoT applications, and large-scale data center inference where power expenditure is a major concern.

- High Throughput for Inference: While GPUs are excellent for both training and inference, NPUs are hyper-specialized for inference, often achieving higher inference throughput for specific model architectures at a given power budget.

- Low Latency: For real-time AI applications, low inference latency is crucial. NPUs are engineered to deliver rapid predictions, which is vital for use cases like autonomous vehicles, real-time analytics, and industrial automation.

- Smaller Footprint: Many NPUs are designed to be compact, enabling their integration into a wider range of devices, from smartphones and smart cameras to industrial sensors and embedded systems.

- Dedicated Hardware Accelerators: NPUs often incorporate dedicated hardware blocks for common deep learning operations, such as convolution, activation functions, and pooling, further enhancing their efficiency.
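The three operations named in the last point are simple to state, which is exactly why they map well onto fixed-function hardware. The pure-Python sketch below runs a 1-D convolution, a ReLU activation, and max pooling in sequence to show the math involved. An NPU would typically execute the equivalent 2-D operations in dedicated blocks, and may fuse them, rather than making separate passes like this. The function names and toy signal are hypothetical.

```python
def conv1d(signal, kernel):
    """'Valid' 1-D convolution as used in deep learning (cross-correlation: no kernel flip)."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

def relu(xs):
    """Activation: clamp negative values to zero."""
    return [max(0, x) for x in xs]

def max_pool1d(xs, size=2):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(xs[i:i + size]) for i in range(0, len(xs) - size + 1, size)]

signal = [0, 1, 3, 2, 5, 1, 4]
kernel = [1, -1]  # positive output where the signal drops from one sample to the next
features = max_pool1d(relu(conv1d(signal, kernel)))
print(features)  # [0, 1, 4]
```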

NPU Applications

NPUs are finding their way into a diverse array of applications:

- Edge AI: From smart cameras performing object detection to industrial sensors predicting equipment failures, NPUs bring AI capabilities directly to the data source, reducing reliance on cloud connectivity and minimizing latency.

- Smartphones and Consumer Devices: NPUs enable on-device AI features like facial recognition, natural language processing, and advanced computational photography without draining battery life.

- Data Center Inference: For massive-scale AI inference, such as serving millions of simultaneous user requests for recommendation engines or chat assistants, NPUs can provide a cost-effective and energy-efficient solution compared to continually running power-hungry GPUs.

- Robotics: In robotics, NPUs can process sensor data in real-time to enable navigation, object manipulation, and human-robot interaction.

The Synergy with GPUs and CPUs

While NPUs excel at inference, they often work in conjunction with GPUs and CPUs. GPUs might be used to train the models that NPUs then deploy. CPUs continue to handle the orchestration and management of NPU-powered inference pipelines, ensuring data integrity and overall system stability. The NPU fills a critical niche: running trained models efficiently at large scale.

The Data Mover: DPU (Data Processing Unit)

In a world increasingly driven by data, the efficient movement and processing of information are paramount. The Data Processing Unit (DPU) is a revolutionary processor specifically designed to offload networking, storage, and security tasks from CPUs and GPUs, thereby freeing these valuable resources to focus on their primary computational roles.

The Challenge of Data Movement

Modern data centers generate and consume exabytes of data. Moving this data efficiently between servers, storage systems, and accelerators is a significant challenge. Traditional architectures often burden CPUs with these data-centric tasks, diverting their cycles from more critical application processing. This impacts overall system performance, creates latency, and increases power consumption.

DPU Architecture and Capabilities

A DPU is a programmable processor that integrates a high-performance network interface with a system-on-a-chip (SoC) architecture, often including ARM cores, PCIe interfaces, and dedicated acceleration engines. Its core function is to accelerate data path services.

Key Roles of the DPU

- Offloading Networking: DPUs handle network virtualization, routing, and packet processing directly on the network interface card. This significantly reduces the overhead on the primary CPU, allowing it to focus on application logic.

- Accelerating Storage: DPUs can manage storage virtualization, encryption, and data compression at wire speed. This improves storage efficiency and performance while minimizing the burden on host CPUs.

- Enhancing Security: DPUs provide hardware-accelerated security features like firewalls, intrusion detection, and encryption/decryption, creating a secure boundary around applications and data. This allows for pervasive security without compromising performance.

- Zero-Trust Security: By enforcing security policy at each server’s network interface, DPUs help enable a zero-trust security model, where every connection is authenticated and authorized regardless of its origin.

- Providing a “Data Center on a Chip”: In essence, a DPU creates a separate, high-performance execution environment for data infrastructure functions, isolating them from the application workload. This enhances reliability, performance, and security.
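To make the data-path idea concrete, here is a software model of two of the services listed above: compressing a payload and attaching an authentication tag so the receiver can reject anything that was altered or sent without the shared key, the kind of check a zero-trust model relies on. It uses Python's standard `zlib`, `hmac`, and `hashlib` modules purely to show the logic; a DPU performs this kind of work in hardware engines at line rate. Encryption is left out because the Python standard library has no cipher, and the key handling is a hypothetical placeholder.

```python
import hashlib
import hmac
import zlib

TAG_LEN = 32  # HMAC-SHA256 tag size in bytes

def seal(key: bytes, payload: bytes) -> bytes:
    """Compress the payload, then append an HMAC-SHA256 tag over the compressed bytes."""
    body = zlib.compress(payload)
    tag = hmac.new(key, body, hashlib.sha256).digest()
    return body + tag

def open_sealed(key: bytes, packet: bytes) -> bytes:
    """Verify the tag before doing any other work; reject the packet on mismatch."""
    body, tag = packet[:-TAG_LEN], packet[-TAG_LEN:]
    expected = hmac.new(key, body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        raise ValueError("packet failed authentication")
    return zlib.decompress(body)

key = b"hypothetical-shared-key"  # placeholder; real keys are provisioned and rotated securely
packet = seal(key, b"gradient shard 17" * 10)
assert open_sealed(key, packet) == b"gradient shard 17" * 10

tampered = bytes([packet[0] ^ 0x01]) + packet[1:]  # flip one bit in transit
try:
    open_sealed(key, tampered)
except ValueError as err:
    print(err)  # packet failed authentication
```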

Why DPUs are Pivotal for AI and HPC

Freeing Up CPU and GPU Cycles

In AI and HPC environments, every CPU and GPU cycle is precious. By offloading networking, storage, and security tasks to DPUs, these expensive computational resources are liberated to focus entirely on AI training, inference, or scientific simulations. This translates directly to faster computation, greater throughput, and more efficient utilization of expensive hardware.

Enabling Efficient Data Movement

AI models often require massive datasets. DPUs ensure that this data can be moved efficiently and securely to and from GPUs and NPUs without introducing bottlenecks. Their high-bandwidth capabilities and specialized engines for data processing are crucial for maintaining the flow of information that fuels AI.

Improving Scalability and Resilience

By distributing infrastructure tasks to DPUs, the overall system becomes more scalable and resilient. It also reduces the “noisy neighbor” problem, where one workload’s infrastructure demands degrade the performance of others. This isolation keeps performance predictable across diverse workloads.

The Foundation for Future Data Centers

The DPU is rapidly becoming a foundational component of modern, software-defined data centers. It provides the intelligent infrastructure necessary to support the most demanding workloads, paving the way for more agile, secure, and performant computing environments.

The AI Fabric Stitcher: NVLink Switch

Even the most powerful GPUs cannot operate in isolation when tackling the largest AI models. To achieve truly unprecedented levels of computational power, multiple GPUs need to communicate and collaborate at extremely high speeds. This is where the NVLink Switch plays a critical role: it links GPUs over NVLink, a high-bandwidth, low-latency interconnect, stitching them together into a unified and intelligent AI fabric.

The Need for High-Speed Interconnects

Traditional interconnects like PCIe were not designed for the extreme demands of inter-GPU communication in large-scale AI training. Moving massive amounts of data between GPUs over standard networking or PCIe links introduces significant latency and bandwidth bottlenecks, severely limiting the scalability of AI workloads. NVLink was developed to overcome these limitations.

NVLink: A Closer Look

NVLink is a high-speed, point-to-point interconnect that enables GPUs to communicate with each other much faster than through traditional PCIe buses. The NVLink Switch takes this concept further, allowing multiple GPUs to be interconnected in a flexible and scalable topology, forming a powerful collective.

Key Benefits of NVLink Switches

- High Bandwidth: NVLink provides significantly higher bandwidth per link compared to PCIe, enabling much faster data exchange between GPUs. This is crucial for training large AI models that require frequent synchronization and data sharing among many GPUs.

- Low Latency: Beyond raw bandwidth, NVLink is designed for extremely low latency communication. This minimizes the time GPUs spend waiting for data from other GPUs, leading to more efficient utilization of computational resources and faster training times.

- Scalability: NVLink Switches allow hundreds of GPUs to be connected into a single NVLink domain that behaves like one massive AI supercomputer; larger clusters join multiple such domains over networks such as InfiniBand or Ethernet. Within a domain, this forms a “GPU fabric” where all GPUs can access each other’s memory and data at high speed, effectively creating a single, distributed memory space for AI models.

- Unified Memory: NVLink supports technologies that enable GPUs to directly access each other’s memory, blurring the lines between individual GPU memory spaces. This simplifies programming and allows for the development of larger, more complex models that would otherwise be constrained by the memory limits of a single GPU.

- Dedicated AI Fabric: The NVLink Switch creates a dedicated, high-performance fabric for GPU-to-GPU traffic. Because that traffic does not share links with general-purpose network traffic, it moves with minimal interference, providing predictable and consistent performance.
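The interplay between the bandwidth and latency points above is often captured by a first-order model: moving a message of n bytes over a link with startup latency α and bandwidth β takes roughly t = α + n/β. The sketch below evaluates that model for two links. The α and β values are placeholders chosen for illustration, not vendor specifications for NVLink or PCIe; substitute published figures for your hardware.

```python
def transfer_time(n_bytes: float, latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """First-order (alpha-beta) model: fixed startup latency plus size / bandwidth."""
    return latency_s + n_bytes / bandwidth_bytes_per_s

# Placeholder link parameters (seconds, bytes per second), NOT vendor specifications.
LINKS = {
    "slower link": {"latency_s": 2e-6, "bandwidth_bytes_per_s": 50e9},
    "faster link": {"latency_s": 1e-6, "bandwidth_bytes_per_s": 500e9},
}

for size in (4e3, 1e9):  # a 4 KB message vs. a 1 GB tensor
    for name, params in LINKS.items():
        t = transfer_time(size, **params)
        print(f"{size:>8.0e} B over {name}: {t * 1e6:10.2f} us")
```

With these placeholder numbers, the 4 KB message is latency-dominated and the faster link wins by only about 2x, while the 1 GB transfer is bandwidth-dominated and the gap approaches the full 10x bandwidth ratio. That is why both low latency and high bandwidth matter for the frequent synchronization described above.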

How NVLink Switches Enable Massive AI Models

Training state-of-the-art large language models or generative AI models often requires distributing the model and its data across a vast number of GPUs. The NVLink Switch is the backbone that makes this possible. It allows these distributed GPUs to act as a single, cohesive unit, sharing information and collaborating on computational tasks with the speed and efficiency demanded by cutting-edge AI.
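One common way to distribute the model itself is tensor parallelism: a layer's weight matrix is split into column shards, each GPU multiplies the same input by its own shard, and the partial outputs are gathered back together. That gather is the kind of frequent exchange a fast GPU-to-GPU fabric speeds up. The pure-Python sketch below simulates the split with lists; the shard count and helper names are hypothetical, and real frameworks perform the gather with collective-communication libraries over the interconnect.

```python
def matmul(X, W):
    """Plain matrix product X @ W (lists of rows)."""
    cols = list(zip(*W))
    return [[sum(x * w for x, w in zip(row, col)) for col in cols] for row in X]

def split_columns(W, shards):
    """Split W's columns into `shards` contiguous groups, one per simulated GPU."""
    n = len(W[0])
    bounds = [(k * n // shards, (k + 1) * n // shards) for k in range(shards)]
    return [[row[lo:hi] for row in W] for lo, hi in bounds]

def tensor_parallel_matmul(X, W, shards):
    # Each simulated GPU holds only its shard of W and computes one slice of the output.
    partials = [matmul(X, W_k) for W_k in split_columns(W, shards)]
    # "All-gather": concatenate each row's slices back into full output rows.
    return [sum((p[i] for p in partials), []) for i in range(len(X))]

X = [[1, 2], [3, 4]]
W = [[1, 0, 2, 1], [0, 1, 1, 3]]
assert tensor_parallel_matmul(X, W, shards=2) == matmul(X, W)
print(tensor_parallel_matmul(X, W, shards=2))  # [[1, 2, 4, 7], [3, 4, 10, 15]]
```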

NVLink’s Impact on AI Development

The availability of high-speed interconnects like NVLink has been a game-changer for AI research and development. It has enabled researchers and engineers to build and train models with an unprecedented number of parameters, leading to revolutionary advancements in natural language processing, computer vision, and scientific discovery. Without NVLink, the practical scalability required for these monumental AI challenges would be significantly hampered.

The Synergy: How Each Component Powers the AI Fabric

The true power of the new compute fabric lies not in the individual capabilities of each processor, but in their sophisticated interplay. This synergistic relationship creates a robust, scalable, and intelligent infrastructure capable of pushing the boundaries of what AI can achieve.

Scalability Through Interconnection

The ability to scale AI workloads is paramount. NVLink Switches are the conduits that connect large numbers of GPUs into a seamless, highly efficient AI environment. This interconnectedness allows for:

- Distributed Training: Breaking down massive AI training tasks into smaller, manageable chunks that can be processed in parallel across numerous GPUs. The NVLink Switch ensures rapid data synchronization between these GPUs, keeping them working in harmony.

- Cluster-wide Resource Sharing: Allowing dynamic allocation and sharing of GPU resources across different AI jobs and users, maximizing utilization and throughput within the data center.

- Future-Proofing: Providing an architectural foundation that can accommodate increasingly larger AI models and more complex computational demands as the field of AI evolves.
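The "rapid data synchronization" in distributed training is typically an all-reduce: every GPU contributes its local gradients, and every GPU ends up with their sum. The article does not name an algorithm; ring all-reduce is one widely used option, and the pure-Python simulation below walks through its two phases (reduce-scatter, then all-gather) with lists standing in for GPU buffers. In this scheme each worker sends about 2(N − 1)/N times its buffer size in total, almost independent of N, which is why per-link bandwidth matters so much. The names are hypothetical; libraries such as NCCL implement ring and other algorithms on real hardware.

```python
from typing import List

def ring_allreduce(buffers: List[List[float]]) -> List[List[float]]:
    """Simulate ring all-reduce: every worker ends with the element-wise sum.

    buffers[i] is worker i's local vector; all vectors have the same length.
    """
    n = len(buffers)
    length = len(buffers[0])
    # Split each vector into n contiguous chunks (some may be empty if length < n).
    bounds = [(k * length // n, (k + 1) * length // n) for k in range(n)]
    data = [list(b) for b in buffers]  # copy so the caller's lists are untouched

    def step(chunk_for, combine):
        # Snapshot every worker's outgoing chunk first, so all sends in a step
        # behave as if they happened simultaneously around the ring.
        sends = [(chunk_for(i), data[i][slice(*bounds[chunk_for(i)])]) for i in range(n)]
        for i, (c, chunk) in enumerate(sends):
            dst = (i + 1) % n  # each worker only talks to its ring neighbor
            lo, _ = bounds[c]
            for j, v in enumerate(chunk):
                data[dst][lo + j] = combine(data[dst][lo + j], v)

    # Phase 1, reduce-scatter: after n-1 steps worker i holds the full sum of chunk (i+1) % n.
    for s in range(n - 1):
        step(lambda i: (i - s) % n, lambda old, new: old + new)
    # Phase 2, all-gather: circulate the finished chunks until every worker has all of them.
    for s in range(n - 1):
        step(lambda i: (i + 1 - s) % n, lambda old, new: new)
    return data

grads = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]  # one row per simulated GPU
print(ring_allreduce(grads))
# [[12.0, 15.0, 18.0], [12.0, 15.0, 18.0], [12.0, 15.0, 18.0]]
```

Dividing the result by the number of workers gives the averaged gradient that data-parallel training applies on every GPU.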

Efficiency Through Specialization

The heterogeneous approach optimizes efficiency by assigning tasks to the processors best suited for them:

- DPUs for Data Movement: By offloading networking, storage, and security responsibilities from CPUs and GPUs, DPUs ensure that the primary computational engines are not burdened with data infrastructure tasks. This lets GPUs dedicate more of their capacity to AI calculations and CPUs focus on orchestration.

- NPUs for Inference: For deployed AI models, NPUs provide a highly efficient and power-optimized solution for inference at scale. This allows for real-time AI applications without the power overhead of general-purpose GPUs, especially in edge computing scenarios.

- CPUs for Orchestration: The CPU, acting as the central command, manages the distribution of tasks, monitors system health, and ensures smooth operation across the diverse array of specialized processors. It handles the complex logic that binds the fabric together.

Predictable Performance Through Balance and Resilience

A well-architected heterogeneous compute fabric ensures predictable performance for a wide array of workloads:

- Resource Isolation: By dedicating specific processors to particular types of tasks, the fabric minimizes contention for resources. For example, DPU-handled network traffic is isolated from CPU-bound application processes, ensuring more stable performance for both.

- Workload Optimization: Dynamically routing each workload (AI, HPC, or traditional enterprise software), and each stage within it, to the most appropriate processor type means that every part of a complex application receives optimal processing.

- Resilience and Reliability: Distributing tasks across multiple specialized units can enhance the overall resilience of the system. If one component faces a temporary overload or issue, the intelligent orchestration by the CPU can help reallocate resources or manage the impact. This layered approach contributes to higher uptime and more consistent performance for critical applications.

- Cost-Effectiveness: While initial investment in a heterogeneous fabric might seem higher, the long-term efficiency gains, reduced power consumption for specific tasks (e.g., NPU for inference), and optimized utilization of expensive GPU resources often lead to a lower total cost of ownership.

The Impact on Modern Data Centers and Enterprise Workloads

The emergence of this new compute fabric is revolutionizing modern data centers and having a profound impact across various industries.

Transforming AI Development and Deployment

The combined power of GPUs, NPUs, DPUs, and NVLink Switches is accelerating every stage of the AI lifecycle:

- Faster Training: Large-scale GPU clusters interconnected by NVLink allow for the rapid training of increasingly complex AI models, leading to quicker innovation in fields like drug discovery, material science, and personalized medicine.

- Efficient Inference at Scale: NPUs and DPUs enable the deployment of AI models in production with high efficiency and low latency, powering real-time analytics, autonomous systems, and highly responsive AI services.

- New AI Capabilities: The sheer computational power and data handling capabilities unlock the potential for entirely new AI applications that were previously infeasible due to computational constraints.

Powering High-Performance Computing (HPC)

While AI is a primary driver, the heterogeneous fabric also significantly benefits traditional HPC workloads:

- Scientific Simulation: Complex simulations in physics, chemistry, and climate modeling leverage the parallel processing power of GPUs, with DPUs ensuring rapid data handling and CPUs orchestrating the overall simulation.

- Big Data Analytics: Processing and analyzing massive datasets benefits from the combined strengths of DPUs for data ingress/egress, GPUs for analytical computations, and CPUs for managing the data pipelines.

Enhancing Enterprise Infrastructure

Beyond AI and HPC, enterprises are also reaping the benefits:

- Software-Defined Data Centers (SDDC): DPUs are foundational to SDDCs, enabling true software-defined networking, storage, and security by offloading these functions from host CPUs.

- Cloud Computing: Cloud providers are rapidly adopting these heterogeneous architectures to offer highly scalable and efficient AI and HPC services to their customers. The modularity and specialization allow for flexible resource allocation and optimized cost structures.

- Cybersecurity: DPUs with their hardware-accelerated security features provide a robust defense against cyber threats, offering a secure foundation for critical enterprise applications and data.

- Edge Computing: The combination of power-efficient NPUs and DPUs is crucial for deploying intelligent applications at the network edge, bringing compute closer to data sources and enabling new use cases in manufacturing, retail, and smart cities.

Overcoming Computational Bottlenecks

Historically, computational bottlenecks have limited the scope and ambition of many projects. The new compute fabric systematically addresses these bottlenecks:

- Compute Bottlenecks: Addressed by the massive parallel processing capabilities of GPUs for training and NPUs for efficient inference.

- Data Movement Bottlenecks: Alleviated by DPUs, which efficiently handle network, storage, and security tasks, ensuring data flows freely and without hindering other processors.

- Inter-Processor Communication Bottlenecks: Mitigated by NVLink Switches, providing high-bandwidth, low-latency communication between GPUs.

- Orchestration and Management Bottlenecks: Handled by the CPU, ensuring that all specialized components work together harmoniously.

This holistic approach to system design is enabling organizations to tackle problems of unprecedented scale and complexity.

The Future is Now: Intelligence at Scale

The concept of a heterogeneous compute fabric is not merely a theoretical construct; it is the reality of modern data centers and the driving force behind the rapid advancements in Artificial Intelligence. The synergy of GPU, CPU, NPU, DPU, and NVLink Switches is delivering intelligence at a scale previously unimaginable.

The Role of Software

While hardware innovation is foundational, it’s crucial to acknowledge the equally vital role of software. Sophisticated software stacks, including AI frameworks, operating systems, virtualization platforms, and orchestration tools, are required to effectively manage and program this complex heterogeneous environment. These software layers abstract away the underlying hardware complexities, allowing developers to leverage the full power of the compute fabric with greater ease.

Continuous Innovation

The journey towards more powerful and efficient compute fabrics is ongoing. We can expect continuous innovation in each of these component areas:

- GPUs: Further increases in processing power, memory bandwidth, and specialized AI cores.

- CPUs: Continued improvements in core count, clock speeds, and instruction set architectures, alongside deeper integration with accelerators.

- NPUs: Greater specialization, energy efficiency, and broader adoption across various edge and data center inference platforms.

- DPUs: Enhanced programmability, more comprehensive offload capabilities, and tighter integration with security and management frameworks.

- Interconnects: Evolution of NVLink and other high-speed interconnect technologies to support even greater scalability and lower latency, enabling the construction of exascale AI supercomputers.

Embracing the Heterogeneous Future

For businesses and researchers seeking to leverage the full potential of AI and advanced computing, understanding and embracing this heterogeneous model is no longer optional. It is a prerequisite for innovation, competitive advantage, and tackling the most pressing challenges of our time.

The future of compute isn’t defined by a single chip; it’s defined by the intelligent symphony of diverse processors working in concert. The GPU brings the raw power for acceleration, the CPU orchestrates the entire operation, the NPU runs inference efficiently, the DPU masterfully moves and secures data, and the NVLink Switch stitches it all together into a unified and formidable AI fabric. This collective force is what truly delivers intelligence at scale, reshaping industries and transforming our world.

To learn more about how to harness the power of this new compute fabric for your specific AI, HPC, or enterprise needs, or to explore tailored solutions for optimizing your data center infrastructure, reach out to our experts.

Contact us today to discover how a specialized compute fabric can revolutionize your operations and drive your success. Email us at info@iotworlds.com.