Industrial automation is undergoing a profound transformation. The factory floor, once governed by fixed rules and human observation, is rapidly evolving into a dynamic, intelligent ecosystem powered by Artificial Intelligence and Machine Learning. This shift is particularly evident in the realm of machine vision, where AI/ML-driven systems are replacing traditional approaches to deliver unprecedented levels of precision, efficiency, and safety.

The integration of AI into machine vision unlocks capabilities far beyond what was previously possible. High-precision checks that detect microscopic defects, intricate robotic coordination for complex assembly tasks, and enhanced safety protocols that anticipate and prevent accidents are now within reach. However, this advancement is not without its challenges. The massive volumes of data generated by these advanced vision systems can overwhelm standard production networks, demanding a specialized network design featuring high-bandwidth connectivity, precision synchronization (PTP), and robust Power-over-Ethernet (PoE) solutions. This article delves into the intricacies of optimizing AI-driven machine vision in industrial automation, exploring its business impact, the foundational pillars of network design, various deployment models, and the critical importance of security and visibility.

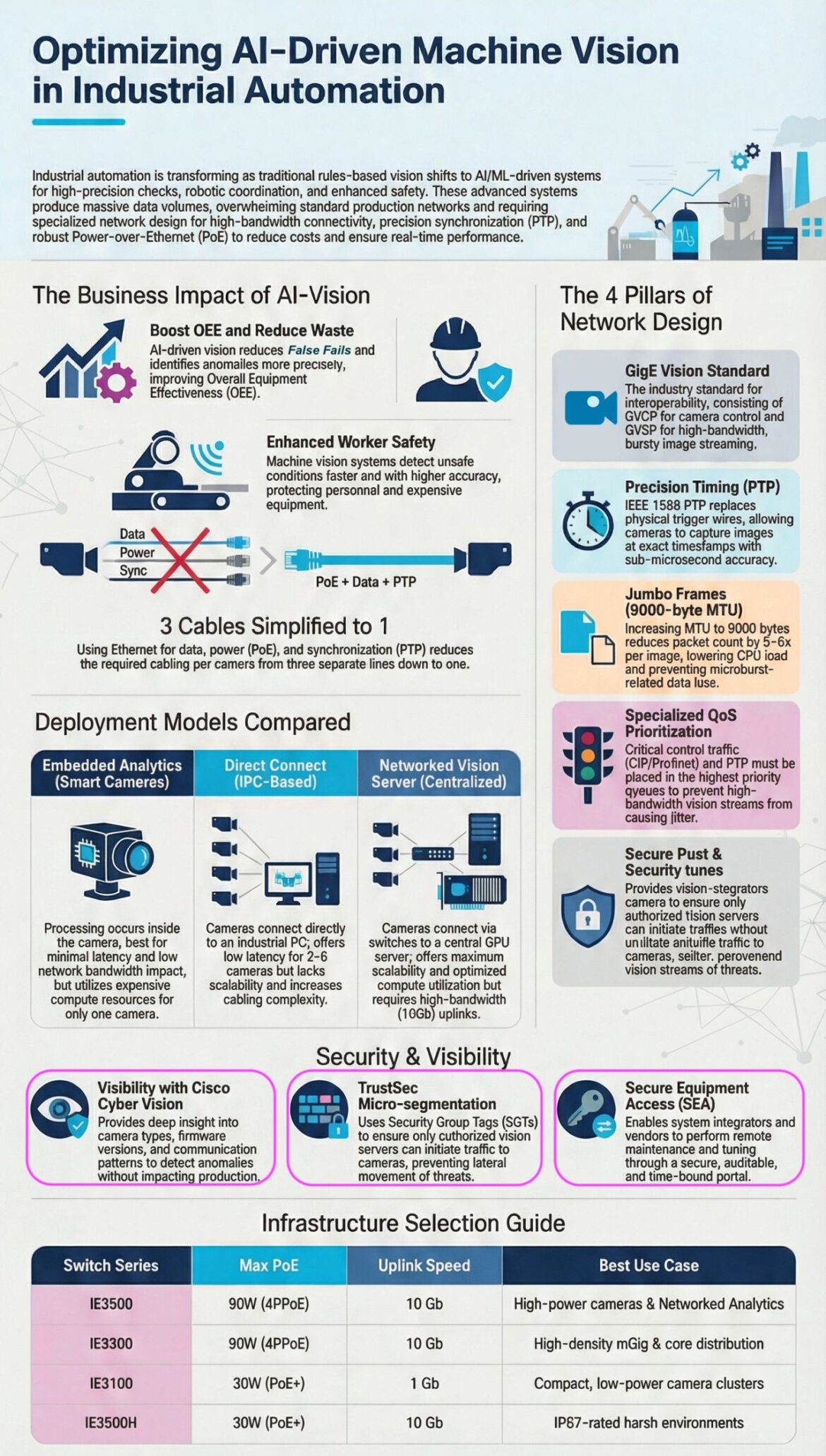

The Business Impact of AI-Vision

The deployment of AI-driven machine vision in industrial settings is not merely a technological upgrade but a strategic business imperative. It offers tangible benefits that directly impact an organization’s bottom line and operational excellence. At its core, AI-vision empowers industries to achieve higher levels of efficiency, reduce waste, and create safer working environments. For example, Physical AI systems, which rely heavily on machine vision for perception, automate tasks in physical environments while meeting the strict latency and safety constraints that industrial operations demand.

Boosting Overall Equipment Effectiveness (OEE) and Reducing Waste

One of the most significant advantages of AI-driven vision is its ability to dramatically improve Overall Equipment Effectiveness (OEE) while simultaneously minimizing waste. Traditional machine vision systems often suffer from “false fails,” where products are incorrectly flagged as defective, leading to unnecessary scrap and rework. Conversely, they can also miss genuine defects, resulting in costly product recalls or customer dissatisfaction.

AI-driven vision systems overcome these limitations by learning from vast datasets of images, enabling them to identify anomalies with far greater precision. Machine learning models, when fed with high-frequency data from IIoT sensors, become adept at detecting subtle precursors to failure that would be invisible to human eyes or static thresholds, allowing for a shift from predictive to prescriptive maintenance. This enhanced accuracy means:

- Reduced False Fails: AI algorithms can differentiate between minor, non-critical variations and actual defects, significantly decreasing the number of perfectly good products discarded due to erroneous inspections. This directly translates to less material waste and improved resource utilization.

- Precise Anomaly Detection: The ability of AI to learn intricate patterns allows for the detection of nascent issues before they escalate into major problems. This could be a hairline crack on a component, a slight misalignment in an assembly, or a subtle deviation in a manufacturing process. By catching these anomalies early, manufacturers can intervene proactively, preventing costly breakdowns and extensive damage to equipment or finished products.

- Optimized Production Processes: With real-time, accurate defect detection, AI-vision provides invaluable feedback for process optimization. Engineers can pinpoint the root causes of recurring defects and make data-driven adjustments to machinery, materials, or methodologies. This continuous improvement loop fosters a more robust and efficient production line, directly contributing to a higher OEE score.

The financial implications of these improvements are substantial. By reducing scrap, minimizing rework, and preventing downtime, businesses can realize significant cost savings and increased profitability.
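OEE is conventionally computed as the product of availability, performance, and quality rates. The Python sketch below uses entirely hypothetical shift figures to show how cutting false fails alone moves the quality rate, and therefore OEE. It assumes that falsely rejected parts are scrapped and so count against quality.

```python
def oee(availability: float, performance: float, quality: float) -> float:
    """Overall Equipment Effectiveness: availability x performance x quality."""
    for name, value in (("availability", availability),
                        ("performance", performance),
                        ("quality", quality)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return availability * performance * quality


def quality_rate(total_units: int, rejected_units: int) -> float:
    """Share of produced units that leave inspection as good parts."""
    if total_units <= 0:
        raise ValueError("total_units must be positive")
    return (total_units - rejected_units) / total_units


# Hypothetical shift figures, chosen only for illustration.
TOTAL_UNITS = 10_000
GENUINE_DEFECTS = 300
AVAILABILITY, PERFORMANCE = 0.90, 0.95

# Rejects = genuine defects + false fails (good parts wrongly scrapped).
legacy_quality = quality_rate(TOTAL_UNITS, GENUINE_DEFECTS + 400)  # rule-based inspection
ai_quality = quality_rate(TOTAL_UNITS, GENUINE_DEFECTS + 50)       # AI inspection

print(f"Legacy OEE: {oee(AVAILABILITY, PERFORMANCE, legacy_quality):.1%}")
print(f"AI OEE:     {oee(AVAILABILITY, PERFORMANCE, ai_quality):.1%}")
```

With these illustrative numbers the quality rate rises from 93% to 96.5%, which lifts OEE by about three percentage points without any change to availability or performance.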

Enhanced Worker Safety

Beyond efficiency, AI-driven machine vision plays a crucial role in enhancing worker safety within industrial environments. Traditional safety measures, while essential, can be reactive or limited by human perception. AI-powered vision systems, which process visual data quickly and accurately, enable a more proactive approach to safety. As AI and Machine Learning turn machine vision into the “eyes” of the smart factory, they improve safety alongside robotic coordination and quality control.

- Faster and More Accurate Hazard Detection: AI-vision systems can continuously monitor work zones for unsafe conditions, such as unauthorized entry into hazardous areas, improper use of personal protective equipment (PPE), or objects falling from conveyors. Their ability to process information faster than human operators means they can trigger alerts or even automatic shutdowns almost instantaneously, preventing accidents before they occur.

- Protection of Personnel and Equipment: By identifying potential risks with higher accuracy, AI-vision safeguards both human workers and expensive machinery. For instance, vision systems can detect if a human limb is too close to a moving part of a robot, immediately halting its operation. This not only prevents injuries but also averts damage to high-value industrial equipment that could result from collisions or improper handling. Collaborative robots, specifically, rely on integrated safety monitoring and adaptive motion planning that can be greatly enhanced by AI-driven vision.

- Adaptive Safety Protocols: AI systems can learn and adapt to dynamic factory environments. If a new hazard emerges or operational parameters change, the AI can be retrained to recognize and respond to these new conditions, continuously upgrading safety protocols without extensive manual reprogramming. This adaptive capability ensures that safety measures remain relevant and effective even as industrial processes evolve. Predictive maintenance and anomaly detection, as highlighted by expert Roman Oshyyko, represent early and safe wins for AI in industrial automation, further contributing to a safer environment by preventing equipment failures.

The integration of AI into machine vision for safety purposes not only protects human lives but also reduces the financial burden associated with workplace accidents, including medical costs, legal liabilities, and lost productivity.

Architectural Foundation: The 4 Pillars of Network Design

The seamless operation of AI-driven machine vision in industrial automation hinges on a robust and intelligently designed network infrastructure. The immense data throughput generated by high-resolution cameras, coupled with the real-time processing demands of AI/ML algorithms, necessitates a departure from conventional network approaches. Four critical pillars underpin a successful network design for AI-vision.

GigE Vision Standard

At the heart of interoperability for industrial machine vision lies the GigE Vision Standard. This globally recognized standard defines how cameras and vision systems communicate over Ethernet networks. Its core protocols and key properties are:

- GVCP (GigE Vision Control Protocol): This protocol handles the control and configuration of GigE Vision cameras. It allows vision systems to discover cameras, set parameters such as exposure and gain, and trigger image acquisition. The standardization of GVCP ensures that cameras from different manufacturers can be easily integrated into a unified system.

- GVSP (GigE Vision Stream Protocol): GVSP is responsible for the high-bandwidth, bursty streaming of image data from cameras to the processing unit. Industrial cameras typically generate uncompressed image data at high frame rates, leading to substantial data volumes that GVSP is designed to efficiently manage.

- Robustness and Reliability: GVSP numbers every packet within an image block, so the receiver can detect missing packets and request their retransmission over GVCP (packet resend). This keeps image transmission reliable even in industrial environments where electromagnetic interference or network congestion is common.

- Scalability: By leveraging standard Ethernet infrastructure, GigE Vision systems are inherently scalable. Manufacturers can add more cameras or increase processing power without needing to overhaul their entire network, providing significant flexibility for future expansion.

Adhering to the GigE Vision Standard is paramount for ensuring compatibility, simplifying integration, and optimizing the performance of AI-driven machine vision deployments. Without such a standard, each component would require custom interfaces, drastically increasing complexity and cost.
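To make the resend mechanism concrete, the Python sketch below tracks which packets of one image block have arrived and groups the gaps into (first, last) ranges, roughly the shape of a GVCP packet-resend request. `BlockTracker` is a hypothetical helper, not part of any GigE Vision SDK; in practice the vendor driver performs this bookkeeping. It assumes the standard packet-ID mode, in which packet 0 is the block leader and image data starts at packet 1.

```python
from dataclasses import dataclass, field


@dataclass
class BlockTracker:
    """Tracks which data packets of one GVSP block (one image) have arrived.

    Hypothetical helper: assumes the leader is packet 0 and data packets are
    numbered 1..expected_packets, as in GVSP's standard packet-ID mode.
    """
    block_id: int
    expected_packets: int
    received: set[int] = field(default_factory=set)

    def on_packet(self, packet_id: int) -> None:
        """Record an arriving data packet."""
        if not 1 <= packet_id <= self.expected_packets:
            raise ValueError(f"packet_id {packet_id} is outside this block")
        self.received.add(packet_id)

    def missing(self) -> list[int]:
        """Data packets that never arrived."""
        return [pid for pid in range(1, self.expected_packets + 1)
                if pid not in self.received]

    def missing_ranges(self) -> list[tuple[int, int]]:
        """Collapse missing IDs into (first, last) runs, one per resend request."""
        ranges: list[tuple[int, int]] = []
        for pid in self.missing():
            if ranges and ranges[-1][1] == pid - 1:
                ranges[-1] = (ranges[-1][0], pid)  # extend the current run
            else:
                ranges.append((pid, pid))          # start a new run
        return ranges


tracker = BlockTracker(block_id=42, expected_packets=10)
for pid in (1, 2, 3, 6, 7, 10):
    tracker.on_packet(pid)
print(tracker.missing_ranges())  # [(4, 5), (8, 9)]
```

Grouping consecutive gaps keeps the number of resend requests low when a short burst of packets is lost together.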

Precision Time Protocol (PTP)

For applications requiring precise synchronization, such as multi-camera setups for 3D reconstruction or coordinated robotic movements, the IEEE 1588 Precision Time Protocol (PTP) is indispensable. PTP replaces traditional physical trigger wires with network-based clock synchronization that, with hardware timestamping, can achieve sub-microsecond accuracy.

- Sub-Microsecond Accuracy: PTP allows all connected devices, including cameras and processing units, to synchronize their internal clocks with extreme precision. As a result, multiple cameras can capture images at effectively the same instant, wherever they sit on the synchronized network. This level of synchronization is critical for tasks like capturing stereoscopic images for depth perception or coordinating high-speed robotic actions.

- Elimination of Physical Trigger Wires: Historically, achieving synchronous image capture required dedicated physical trigger wires running to each camera. This added significant cabling complexity, increased installation costs, and made systems less flexible. PTP eliminates these physical connections, simplifying wiring and reducing infrastructure overhead. Data, Power, and PTP synchronization can now be delivered over a single Ethernet cable, simplifying cabling from three separate lines down to one.

- Event Correlation: Precise timestamps enabled by PTP are crucial for correlating events across different sensors and systems. For example, an image captured by a vision system can be accurately correlated with data from a robotic arm or a manufacturing process stage, providing a comprehensive view of an event.

- Real-Time Performance: In AI-driven machine vision, decision-making often relies on the temporal relationship between events. PTP ensures that all data points are accurately time-aligned, which is fundamental for real-time analysis and closed-loop control applications. Motion-control applications of industrial AI typically require latencies of 1 to 5 milliseconds, which underscores the need for precise timing.

The adoption of PTP is a cornerstone for advanced AI-vision applications that demand strict temporal coherence across multiple data streams.
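In PTP's delay request-response mechanism, a camera (the slave) estimates its clock offset from four timestamps: t1 when the master sends Sync, t2 when the slave receives it, t3 when the slave sends Delay_Req, and t4 when the master receives it. The Python sketch below applies the standard offset and mean-path-delay formulas. It assumes equal delay in both directions; any path asymmetry appears as an offset error of half the difference. The timestamps in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SyncExchange:
    """Timestamps (ns) from one PTP Sync / Delay_Req exchange."""
    t1: int  # master sends Sync (master clock)
    t2: int  # slave receives Sync (slave clock)
    t3: int  # slave sends Delay_Req (slave clock)
    t4: int  # master receives Delay_Req (master clock)


def offset_and_delay(ex: SyncExchange) -> tuple[float, float]:
    """Return (slave offset from master, mean one-way path delay) in ns.

    Assumes a symmetric path, i.e. the same delay in both directions.
    """
    master_to_slave = ex.t2 - ex.t1  # = delay + offset
    slave_to_master = ex.t4 - ex.t3  # = delay - offset
    offset = (master_to_slave - slave_to_master) / 2
    delay = (master_to_slave + slave_to_master) / 2
    return offset, delay


# Hypothetical camera clock running 1,500 ns ahead over an 800 ns path.
ex = SyncExchange(t1=1_000_000, t2=1_002_300, t3=1_050_000, t4=1_049_300)
offset, delay = offset_and_delay(ex)
print(offset, delay)  # 1500.0 800.0
```

The slave then subtracts the computed offset from its clock; repeating the exchange continuously keeps every camera aligned to the master.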

Jumbo Frames (9000-byte MTU)

The sheer volume of data produced by high-resolution industrial cameras can strain network resources and CPU processing capabilities. Jumbo Frames, which allow for an increased Maximum Transmission Unit (MTU) of up to 9000 bytes, offer a significant optimization.

- Reduced Packet Count: Standard Ethernet frames typically have an MTU of 1500 bytes, so a large image must be split into many packets, each carrying somewhat less than 1500 bytes of image data once protocol headers are subtracted. Jumbo Frames allow much larger payloads per packet, so a single image can be transmitted in significantly fewer packets (often 5-6 times fewer for a typical image).

- Lower CPU Load: With fewer packets to process, network interfaces and CPU resources on both the camera and the receiving server experience a reduced load. This frees up valuable processing power for AI/ML algorithms, enabling faster inference and more complex analysis.

- Reducing Microburst-Related Data Loss: In high-bandwidth industrial networks, data often arrives in “microbursts,” where a large amount of data is transmitted in a very short period. Because the receiving NIC and driver spend a roughly fixed amount of work on each packet, a burst made of many small packets is more likely to overrun receive buffers than the same data carried in fewer, larger frames. Jumbo Frames do not reduce the number of bytes in a burst, however, so adequate switch buffering is still needed to avoid drops when several cameras transmit at once.

- Improved Throughput: While not directly increasing the raw bandwidth, Jumbo Frames improve the effective throughput by reducing the overhead associated with packet headers and processing. More actual image data can be transmitted per unit of time.

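Implementing Jumbo Frames is a relatively straightforward network configuration that can yield substantial performance benefits for AI-driven machine vision systems, particularly in scenarios involving large image files or high frame rates. The larger MTU must, however, be enabled on every device in the path, including the camera, each switch, and the receiving network interface; otherwise oversized frames are dropped.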
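The packet-count and overhead arithmetic can be checked with a short Python sketch. It assumes IPv4 without options (20 bytes), UDP (8 bytes), and the 8-byte standard GVSP header inside each packet, plus 38 bytes of Ethernet framing on the wire (14-byte header, 4-byte FCS, 8-byte preamble, 12-byte interframe gap). The 5 MP monochrome camera is a hypothetical example, and the leader and trailer packets are ignored.

```python
import math

IP_HEADER = 20               # IPv4 header without options
UDP_HEADER = 8
GVSP_HEADER = 8              # standard (non-extended) GVSP packet header
ETHERNET_WIRE_OVERHEAD = 38  # 14 header + 4 FCS + 8 preamble/SFD + 12 interframe gap


def gvsp_payload_per_packet(mtu: int) -> int:
    """Image bytes that fit in one packet at the given MTU."""
    return mtu - IP_HEADER - UDP_HEADER - GVSP_HEADER


def packets_per_image(image_bytes: int, mtu: int) -> int:
    """Data packets needed for one image (leader and trailer excluded)."""
    return math.ceil(image_bytes / gvsp_payload_per_packet(mtu))


def wire_efficiency(mtu: int) -> float:
    """Share of link time carrying image bytes for a full-size packet."""
    return gvsp_payload_per_packet(mtu) / (mtu + ETHERNET_WIRE_OVERHEAD)


image_bytes = 2448 * 2048  # hypothetical 5 MP camera, 8 bits per pixel
for mtu in (1500, 9000):
    print(f"MTU {mtu}: {packets_per_image(image_bytes, mtu)} packets, "
          f"{wire_efficiency(mtu):.1%} wire efficiency")
```

For this image the count drops from 3425 packets to 560, about six times fewer, while payload efficiency on the wire rises from roughly 95% to 99%.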

Specialized Quality of Service (QoS) Prioritization

In a converged industrial network, not all data traffic is created equal. Critical control traffic, such as data from Programmable Logic Controllers (PLCs) or Distributed Control Systems (DCS), and PTP synchronization messages demand absolute priority to prevent operational disruptions. High-bandwidth vision streams, while important, should not impede these mission-critical communications. Specialized Quality of Service (QoS) prioritization addresses this challenge.

- Dedicated Priority Queues: QoS mechanisms allow network administrators to classify and prioritize different types of traffic. Critical control traffic (e.g., CIP/Profinet) and PTP messages are assigned to the highest priority queues. This ensures that even during periods of heavy network congestion caused by voluminous vision data, these essential control signals are delivered with minimal delay and jitter.

- Preventing Jitter in Vision Streams: While control traffic takes precedence, QoS also plays a role in managing vision streams. By intelligently buffering and scheduling vision data, QoS helps to prevent excessive jitter (variations in packet delay) that could negatively impact the real-time performance and accuracy of AI-vision applications.

- Ensuring Deterministic Performance: Industrial automation systems rely on deterministic behavior—actions must occur precisely when expected. QoS contributes to this by guaranteeing predictable latency and bandwidth for critical applications, ensuring that AI-driven decisions are executed within strict time constraints.

- Network Segmentation: Beyond basic prioritization, advanced QoS strategies can involve network segmentation, logically separating different traffic types. This creates virtual “lanes” for specific data flows, further hardening the network against interference and ensuring the integrity of critical communications.

Effective QoS implementation is non-negotiable for integrating AI-driven machine vision into operational technology (OT) networks. It safeguards the stability and reliability of the entire industrial process while enabling the advanced capabilities of AI-vision.
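Real switches usually classify on DSCP or CoS markings applied at the network edge rather than inspecting every frame, but the decision those markings encode can be sketched in Python. The queue names and their ordering (timing above control, control above vision) are hypothetical choices; the EtherTypes and port numbers are the registered values for PTP, PROFINET, EtherNet/IP, and GVCP. Because GVSP stream ports are configured per camera, the caller supplies them.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Queue(IntEnum):
    """Hypothetical egress queues; a higher value is served first."""
    BEST_EFFORT = 0
    VISION = 1
    CONTROL = 2
    TIMING = 3


IPV4_ETHERTYPE = 0x0800
PTP_ETHERTYPE = 0x88F7       # IEEE 1588 carried directly over Ethernet
PROFINET_ETHERTYPE = 0x8892  # PROFINET real-time frames
PTP_UDP_PORTS = {319, 320}   # PTP event and general messages over UDP
ENIP_PORTS = {2222, 44818}   # EtherNet/IP (CIP) implicit I/O and explicit messaging
GVCP_PORT = 3956             # GigE Vision control channel


@dataclass(frozen=True)
class Frame:
    ethertype: int
    udp_dst_port: Optional[int] = None
    tcp_dst_port: Optional[int] = None


def classify(frame: Frame, vision_stream_ports: set[int]) -> Queue:
    """Map a frame to an egress queue, checking the most critical classes first."""
    if frame.ethertype == PTP_ETHERTYPE or frame.udp_dst_port in PTP_UDP_PORTS:
        return Queue.TIMING
    if frame.ethertype == PROFINET_ETHERTYPE:
        return Queue.CONTROL
    if frame.udp_dst_port in ENIP_PORTS or frame.tcp_dst_port in ENIP_PORTS:
        return Queue.CONTROL
    if frame.udp_dst_port == GVCP_PORT or frame.udp_dst_port in vision_stream_ports:
        return Queue.VISION
    return Queue.BEST_EFFORT


stream_ports = {50010}  # hypothetical port negotiated for one camera's GVSP stream
print(classify(Frame(PTP_ETHERTYPE), stream_ports).name)                       # TIMING
print(classify(Frame(IPV4_ETHERTYPE, udp_dst_port=2222), stream_ports).name)   # CONTROL
print(classify(Frame(IPV4_ETHERTYPE, udp_dst_port=50010), stream_ports).name)  # VISION
```

Checking timing and control classes before vision mirrors the priority order described above: a bulky image stream can never be classified ahead of a PTP or PLC frame.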

Deployment Models Compared

The optimal deployment strategy for AI-driven machine vision systems depends on various factors, including latency requirements, scalability needs, computing resources, and the number of cameras involved. There are three primary models to consider, each with its own advantages and trade-offs.

Embedded Analytics (Smart Cameras)

Embedded analytics, often found in “smart cameras,” refers to a deployment model where the AI processing occurs directly inside the camera device itself. These cameras are essentially self-contained vision systems with integrated processing capabilities.

- Minimal Latency: Since processing happens at the very edge, directly on the camera, this model offers the lowest possible latency. Decisions derived from visual data can be made and acted upon almost instantaneously, which is critical for ultra-low-latency applications like fast machine control or immediate safety interlocks.

- Low Network Bandwidth Impact: Only metadata or final decision outputs (e.g., “pass/fail,” “object detected at coordinates X, Y”) need to be sent over the network, rather than raw, high-resolution image streams. This significantly reduces network bandwidth requirements, easing the burden on the industrial network.

- Autonomous Operation: Smart cameras can operate largely autonomously, making them ideal for remote or distributed deployments where continuous network connectivity to a central server might be unreliable or impractical.

- Cost of Compute and Scalability Limitations: A significant drawback is that embedded compute resources in cameras are typically expensive and limited. Each camera essentially requires its own dedicated processing unit. While suitable for a single camera or a small number of cameras performing specific, localized tasks, this model lacks scalability for applications requiring centralized data analysis, complex AI models, or coordination across many cameras. Upgrading processing power often means replacing the entire camera.

- Limited Centralized Training and Management: Training complex AI models usually requires significant computational resources, which are typically not available on an embedded smart camera. While they can run pre-trained models, iterative model improvement and centralized management of a fleet of cameras can be challenging.

Direct Connect (IPC-Based)

In the direct connect model, cameras are physically connected directly to an Industrial PC (IPC). The IPC serves as the local processing unit, performing AI inference and potentially some data pre-processing.

- Low Latency for Small Clusters: This model can offer relatively low latency for a small number of cameras (typically 2-6 cameras) connected to a single IPC. The dedicated local compute power of the IPC reduces network dependence for image processing.

- Dedicated Compute Power: Unlike embedded analytics, the IPC provides more substantial processing power than what’s typically found in a smart camera. This allows for more complex AI models and higher frame rates for a limited number of cameras.

- Increased Cabling Complexity: A major limitation is its lack of scalability and increased cabling complexity. Each camera requires a direct physical connection to the IPC, which can become unwieldy and expensive as the number of cameras grows. This also means IPCs need to be strategically placed near camera clusters.

- Limited Centralized View: While the IPC can process data from its connected cameras, achieving a holistic, factory-wide view or coordinating operations across multiple IPCs can become challenging without a higher-level network integration.

- Maintenance and Management: Managing multiple distributed IPCs, each running its own set of applications and potentially different AI models, can add to maintenance overhead.

Networked Vision Server (Centralized)

The networked vision server model represents the most scalable and flexible approach, where cameras connect via switches to a central GPU server or cloud environment for AI processing. This is where the network truly shines, enabling powerful AI model training and historical data retention.

- Maximum Scalability: This model offers unparalleled scalability. Dozens, hundreds, or even thousands of cameras can feed their data into a centralized server infrastructure. As the number of cameras or computational demands increase, the central server can be upgraded or scaled out with additional GPUs and processing power without affecting the camera installations.

- Optimized Compute Utilization: Centralized servers with powerful GPUs can efficiently handle the processing needs of multiple cameras concurrently. Resources can be dynamically allocated, ensuring optimal utilization of expensive compute hardware. This flexibility is crucial for training complex machine learning models, which often require vast amounts of real-world data.

- Advanced AI Model Training and Deployment: The high-performance computing resources of a central server are ideal for training sophisticated AI models, running iterative improvements, and deploying them across the entire fleet of cameras. This also facilitates A/B testing of different models and continuous learning.

- Centralized Data Analysis and Storage: All raw image data and processed insights can be stored centrally, enabling comprehensive data analytics, historical trend analysis, and regulatory compliance. This aggregated data is invaluable for identifying long-term patterns, optimizing processes, and gaining deeper operational intelligence.

- High-Bandwidth Uplinks Required: The primary requirement for this model is a robust network capable of handling the immense bandwidth generated by many high-resolution cameras streaming raw data simultaneously. This necessitates high-bandwidth uplinks (e.g., 10 Gigabit Ethernet) from edge switches to the central server.

- Potential for Higher Latency (if not optimized): While offering scalability, if the network is not properly designed with QoS and other optimizations, data transmission to the central server can introduce higher latency compared to edge-based processing. However, with the right network architecture, real-time performance can still be achieved.

Each deployment model offers a distinct balance of performance, cost, and complexity. The choice should align with the specific application requirements, the scale of the deployment, and the available infrastructure.

Security & Visibility

As AI-driven machine vision systems become integral to industrial operations, the importance of robust security and comprehensive visibility cannot be overstated. Connecting cameras and AI processing units to the network introduces potential vulnerabilities that must be actively managed to protect intellectual property, ensure operational integrity, and maintain data privacy. Security and governance should be integrated into the scope from the outset, not as an afterthought.

Visibility with Cisco Cyber Vision

Maintaining visibility into the intricate web of industrial devices, including new AI-driven cameras and vision systems, is foundational to effective cybersecurity. Solutions like Cisco Cyber Vision provide deep insights into the operational technology (OT) network.

- Discovery of Devices: Cyber Vision automatically discovers and identifies all connected industrial assets, including various camera types, their manufacturers, and their operating systems. This creates a comprehensive inventory of all devices on the network, which is the first step in assessing vulnerabilities.

- Firmware Version Monitoring: Outdated firmware can be a significant security risk. Cyber Vision monitors the firmware versions of cameras and other devices, alerting administrators to any unpatched vulnerabilities that could be exploited by attackers.

- Communication Patterns Analysis: Understanding how cameras communicate with vision servers and other industrial control systems is crucial. Cyber Vision maps these communication flows, detecting any deviations from normal behavior. An unexpected communication attempt from a camera to an external server, for instance, could indicate a compromise.

- Anomaly Detection: By establishing a baseline of normal operational behavior, Cyber Vision can detect anomalies without impacting production. This might include unusual traffic volumes from a camera, unexpected protocol usage, or unauthorized configuration changes. Detecting these anomalies early is key to thwarting cyberattacks.

Comprehensive visibility tools are essential for understanding the attack surface, identifying vulnerabilities, and rapidly responding to security incidents within the AI-vision infrastructure.
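The baselining idea behind this kind of anomaly detection can be illustrated with a deliberately simple Python sketch: learn the set of (source, destination, protocol) flows during a known-good period, then flag any flow that was never seen. This is not how Cisco Cyber Vision is implemented. The device names are hypothetical, and 203.0.113.50 is a documentation address standing in for an external server.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    src: str
    dst: str
    protocol: str


class FlowBaseline:
    """Learn normal flows, then flag any flow not seen during learning.

    Illustrative only: commercial OT visibility tools build far richer
    baselines (protocol decoding, asset context, traffic volumes).
    """

    def __init__(self) -> None:
        self.known: set[Flow] = set()
        self.learning = True

    def observe(self, flow: Flow) -> bool:
        """Record a flow; return True if it is anomalous."""
        if self.learning:
            self.known.add(flow)
            return False
        return flow not in self.known

    def freeze(self) -> None:
        """End the learning period and start detecting."""
        self.learning = False


baseline = FlowBaseline()
baseline.observe(Flow("vision-server-1", "camera-07", "GVCP"))
baseline.observe(Flow("camera-07", "vision-server-1", "GVSP"))
baseline.freeze()

print(baseline.observe(Flow("camera-07", "vision-server-1", "GVSP")))  # False: known flow
print(baseline.observe(Flow("camera-07", "203.0.113.50", "HTTPS")))    # True: unexpected external peer
```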

TrustSec Micro-segmentation

Micro-segmentation is a powerful cybersecurity strategy that enhances the security posture of AI-driven machine vision by creating granular security zones within the network. Cisco TrustSec implements micro-segmentation with Security Group Tags (SGTs), which classify traffic by the role of the device that sent it rather than by IP address or VLAN.

- Granular Access Control: Instead of relying on broad network segments, micro-segmentation allows for the creation of very specific security policies based on the identity and role of devices or users. With SGTs, a camera processing quality control data can be assigned a specific tag, and the vision server designed to receive that data can be assigned another.

- Preventing Lateral Movement of Threats: The core benefit of micro-segmentation is its ability to contain breaches. If an attacker manages to compromise a single camera or an unprivileged device, micro-segmentation prevents them from easily moving laterally across the network to access other critical systems, such as production control systems or intellectual property servers. Policies can be implemented such that only authorized vision servers can initiate traffic to specific cameras.

- Reducing Attack Surface: By strictly controlling which devices can communicate with each other, micro-segmentation significantly reduces the attack surface. Unnecessary communication paths are eliminated, thereby decreasing the opportunities for attackers to exploit vulnerabilities.

- Simplified Policy Management: While seemingly complex, solutions like TrustSec simplify the management of these granular policies through identity-based access control. Policies are defined once and then assigned to SGTs, which are dynamically applied to devices as they connect to the network.

Micro-segmentation is critical for isolating AI-vision components, protecting sensitive data, and ensuring that any potential security breach is contained, thereby minimizing its impact on production.
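Conceptually, a TrustSec policy is a matrix indexed by source and destination tags. The Python sketch below models that matrix with a default-deny lookup. The tag names, numeric values, and protocol labels are hypothetical, and in a real deployment the policy is defined centrally (for example in Cisco ISE) and enforced by the switches, not by application code.

```python
from enum import Enum


class SGT(Enum):
    """Hypothetical Security Group Tags for one vision cell."""
    QC_CAMERA = 10
    VISION_SERVER = 20
    PLC = 30


# (source tag, destination tag) -> protocols allowed. Missing pairs are denied.
POLICY: dict[tuple[SGT, SGT], frozenset[str]] = {
    (SGT.VISION_SERVER, SGT.QC_CAMERA): frozenset({"GVCP"}),          # server configures camera
    (SGT.QC_CAMERA, SGT.VISION_SERVER): frozenset({"GVCP", "GVSP"}),  # acknowledgements and image stream
    (SGT.VISION_SERVER, SGT.PLC): frozenset({"CIP"}),                 # pass/fail result to the PLC
}


def is_permitted(src: SGT, dst: SGT, protocol: str) -> bool:
    """Default-deny lookup in the source/destination policy matrix."""
    return protocol in POLICY.get((src, dst), frozenset())


print(is_permitted(SGT.VISION_SERVER, SGT.QC_CAMERA, "GVCP"))  # True
print(is_permitted(SGT.QC_CAMERA, SGT.PLC, "CIP"))             # False: lateral movement blocked
```

Because any pair absent from the matrix is denied, a compromised camera has no path to the PLC even though both sit on the same plant network.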

Secure Equipment Access (SEA)

Remote access is a common requirement in industrial environments for maintenance, troubleshooting, and optimization. However, it also presents a significant security challenge. Secure Equipment Access (SEA) provides a secure, auditable, and time-bound portal for system integrators and vendors to perform remote maintenance and tuning.

- Controlled Remote Access: SEA establishes a securely brokered connection between external parties and specific industrial equipment, including AI-vision cameras and servers. This eliminates the need for direct, wide-open access, significantly reducing the risk of unauthorized entry.

- Auditable Access Logs: Every remote session is fully logged and auditable, providing a clear record of who accessed what, when, and for how long. This is crucial for accountability, compliance, and forensic analysis in case of a security incident.

- Time-Bound Permissions: Access can be granted for a specific duration, automatically expiring once the task is complete. This prevents persistent connections that could be exploited if credentials are stolen or compromised.

- Specific Device Access: Instead of granting broad network access, SEA allows for precise control over which specific devices an external user can connect to. A vendor might only need access to a particular camera for firmware updates, not the entire vision server or the plant’s control network.

- Multi-Factor Authentication (MFA): Robust SEA solutions typically integrate with multi-factor authentication, adding an extra layer of security to verify the identity of remote users.

Secure Equipment Access is vital for enabling the necessary remote support and optimization of AI-driven machine vision systems while simultaneously mitigating the inherent cybersecurity risks associated with external access.
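The access rules listed above (a named user, specific devices, a fixed time window, MFA, and an audit trail) can be modeled as a small data structure. The Python sketch below is hypothetical and does not reflect SEA's actual API; it only shows how each condition narrows a remote session and how every attempt is logged.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AccessGrant:
    """Hypothetical grant: one user, an explicit device list, a time window."""
    user: str
    devices: frozenset[str]
    not_before: datetime
    expires: datetime
    mfa_verified: bool


audit_log: list[str] = []


def open_session(grant: AccessGrant, user: str, device: str, now: datetime) -> bool:
    """Allow a session only if every condition holds, and log the attempt either way."""
    allowed = (
        grant.mfa_verified
        and user == grant.user
        and device in grant.devices
        and grant.not_before <= now < grant.expires
    )
    audit_log.append(f"{now.isoformat()} user={user} device={device} allowed={allowed}")
    return allowed


start = datetime(2025, 1, 15, 8, 0, tzinfo=timezone.utc)
grant = AccessGrant(
    user="integrator-a",
    devices=frozenset({"camera-07"}),
    not_before=start,
    expires=start + timedelta(hours=2),
    mfa_verified=True,
)

print(open_session(grant, "integrator-a", "camera-07", start + timedelta(minutes=30)))        # True
print(open_session(grant, "integrator-a", "vision-server-1", start + timedelta(minutes=30)))  # False
print(open_session(grant, "integrator-a", "camera-07", start + timedelta(hours=3)))           # False
```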

Infrastructure Selection Guide

Choosing the right network infrastructure is paramount for supporting the bandwidth, power, and environmental demands of AI-driven machine vision. The industrial switch, as the backbone of the edge network, plays a crucial role. Key considerations include maximum Power over Ethernet (PoE) capabilities, uplink speed, and environmental ratings.

Power over Ethernet (PoE) Capabilities

PoE simplifies cabling by delivering both data and electrical power over a single Ethernet cable. For AI-driven machine vision, especially with advanced cameras and edge computing devices, robust PoE capabilities are essential. The industry standard has evolved to support higher power delivery:

- PoE+ (IEEE 802.3at) – 30W: This standard is suitable for many traditional IP cameras and lower-power edge devices. Switches like the IE3100, offering 30W per port, are ideal for compact, low-power camera clusters where on-camera compute is modest and heavy processing is offloaded to a central server.

- 4PPoE (IEEE 802.3bt, Type 3 and Type 4) – up to 90W: The latest PoE standard, often referred to as PoE++ or 4-pair PoE, delivers up to 60W per port for Type 3 and up to 90W per port for Type 4, of which about 71W reaches the powered device after cable losses. This higher power output is critical for:

  - High-Power Cameras: Advanced AI-vision cameras incorporate more powerful sensors, integrated lighting, and even on-board processing (as seen in embedded analytics models). These devices often require more than 30W of power.

  - Edge Computing Devices: Industrial ruggedized PCs or specialized AI accelerators deployed at the edge to perform localized inference may draw significant power, making 90W PoE indispensable.

  - Simplified Installation and Reduced Cost: By eliminating separate power outlets and cabling for each device, 90W PoE drastically simplifies installation, reduces material costs, and improves infrastructure flexibility. Switches like the IE3500 and IE3300, both providing 90W 4PPoE, are essential for these high-power applications.

When selecting switches, understanding the power requirements of all connected AI-vision devices is non-negotiable to ensure stable and reliable operation.
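A power plan can be sanity-checked before hardware is ordered. The Python sketch below compares each device's draw with the per-port limit of the chosen standard, and the total with the switch's overall PoE budget. Device wattages and switch budgets are hypothetical placeholders; the per-port figures are the IEEE maximums sourced at the switch port, so each device's draw is assumed to already include cable loss.

```python
from dataclasses import dataclass

# Maximum power a switch (PSE) port may source under each IEEE standard, in watts.
PORT_LIMIT_W = {
    "802.3af": 15.4,
    "802.3at": 30.0,        # PoE+
    "802.3bt-type3": 60.0,
    "802.3bt-type4": 90.0,
}


@dataclass(frozen=True)
class Device:
    name: str
    port_draw_w: float  # assumed worst-case draw at the switch port, incl. cable loss


def check_poe(devices: list[Device], port_standard: str, switch_budget_w: float) -> list[str]:
    """Return a list of problems; an empty list means the plan fits."""
    limit = PORT_LIMIT_W[port_standard]
    problems = [
        f"{d.name} needs {d.port_draw_w} W but the port supplies at most {limit} W"
        for d in devices
        if d.port_draw_w > limit
    ]
    total = sum(d.port_draw_w for d in devices)
    if total > switch_budget_w:
        problems.append(f"total draw {total} W exceeds the switch PoE budget of {switch_budget_w} W")
    return problems


# Hypothetical camera cell; wattages are placeholders, not vendor figures.
cell = [
    Device("inspection-camera", 22.0),
    Device("camera-with-ring-light", 45.0),
    Device("edge-ai-accelerator", 75.0),
]

for standard, budget in (("802.3at", 480.0), ("802.3bt-type4", 120.0), ("802.3bt-type4", 480.0)):
    print(standard, budget, check_poe(cell, standard, budget) or "OK")
```

The two checks catch different failures: a PoE+ switch cannot power the lit camera or the accelerator on any port, while a 90W switch with a small total budget can power each device individually but not all three at once.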

Uplink Speed

The uplink speed of an industrial switch determines how quickly data can be transmitted from the edge to the aggregation layer or directly to a central vision server. For AI-driven machine vision, especially in the networked vision server model, high uplink speeds are critical due to the sheer volume of raw image data.

- 1 Gigabit Ethernet (1 GbE): Switches with 1 GbE uplinks, such as the IE3100, are generally suitable for clusters of low-power cameras or scenarios where only metadata and compressed results are transmitted upstream. They are effective for compact deployments where bandwidth demands are not extreme.

- 10 Gigabit Ethernet (10 GbE): For high-performance AI-vision systems, 10 GbE uplinks are rapidly becoming the standard. Switches like the IE3500, IE3300, and IE3500H offer 10 GbE uplink speeds. This higher bandwidth is essential for:

  - High-Resolution, High-Frame-Rate Cameras: Multiple cameras streaming uncompressed, high-definition (e.g., 4K or higher) video at dozens or hundreds of frames per second generate massive data volumes that can quickly saturate 1 GbE links.

  - Networked Analytics Deployments: When raw image data from numerous cameras is sent to a central GPU server for processing, a 10 GbE uplink ensures minimal bottlenecks and allows for real-time analysis.

  - High-Density mGig and Core Distribution: For aggregating traffic from many edge switches or connecting to the core network, 10 GbE uplinks provide the necessary capacity to prevent congestion and maintain peak performance.

The choice of uplink speed should directly correlate with the aggregate bandwidth requirements of all cameras and edge devices connected to the switch, ensuring that the network can handle peak data loads without performance degradation.
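The aggregate-bandwidth check behind that choice is simple arithmetic. The Python sketch below estimates uncompressed stream rates from resolution, bit depth, and frame rate, pads them with an assumed 5% protocol overhead, and reports uplink utilization. The camera parameters are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Camera:
    width: int
    height: int
    bits_per_pixel: int
    fps: float

    def raw_gbps(self) -> float:
        """Uncompressed image payload rate in Gbit/s."""
        return self.width * self.height * self.bits_per_pixel * self.fps / 1e9


def uplink_utilization(cameras: list[Camera], uplink_gbps: float, overhead: float = 0.05) -> float:
    """Fraction of the uplink consumed, with payload padded by an assumed protocol overhead."""
    total_gbps = sum(cam.raw_gbps() for cam in cameras) * (1 + overhead)
    return total_gbps / uplink_gbps


# Hypothetical cell: eight 5 MP monochrome cameras at 20 frames per second.
cell = [Camera(2448, 2048, 8, 20.0) for _ in range(8)]

for uplink in (1.0, 10.0):
    print(f"{uplink:>4} GbE uplink: {uplink_utilization(cell, uplink):.0%} utilized")
```

Eight such cameras need roughly 6.7 Gbit/s, far beyond a 1 GbE uplink but within a 10 GbE link at about two-thirds utilization; a single camera alone would use roughly 84% of a 1 GbE link.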

Environmental Ratings and Specific Use Cases

Industrial environments are often characterized by harsh conditions, including extreme temperatures, vibration, dust, and moisture. Consequently, industrial switches must possess appropriate environmental ratings to ensure reliable long-term operation.

- Ruggedized Design: Switches like the IE3500H are specifically designed for IP67-rated harsh environments. An IP (Ingress Protection) rating of 67 signifies that the device is fully protected against dust ingress (6) and can withstand immersion in water up to 1 meter for 30 minutes (7). This makes it suitable for deployments in wash-down areas, outdoor substations, or other environments where extreme conditions prevail.

- Extended Temperature Ranges: Industrial switches often need to operate reliably across a much broader temperature range than commercial-grade equipment, typically from −40 °C to +75 °C (−40 °F to 167 °F).

- Vibration and Shock Resistance: Factories are rife with machinery that generates vibration and shock. Industrial switches are engineered to withstand these forces without suffering mechanical or electrical failure.

When selecting switches, it is crucial to match the switch’s environmental ratings to the specific conditions of the deployment location.
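One way to apply this rule is to encode both the switch rating and the site requirement, then compare them. The sketch below does this for IP codes and operating temperature. It also follows a subtlety of IEC 60529 that is easy to miss. An immersion rating (second digit 7 or 8) does not by itself imply resistance to water jets (5 or 6) unless the product is dual-coded, for example IP66/IP67. The `EnvSpec` class, the helper names, and the site values are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EnvSpec:
    ip_code: str        # e.g. "IP67" or dual-coded "IP66/IP67"; "IPX7"-style codes are not handled
    min_temp_c: float
    max_temp_c: float


def _ip_pairs(code: str) -> List[Tuple[int, int]]:
    """Extract (solids digit, water digit) pairs from an IP marking."""
    pairs = [(int(s), int(w)) for s, w in re.findall(r"IP(\d)(\d)", code)]
    if not pairs:
        raise ValueError(f"no numeric IP code found in {code!r}")
    return pairs


def _water_ok(rated: int, required: int) -> bool:
    # IEC 60529: immersion ratings (7, 8) do not imply jet resistance (5, 6)
    # unless the product is dual-coded. Otherwise a higher digit covers lower ones.
    if required in (5, 6) and rated in (7, 8):
        return False
    return rated >= required


def meets_site(switch: EnvSpec, site: EnvSpec) -> bool:
    """True if every IP requirement of the site and its temperature range are covered."""
    switch_pairs = _ip_pairs(switch.ip_code)
    ip_ok = all(
        any(s >= req_s and _water_ok(w, req_w) for s, w in switch_pairs)
        for req_s, req_w in _ip_pairs(site.ip_code)
    )
    temp_ok = switch.min_temp_c <= site.min_temp_c and site.max_temp_c <= switch.max_temp_c
    return ip_ok and temp_ok


switch = EnvSpec("IP67", -40, 75)
print(meets_site(switch, EnvSpec("IP67", -30, 60)))
print(meets_site(switch, EnvSpec("IP65", -10, 50)))
```

In this example, an IP67 switch rated −40 °C to +75 °C passes a hypothetical IP67 site that ranges from −30 °C to +60 °C. It fails a site whose specification calls for IP65 jet resistance. Where jets are used for cleaning, it is therefore worth checking the exact marking on the datasheet.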

Summary of Infrastructure Selection:

| Switch Series | Max PoE per Port | Uplink Speed | Best Use Case |
|---|---|---|---|
| IE3500 | 90W (4PPoE) | 10 GbE | High-power cameras & Networked Analytics |
| IE3300 | 90W (4PPoE) | 10 GbE | High-density mGig & core distribution |
| IE3100 | 30W (PoE+) | 1 GbE | Compact, low-power camera clusters |
| IE3500H | 30W (PoE+) | 10 GbE | IP67-rated harsh environments |

This guide illustrates that the “best” switch is highly dependent on the specific needs of the AI-driven machine vision application. A careful evaluation of power, bandwidth, and environmental factors will lead to the most effective and cost-efficient infrastructure deployment.
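The summary table can also be read as a simple filter. The sketch below transcribes its PoE and uplink columns and returns every series that meets a given requirement. It rests on two assumptions. First, the PoE figures are per-port maximums. Second, only the IE3500H is IP67-rated, since it is the only series the table lists for harsh environments. Verify both against current datasheets before relying on them.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SwitchSeries:
    name: str
    max_poe_w: int      # per-port maximum, as listed in the summary table
    uplink_gbps: int
    ip67: bool          # assumption: only the IE3500H is treated as IP67-rated


CATALOG = [
    SwitchSeries("IE3500", 90, 10, False),
    SwitchSeries("IE3300", 90, 10, False),
    SwitchSeries("IE3100", 30, 1, False),
    SwitchSeries("IE3500H", 30, 10, True),
]


def candidates(poe_w_per_port: int, uplink_gbps: int, needs_ip67: bool) -> List[str]:
    """Return the series that satisfy all three requirements, in table order."""
    return [
        s.name
        for s in CATALOG
        if s.max_poe_w >= poe_w_per_port
        and s.uplink_gbps >= uplink_gbps
        and (s.ip67 or not needs_ip67)
    ]


print(candidates(60, 10, False))
print(candidates(60, 10, True))
```

A 60 W-per-port camera with a 10 GbE uplink narrows the choice to the IE3500 and IE3300. Adding an IP67 requirement to the same load returns an empty list, which signals a trade-off the design must resolve.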

The Future of AI-Driven Machine Vision in Smart Manufacturing

The trajectory of AI-driven machine vision is one of continuous growth and increasing sophistication, solidifying its role as a cornerstone of smart manufacturing and Industry 4.0. The convergence of Industrial IoT (IIoT) and AI, often termed AIoT, is transforming industrial operations from merely “connected” to truly “intelligent”. This evolution promises further enhancements in automation, predictive capabilities, and human-machine collaboration.

Towards Autonomous Industrial Systems

AI-driven machine vision is a key enabler for the transition to truly autonomous industrial systems. As noted by UV Netware, physical AI and IIoT form the operational foundation of smart manufacturing, allowing organizations to move from reactive operations to self-optimizing industrial systems driven by real-time analytics and closed-loop automation.

- Self-Correction and Optimization: Future systems will not only detect defects or anomalies but also proactively initiate corrective actions. For example, an AI-vision system detecting a minor alignment issue in an assembly process could automatically trigger micro-adjustments to robotic arms or machinery settings, maintaining optimal production quality without human intervention.

- Adaptive Production Lines: AI-vision will enable production lines to adapt dynamically to changing demands, material variations, or even equipment wear. Cameras will continuously feed data to AI models that analyze performance, predict maintenance needs, and reconfigure production flows to maximize throughput and efficiency.

- Enhanced Robotic Autonomy: Beyond simple pick-and-place, AI-vision will facilitate more complex and nuanced robotic tasks. Robots will use vision to continuously assess their environment, understand task context, and learn new manipulation techniques, leading to even greater flexibility and dexterity in automation.
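The self-correction loop described above can be sketched as a proportional controller with a deadband and a hard step limit. The vision system supplies a measured offset. The fixed limits, not the model, decide how far the machine may move in one step, which keeps the AI in an advisory role around deterministic control. The gain, deadband, and step limit are hypothetical values chosen for illustration, not tuning guidance.

```python
def bounded_correction(
    measured_offset_mm: float,
    gain: float = 0.5,          # hypothetical proportional gain
    deadband_mm: float = 0.02,  # hypothetical tolerance where no action is taken
    max_step_mm: float = 0.1,   # hypothetical hard limit per adjustment
) -> float:
    """Proportional correction toward zero offset, limited by a fixed envelope."""
    if abs(measured_offset_mm) <= deadband_mm:
        return 0.0  # within tolerance: issue no adjustment
    step = -gain * measured_offset_mm
    # Clamp: the vision measurement proposes, the fixed limit bounds the move.
    return max(-max_step_mm, min(max_step_mm, step))


# Simulated closed loop: each cycle measures the offset and applies the correction.
offset = 0.3
for _ in range(10):
    offset += bounded_correction(offset)
print(f"residual offset: {offset:.4f} mm")
```

Starting from a 0.3 mm offset, repeated calls shrink the error until it falls inside the 0.02 mm deadband. After that, the function stops issuing corrections.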

Hyper-Personalization and Mass Customization

The detailed real-time insights provided by AI-driven machine vision will support the growing trend of hyper-personalization and mass customization in manufacturing.

- Individual Product Verification: Every product can be individually verified and tracked throughout its production journey, identifying unique characteristics or customer-specific configurations. This ensures that custom orders are fulfilled with exact precision.

- Dynamic Quality Control for Bespoke Items: As manufacturing shifts from mass production to smaller batches of customized goods, AI-vision systems can dynamically adjust their inspection parameters to the unique specifications of each item, maintaining high quality standards across a diverse product portfolio.
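Per-item inspection can be as simple as looking up each unit's own specification before judging a measurement. In this sketch the order book, serial numbers, and tolerances are hypothetical. An unknown serial is routed to a HOLD state rather than judged against a default spec.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ItemSpec:
    nominal_mm: float
    tolerance_mm: float


# Hypothetical order book: serial number -> customer-specific dimension.
SPECS: Dict[str, ItemSpec] = {
    "SN-001": ItemSpec(nominal_mm=120.0, tolerance_mm=0.5),
    "SN-002": ItemSpec(nominal_mm=95.0, tolerance_mm=0.2),
}


def inspect(serial: str, measured_mm: float, specs: Dict[str, ItemSpec] = SPECS) -> str:
    """Judge a measurement against the spec of that specific item."""
    spec = specs.get(serial)
    if spec is None:
        return "HOLD"  # unknown item: send to manual review instead of guessing
    within = abs(measured_mm - spec.nominal_mm) <= spec.tolerance_mm
    return "PASS" if within else "FAIL"


print(inspect("SN-001", 120.3), inspect("SN-002", 95.3), inspect("SN-999", 1.0))
```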

Ethical AI and Explainable Models

As AI becomes more deeply embedded in industrial decision-making, the focus will increasingly shift towards ethical considerations and the interpretability of AI models.

- Bias Mitigation: Efforts will focus on identifying and reducing biases in AI-vision models that could lead to unfair or inaccurate inspections, particularly in tasks involving human-product interaction or safety.

- Explainable AI (XAI): For critical applications, operators and engineers will need to understand why an AI system made a particular decision. Explainable AI models will provide insight into their reasoning, fostering trust and enabling better troubleshooting. Clear metrics, such as ROI tied to downtime and scrap costs, will help judge whether those decisions pay off. This is crucial for gaining operator buy-in and for regulatory compliance.
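One widely used, model-agnostic explainability technique is occlusion sensitivity. It masks one region of the image at a time and records how much the model's score drops. Regions with large drops are the ones the decision relied on. The sketch below uses a toy "defect score" (the brightest pixel) as a stand-in for a real vision model. That stand-in is an assumption for illustration only.

```python
from typing import Callable, List

Image = List[List[float]]


def occlusion_map(
    image: Image,
    score: Callable[[Image], float],
    patch: int = 2,
    fill: float = 0.0,
) -> List[List[float]]:
    """Score drop when each patch x patch window is replaced by `fill`.

    Larger values mean the region mattered more to the model's score.
    """
    h, w = len(image), len(image[0])
    base = score(image)
    heatmap = []
    for top in range(0, h, patch):
        row = []
        for left in range(0, w, patch):
            occluded = [r[:] for r in image]  # copy so the input is not modified
            for y in range(top, min(top + patch, h)):
                for x in range(left, min(left + patch, w)):
                    occluded[y][x] = fill
            row.append(base - score(occluded))
        heatmap.append(row)
    return heatmap


# Toy stand-in for a vision model: the "defect score" is the brightest pixel.
brightest = lambda img: max(max(r) for r in img)

image = [[0.0] * 4 for _ in range(4)]
image[3][1] = 1.0  # a single bright "defect" in the lower-left quadrant
print(occlusion_map(image, brightest))
```

On this 4×4 image, the map highlights only the lower-left quadrant: `[[0.0, 0.0], [1.0, 0.0]]`.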

Cybersecurity Resilience

The increasing interconnectedness of AI-vision systems, driven by the need for data sharing and centralized analytics, will amplify the importance of cybersecurity resilience.

- AI for Cybersecurity: AI itself will be leveraged to enhance cybersecurity. Machine learning models will analyze network traffic and vision data to detect sophisticated cyber threats, predict potential vulnerabilities, and automate defense mechanisms in real-time.

- Secure by Design: Future AI-vision systems and their underlying infrastructure will be designed with cybersecurity as a core component, rather than an afterthought. This includes cryptographic authentication for cameras, secure boot processes, and continuous vulnerability management.
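Cryptographic camera authentication can take several forms. The sketch below shows a minimal challenge-response exchange using HMAC-SHA256 from Python's standard library. The verifier sends a fresh random nonce, and the camera proves it holds a provisioned key without ever transmitting it. The per-camera key table and camera IDs are hypothetical. Production deployments more commonly rely on certificate-based methods such as IEEE 802.1X or mutual TLS.

```python
import hashlib
import hmac
import secrets

# Hypothetical per-camera secret, provisioned at commissioning time.
CAMERA_KEYS = {"cam-07": secrets.token_bytes(32)}


def new_challenge() -> bytes:
    # A fresh random nonce per attempt, so a captured response cannot be replayed.
    return secrets.token_bytes(16)


def camera_response(key: bytes, challenge: bytes) -> bytes:
    # Runs on the camera: proves possession of the key without sending it.
    return hmac.new(key, challenge, hashlib.sha256).digest()


def verify(camera_id: str, challenge: bytes, response: bytes) -> bool:
    key = CAMERA_KEYS.get(camera_id)
    if key is None:
        return False  # unknown camera
    expected = hmac.new(key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)  # constant-time comparison


challenge = new_challenge()
response = camera_response(CAMERA_KEYS["cam-07"], challenge)
print(verify("cam-07", challenge, response))
```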

Human-AI Collaboration

Far from replacing humans entirely, AI-driven machine vision will increasingly foster richer human-AI collaboration.

- Augmenting Human Capabilities: AI will extend human perception and analysis beyond what unaided inspection can achieve, allowing operators to focus on higher-level strategic tasks, problem-solving, and continuous improvement. The goal is to augment decisions rather than replace deterministic control logic.

- Intelligent Assistants: AI-vision systems will act as intelligent assistants, providing real-time feedback, highlighting areas of concern, and offering recommendations to human workers, thereby improving overall efficiency and reducing cognitive load. This includes tools that provide deep insight into camera types, firmware versions, and communication patterns to detect anomalies.
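The anomaly checks that visibility tools perform can be roughly approximated with a rule-based comparison against a known-good inventory. The sketch below flags a camera whose firmware is not on an approved list. It also flags a camera that talks to a peer outside its learned baseline. The model names, firmware versions, addresses, and baseline are hypothetical, and this is not the API of any specific product.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class CameraAsset:
    name: str
    model: str
    firmware: str
    peers: Set[str] = field(default_factory=set)  # IP addresses it was seen talking to


# Hypothetical approved firmware versions per camera model.
APPROVED_FIRMWARE: Dict[str, Set[str]] = {"model-a": {"2.1.4", "2.1.5"}}
# Hypothetical learned baseline of expected communication peers per camera.
BASELINE_PEERS: Dict[str, Set[str]] = {"cam-07": {"10.10.20.5"}}


def findings(asset: CameraAsset) -> List[str]:
    """List rule violations for one camera; an empty list means nothing flagged."""
    out = []
    if asset.firmware not in APPROVED_FIRMWARE.get(asset.model, set()):
        out.append(f"{asset.name}: unapproved firmware {asset.firmware}")
    for peer in sorted(asset.peers - BASELINE_PEERS.get(asset.name, set())):
        out.append(f"{asset.name}: new peer {peer}")
    return out


asset = CameraAsset("cam-07", "model-a", "2.0.9", {"10.10.20.5", "203.0.113.9"})
for line in findings(asset):
    print(line)
```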

The future of AI-driven machine vision in industrial automation is bright, promising unprecedented levels of efficiency, adaptation, and safety. However, realizing this potential requires a continued focus on robust network foundations, stringent security measures, and a strategic approach to AI deployment that prioritizes both technological advancement and operational integrity.

Conclusion

The journey towards optimizing AI-driven machine vision in industrial automation is a multifaceted one, demanding a holistic consideration of business drivers, technical infrastructure, and cybersecurity imperatives. As industries continue to embrace digital transformation, the strategic integration of AI/ML into vision systems is not merely an option but a critical pathway to achieving superior operational performance, enhanced safety, and sustainable growth.

The profound business impact of AI-vision, ranging from boosting Overall Equipment Effectiveness (OEE) and reducing waste through precise anomaly detection, to significantly enhancing worker safety by proactively identifying hazards, underscores its transformative power. These benefits are not just theoretical; they translate directly into tangible cost savings and increased profitability for enterprises.

However, realizing these benefits hinges on a meticulously designed and robust network infrastructure. Four pillars of network design are non-negotiable for handling the massive data volumes and real-time demands of AI-vision systems. They are the GigE Vision Standard for interoperability, Precision Time Protocol (PTP, IEEE 1588) for sub-microsecond synchronization, Jumbo Frames for efficient data transfer, and Specialized Quality of Service (QoS) for traffic prioritization. Without this foundation, even the most advanced AI algorithms will falter.

Furthermore, the choice of deployment model must align with specific operational requirements and future growth projections. The options are embedded analytics for low latency, direct connect for small clusters, or the highly scalable networked vision server. Each model presents a unique balance of capabilities and considerations that must be carefully weighed.

Finally, security and visibility are paramount. In an increasingly interconnected industrial landscape, securing AI-vision assets from cyber threats is critical. Indispensable safeguards include Cisco Cyber Vision for deep operational insight, TrustSec micro-segmentation for containing threats, and Secure Equipment Access (SEA) for controlled remote maintenance. These measures protect not only the AI-vision systems themselves but also the integrity of critical industrial control processes and sensitive data.

The industrial switch, as a key component of the infrastructure, must be carefully selected based on its Power over Ethernet (PoE) capabilities to power advanced cameras and edge devices, its uplink speed to handle demanding bandwidth requirements, and its environmental ratings to ensure resilience in harsh factory conditions.

As industrial automation continues its rapid evolution, AI-driven machine vision will only grow in sophistication. It will propel the industry towards fully autonomous, self-optimizing, and highly adaptable smart manufacturing environments. Much of this future is already within reach through strategic planning and the adoption of robust, integrated solutions.

If your organization is navigating the complexities of integrating AI-driven machine vision into its industrial operations, or if you need expert guidance in designing a secure and scalable network foundation for your smart factory, we are here to help. Our team at IoT Worlds specializes in transforming industrial challenges into innovative solutions.

Ready to unlock the full potential of AI-driven machine vision and enhance your industrial automation? Contact us today to discuss your specific needs and how we can assist you in building a resilient, intelligent, and secure operational future. Email us at info@iotworlds.com.