In the dynamic landscape of modern cybersecurity, where threats constantly evolve in sophistication and stealth, a reactive approach to security is no longer sufficient. Traditional security measures, relying heavily on signatures and known attack patterns, are often outmaneuvered by advanced persistent threats (APTs) and zero-day exploits. The cybersecurity paradigm has shifted, demanding a proactive stance – one that actively seeks out the silent, undetected dangers lurking within networks. This proactive discipline is known as threat hunting.

Alerts tell you what was detected. Threat hunting reveals what was missed.

Modern security teams don’t wait for signatures – they investigate proactively. The difference between average and advanced teams lies in their proactive mindset, deep telemetry visibility, a continuous improvement loop, and a strong alignment to MITRE ATT&CK and adversary behavior. Threat hunting is not a one-time exercise; it’s a discipline.

This guide covers seven core threat hunting methodologies that every mature Security Operations Center (SOC) should understand and implement to fortify its defenses.

The Imperative of Proactive Threat Hunting

The cybersecurity arena is an incessant arms race. Adversaries are constantly innovating, employing sophisticated tactics to bypass conventional security controls. The alarming reality is that in many organizations, threats operate undetected for months, moving laterally, escalating privileges, and positioning themselves for maximum impact. The mean time to identify a breach has historically stretched to 181 days, according to some analyses. This extended dwell time provides attackers ample opportunity to exfiltrate sensitive data, sabotage operations, or establish long-term persistence within a network.

Threat hunting directly confronts this challenge. Instead of passively waiting for automated tools to trigger alerts, threat hunting involves a purposeful and structured search for evidence of malicious activities that have not yet triggered existing security systems. It operates on the critical assumption that compromise may have already occurred, and it’s the security team’s responsibility to actively unearth these hidden threats.

Why Threat Hunting Matters Now More Than Ever

The evolving threat landscape underscores the criticality of threat hunting:

- Sophisticated Attacks: Advanced threats often employ novel techniques that evade signature-based detection. Threat hunting, with its focus on behavior and anomalies, is better equipped to identify these stealthy operations.

- Reduced Dwell Time: By proactively seeking out threats, organizations can significantly reduce the time adversaries spend undetected within their networks, thereby minimizing the potential impact of a breach.

- Improved Security Posture: Regular threat hunts identify weaknesses in existing defenses, allowing security teams to refine their tools, processes, and detection rules.

- Bridging the Gap: Threat hunting fills the void left by automated defenses, addressing the “unknown unknowns” that traditional security systems were never designed to catch.

- Human-Centric Approach: While heavily reliant on automation and machine assistance, successful threat hunting remains a human-centric activity. Analysts leverage their expertise and critical thinking to formulate hypotheses and interpret complex data.

The Foundation of Effective Threat Hunting

Before diving into specific methodologies, it’s crucial to understand the foundational elements that underpin successful threat hunting:

Intelligence-Driven Approach

Threat hunting is significantly enhanced when informed by intelligence. This involves understanding adversary tactics, techniques, and procedures (TTPs), as well as indicators of compromise (IoCs). Threat intelligence provides context, helping hunters to prioritize their efforts and develop more accurate hypotheses.

Comprehensive Data Visibility

Effective threat hunting demands access to a rich and diverse set of telemetry and log data. Without comprehensive visibility into network activity, endpoint behavior, user actions, and cloud events, hunters operate in the dark. Key data sources include:

- Endpoint Telemetry: Process execution, file modifications, registry changes, command-line activity.

- Network Traffic: Packet captures (PCAP), NetFlow, DNS queries, encrypted traffic analysis.

- User Behavior Analytics (UBA): Anomalous login patterns, privileged account activity.

- Cloud & SaaS Logs: Logs from Azure, AWS, Google Cloud, and SaaS platforms.

- SIEM Data: Centralized log collection and correlation.

Skilled Analysts

While tools are essential, the human element is paramount. Skilled threat hunters possess a blend of technical expertise, analytical thinking, and an inquisitive mindset. They can formulate insightful hypotheses, interpret complex data patterns, and adapt their strategies based on new findings.

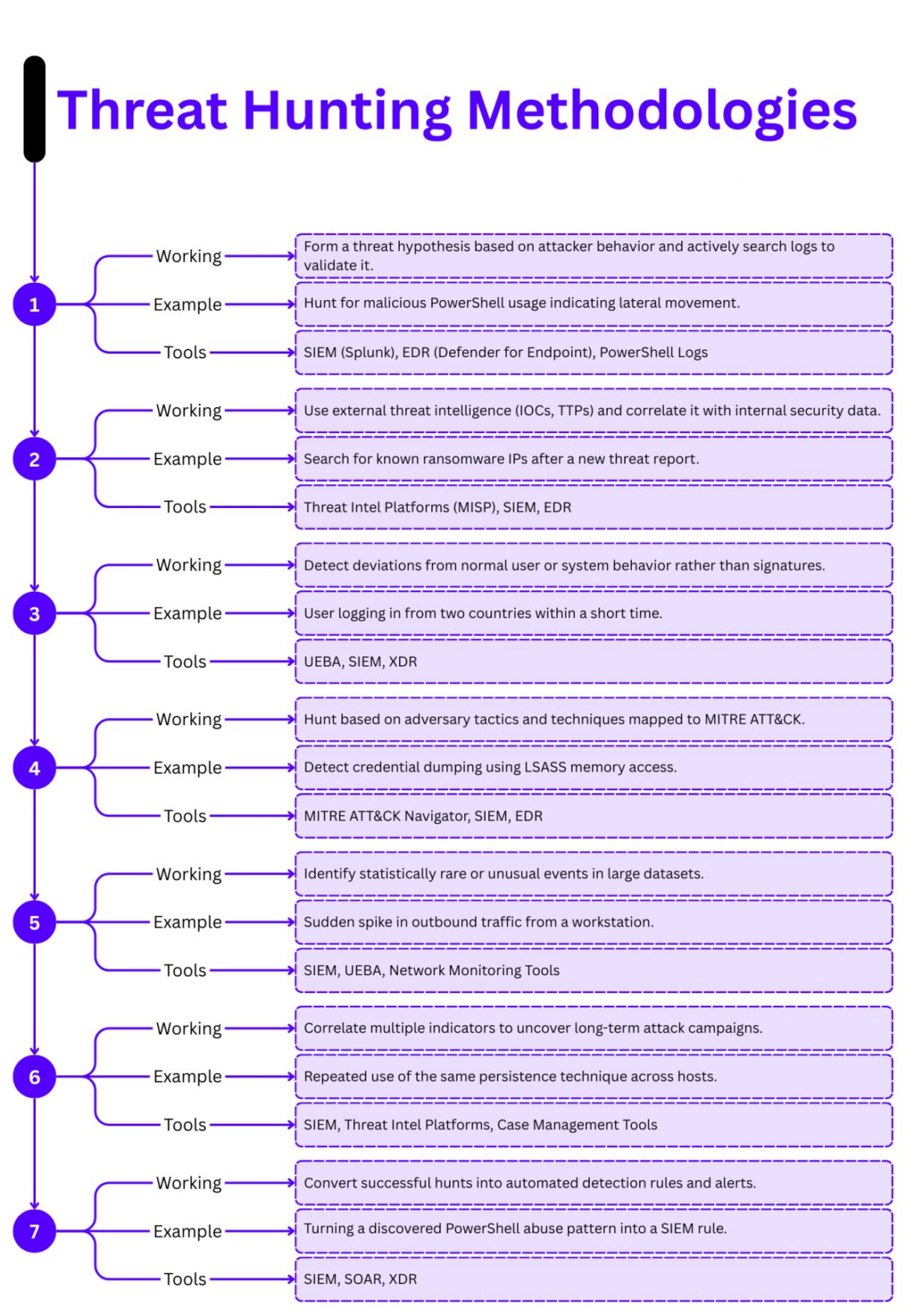

Seven Core Threat Hunting Methodologies

Each of the following methodologies offers a distinct approach to uncovering hidden threats, and a mature SOC often leverages a combination of these to build a robust threat hunting program.

1. Hypothesis-Driven Hunting

At its core, threat hunting is about asking questions and seeking answers. Hypothesis-driven hunting embodies this principle by starting with an assumption about potential malicious activity and then systematically searching for evidence to validate or refute it.

Working Principle

This methodology involves forming a specific threat hypothesis based on expected attacker behavior and then actively searching through logs and telemetry data to validate that hypothesis. The hypothesis acts as a guiding question, narrowing the scope of the investigation and focusing the hunter’s efforts. The initial hypothesis can be a general observation, such as noticing unusual network traffic, or a more specific supposition derived from external threat intelligence.

Example Scenario

A common hypothesis might stem from an understanding of how attackers often move laterally within a network. For instance, a hypothesis could be: “An attacker has gained initial access and is now using malicious PowerShell scripts to execute commands or establish persistence on other systems.”

Based on this, a hunter would specifically search for unusual or malicious PowerShell usage indicating lateral movement. This could involve looking for PowerShell execution from unexpected directories, suspicious command-line arguments, or connections made by PowerShell processes to internal systems.
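The search described above can be sketched in a few lines of Python. This is a minimal illustration, not a production query: the `ProcessEvent` fields, the `SUSPICIOUS_ARGS` pattern, and the `EXPECTED_DIRS` list are assumptions made for the example and would need to be mapped to whatever process-creation telemetry your EDR or SIEM actually exports.

```python
import re
from dataclasses import dataclass


@dataclass
class ProcessEvent:
    """Hypothetical process-creation record; field names are assumptions."""
    host: str
    image_path: str     # full path of the executable that ran
    command_line: str


# Illustrative argument patterns only; tune these to the hypothesis being tested.
SUSPICIOUS_ARGS = re.compile(
    r"-enc(odedcommand)?\b|-w(indowstyle)?\s+hidden|downloadstring|invoke-command",
    re.IGNORECASE,
)

# Directories where PowerShell is normally installed (assumed defaults).
EXPECTED_DIRS = (
    r"c:\windows\system32\windowspowershell",
    r"c:\windows\syswow64\windowspowershell",
    r"c:\program files\powershell",
)


def is_powershell(event):
    """True when the executable name is Windows PowerShell or PowerShell 7."""
    name = event.image_path.lower().rsplit("\\", 1)[-1]
    return name in ("powershell.exe", "pwsh.exe")


def hunt_powershell(events):
    """Return (event, reasons) pairs that support the hypothesis."""
    findings = []
    for event in events:
        if not is_powershell(event):
            continue
        reasons = []
        if not event.image_path.lower().startswith(EXPECTED_DIRS):
            reasons.append("unexpected directory")
        if SUSPICIOUS_ARGS.search(event.command_line):
            reasons.append("suspicious arguments")
        if reasons:
            findings.append((event, reasons))
    return findings
```

Each finding is a lead to investigate, not a verdict. An empty result is also informative: it either refutes the hypothesis or points to a gap in the telemetry.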

Key Tools & Data Sources

To effectively execute hypothesis-driven hunting, several tools and data sources are crucial:

- Security Information and Event Management (SIEM) systems (e.g., Splunk): For centralized log collection and analysis from various sources.

- Endpoint Detection and Response (EDR) solutions (e.g., Microsoft Defender for Endpoint): Provides detailed endpoint telemetry, including process execution, command-line arguments, and file system changes.

- PowerShell Logs: Crucial for detecting script execution and understanding what commands were run.

Benefits & Considerations

Hypothesis-driven hunting is highly effective because it focuses investigations, making them more efficient. It encourages a deeper understanding of adversary behaviors and can uncover novel attack techniques. However, it requires skilled analysts capable of formulating informed hypotheses and interpreting complex data.

2. Intelligence-Led Hunting

Informed by the latest threat intelligence, this methodology leverages external knowledge about adversaries to pinpoint potential threats within an organization’s environment. Threat intelligence provides the crucial context needed to move beyond generic searches and target specific threats.

Working Principle

Intelligence-led hunting involves using external threat intelligence, including Indicators of Compromise (IoCs) and Tactics, Techniques, and Procedures (TTPs), and correlating this information with internal security data. IoCs are typically atomic indicators like IP addresses, domains, or file hashes associated with known malicious activity. TTPs describe how adversaries operate, providing a more behavioral understanding of their actions.

Example Scenario

Imagine a new threat report detailing a ransomware campaign that uses specific command-and-control (C2) IP addresses. Once the report is released, an intelligence-led hunt would search internal network logs and endpoint telemetry for any connections to these known malicious IP addresses, including historical data that predates the report, since the campaign may have been active before it was published. Similarly, if intelligence suggests a particular APT group favors specific persistence mechanisms, hunters would actively search for those mechanisms within their environment.
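As a rough sketch of the IoC sweep in this scenario, the Python below matches connection records against a list of reported C2 addresses. The record layout (`timestamp`, `src_host`, `dest_ip`) is an assumption for illustration. In practice the indicators would come from a TIP export and the connections from firewall, proxy, or NetFlow logs.

```python
import ipaddress
from datetime import datetime


def normalize(ip):
    """Parse an IP string so formatting differences do not hide a match."""
    return ipaddress.ip_address(ip.strip())


def match_iocs(connections, ioc_ips):
    """Return connections to known-bad IPs, oldest first.

    connections: iterable of dicts with 'timestamp', 'src_host', 'dest_ip'
                 (hypothetical field names).
    ioc_ips:     iterable of IP address strings from a threat report.
    """
    bad = {normalize(ip) for ip in ioc_ips}
    hits = [c for c in connections if normalize(c["dest_ip"]) in bad]
    # The earliest hit hints at when the activity may have started.
    return sorted(hits, key=lambda c: c["timestamp"])
```

Sorting by timestamp puts the earliest contact first, which helps establish whether the activity began before the intelligence was published.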

Key Tools & Data Sources

Successful intelligence-led hunting relies on robust access to and integration of threat intelligence:

- Threat Intelligence Platforms (TIPs) (e.g., MISP): Centralize and manage various threat intelligence feeds, allowing for efficient searching and correlation.

- Security Information and Event Management (SIEM) systems: For ingesting and correlating internal log data with external threat intelligence.

- Endpoint Detection and Response (EDR) solutions: Provide endpoint context for IoCs like file hashes and suspicious processes.

Benefits & Considerations

This methodology is highly effective for detecting known threats and understanding the operational landscape. It allows organizations to prioritize their hunting efforts based on current and relevant threats. The primary challenge lies in effectively integrating and operationalizing diverse threat intelligence feeds, ensuring they are timely and accurate.

3. Behavioral / Anomaly-Based Hunting

Moving beyond static signatures, behavioral or anomaly-based hunting focuses on detecting deviations from established baselines of normal user and system behavior. This approach is particularly effective at catching stealthy attackers and insider threats who might leverage legitimate tools and accounts.

Working Principle

This methodology works by establishing profiles of normal behavior for users, applications, and network entities within an environment. Any significant deviation from these baselines is flagged as an anomaly, prompting further investigation. Instead of searching for “known bad,” it searches for the unusual, whether or not the activity matches anything seen before.

Example Scenario

Consider a user who typically logs in from a specific geographic region and during regular business hours. An anomaly-based hunt would detect if the same user suddenly logs in from two widely disparate countries within a short time frame, or attempts to access sensitive data outside their usual working hours. This could indicate compromised credentials or an insider threat. Other examples include unusual data exfiltration volumes, access to rarely used servers, or abnormal process execution chains.
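The two-countries example is often called an "impossible travel" check. A minimal Python sketch follows, assuming each login has already been geolocated to a latitude and longitude. The tuple layout and both thresholds (`MAX_SPEED_KMH`, `MIN_DISTANCE_KM`) are illustrative assumptions, not recommended values.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
MAX_SPEED_KMH = 900.0    # assumed threshold, roughly airliner cruising speed
MIN_DISTANCE_KM = 100.0  # assumed floor to ignore geolocation jitter


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def impossible_travel(logins, max_speed_kmh=MAX_SPEED_KMH):
    """Flag consecutive logins by one user that imply implausible speed.

    logins: iterable of (user, timestamp, latitude, longitude) tuples.
    Returns a list of (user, first_time, second_time, distance_km).
    """
    by_user = {}
    for login in sorted(logins, key=lambda row: row[1]):
        by_user.setdefault(login[0], []).append(login)

    flagged = []
    for user, rows in by_user.items():
        for prev, cur in zip(rows, rows[1:]):
            km = haversine_km(prev[2], prev[3], cur[2], cur[3])
            if km < MIN_DISTANCE_KM:
                continue
            hours = (cur[1] - prev[1]).total_seconds() / 3600
            if hours == 0 or km / hours > max_speed_kmh:
                flagged.append((user, prev[1], cur[1], round(km)))
    return flagged
```

VPN exit nodes and mobile carriers routinely produce benign hits for a check like this, which is one source of the false positives discussed below.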

Key Tools & Data Sources

To build and monitor behavioral baselines, specialized tools are often employed:

- User and Entity Behavior Analytics (UEBA) solutions: Specifically designed to profile user and entity behavior, identify anomalies, and detect insider threats.

- Security Information and Event Management (SIEM) systems: For collecting and analyzing a wide range of logs to establish behavioral baselines.

- Extended Detection and Response (XDR) platforms: Offer broader visibility across multiple security layers, enhancing anomaly detection.

Benefits & Considerations

Behavioral hunting excels at detecting zero-day attacks, insider threats, and sophisticated adversaries who “live off the land” using legitimate tools. It’s more resilient to evasion techniques than signature-based detection. However, it can generate a higher volume of false positives initially as baselines are established and refined, requiring careful tuning and analysis.

4. MITRE ATT&CK–Mapped Hunting

The MITRE ATT&CK framework has revolutionized how security professionals categorize and understand adversary tactics and techniques. MITRE ATT&CK-mapped hunting directly leverages this knowledge base to guide hunting efforts.

Working Principle

This methodology involves hunting specifically for adversary tactics and techniques outlined in the MITRE ATT&CK framework. Instead of focusing on specific IoCs, hunters focus on how attackers achieve their objectives, such as credential access, lateral movement, or persistence. This allows for a more comprehensive and proactive approach to detecting adversary behavior, even if the specific tools or IoCs change.

Example Scenario

A hunter might choose to focus on the “OS Credential Dumping” technique (T1003) within the MITRE ATT&CK framework, and in particular on its LSASS Memory sub-technique (T1003.001). This would lead them to look for activities associated with credential theft, such as suspicious access to the Local Security Authority Subsystem Service (LSASS) memory. They would search for processes attempting to access LSASS, specific command-line parameters used by tools like Mimikatz, or anomalous process injections into LSASS. Other examples include hunting for lateral movement over SMB/Windows admin shares (T1021.002), which tools like PsExec rely on, or specific persistence mechanisms.
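One way to organize such hunts is a table that maps ATT&CK technique IDs to small check functions. The Python below is a sketch under stated assumptions: the event dictionaries, their field names, and the allow-list are hypothetical, and the PSEXESVC service check is only a common PsExec artifact used here as a proxy for admin-share lateral movement.

```python
# Processes assumed to read LSASS legitimately in this environment (hypothetical).
ALLOWED_LSASS_READERS = {"msmpeng.exe"}


def basename(path):
    """Lower-cased file name of a Windows path."""
    return path.lower().rsplit("\\", 1)[-1]


def lsass_access(event):
    """A process outside the allow-list opened a handle to lsass.exe."""
    return (
        event.get("type") == "process_access"
        and basename(event.get("target_image", "")) == "lsass.exe"
        and basename(event.get("source_image", "")) not in ALLOWED_LSASS_READERS
    )


def mimikatz_cmdline(event):
    """Command line contains the Mimikatz 'sekurlsa::' module prefix."""
    return "sekurlsa::" in event.get("command_line", "").lower()


def psexec_service(event):
    """Installation of the service that PsExec creates on a remote host."""
    return (
        event.get("type") == "service_install"
        and event.get("service_name", "").lower() == "psexesvc"
    )


# Technique ID -> checks that count as evidence of it.
TECHNIQUE_CHECKS = {
    "T1003.001": [lsass_access, mimikatz_cmdline],  # OS Credential Dumping: LSASS Memory
    "T1021.002": [psexec_service],                  # Remote Services: SMB/Windows Admin Shares
}


def map_to_attack(events):
    """Group events by the ATT&CK technique they may indicate."""
    findings = {technique: [] for technique in TECHNIQUE_CHECKS}
    for event in events:
        for technique, checks in TECHNIQUE_CHECKS.items():
            if any(check(event) for check in checks):
                findings[technique].append(event)
    return findings
```

Keeping the mapping in one table makes coverage visible: any technique with no checks behind it is a gap that can be prioritized in a tool such as ATT&CK Navigator.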

Key Tools & Data Sources

Leveraging the MITRE ATT&CK framework requires integrated tools and a deep understanding of its structure:

- MITRE ATT&CK Navigator: A tool that helps visualize and track adversary techniques, making it easier to plan and execute ATT&CK-aligned hunts.

- Security Information and Event Management (SIEM) systems: For correlating events with ATT&CK techniques.

- Endpoint Detection and Response (EDR) solutions: Provide the granular endpoint telemetry needed to detect specific ATT&CK techniques.

Benefits & Considerations

MITRE ATT&CK-mapped hunting provides a standardized, globally recognized framework for understanding and detecting adversary behavior. It improves communication within security teams, helps prioritize hunting efforts, and can uncover sophisticated attacks. The challenge lies in mapping internal telemetry to specific ATT&CK techniques, which requires significant effort in detection engineering.

5. Statistical / Outlier Analysis

Sometimes, the most telling signs of compromise are events that simply “don’t fit.” Statistical and outlier analysis are powerful techniques for surfacing these rare or abnormal occurrences in vast datasets.

Working Principle

This methodology aims to identify statistically rare or unusual events within large datasets. By applying statistical methods, hunters can quantify the “normal” range of activity and automatically flag anything that falls outside of it. This can be based on frequency, volume, duration, or other numerical attributes of events.

Example Scenario

A classic example of statistical analysis in threat hunting involves network traffic. A sudden, significant spike in outbound traffic from a workstation that typically has low external communication could indicate data exfiltration. Similarly, an unusually high number of login failures from a single IP address in a short period might point to a brute-force attack. Other outliers could include processes running for an abnormally long time, or an unexpected volume of file modifications.
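The outbound-traffic example can be sketched with a robust outlier score. The Python below uses the modified z-score, which is based on the median and the median absolute deviation (MAD), so a single past spike does not distort the baseline. The input layout, the minimum history length, and the 3.5 cutoff (a commonly cited rule of thumb) are assumptions for illustration.

```python
import statistics


def modified_z_score(value, history):
    """Modified z-score of value against history, using median and MAD."""
    median = statistics.median(history)
    mad = statistics.median([abs(x - median) for x in history])
    if mad == 0:
        # Perfectly flat history: any change at all is an outlier.
        return 0.0 if value == median else float("inf")
    return 0.6745 * (value - median) / mad


def flag_hosts(history_by_host, today_by_host, threshold=3.5, min_history=5):
    """Return {host: score} for hosts whose volume today is unusually high.

    history_by_host: {host: [daily outbound bytes, ...]} (hypothetical layout)
    today_by_host:   {host: outbound bytes today}
    """
    flagged = {}
    for host, today in today_by_host.items():
        history = history_by_host.get(host, [])
        if len(history) < min_history:
            continue  # too little data to define "normal"
        score = modified_z_score(today, history)
        if score > threshold:
            flagged[host] = score
    return flagged
```

Only positive scores are flagged because the scenario concerns spikes. A hunt for hosts that suddenly go quiet would compare the absolute value instead.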

Key Tools & Data Sources

Statistical and outlier analysis often requires specialized capabilities within security tools:

- Security Information and Event Management (SIEM) systems: Many SIEMs have built-in statistical analysis capabilities or can integrate with external analytics platforms.

- User and Entity Behavior Analytics (UEBA) solutions: As mentioned, UEBA tools are strong in identifying behavioral outliers.

- Network Monitoring Tools: Provide detailed insights into network traffic patterns, volumes, and connections.

Benefits & Considerations

This approach is highly effective at identifying previously unknown threats that manifest as statistical anomalies. It can be applied across various data types and doesn’t require prior knowledge of attack signatures. However, like behavioral hunting, it can generate false positives if baselines are not accurately established or if legitimate, but unusual, events occur. It also requires a strong understanding of data analytics.

6. Campaign / Correlation Hunting

Attackers often operate in campaigns, employing a series of related activities over time to achieve their objectives. Campaign or correlation hunting focuses on connecting these seemingly disparate events to reveal the larger picture of an intrusion.

Working Principle

This methodology involves correlating multiple weak signals or seemingly isolated indicators across different systems and timeframes to uncover long-term attack campaigns. Instead of looking for a single “smoking gun,” hunters piece together a mosaic of suspicious activities that, individually, might not trigger an alert.

Example Scenario

Consider an attacker attempting to establish persistence. They might use the same persistence technique (e.g., a specific registry run key) across multiple hosts within an organization. Individually, one instance might be overlooked. However, a campaign hunt would connect the repeated use of this specific persistence technique across numerous endpoints, revealing a coordinated intrusion attempt. Other examples include correlating a phishing email campaign with subsequent beaconing to a specific C2 server, or linking a series of seemingly unrelated brute-force attempts to a single adversary.
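The run-key example boils down to grouping weak signals by a shared artifact and counting distinct hosts. The Python below is a minimal sketch. The event fields (`host`, `key_path`, `value`), the `known_good` allow-list, and the `min_hosts` cutoff are assumptions made for the example.

```python
from collections import defaultdict


def normalize_value(value):
    """Lower-case and collapse whitespace so trivial variations still group."""
    return " ".join(value.lower().split())


def correlate_persistence(events, min_hosts=3, known_good=frozenset()):
    """Cluster registry persistence events that repeat across hosts.

    events:     iterable of dicts with 'host', 'key_path', 'value'
                (hypothetical field names).
    known_good: normalized values to ignore, such as approved software.
    Returns [((key_path, value), [hosts...]), ...], largest cluster first.
    """
    hosts_by_artifact = defaultdict(set)
    for event in events:
        value = normalize_value(event["value"])
        if value in known_good:
            continue
        hosts_by_artifact[(event["key_path"].lower(), value)].add(event["host"])

    clusters = [
        (artifact, sorted(hosts))
        for artifact, hosts in hosts_by_artifact.items()
        if len(hosts) >= min_hosts
    ]
    return sorted(clusters, key=lambda cluster: len(cluster[1]), reverse=True)
```

Legitimate software also writes the same run key on many machines, so the allow-list matters. What remains after filtering is a candidate campaign to examine alongside other signals such as phishing deliveries or C2 beaconing.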

Key Tools & Data Sources

Connecting the dots across broad datasets is crucial for campaign hunting:

- Security Information and Event Management (SIEM) systems: Essential for centralizing and correlating events from diverse sources over time.

- Threat Intelligence Platforms: Help provide context for observed activities, linking them to known campaigns or adversary groups.

- Case Management Tools: Critical for organizing findings, linking investigations, and documenting the progression of an intrusion.

Benefits & Considerations

Campaign hunting provides a holistic view of adversary operations, allowing responders to understand the full scope of a breach rather than dealing with isolated incidents. It helps in proactively identifying the various stages of an attack chain. This methodology requires significant analytical skill and robust data correlation capabilities, as well as the ability to maintain context over extended periods.

7. Detection Engineering Feedback Loop

Threat hunting isn’t just about finding threats; it’s also about strengthening defenses. The detection engineering feedback loop ensures that successful hunts lead to continuous improvement in automated detection capabilities.

Working Principle

This methodology involves converting successful threat hunts into automated detection rules and alerts. When a hunter uncovers a novel or previously undetected malicious activity pattern, that knowledge is used to refine existing security controls or create new ones, reducing the likelihood of future similar attacks slipping through. This closes the loop between proactive hunting and reactive detection, making the security posture stronger over time.

Example Scenario

If a hunter successfully identifies a specific abuse pattern of PowerShell (e.g., a particular sequence of commands used for privilege escalation) that was not previously detected by automated systems, they would then convert this discovered pattern into a new SIEM rule or a signature for an EDR solution. This new rule would automatically alert security teams if the same PowerShell abuse pattern is observed in the future. Similarly, a discovered lateral movement technique could be translated into a custom detection within an XDR platform.
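One way to make this conversion repeatable is to treat the rule as data and validate it against samples before deployment. The Python below is a sketch, not any vendor's rule format: `DetectionRule`, `validate`, and the example rule `ENCODED_HIDDEN_PS` (including its exclusion path) are all hypothetical names invented for illustration.

```python
import re
from dataclasses import dataclass, field


@dataclass
class DetectionRule:
    """Hypothetical rule: every all_of pattern must match, no none_of may."""
    name: str
    all_of: list
    none_of: list = field(default_factory=list)

    def matches(self, command_line):
        def found(pattern):
            return re.search(pattern, command_line, re.IGNORECASE) is not None

        return all(found(p) for p in self.all_of) and not any(found(p) for p in self.none_of)


def validate(rule, malicious_samples, benign_samples):
    """Replay samples through a rule and report misses and false positives."""
    missed = [s for s in malicious_samples if not rule.matches(s)]
    false_positives = [s for s in benign_samples if rule.matches(s)]
    return {
        "missed": missed,
        "false_positives": false_positives,
        "ready": not missed and not false_positives,
    }


# Example rule derived from an imagined hunt finding: encoded PowerShell
# launched in a hidden window, excluding an assumed deployment share.
ENCODED_HIDDEN_PS = DetectionRule(
    name="powershell_encoded_hidden_window",
    all_of=[r"powershell", r"-enc(odedcommand)?\s", r"-w(indowstyle)?\s+hidden"],
    none_of=[r"\\corp-deploy\\"],
)
```

Running `validate` with command lines captured during the hunt as malicious samples and routine administrative commands as benign samples gives a simple gate: a rule is promoted to the SIEM or EDR only when `ready` is true.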

Key Tools & Data Sources

This methodology requires tools that facilitate the creation and deployment of detection rules:

- Security Information and Event Management (SIEM) systems: For deploying new correlation rules and alerts.

- Security Orchestration, Automation, and Response (SOAR) platforms: Can automate the process of creating and deploying detection rules based on hunting findings.

- Extended Detection and Response (XDR) platforms: Offer capabilities to create custom detections and integrate them directly into the detection engine.

Benefits & Considerations

This feedback loop is critical for continuous improvement and enhancing the overall security posture. It transforms hunting from a one-off manual exercise into a process that actively strengthens automated defenses. It also reduces the need for manual hunting of previously discovered threats, freeing up hunters for more complex investigations. The challenge lies in ensuring that newly created detection rules are accurate, efficient, and don’t generate excessive false positives, which requires careful testing and validation.

Building a Mature Threat Hunting Program

Implementing these methodologies requires more than just tools; it demands a cultural shift towards a proactive mindset and a commitment to continuous improvement.

Essential Components of a Mature Program

- Proactive Mindset: Security teams must embrace the assumption of compromise and actively seek out threats rather than waiting for alerts.

- Deep Telemetry Visibility: Comprehensive data collection from endpoints, networks, cloud environments, and user activity is non-negotiable.

- Skilled Analysts: Investment in training and developing threat hunting skills is crucial. This includes understanding adversary TTPs, data analysis, and forensic investigation techniques.

- Framework Alignment: Leveraging frameworks like MITRE ATT&CK provides structure and a common language for understanding and combating adversary behavior.

- Continuous Improvement Loop: Successful hunts must translate into improved automated detections, refining the security posture iteratively.

- Integration of Threat Intelligence: Timely and relevant threat intelligence is the fuel that powers effective threat hunting, providing context and direction.

Threat hunting is a human-centric activity that pushes the boundaries of automated detection methods. While 73% of organizations have adopted a defined threat hunting framework, only 38% actually follow it. The real value comes from consistent application and refinement.

The ability to turn threat intelligence into actionable detection and hunting strategies is a defining advantage for modern SOC analysts. By contextualizing, correlating, and operationalizing intelligence, security teams can consistently outpace advanced threats.

Final Thoughts: The Discipline of Cybersecurity Resilience

In the ceaseless battle against cyber adversaries, resting on the laurels of automated defenses is a precarious strategy. Threat hunting is the active pursuit of what’s hidden, the deliberate uncovering of what’s missed, and the continuous strengthening of our digital fortresses. By embracing hypothesis-driven exploration, intelligence-led targeting, behavioral anomaly detection, MITRE ATT&CK-mapped strategies, statistical outlier analysis, campaign correlation, and a robust detection engineering feedback loop, organizations can evolve their security operations from reactive to truly proactive. It’s a journey from simply responding to what is seen, to actively seeking out what might be unseen.

Threat hunting is the discipline that allows organizations to stay one step ahead, to reduce the “dwell time” of adversaries, and to build genuine cybersecurity resilience. It’s about empowering security teams with the knowledge and tools to not just protect, but to proactively defend.

Is your organization ready to elevate its threat hunting capabilities and fortify its defenses against the most sophisticated threats?

At IoT Worlds, we specialize in helping organizations implement advanced threat hunting methodologies, integrate cutting-edge security technologies, and train their teams to proactively detect and neutralize elusive cyber threats. From developing bespoke threat hunting playbooks to optimizing your SIEM and XDR platforms for deeper visibility, our experts are here to guide you.

Don’t let hidden threats compromise your security posture. Take the proactive step towards a more secure future today.

Email us to learn more about how we can help you build a world-class threat hunting program and transform your security operations: info@iotworlds.com