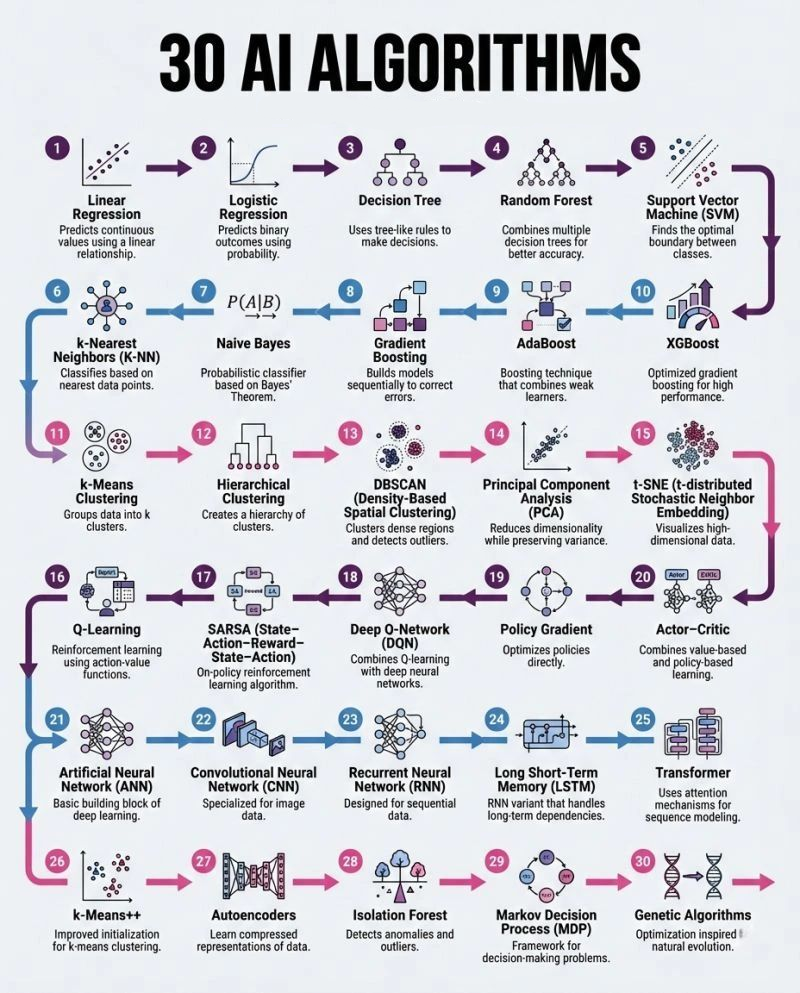

The Algorithmic Backbone of Artificial Intelligence

In the rapidly evolving landscape of artificial intelligence, understanding the foundational algorithms is paramount. While tools change and frameworks evolve, the core algorithms remain the enduring pillars upon which AI innovation is built. This comprehensive guide delves into 30 essential AI algorithms, offering a structured map to navigate the diverse world of machine learning, from supervised and unsupervised learning to reinforcement learning, deep learning architectures, and optimization techniques. Mastering these algorithms gives you a solid grasp of the applied AI foundations that power everything from predictive analytics to autonomous systems.

Supervised Learning: Learning from Labeled Data

Supervised learning is a cornerstone of AI, where algorithms learn from labeled datasets – data tagged with the correct output. The goal is to establish a mapping from inputs to outputs, enabling accurate predictions on new, unseen data. This category forms the backbone of many real-world AI breakthroughs and is often the starting point for building useful AI systems.

Regression Algorithms: Predicting Continuous Values

Regression algorithms are designed to predict continuous numerical outcomes. They establish a relationship between input variables and a continuous output, minimizing the discrepancies between predicted and actual values.

Linear Regression

Linear Regression is a fundamental algorithm that predicts continuous values by establishing a linear relationship between input features and the target variable. It models the relationship as a straight line, aiming to find the best-fit line that minimizes the sum of squared differences between observed and predicted values.

How it works: Imagine plotting data points on a graph; linear regression tries to draw a straight line that best represents the trend of these points. This line is defined by the equation $Y = mX + c$, where $Y$ is the predicted output, $X$ is the input feature, $m$ is the slope, and $c$ is the intercept. For multiple input features, the equation extends to $Y = b_0 + b_1 X_1 + b_2 X_2 + \dots + b_n X_n$.
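As a rough illustration of the single-feature case, the best-fit slope and intercept can be computed in closed form with ordinary least squares. The function name `fit_line` and the sample data are made up for this sketch; they are not from any particular library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (m, c) for y = m*x + c."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    m = sxy / sxx
    # The best-fit line always passes through the point of means
    c = mean_y - m * mean_x
    return m, c


# Hypothetical data that lies exactly on y = 2x + 1
sizes = [1.0, 2.0, 3.0, 4.0]
prices = [3.0, 5.0, 7.0, 9.0]
m, c = fit_line(sizes, prices)
predicted = m * 5.0 + c  # prediction for an unseen input x = 5
```

With noisy real-world data the points would not lie exactly on the line, and the same formulas would return the line with the smallest sum of squared errors.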

Use cases: Predicting house prices based on size, forecasting stock prices, or estimating sales based on advertising spend.

Classification Algorithms: Predicting Discrete Categories

Classification algorithms are used when the output variable is categorical, meaning they predict discrete categories or classes.

Logistic Regression

Despite its name, Logistic Regression is a classification algorithm. It predicts the probability of a binary outcome (e.g., yes/no, true/false) by fitting data to a logistic function.

How it works: Instead of predicting the value directly, it predicts the probability of an instance belonging to a particular class. It uses a sigmoid function to map predictions into a probability ranging between 0 and 1. If the probability is above a certain threshold (e.g., 0.5), it classifies the instance into one class; otherwise, it classifies it into the other.
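A minimal sketch of the prediction step, assuming the weights and bias have already been learned (training is not shown). The function names and the example weights are illustrative only.

```python
import math


def sigmoid(z):
    """Map any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(features, weights, bias):
    """Probability that the instance belongs to the positive class."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def classify(features, weights, bias, threshold=0.5):
    """Return 1 if the probability reaches the threshold, otherwise 0."""
    return 1 if predict_proba(features, weights, bias) >= threshold else 0


# Hypothetical one-feature model with weight 1.0 and bias 0.0
label_high = classify([2.0], [1.0], 0.0)
label_low = classify([-2.0], [1.0], 0.0)
```

Raising or lowering `threshold` trades false positives against false negatives, which matters in settings such as medical diagnosis.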

Use cases: Spam detection, medical diagnosis (e.g., presence of a disease), customer churn prediction.

Decision Trees

Decision Trees use a tree-like structure of rules to make decisions. They partition the data into subsets based on feature values, creating a tree where each internal node represents a test on an attribute, each branch represents an outcome of the test, and each leaf node represents a class label.

How it works: The algorithm greedily selects the best attribute to split the data at each node, aiming to create the purest possible child nodes (nodes where instances mostly belong to one class).
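One way to make "purest possible child nodes" concrete is Gini impurity, which is a common choice but only one of several purity measures. The sketch below scores every candidate threshold on a single numeric feature; the helper names and toy data are assumptions made for illustration.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 for a pure node, higher for mixed nodes."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_threshold(values, labels):
    """Return (threshold, weighted_gini) for the best split 'value <= threshold'."""
    best = (None, float("inf"))
    n = len(values)
    # Skip the largest value: splitting there would leave the right child empty
    for t in sorted(set(values))[:-1]:
        left = [y for x, y in zip(values, labels) if x <= t]
        right = [y for x, y in zip(values, labels) if x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / n
        if score < best[1]:
            best = (t, score)
    return best


# Toy feature where values up to 3 are class "a" and the rest are class "b"
threshold, impurity = best_threshold([1, 2, 3, 10, 11, 12], ["a", "a", "a", "b", "b", "b"])
```

A full tree would apply this search to every feature, pick the best one, and recurse on each child until a stopping rule is met.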

Use cases: Customer segmentation, risk assessment, predicting loan defaults.

Random Forest

Random Forest improves upon single decision trees by combining many decision trees to achieve better accuracy and reduce overfitting.

How it works: It constructs a multitude of decision trees during training and outputs the class that is the mode of the classes (classification) or mean prediction (regression) of the individual trees. Each tree in the forest is built from a random subset of the training data and a random subset of features.

Use cases: Image classification, medical diagnosis, stock market prediction.

Support Vector Machine (SVM)

Support Vector Machine (SVM) is a powerful algorithm for classification that finds the optimal hyperplane (a decision boundary) that best separates different classes in the feature space.

How it works: SVM aims to maximize the margin between the classes, meaning it finds the hyperplane that has the largest distance to the nearest training data point of any class. These closest points are called “support vectors.”

Use cases: Face detection, text categorization, handwritten digit recognition.

K-Nearest Neighbors (K-NN)

K-Nearest Neighbors (K-NN) is a non-parametric, instance-based learning algorithm that classifies new data points based on the majority class of their ‘k’ nearest neighbors in the feature space.

How it works: When a new data point needs to be classified, K-NN looks at the ‘k’ closest data points in the training set. The new data point is then assigned the class that is most common among these ‘k’ neighbors.
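The lookup-and-vote procedure can be sketched in a few lines. This version assumes Euclidean distance and numeric feature tuples; the function name and sample points are hypothetical.

```python
import math
from collections import Counter


def knn_classify(train_points, train_labels, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Pair every training point's distance to the query with its label
    distances = sorted(
        ((math.dist(p, query), label) for p, label in zip(train_points, train_labels)),
        key=lambda pair: pair[0],
    )
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Two well-separated toy groups
points = [(0, 0), (0, 1), (1, 0), (5, 5), (6, 5), (5, 6)]
labels = ["a", "a", "a", "b", "b", "b"]
near_origin = knn_classify(points, labels, (0.5, 0.5))
near_far_group = knn_classify(points, labels, (5.5, 5.5))
```

Because every prediction scans the whole training set, this brute-force form gets slow on large datasets; practical implementations usually rely on spatial indexes.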

Use cases: Recommendation systems, anomaly detection, pattern recognition.

Naive Bayes

Naive Bayes is a probabilistic classifier based on Bayes’ Theorem that assumes features are conditionally independent given the class. It is fast to train and scales well to large, high-dimensional datasets such as text.

How it works: It calculates the probability of a given data point belonging to a particular class based on the probabilities of its individual features occurring within that class, assuming that all features contribute independently to the probability.

Use cases: Spam filtering, sentiment analysis, document classification.

Boosting Algorithms: Enhancing Model Performance

Boosting algorithms are ensemble methods that sequentially build models to correct the errors of previous models, leading to a strong learner from a series of weak learners.

Gradient Boosting

Gradient Boosting builds models sequentially, with each new model attempting to correct the errors made by the previous ones. It focuses on minimizing the loss function (the difference between predicted and actual values).

How it works: It iteratively adds new models to the ensemble, each one trained to predict the “residuals” or errors of the current ensemble (more generally, the negative gradient of the loss function). The predictions of all the models are then summed, usually scaled by a learning rate, to get the final prediction.
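The residual-fitting loop might look like the following for a single numeric feature. This is a sketch under several assumptions: squared-error loss, one-split regression "stumps" as the weak learners, and a fixed learning rate. None of the names come from a real library.

```python
def fit_stump(xs, residuals):
    """Least-squares regression stump: one threshold, one constant per side."""
    best = None
    for t in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left) + sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, left_mean, right_mean)
    _, t, left_mean, right_mean = best
    return lambda x: left_mean if x <= t else right_mean


def gradient_boost(xs, ys, n_rounds=50, learning_rate=0.1):
    """Return a prediction function built from sequentially fitted stumps."""
    base = sum(ys) / len(ys)  # start from the mean prediction
    stumps = []
    preds = [base] * len(xs)
    for _ in range(n_rounds):
        # Each new stump is trained on what the ensemble still gets wrong
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for p, x in zip(preds, xs)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)


# Toy step function: low inputs map to 1, high inputs map to 5
model = gradient_boost([1, 2, 3, 4, 5, 6], [1, 1, 1, 5, 5, 5])
```

The small learning rate means each stump corrects only part of the remaining error, which is why many rounds are needed.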

Use cases: Predictive analytics, forecasting, ranking search results.

AdaBoost (Adaptive Boosting)

AdaBoost is a boosting technique that combines multiple “weak learners” (often simple decision trees) to create a strong learner. It assigns higher weights to misclassified samples, forcing subsequent learners to focus on those difficult cases.

How it works: Initially, all data points have equal weights. After each iteration, the weights of misclassified data points are increased, and a new weak learner is trained on this re-weighted data. This process continues, and the final prediction is a weighted sum of all weak learners.
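A single re-weighting round can be sketched as below, assuming the classic binary formulation with labels and predictions in {-1, +1} and weights that sum to 1. The function name is made up for this example.

```python
import math


def adaboost_round(weights, predictions, labels):
    """One AdaBoost step: returns (learner_weight, new_sample_weights)."""
    # Weighted error of the current weak learner
    error = sum(w for w, p, y in zip(weights, predictions, labels) if p != y)
    error = min(max(error, 1e-10), 1 - 1e-10)  # guard against log(0) and division by zero
    # Learners with lower error get a larger say in the final vote
    alpha = 0.5 * math.log((1 - error) / error)
    # Misclassified samples (y * p == -1) are scaled up, correct ones scaled down
    new_weights = [w * math.exp(-alpha * y * p) for w, p, y in zip(weights, predictions, labels)]
    total = sum(new_weights)
    return alpha, [w / total for w in new_weights]


# Four equally weighted samples; the weak learner gets only the last one wrong
alpha, updated = adaboost_round([0.25] * 4, [1, 1, -1, 1], [1, 1, -1, -1])
```

In this toy round the single misclassified sample ends up carrying half of the total weight, so the next weak learner is pushed to get it right.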

Use cases: Face detection, anomaly detection, bioinformatics.

XGBoost (Extreme Gradient Boosting)

XGBoost is an optimized and highly efficient gradient boosting framework designed for speed and performance. It’s a popular choice for structured data problems.

How it works: It incorporates regularization techniques (L1 and L2) to prevent overfitting, handles sparse data, and uses parallel processing, making it significantly faster and more robust than traditional gradient boosting implementations.

Use cases: Winning solutions in machine learning competitions, ad click-through rate prediction, customer behavior analysis.

Unsupervised Learning: Discovering Hidden Patterns

Unsupervised learning deals with unlabeled data, where the algorithm must discover patterns or inherent structures within the data on its own, without explicit guidance. This category is crucial for tasks like data exploration, customer segmentation, and anomaly detection.

Clustering Algorithms: Grouping Similar Data

Clustering algorithms aim to group similar data points together into clusters, where data points within a cluster are more similar to each other than to those in other clusters.

k-Means Clustering

k-Means Clustering groups data into ‘k’ distinct clusters. It’s an iterative algorithm that partitions observations into ‘k’ clusters in which each observation belongs to the cluster with the nearest mean (centroid).

How it works:

1. Initialize ‘k’ centroids randomly.

2. Assign each data point to its closest centroid.

3. Recalculate the position of each centroid based on the mean of all data points assigned to that cluster.

4. Repeat steps 2 and 3 until the centroids no longer move significantly or a maximum number of iterations is reached.
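The steps above can be sketched in plain Python. This version assumes Euclidean distance and points given as tuples; the optional `initial_centroids` argument is an addition for reproducible demos and is not part of the basic algorithm.

```python
import math
import random


def k_means(points, k, max_iters=100, seed=0, initial_centroids=None):
    """Return (centroids, clusters) after iterating assignment and update steps."""
    rng = random.Random(seed)
    # Initialization: random centroids unless explicit ones are supplied
    centroids = list(initial_centroids) if initial_centroids else rng.sample(points, k)
    for _ in range(max_iters):
        # Assignment: each point joins the cluster of its closest centroid
        clusters = [[] for _ in range(k)]
        for p in points:
            idx = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[idx].append(p)
        # Update: move each centroid to the mean of its assigned points
        new_centroids = []
        for i, cluster in enumerate(clusters):
            if cluster:
                new_centroids.append(tuple(sum(c) / len(cluster) for c in zip(*cluster)))
            else:
                new_centroids.append(centroids[i])  # keep the centroid of an empty cluster
        # Stop once the centroids no longer move
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return centroids, clusters


# Two obvious toy groups, seeded with one starting centroid in each
data = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
centroids, clusters = k_means(data, 2, initial_centroids=[(0, 0), (10, 10)])
```

The result depends on where the centroids start, so in practice the algorithm is often run several times with different random initializations.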

Use cases: Customer segmentation, image compression, document clustering.

k-Means++

k-Means++ is an improved initialization technique for k-Means clustering, designed to select initial cluster centroids that are spread out, leading to faster convergence and better quality clusters.

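How it works: Instead of choosing all centroids uniformly at random, k-Means++ picks the first centroid at random and then chooses each subsequent centroid with probability proportional to its squared distance from the nearest centroid already chosen. This tends to spread the initial centroids apart, reducing the likelihood of suboptimal clustering results.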
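A minimal sketch of this seeding step, assuming Euclidean distance and distinct input points. The function name and `seed` parameter are illustrative; the chosen centroids would then be handed to ordinary k-Means.

```python
import math
import random


def kmeans_pp_init(points, k, seed=0):
    """Pick k initial centroids, favoring points far from those already chosen."""
    rng = random.Random(seed)
    centroids = [rng.choice(points)]  # first centroid: uniformly at random
    while len(centroids) < k:
        # Squared distance from every point to its nearest chosen centroid
        d2 = [min(math.dist(p, c) ** 2 for c in centroids) for p in points]
        # Sample the next centroid with probability proportional to that distance;
        # already-chosen points have weight 0 and cannot be picked again
        centroids.append(rng.choices(points, weights=d2, k=1)[0])
    return centroids


sample_points = [(0, 0), (0, 1), (10, 10), (10, 11)]
seeds = kmeans_pp_init(sample_points, 3)
```

The selection is still random, so distant points are only more likely to be picked, not guaranteed.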

Use cases: Any scenario where k-Means clustering is applied, particularly for preventing poor initializations.

Hierarchical Clustering

Hierarchical Clustering creates a hierarchy of clusters, either by starting with individual data points and merging them (agglomerative) or by starting with one large cluster and splitting it (divisive).

How it works: Agglomerative hierarchical clustering starts by considering each data point as its own cluster. Then, it iteratively merges the two closest clusters until only one cluster remains. The process generates a dendrogram, a tree-like diagram that illustrates the arrangement of clusters.

Use cases: Biological taxonomy, market research, anomaly detection.

DBSCAN (Density-Based Spatial Clustering of Applications with Noise)

DBSCAN clusters dense regions of data points together and identifies outliers as noise. It doesn’t require specifying the number of clusters in advance.

How it works: It defines clusters as areas of a certain density, identifying “core samples” that have many neighbors within a specified radius. It then expands these core samples to form clusters and marks samples that are not dense enough as noise.

Use cases: Geographical data analysis, anomaly detection in network traffic, identifying unusual patterns.

Dimensionality Reduction Algorithms: Simplifying Data

Dimensionality reduction algorithms reduce the number of input features in a dataset while retaining as much information as possible. This helps in visualization, reducing noise, and speeding up subsequent machine learning tasks.

Principal Component Analysis (PCA)

Principal Component Analysis (PCA) is a widely used technique for dimensionality reduction that transforms the data into a new set of orthogonal variables called principal components, reducing the dimensionality while preserving most of the variance.

How it works: PCA identifies the directions (principal components) along which the data varies the most. The first principal component captures the most variance, the second the second most, and so on. By selecting a subset of these components, the dimensionality of the data is reduced.

Use cases: Image processing, facial recognition, data visualization.

t-SNE (t-distributed Stochastic Neighbor Embedding)

t-SNE is a non-linear dimensionality reduction technique especially well-suited for visualizing high-dimensional data in 2D or 3D while preserving local structure.

How it works: It converts pairwise similarities between data points in the original high-dimensional space into joint probabilities. It then places a corresponding set of points in the low-dimensional space and adjusts their positions to minimize the Kullback-Leibler divergence between the high-dimensional and low-dimensional probability distributions.

Use cases: Visualizing complex datasets, clustering high-dimensional features, exploring relationships in genomics data.

Anomaly Detection Algorithms: Finding the Unusual

Anomaly detection algorithms identify unusual patterns or data points that deviate significantly from the majority of the data.

Isolation Forest

Isolation Forest is an effective algorithm specifically designed for anomaly detection. It works by isolating anomalies rather than profiling normal data points.

How it works: It builds an ensemble of isolation trees. Anomalies, being “few and different,” are more susceptible to being isolated in shorter paths within the trees compared to normal data points.

Use cases: Fraud detection, cybersecurity threat detection, identifying faulty equipment.

Autoencoders

Autoencoders are a type of neural network that learn compressed representations of data. While primarily used for dimensionality reduction and feature learning, they can also be effectively used for anomaly detection.

How it works: An autoencoder consists of an encoder that compresses the input into a lower-dimensional latent space, and a decoder that reconstructs the input from this latent representation. When trained on normal data, it struggles to reconstruct anomalous data accurately, leading to a high reconstruction error, which signals an anomaly.

Use cases: Anomaly detection in high-dimensional data, image denoising, learning data representations.

Reinforcement Learning: Learning Through Interaction

Reinforcement learning (RL) is a paradigm where an agent learns to make decisions by interacting with an environment, receiving rewards or penalties for its actions. The goal is to maximize the cumulative reward over time.

Value-Based Reinforcement Learning

Value-based RL algorithms focus on learning a value function that estimates the expected return for being in a specific state or taking a specific action in a state.

Q-Learning

Q-Learning is a model-free reinforcement learning algorithm that learns an optimal action-value function (Q-function), which estimates the expected utility of taking a given action in a given state and following an optimal policy thereafter.

How it works: The agent explores the environment, and for each state-action pair it visits, it updates its Q-value based on the immediate reward and the maximum Q-value of the next state. Given sufficient exploration and a suitably decaying learning rate, the Q-table converges to the optimal values, allowing the agent to choose actions that maximize future rewards.
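The update itself is a one-line rule. The sketch below stores the Q-table as a dictionary keyed by (state, action); the learning rate `alpha`, the discount factor `gamma`, and the state and action names are assumptions for illustration.

```python
def q_learning_update(q, state, action, reward, next_state, actions, alpha=0.1, gamma=0.9):
    """Apply one Q-learning update in place and return the new Q-value."""
    # Off-policy target: assume the best available action is taken next
    best_next = max(q.get((next_state, a), 0.0) for a in actions)
    old = q.get((state, action), 0.0)
    q[(state, action)] = old + alpha * (reward + gamma * best_next - old)
    return q[(state, action)]


# Hypothetical two-action world with some values already learned for state "s1"
q_table = {("s1", "left"): 1.0, ("s1", "right"): 2.0}
new_value = q_learning_update(q_table, "s0", "right", 1.0, "s1", ["left", "right"])
```

Here the target is 1.0 + 0.9 * 2.0 = 2.8, and the stored value moves one tenth of the way from 0.0 toward it.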

Use cases: Game playing in small state spaces (e.g., grid worlds, tic-tac-toe), robotics control, resource management.

SARSA (State-Action-Reward-State-Action)

SARSA is an on-policy reinforcement learning algorithm, meaning it learns the value of the policy it is currently following, as opposed to Q-Learning which learns the value of the optimal policy.

How it works: Like Q-Learning, SARSA updates its Q-values based on an observed trajectory. However, the update rule for SARSA uses the action taken in the next state, as determined by the current policy, rather than the action that yields the maximum Q-value.
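The difference shows up in a single term of the update. In this sketch the Q-table is a dictionary keyed by (state, action), and `alpha`, `gamma`, and the state and action names are illustrative assumptions.

```python
def sarsa_update(q, state, action, reward, next_state, next_action, alpha=0.1, gamma=0.9):
    """Apply one SARSA update in place and return the new Q-value."""
    old = q.get((state, action), 0.0)
    # On-policy target: use the action the agent will actually take next
    next_q = q.get((next_state, next_action), 0.0)
    q[(state, action)] = old + alpha * (reward + gamma * next_q - old)
    return q[(state, action)]


# Same hypothetical table, but the policy happens to choose "left" in "s1"
q_table = {("s1", "left"): 1.0, ("s1", "right"): 2.0}
new_value = sarsa_update(q_table, "s0", "right", 1.0, "s1", "left")
```

Because the policy picked "left" rather than the higher-valued "right", the target is 1.0 + 0.9 * 1.0 = 1.9 instead of the 2.8 a max-based update would use.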

Use cases: Maze navigation and control systems. SARSA applies to the same kinds of problems as Q-Learning but is often more stable in certain environments.

Deep Q-Network (DQN)

Deep Q-Network (DQN) combines Q-learning with deep neural networks. It allows reinforcement learning to be applied to environments with high-dimensional state spaces where traditional Q-tables become infeasible.

How it works: Instead of a Q-table, a deep neural network approximates the Q-function. The network takes the state as input and outputs the Q-values for all possible actions. Experience replay and target networks are used to stabilize training.

Use cases: Atari game playing, complex robotic tasks, autonomous driving.

Policy-Based Reinforcement Learning

Policy-based RL algorithms directly learn a policy, which maps states to actions, without necessarily learning a value function.

Policy Gradient

Policy Gradient algorithms optimize policies directly. They aim to find the optimal policy by iteratively updating the policy parameters in the direction that increases the expected cumulative reward.

How it works: These methods directly search for the policy that maximizes long-term returns. They use the gradient of the policy’s performance with respect to its parameters to update the policy.

Use cases: Robotics control, continuous control problems, generating complex behaviors.

Actor-Critic

Actor-Critic methods combine both value-based and policy-based learning. The “actor” learns a policy, and the “critic” learns a value function to evaluate the actor’s actions.

How it works: The actor’s policy is updated based on the critic’s judgment (advantage function), which tells the actor how good or bad its action was compared to the expected value. This combination often leads to more stable and efficient learning.

Use cases: Complex control tasks, humanoid robotics, game AI.

Framework for Decision-Making

Markov Decision Process (MDP)

A Markov Decision Process (MDP) provides a mathematical framework for modeling decision-making problems in situations where outcomes are partly random and partly under the control of a decision-maker. It is the theoretical backbone for most reinforcement learning algorithms.

How it works: An MDP defines a set of states, a set of actions, transition probabilities between states, and rewards received for actions. The goal is to find an optimal policy that maximizes the total reward over time.
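To show how these four ingredients fit together, here is a sketch of value iteration on a tiny made-up MDP. Value iteration is one standard way to solve an MDP when the transitions and rewards are known; the discount factor `gamma`, the data layout, and the two-state example are all assumptions.

```python
def value_iteration(states, actions, transitions, rewards, gamma=0.9, tol=1e-8):
    """transitions[(s, a)] is a list of (probability, next_state); rewards[(s, a)] is a float."""

    def action_value(s, a, values):
        return rewards[(s, a)] + gamma * sum(p * values[s2] for p, s2 in transitions[(s, a)])

    values = {s: 0.0 for s in states}
    while True:
        delta = 0.0
        for s in states:
            best = max(action_value(s, a, values) for a in actions)
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:  # values have stopped changing
            break
    # The optimal policy picks the highest-valued action in each state
    policy = {s: max(actions, key=lambda a: action_value(s, a, values)) for s in states}
    return values, policy


# Hypothetical MDP: staying in B pays 2 per step, moving from A to B pays 1 once
states = ["A", "B"]
actions = ["stay", "move"]
transitions = {
    ("A", "stay"): [(1.0, "A")], ("A", "move"): [(1.0, "B")],
    ("B", "stay"): [(1.0, "B")], ("B", "move"): [(1.0, "A")],
}
rewards = {("A", "stay"): 0.0, ("A", "move"): 1.0, ("B", "stay"): 2.0, ("B", "move"): 0.0}
values, policy = value_iteration(states, actions, transitions, rewards)
```

Reinforcement learning methods such as Q-Learning target the same optimal policy but without being given `transitions` and `rewards` up front.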

Use cases: Planning, robotics, economics, scheduling problems.

Deep Learning Architectures: Emulating the Human Brain

Deep learning is a subfield of machine learning inspired by the structure and function of the human brain’s neural networks. These architectures are capable of learning complex representations from vast amounts of data, leading to breakthroughs in areas like image and speech recognition.

Foundational Neural Networks

Artificial Neural Networks (ANN)

Artificial Neural Networks (ANNs) are the basic building blocks of deep learning. In their simplest form, the feedforward neural network, they consist of interconnected nodes (neurons) organized in layers: an input layer, one or more hidden layers, and an output layer.

How it works: Information flows in one direction from the input layer through the hidden layers to the output layer. Each connection has a weight, and each neuron has an activation function. The network learns by adjusting these weights with gradient descent, using backpropagation to compute how much each weight contributes to the difference between predicted and actual outputs.

Use cases: Pattern recognition, classification, regression tasks.

Convolutional Neural Network (CNN)

Convolutional Neural Networks (CNNs) are a specialized type of deep neural network that excels in processing grid-like data, such as images. They are designed to automatically learn spatial hierarchies of features.

How it works: CNNs use convolutional layers that apply filters to detect local patterns (e.g., edges, textures) across small receptive fields of the input. Pooling layers then reduce the spatial dimensions, and fully connected layers make final predictions.
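The filtering and pooling operations can be sketched on nested lists. This assumes a single channel, no padding, stride 1, and a hand-written kernel; in a real CNN the kernel values are learned during training.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj] for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def max_pool_2x2(feature_map):
    """Keep the maximum of each non-overlapping 2x2 window."""
    return [
        [
            max(feature_map[i][j], feature_map[i][j + 1],
                feature_map[i + 1][j], feature_map[i + 1][j + 1])
            for j in range(0, len(feature_map[0]) - 1, 2)
        ]
        for i in range(0, len(feature_map) - 1, 2)
    ]


# Toy 4x4 image with a vertical edge between the second and third columns
image = [[0, 0, 1, 1] for _ in range(4)]
edge_kernel = [[-1, 1]]  # responds where brightness increases left to right
feature_map = conv2d(image, edge_kernel)
pooled = max_pool_2x2(feature_map)
```

The feature map is nonzero only at the edge location, and pooling shrinks it while keeping that response.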

Use cases: Image classification, object detection, facial recognition, medical image analysis.

Recurrent Neural Network (RNN)

Recurrent Neural Networks (RNNs) are designed for sequential data, where the output from one step is fed as input to the next. This makes them suitable for tasks involving time series, natural language, and speech.

How it works: RNNs have a “memory” that allows them to process sequences of arbitrary length. They maintain a hidden state that captures information about previous elements in the sequence, allowing them to understand context.

Use cases: Speech recognition, natural language processing, machine translation, time series prediction.

Long Short-Term Memory (LSTM)

Long Short-Term Memory (LSTM) networks are a type of RNN designed to mitigate the vanishing gradient problem, enabling them to handle long-term dependencies in sequential data more effectively.

How it works: LSTMs utilize a sophisticated “cell state” and various “gates” (input, forget, output gates) that control the flow of information, allowing the network to selectively remember or forget past information as needed.

Use cases: Speech synthesis, video analysis, sentiment analysis, generating text.

Transformer

Transformer networks are a revolutionary deep learning architecture, introduced in 2017, that primarily uses attention mechanisms for sequence modeling, largely replacing RNNs and LSTMs in many natural language processing (NLP) tasks.

How it works: Transformers forgo recurrence and convolutions, relying entirely on self-attention mechanisms to weigh the importance of different parts of the input sequence when processing each element. This allows for parallel processing and captures long-range dependencies more efficiently.
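At the core of self-attention is scaled dot-product attention, sketched here on plain lists. As a simplifying assumption, the learned query, key, and value projections are omitted and the raw input vectors are used for all three roles.

```python
import math


def softmax(xs):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: one output vector per query."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # How strongly this position should attend to every position
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs


# Hypothetical sequence of two token vectors attending to itself
tokens = [[1.0, 0.0], [0.0, 1.0]]
attended = attention(tokens, tokens, tokens)
```

Each query is handled independently of the others, which is what allows the computation to run in parallel across the sequence.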

Use cases: Machine translation, text summarization, language understanding, large language models (LLMs).

Optimization & Evolutionary Algorithms: Beyond Traditional Learning

These algorithms offer alternative approaches to problem-solving, often inspired by natural processes, to find optimal solutions or explore complex search spaces.

Genetic Algorithms

Genetic Algorithms are optimization algorithms inspired by natural evolution. They use concepts like selection, crossover, and mutation to find optimal solutions to problems.

How it works: A population of candidate solutions (individuals) is iteratively evolved. In each generation, individuals are evaluated, and the fittest ones are selected to produce offspring through crossover (combining solutions) and mutation (introducing random changes). This process gradually leads to better solutions.
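A compact sketch of this loop on the "OneMax" toy problem, where fitness is simply the number of 1 bits. The problem choice, the parameter values, and the decision to always carry the best individual forward (elitism) are assumptions made for this example.

```python
import random


def genetic_onemax(n_bits=20, pop_size=30, generations=50, mutation_rate=0.02, seed=0):
    """Evolve bit strings toward all ones; returns (best_individual, best_fitness_per_generation)."""
    rng = random.Random(seed)
    fitness = sum  # OneMax fitness: count the 1 bits
    population = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        # Evaluation and selection: keep the fitter half as parents
        population.sort(key=fitness, reverse=True)
        best_history.append(fitness(population[0]))
        parents = population[: pop_size // 2]
        children = [population[0][:]]  # elitism: the best individual survives unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_bits)  # single-point crossover
            child = a[:cut] + b[cut:]
            # Mutation: flip each bit with a small probability
            child = [1 - bit if rng.random() < mutation_rate else bit for bit in child]
            children.append(child)
        population = children
    return max(population, key=fitness), best_history


best, history = genetic_onemax()
```

Because the best individual is always copied into the next generation, the recorded best fitness never decreases from one generation to the next.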

Use cases: Optimization problems, scheduling, engineering design, game AI.

The Enduring Core of AI

The algorithms outlined above represent the fundamental building blocks of virtually every AI application seen today. From simple regression models predicting trends to intricate deep learning architectures understanding human language, each algorithm plays a crucial role in the AI ecosystem. Understanding their mechanics, strengths, and appropriate use cases empowers developers, researchers, and businesses to harness the full potential of artificial intelligence.

Why Algorithm Selection Matters

Choosing the right algorithm is often the most critical step in an AI project. The “best” algorithm isn’t just about accuracy; it’s about fitting the algorithm to the specific problem type, data characteristics, and business constraints such as latency, cost, scalability, and interpretability. A well-chosen algorithm can lead to efficient, robust, and impactful AI solutions.

The Journey Ahead

As AI continues to advance, new algorithms will undoubtedly emerge, and existing ones will be refined. However, the core principles embedded in these 30 algorithms will remain foundational. By mastering them, you gain not just a toolset, but a deep understanding of the underlying intelligence that drives the AI revolution.
