The promise of Artificial Intelligence of Things (AIoT) is transformative, offering unprecedented levels of automation, insight, and efficiency across industries. From smart factories optimizing production lines to intelligent cities managing traffic flow and predictive maintenance in critical infrastructure, AIoT is poised to revolutionize how we interact with the physical world. However, the path to realizing this potential is fraught with challenges. Many AIoT initiatives, despite significant investment and brilliant minds at work, falter not due to faulty algorithms or poor model accuracy, but because fundamental architectural decisions are overlooked or postponed until it’s too late.

This isn’t merely about integrating AI into existing IoT systems; it’s about crafting entirely new, hybrid intelligence systems where every component – from edge devices to cloud infrastructure, data pipelines, and automation protocols – works in seamless concert. The difference between a proof-of-concept gathering dust and a scalable, production-ready platform often hinges on a handful of critical architectural choices made early in the design process.

These decisions are not trivial. They demand foresight, a deep understanding of operational realities, and an unwavering commitment to building for real-world conditions rather than idealized scenarios. The allure of groundbreaking AI models can distract teams from the foundational engineering that underpins true AIoT success. Without a robust, well-conceived architecture, even the most sophisticated AI models are destined to remain academic exercises, unable to deliver tangible value in production environments.

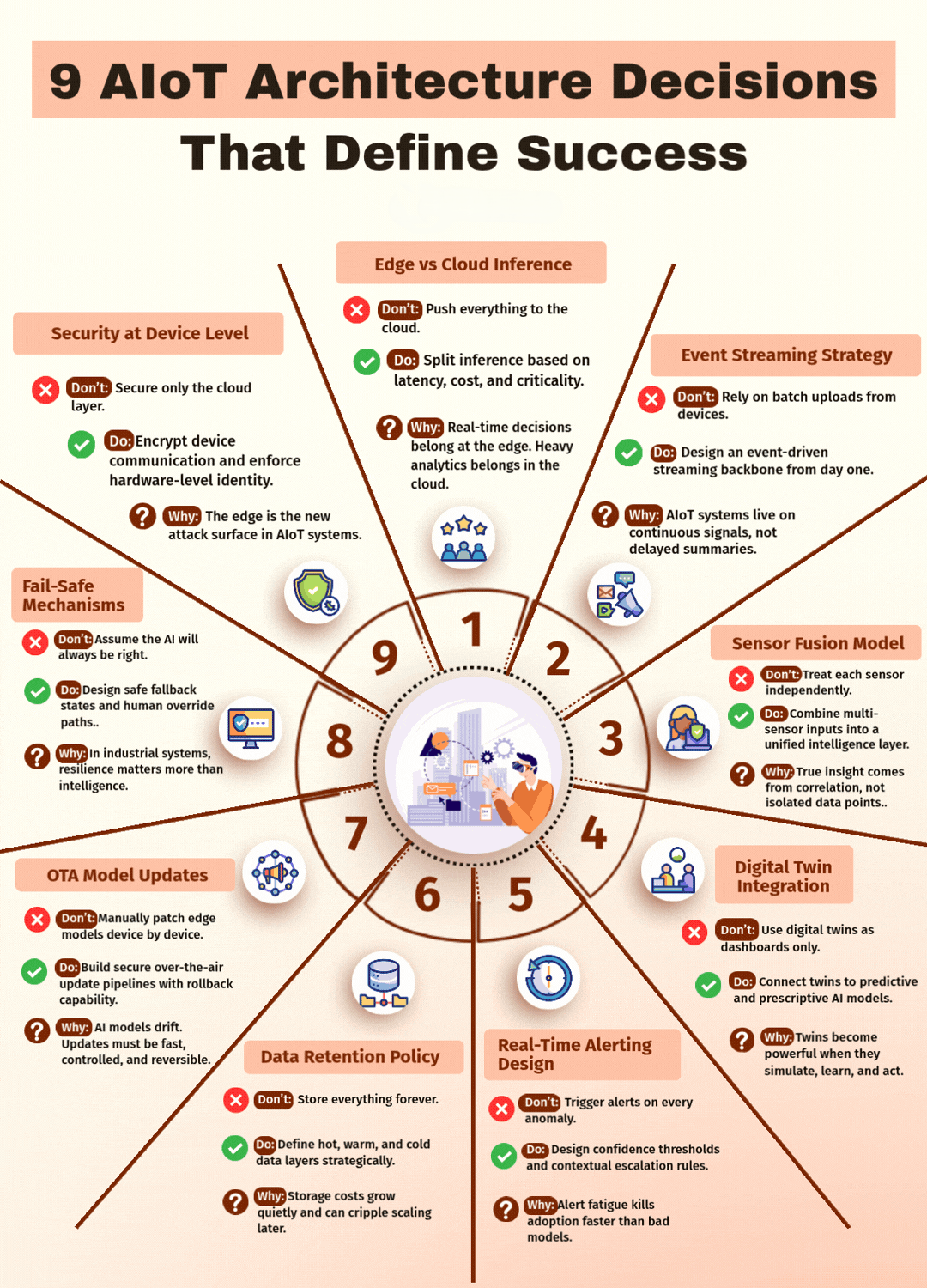

In this comprehensive guide, we will explore nine pivotal AIoT architecture decisions that are non-negotiable for success. These aren’t just best practices; they are the bedrock upon which resilient, scalable, and intelligent AIoT systems are built.

1. Edge vs. Cloud Inference: Strategic Distribution of Intelligence

One of the most critical foundational decisions in AIoT architecture lies in determining where intelligence – specifically, AI model inference – should reside. The simplistic view often pits edge inference against cloud inference as mutually exclusive choices. In reality, a successful AIoT strategy demands a nuanced approach, strategically distributing intelligence based on specific operational requirements.

1.1 Beyond the Either/Or: A Hybrid Approach

The “edge vs. cloud” debate is not an either/or proposition for AIoT. Instead, it’s about identifying the optimal placement for different types of computational tasks. Edge devices, by their nature, are closer to the data source and the physical processes they monitor and control. The cloud offers vast computational power, scalable storage, and the ability to process and analyze fleet-wide data. A robust AIoT architecture leverages the strengths of both.

1.2 Latency-Critical Actions at the Edge

For actions requiring immediate response or operating in environments with intermittent connectivity, edge inference is paramount. Imagine an autonomous robot navigating a factory floor; decisions about collision avoidance or immediate adjustments to movement cannot tolerate the latency of a round trip to the cloud. Similarly, in predictive maintenance, the real-time detection of a critical anomaly on a piece of machinery might necessitate an immediate shutdown or alert, which is best handled at the edge.

Edge inference minimizes latency by processing data locally, reducing reliance on network availability and bandwidth. This is particularly crucial in industrial settings, where network reliability can be inconsistent and safety-critical operations demand near-instantaneous reactions. The guiding principle: any model whose output directly drives a real-time operational outcome should run on the edge device itself.

1.3 Fleet-Wide Pattern Recognition and Heavy Analytics in the Cloud

While the edge excels at immediate, localized decision-making, the cloud is indispensable for processing and analyzing large datasets from an entire fleet of devices. Tasks such as long-term trend analysis, global model retraining, identifying subtle patterns across diverse data streams, and optimizing complex business processes are best suited for the cloud.

The cloud provides the necessary computational resources and storage capabilities to run resource-intensive machine learning models, perform comprehensive data aggregation, and derive macro-level insights that inform strategic decisions. For example, by analyzing performance data from thousands of similar machines across different locations, a cloud-based AI model can identify subtle degradation patterns that might be missed by individual edge devices, leading to more accurate predictive maintenance schedules and improved asset utilization across the entire operation.

1.4 Deliberate Distribution, Not Default Placement

The power of AIoT lies in this deliberate distribution of intelligence. It’s about designing a system where edge devices handle immediate, context-specific tasks, acting as intelligent local agents, while the cloud acts as a central brain, providing overarching intelligence, learning, and optimization. This requires a clear understanding of the trade-offs involved, including latency requirements, bandwidth constraints, data privacy concerns, and computational power available at different levels of the architecture.

A well-designed AIoT system will define clear interfaces and protocols for data exchange between edge and cloud, ensuring that the right data is sent to the right location for processing at the right time. This orchestration of intelligence is what truly differentiates a successful AIoT deployment from a simplistic IoT system with a bolted-on AI layer.
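The trade-offs in this subsection can be written down as an explicit placement rule. The sketch below is illustrative only. The `Task` and `Environment` fields, the rule order, and the choice to default to the cloud when no constraint forces local execution are assumptions, not an established standard. In a real system the inputs would come from measured round-trip times and profiled model footprints.

```python
from dataclasses import dataclass

@dataclass
class Task:
    """Hypothetical description of one inference workload."""
    name: str
    latency_budget_ms: float  # max tolerable delay from sensor reading to action
    model_size_mb: float      # memory the model needs at inference time
    needs_fleet_data: bool    # does it need data from many devices?
    data_is_sensitive: bool   # must raw data stay on-premises?

@dataclass
class Environment:
    """Hypothetical per-site constraints."""
    cloud_round_trip_ms: float  # typical network round trip to the cloud
    link_is_reliable: bool      # can we count on connectivity?
    edge_memory_mb: float       # memory available for models on the device

def place(task: Task, env: Environment) -> str:
    """Return 'edge' or 'cloud', checking hard constraints first."""
    # Fleet-wide analytics need the aggregated view only the cloud has.
    if task.needs_fleet_data:
        return "cloud"
    # A round trip that cannot meet the budget, a flaky link, or data that
    # must stay local all force on-device inference.
    must_be_local = (
        env.cloud_round_trip_ms >= task.latency_budget_ms
        or not env.link_is_reliable
        or task.data_is_sensitive
    )
    if must_be_local:
        if task.model_size_mb > env.edge_memory_mb:
            raise ValueError(f"{task.name}: must run locally but the model does not fit")
        return "edge"
    # ASSUMPTION: with no constraint forcing the edge, prefer the cloud.
    return "cloud"
```

A `ValueError` here is itself a useful design signal: the model has to be compressed, or the hardware upgraded, before the use case is viable.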

2. Security at the Device Level: The Foundation of Trust

In the interconnected world of AIoT, security is not an afterthought; it is the absolute foundation. Many organizations mistakenly assume that securing the API gateway or the cloud infrastructure is sufficient. However, in an AIoT context, this approach is fundamentally flawed. If your security model begins at the network perimeter, you’ve already exposed your system to a myriad of vulnerabilities at its most expansive and often least protected points: the devices themselves.

The edge is the new attack surface. Every IoT device, from a simple sensor to a complex industrial controller, represents a potential entry point for malicious actors. Compromising a single device can lead to data breaches, operational disruptions, or even physical harm in critical infrastructure. Therefore, robust security measures must be embedded directly into the devices from their inception.

2.1 Device Identity and Secure Boot

Establishing strong device identity is paramount. Each device must have a unique and verifiable identity that can be authenticated across the network. This often involves hardware-level security features, such as Trusted Platform Modules (TPMs) or Hardware Security Modules (HSMs), which store cryptographic keys and provide a secure environment for cryptographic operations.

Secure boot mechanisms ensure that only trusted software can run on a device. By verifying the digital signature of each component of the boot process – from the initial bootloader to the operating system and application firmware – secure boot prevents the execution of malicious code and protects against tampering. This is crucial for maintaining the integrity and trustworthiness of edge devices, particularly in environments where physical access might be a concern.
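Real secure boot verifies asymmetric signatures in ROM code or a hardware-anchored loader, which a short Python snippet cannot reproduce faithfully. The sketch below models only the chain-of-trust logic. Each stage is checked against an expected SHA-256 digest before control passes to it, and boot halts at the first mismatch. The manifest is assumed to have already been authenticated (in practice by a signature check anchored in hardware), and the stage names are hypothetical.

```python
import hashlib
from typing import Dict, List, Tuple

def verify_boot_chain(stages: List[Tuple[str, bytes]],
                      manifest: Dict[str, str]) -> List[str]:
    """Walk boot stages in order and return the names that verified.

    stages: (name, image_bytes) pairs in boot order.
    manifest: name -> expected SHA-256 hex digest (ASSUMED already authentic).
    Raises RuntimeError at the first stage that is unknown or altered,
    so no later stage is ever handed control.
    """
    verified: List[str] = []
    for name, image in stages:
        expected = manifest.get(name)
        actual = hashlib.sha256(image).hexdigest()
        if expected is None or actual != expected:
            raise RuntimeError(f"secure boot halted: stage '{name}' failed verification")
        verified.append(name)
    return verified
```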

2.2 Encrypted Device Communication

All communication between devices, and between devices and the cloud, must be encrypted. This protects sensitive data from eavesdropping and tampering as it traverses potentially insecure networks. Implementing industry-standard protocols such as Transport Layer Security (TLS) or Datagram Transport Layer Security (DTLS) is essential. Protection should not stop at data in transit, however: data at rest on devices, especially sensitive data, needs to be secured as well.
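As a minimal sketch, here is how a device-side mutual-TLS client context might be built with Python's standard `ssl` module. The file paths are hypothetical placeholders. Using TLS 1.2 as the floor is an assumption, and many fleets can require TLS 1.3. The standard library does not provide DTLS.

```python
import ssl
from typing import Optional

def make_device_tls_context(ca_file: Optional[str] = None,
                            cert_file: Optional[str] = None,
                            key_file: Optional[str] = None) -> ssl.SSLContext:
    """Client-side TLS context for a device talking to its backend.

    ca_file: CA bundle used to verify the server (None = system defaults).
    cert_file/key_file: the device's own certificate and key, presented to the
    server for mutual TLS; skipped when not provided.
    """
    # create_default_context turns on certificate verification and hostname checks.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    # Refuse legacy protocol versions.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file and key_file:
        # The client certificate is the device identity. Here the key comes from
        # a file; a TPM- or secure-element-held key would need a different loader.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

A device would then wrap its socket with `ctx.wrap_socket(sock, server_hostname=host)`, for example on port 8883 for MQTT over TLS.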

2.3 Certificate Management and Lifecycle

Managing digital certificates for device authentication and secure communication is a complex but vital aspect of AIoT security. A robust certificate management system should handle the issuance, renewal, and revocation of certificates throughout the device’s lifecycle. This includes mechanisms for timely certificate updates to mitigate vulnerabilities and processes for revoking compromised certificates immediately. Automated certificate management is often necessary given the large scale of AIoT deployments.
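Automated certificate management often comes down to a scheduling rule plus a revocation check. The sketch below assumes a policy of renewing once less than a third of a certificate's validity remains. That is a common convention, but it is an assumption here. Revocation is modelled as a lookup in a locally cached set of revoked serial numbers, and the field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class DeviceCert:
    serial: int
    not_before: datetime
    not_after: datetime

def cert_action(cert: DeviceCert, now: datetime, revoked_serials: set,
                renew_fraction: float = 1 / 3) -> str:
    """Return 'revoked', 'expired', 'renew', or 'ok' for one device certificate."""
    if cert.serial in revoked_serials:
        return "revoked"   # compromised: cut the device off and re-provision it
    if now >= cert.not_after:
        return "expired"
    lifetime: timedelta = cert.not_after - cert.not_before
    remaining: timedelta = cert.not_after - now
    if remaining <= lifetime * renew_fraction:
        return "renew"     # rotate early so a backend outage cannot strand the device
    return "ok"
```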

2.4 Firmware Over-the-Air (FOTA) Security

As new vulnerabilities are discovered or security patches are released, the ability to securely update device firmware over-the-air (FOTA) is critical. FOTA updates must be digitally signed and verified to prevent the deployment of malicious firmware. Additionally, robust rollback mechanisms are necessary to revert to previous stable versions in case an update introduces new issues or vulnerabilities. This ensures that security remains agile and adaptable to evolving threats.
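A common way to combine verification with rollback is an A/B (dual-slot) scheme. The new image is written to the inactive slot, verified, booted on trial, and committed only after it reports itself healthy. The sketch below models that state machine. As a deliberate simplification, an HMAC-SHA256 tag stands in for the vendor's digital signature. Real FOTA should verify an asymmetric signature so that devices hold only a public key. `SIGNING_KEY`, the slot names, and the trial-boot limit are hypothetical.

```python
import hashlib
import hmac
from typing import Dict, Optional

# ASSUMPTION: shared-key HMAC standing in for a real (asymmetric) firmware signature.
SIGNING_KEY = b"demo-key-not-for-production"

def sign(image: bytes) -> bytes:
    return hmac.new(SIGNING_KEY, image, hashlib.sha256).digest()

class ABUpdater:
    """Dual-slot firmware updater with trial boot and automatic rollback."""

    def __init__(self, image: bytes, max_trial_boots: int = 3):
        self.slots: Dict[str, Optional[bytes]] = {"A": image, "B": None}
        self.active = "A"                 # known-good slot
        self.trial: Optional[str] = None  # slot booted on probation, if any
        self.trial_boots = 0
        self.max_trial_boots = max_trial_boots

    def stage(self, image: bytes, tag: bytes) -> bool:
        """Write a verified image into the inactive slot; reject bad tags."""
        if not hmac.compare_digest(sign(image), tag):
            return False
        inactive = "B" if self.active == "A" else "A"
        self.slots[inactive] = image
        self.trial, self.trial_boots = inactive, 0
        return True

    def boot(self) -> str:
        """Return the slot to boot; fall back once the trial budget is spent."""
        if self.trial is not None:
            if self.trial_boots < self.max_trial_boots:
                self.trial_boots += 1
                return self.trial
            self.trial = None  # rollback: the new image never confirmed health
        return self.active

    def confirm_healthy(self) -> None:
        """Called by the new firmware once its self-tests pass."""
        if self.trial is not None:
            self.active, self.trial = self.trial, None
```

Because the old image is never overwritten until the new one is committed, rollback is just a matter of booting the other slot.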

2.5 Defense in Depth at the Edge

Effective AIoT security adopts a “defense in depth” strategy, with multiple layers of security controls protecting against various attack vectors. This includes network segmentation, access control mechanisms, intrusion detection systems, and regular security audits of both hardware and software at the device level. The goal is to make it as difficult as possible for an attacker to compromise a device and, even if a compromise occurs, to limit the blast radius of that breach.

Ultimately, by prioritizing security at the device level, organizations build a foundation of trust that is essential for the reliable and safe operation of AIoT systems. Without this foundational security, the entire AIoT edifice remains vulnerable, regardless of the sophistication of its AI components.

3. Event-Driven over Batch: Reacting to the Rhythm of Reality

The traditional enterprise IT landscape often relies on batch processing, where data is collected over a period and then processed in large chunks. While this approach has its merits for certain use cases, it is fundamentally ill-suited for the dynamic, real-time demands of AIoT. Successful AIoT systems thrive on immediacy, reacting to state changes and events as they occur, not on a pre-defined schedule. This mandates a shift towards event-driven architectures.

3.1 The Limitations of Batch Processing in AIoT

Batch pipelines were a sensible choice when storage was expensive and compute resources were scarce. Data would be collected, stored, and then processed during off-peak hours. However, in AIoT, critical decisions often need to be made instantaneously. Waiting for an end-of-day batch job to process sensor readings about a failing machine component could lead to catastrophic downtime or safety incidents. Similarly, relying on batch updates from devices means that any AI models operating on that data are always working with stale information, hindering their ability to provide proactive insights or take immediate corrective actions.

3.2 The Power of Event-Driven Architectures

Event-driven architectures are designed to react to discrete events as they happen. In an AIoT context, an “event” could be a sensor reading exceeding a threshold, a change in device status, a user input, or a system-generated alert. Events trigger immediate actions or downstream processes, so the system always reflects the most current state of the physical world.

3.3 Continuous Signal Processing, Not Delayed Summaries

AIoT systems live on continuous signals. Imagine a smart agricultural system monitoring soil moisture, temperature, and nutrient levels. An event-driven architecture allows for immediate adjustments to irrigation systems or nutrient delivery based on real-time sensor data, optimizing crop yield and conserving resources. In contrast, a batch-based system would process this data intermittently, potentially missing critical windows for intervention.

By processing events as they occur, AIoT systems can provide:

- Real-time Insights: Operational dashboards reflect the current state of affairs, enabling informed decision-making.

- Proactive Interventions: AI models can detect anomalies and trigger alerts or automated actions before problems escalate.

- Enhanced Responsiveness: The system can adapt dynamically to changing environmental conditions or operational demands.
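Here is a minimal in-process sketch of the pattern, using the irrigation example above. Handlers subscribe to event types and run the moment a matching event is published, instead of waiting for a scheduled job. The `EventBus` class, the event names, and the 20% moisture threshold are hypothetical. A production system would place a broker between producers and consumers.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]

class EventBus:
    """Tiny synchronous publish/subscribe bus (illustration only)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # Every subscriber reacts immediately; nothing waits for a batch window.
        for handler in self._handlers.get(event_type, []):
            handler(payload)

actions: List[str] = []
bus = EventBus()

def on_soil_reading(event: dict) -> None:
    # Derive a higher-level event from a raw reading (threshold is hypothetical).
    if event["moisture_pct"] < 20:
        bus.publish("irrigation.needed", {"zone": event["zone"]})

def open_valve(event: dict) -> None:
    actions.append(f"open valve in zone {event['zone']}")

bus.subscribe("soil.reading", on_soil_reading)
bus.subscribe("irrigation.needed", open_valve)

bus.publish("soil.reading", {"zone": 3, "moisture_pct": 35})  # no action
bus.publish("soil.reading", {"zone": 7, "moisture_pct": 12})  # triggers irrigation
```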

3.4 Designing an Event-Driven Streaming Backbone

Implementing an event-driven architecture requires a robust streaming backbone. Technologies like Apache Kafka, RabbitMQ, or cloud-native messaging services (e.g., Amazon Kinesis, Azure Event Hubs, Google Cloud Pub/Sub) are designed to handle high volumes of real-time event data. These platforms ingest, store, and distribute events to various consumers, including AI models for inference, data lakes for storage, and other services for processing or visualization.

Key considerations for designing this backbone include:

- Scalability: The ability to handle continually increasing volumes of event data from a growing number of devices.

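- Reliability: Ensuring that events are not lost and are delivered in order where ordering matters, even in the face of network outages or system failures. Most platforms guarantee ordering only within a partition, shard, or ordering key (such as a device ID), not across the whole stream.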

- Low Latency: Minimizing the delay between an event occurring and its processing.

- Flexibility: The ability to easily integrate new event sources and consumers as the AIoT system evolves.
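Brokers such as Kafka guarantee ordering only within a partition and, in common configurations, deliver at least once. Consumers are therefore usually written to tolerate duplicates and to notice gaps. The sketch below assumes that each device stamps its events with a monotonically increasing sequence number. That is an assumption about the payload, not a broker feature. The consumer keeps the last processed sequence per device. In practice that state would have to be persisted alongside the consumer's offsets.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

@dataclass
class IdempotentConsumer:
    """Per-device sequence tracking: skip duplicates, record gaps."""
    last_seq: Dict[str, int] = field(default_factory=dict)
    processed: List[Tuple[str, int]] = field(default_factory=list)
    gaps: List[Tuple[str, int, int]] = field(default_factory=list)

    def handle(self, device_id: str, seq: int, payload: dict) -> bool:
        """Return True if the event was processed, False if it was a duplicate."""
        last = self.last_seq.get(device_id)
        if last is not None and seq <= last:
            return False  # redelivered or stale event: already handled
        if last is not None and seq > last + 1:
            # Missing events: record the range so it can be re-requested or flagged.
            self.gaps.append((device_id, last + 1, seq - 1))
        self.last_seq[device_id] = seq
        # Real processing of `payload` (inference, storage, ...) would happen here.
        self.processed.append((device_id, seq))
        return True
```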

The shift to an event-driven paradigm is not just a technical choice; it’s a fundamental change in how AIoT systems conceptualize and interact with data. By embracing event-driven architectures, organizations can build AIoT solutions that are truly responsive, intelligent, and capable of generating real-time value.

4. Multi-Sensor Fusion and Digital Twins: Unlocking Holistic Intelligence

Individual sensors provide isolated pieces of information. A temperature sensor tells you the temperature. A vibration sensor tells you about vibrations. While valuable, these individual readings offer a limited view of reality. True insight in AIoT emerges not from isolated data points, but from the correlation and integration of information from multiple sensors, often brought to life within the context of a digital twin.

4.1 Beyond Isolated Data: The Power of Multi-Sensor Fusion

Multi-sensor fusion is the process of combining data from various sensors to obtain a more complete, accurate, and reliable understanding of a system’s state or an environment. Instead of treating each sensor independently, a fusion approach integrates their inputs into a unified intelligence layer.

Consider a predictive maintenance scenario for a rotating machine. A single vibration sensor might indicate an anomaly. However, when combined with data from a temperature sensor (showing overheating), an acoustic sensor (detecting unusual noises), and a power consumption sensor (revealing increased load), the AI model can more accurately diagnose the problem, predict potential failure, and even suggest the root cause. This holistic view significantly improves the accuracy and confidence of AI-driven insights.

Benefits of multi-sensor fusion include:

- Increased Accuracy: Redundancy and complementary information reduce uncertainty and noise.

- Robustness: If one sensor fails, others can still provide data, ensuring greater system resilience.

- Comprehensive Situational Awareness: A richer understanding of the environment or asset’s condition.

- Early Anomaly Detection: Subtle patterns that might be missed by individual sensors become apparent when data is fused.
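The accuracy and robustness points can be made concrete for the simplest case: several sensors measuring the same quantity with independent noise. Inverse-variance weighting gives the minimum-variance unbiased linear combination, and the fused variance is smaller than that of any single sensor. This sketch covers redundant sensors only. Fusing complementary signals (vibration plus temperature plus current) typically calls for a model such as a Kalman filter or a learned classifier. The readings and variances in the example are made up.

```python
from typing import Iterable, Optional, Tuple

def fuse(readings: Iterable[Tuple[Optional[float], float]]) -> Tuple[float, float]:
    """Inverse-variance weighted fusion of redundant measurements.

    readings: (value, variance) pairs; a value of None marks a failed sensor,
    which is skipped so the remaining sensors still produce an estimate.
    Returns (fused_value, fused_variance).
    """
    weight_sum = 0.0
    weighted_values = 0.0
    for value, variance in readings:
        if value is None:
            continue
        w = 1.0 / variance          # noisier sensors get less weight
        weight_sum += w
        weighted_values += w * value
    if weight_sum == 0.0:
        raise ValueError("no healthy sensors")
    return weighted_values / weight_sum, 1.0 / weight_sum
```

For example, `fuse([(70.0, 4.0), (72.0, 1.0), (None, 1.0)])` returns roughly `(71.6, 0.8)`. The failed third sensor is ignored, and the fused variance of 0.8 is lower than the better sensor's 1.0.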

4.2 Digital Twins: Bringing Context to Life

A digital twin is a virtual representation of a physical asset, process, or system. It acts as a dynamic, living model that continuously receives data from its physical counterpart, runs simulations, applies analytical models (including AI), and can even interact with the physical twin. In AIoT, digital twins are not merely dashboards that display data; they are powerful, interconnected entities that provide context, enable predictive analysis, and facilitate prescriptive actions.

Integrating sensor fusion with digital twins is where the two ideas reinforce each other. The fused sensor intelligence provides the real-time data stream that feeds the digital twin. The twin, in turn, provides the context: the asset’s design specifications, operational history, maintenance logs, environmental conditions, and even its simulated behavior under various stresses.

4.3 From Descriptive to Predictive and Prescriptive AI

When digital twins are connected to predictive and prescriptive AI models, their power multiplies:

- Predictive Models: The digital twin, fed by fused sensor data, can forecast future behavior or potential failures. For example, by simulating stress over time, it can estimate when a component is likely to fail, enabling just-in-time maintenance.

- Prescriptive Models: Beyond predicting what will happen, prescriptive AI within the digital twin can recommend the best course of action. Based on current conditions and predicted outcomes, it can suggest optimal operational parameters, maintenance schedules, or even automated adjustments to the physical asset.

For instance, a digital twin of a wind turbine, continuously updated with fused sensor data (wind speed, blade stress, bearing temperature), can not only predict when a gearbox might fail but also recommend the optimal time for maintenance, considering weather forecasts, energy demand, and replacement part availability.
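A toy twin can show how context and live data combine. In the sketch below, the twin holds a design temperature limit taken from its specification (the context) and ingests fused bearing-temperature readings. It then extrapolates a least-squares linear trend to estimate when the limit will be crossed. Linear extrapolation is a deliberately crude stand-in for the physics-based or learned degradation models a real twin would run. The class, field names, and numbers are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

@dataclass
class BearingTwin:
    asset_id: str
    temp_limit_c: float  # design specification: the twin's context
    history: List[Tuple[float, float]] = field(default_factory=list)  # (hours, temp_c)

    def ingest(self, hours: float, temp_c: float) -> None:
        """Update the twin's state from a fused sensor reading."""
        self.history.append((hours, temp_c))

    def hours_until_limit(self) -> Optional[float]:
        """Fit temp = a + b * hours by least squares and solve for the limit.

        Returns None when there is too little data or no upward trend.
        """
        n = len(self.history)
        if n < 2:
            return None
        mean_h = sum(h for h, _ in self.history) / n
        mean_y = sum(y for _, y in self.history) / n
        sxx = sum((h - mean_h) ** 2 for h, _ in self.history)
        if sxx == 0:
            return None
        sxy = sum((h - mean_h) * (y - mean_y) for h, y in self.history)
        slope = sxy / sxx
        if slope <= 0:
            return None
        intercept = mean_y - slope * mean_h
        crossing = (self.temp_limit_c - intercept) / slope
        return max(0.0, crossing - self.history[-1][0])
```

With readings of 60, 62, 64, and 66 °C at 0, 10, 20, and 30 hours and a 90 °C limit, the twin estimates about 120 operating hours of headroom. A prescriptive layer could subtract part lead times from that estimate to choose a maintenance window.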

4.4 Simulation, Learning, and Acting

Digital twins become truly powerful when they can simulate, learn, and act:

- Simulate: Testing “what-if” scenarios in the virtual environment without impacting the physical asset.

- Learn: Continuously refining predictive and prescriptive models based on new data and operational feedback.

- Act: Directly or indirectly triggering actions on the physical twin, such as adjusting operational settings or dispatching maintenance crews, based on AI-driven insights.

By combining the rich, correlated data from multi-sensor fusion with the contextual intelligence and simulation capabilities of digital twins, AIoT systems can move beyond simple monitoring to achieve true holistic intelligence, enabling proactive decision-making and unprecedented levels of automation and optimization.

5. OTA Model Updates with Rollback: The Agility of AI at Scale

The deployment of an initial AI model on an edge device is only the beginning. A deployed model is a fixed snapshot of the data it was trained on, while the world around it keeps changing: conditions shift, data patterns drift, and new operational requirements emerge. As a result, a model’s performance can degrade over time through concept drift or data drift. The ability to securely and efficiently update AI models over-the-air (OTA) across thousands, or even millions, of devices is therefore a critical architectural decision.

5.1 Why OTA Model Updates Are Essential

Reliance on manual patching of edge models, device by device, is utterly impractical and unsustainable at scale. Such an approach leads to:

- Operational Bottlenecks: Manual updates are time-consuming, expensive, and prone to human error.

- Stale Models: Models quickly become outdated, leading to degraded performance and inaccurate insights.

- Security Vulnerabilities: Delays in applying critical security patches can leave devices exposed.

- Lack of Agility: The inability to rapidly deploy model improvements or adaptations hinders the AIoT system’s responsiveness to changing conditions.

Model drift is the gradual loss of accuracy that occurs when the relationship between the input data and the target variable changes (concept drift) or when the characteristics of the input data itself evolve (data drift). For example, a predictive maintenance model trained on data from machines operating in one climate might perform poorly when deployed in a significantly different environment. OTA updates provide the mechanism to continuously retrain and redeploy improved models, keeping them accurate and relevant.
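One common way to detect data drift before accuracy visibly drops is to compare the distribution of a live feature against its training distribution. The sketch below computes the Population Stability Index (PSI) over pre-binned proportions. Commonly cited rules of thumb treat values above roughly 0.1 to 0.25 as a meaningful shift. The 0.2 default below is an assumption that should be tuned per feature. PSI detects shifts in the inputs only; detecting concept drift requires labeled outcomes.

```python
import math
from typing import Sequence

def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    expected: bin proportions from the training data (summing to 1).
    actual: bin proportions from recent field data, using the same bins.
    eps guards against log(0) for empty bins.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total

def needs_retraining(expected: Sequence[float], actual: Sequence[float],
                     threshold: float = 0.2) -> bool:
    """Flag a feature for retraining review when its PSI exceeds the threshold."""
    return psi(expected, actual) > threshold
```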

5.2 Building Secure Over-the-Air Update Pipelines

A robust OTA model update pipeline is characterized by several key features:

5.2.1 Version Control and Model Registry

Every model version must be meticulously tracked. A centralized model registry stores different versions of AI models, along with their metadata, performance metrics, and compliance information. This allows for clear traceability and simplifies the management of model lifecycles.
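The following is a minimal in-memory sketch of what a registry entry might hold and how the "last known good" version is found, which is what the rollback described in 5.3 needs. Teams would typically adopt an existing tool (the MLflow Model Registry is one example) rather than build their own. The fields and status values here are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

@dataclass
class ModelVersion:
    version: int
    artifact_sha256: str       # digest of the exact file shipped to devices
    metrics: Dict[str, float]  # offline evaluation results
    status: str = "candidate"  # candidate -> approved -> retired

@dataclass
class ModelRegistry:
    name: str
    versions: List[ModelVersion] = field(default_factory=list)

    def register(self, artifact_sha256: str, metrics: Dict[str, float]) -> ModelVersion:
        mv = ModelVersion(len(self.versions) + 1, artifact_sha256, metrics)
        self.versions.append(mv)
        return mv

    def approve(self, version: int) -> None:
        self.versions[version - 1].status = "approved"

    def retire(self, version: int) -> None:
        self.versions[version - 1].status = "retired"

    def latest_approved(self, before: Optional[int] = None) -> Optional[ModelVersion]:
        """Newest approved version, optionally older than `before` (a rollback target)."""
        for mv in reversed(self.versions):
            if mv.status == "approved" and (before is None or mv.version < before):
                return mv
        return None
```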

5.2.2 Secure Delivery Mechanisms

Model updates must be delivered securely to edge devices. This involves:

- Digital Signatures: Ensuring that only authorized and authenticated model updates are deployed.

- Encryption: Protecting models in transit against interception and, when authenticated encryption such as TLS is used, against tampering.

- Device Authentication: Verifying the identity of the target device before delivering an update.

5.2.3 Staged Rollouts and A/B Testing

Deploying a new model version to all devices simultaneously carries significant risk. A phased rollout strategy, starting with a small group of devices and gradually expanding, allows for real-world testing and performance monitoring before widespread adoption. A/B testing can be employed to compare the performance of a new model against an existing one in a live environment, providing data-driven insights for optimal deployment.
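One common way to implement a phased rollout is deterministic bucketing. Each device ID is hashed together with the release name into a bucket from 0 to 99, and the device is included when its bucket falls below the current rollout percentage. A given device stays in the cohort as the percentage grows, and different releases pick different early adopters. The stage percentages and identifiers below are hypothetical.

```python
import hashlib

def rollout_bucket(device_id: str, release: str) -> int:
    """Stable bucket in [0, 100) for this device and release."""
    digest = hashlib.sha256(f"{release}:{device_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % 100

def in_rollout(device_id: str, release: str, percent: int) -> bool:
    """True if the device should receive `release` at the given rollout stage."""
    return rollout_bucket(device_id, release) < percent

STAGES = [1, 5, 25, 100]  # hypothetical share of the fleet (%) at each stage
```

For an A/B comparison, the devices inside the cohort form the treatment group and the rest serve as the control.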

5.2.4 Monitoring and Validation

After deployment, continuously monitoring the performance of the updated models on edge devices is crucial. This involves tracking key performance indicators (KPIs), model accuracy, latency, and resource utilization. Automated validation checks can identify any degradation in performance and trigger alerts.

5.3 Rollback Capability: The Fail-Safe Mechanism

Perhaps the most critical aspect of an OTA update pipeline is the robust rollback capability. Even with rigorous testing, unforeseen issues can arise when a new model is deployed in a complex, real-world environment. A “fail-safe” rollback mechanism allows for immediate reversion to a previously stable model version if a deployed update causes unexpected behavior, performance degradation, or critical failures.

This rollback should be:

- Automated: Initiated quickly and efficiently, often triggered by predefined alert conditions or monitoring thresholds.

- Controlled: Allowing administrators to select the specific previous version to revert to.

- Fast: Minimizing the window of potential disruption.

Without a reliable rollback mechanism, organizations face immense risk when deploying model updates at scale. The ability to quickly and seamlessly revert to a stable state provides the necessary confidence to embrace continuous model improvement and ensures the resilience of the AIoT system.
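At its core, automated rollback compares what the new version is doing in the field with an agreed guardrail. The sketch below keeps a rolling window of per-inference outcomes reported by devices running the new version. It triggers a rollback when the error rate or the p95 latency breaches hypothetical limits. It also waits for a minimum sample count, so a handful of early results cannot trigger a rollback on their own. The metric names and limits are assumptions. When the guard fires, the rollback target would typically be the newest approved version older than the failing one.

```python
import math
from collections import deque
from typing import Deque, Tuple

class RollbackGuard:
    """Decide whether a newly deployed model version should be rolled back."""

    def __init__(self, window: int = 200, min_samples: int = 50,
                 max_error_rate: float = 0.05, max_p95_latency_ms: float = 40.0):
        self.samples: Deque[Tuple[bool, float]] = deque(maxlen=window)
        self.min_samples = min_samples
        self.max_error_rate = max_error_rate
        self.max_p95_latency_ms = max_p95_latency_ms

    def record(self, ok: bool, latency_ms: float) -> None:
        self.samples.append((ok, latency_ms))

    def p95_latency(self) -> float:
        latencies = sorted(lat for _, lat in self.samples)
        rank = math.ceil(0.95 * len(latencies))  # nearest-rank percentile
        return latencies[rank - 1]

    def should_roll_back(self) -> Tuple[bool, str]:
        if len(self.samples) < self.min_samples:
            return False, "insufficient data"
        errors = sum(1 for ok, _ in self.samples if not ok)
        error_rate = errors / len(self.samples)
        if error_rate > self.max_error_rate:
            return True, f"error rate {error_rate:.1%} over limit"
        p95 = self.p95_latency()
        if p95 > self.max_p95_latency_ms:
            return True, f"p95 latency {p95:.0f} ms over limit"
        return False, "healthy"
```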

In essence, OTA model updates with integrated rollback capabilities are the cornerstone of agile and resilient AIoT deployments. They enable organizations to adapt, optimize, and secure their AIoT systems throughout their operational lifecycle, ensuring that intelligence remains current, relevant, and reliable.

6. Contextual Alerting, Not Noisy Alerting: Clarity Amidst the Signals

In the realm of AIoT, devices generate an enormous volume of data, leading to a constant stream of potential events and anomalies. The temptation is to configure alerts for every threshold breach or deviation. However, this approach inevitably leads to “alert fatigue” – a state where operators are bombarded with so many alarms that they begin to ignore them, missing critical issues amidst the noise. A successful AIoT architecture moves beyond noisy, simplistic alerting to embrace contextual alerting.

6.1 The Problem of Alert Fatigue

Imagine a factory floor with hundreds of sensors. If each sensor triggers an alert every time a parameter deviates slightly, operators will quickly become overwhelmed. This barrage of irrelevant or low-priority alerts diminishes their ability to discern truly important events. They become desensitized, and the effectiveness of the entire monitoring system is compromised. Alert fatigue slows responses to genuine crises, and it can erode operator trust, and with it adoption, faster than an inaccurate model would.

6.2 Beyond Raw Data: Correlating Signals for Meaningful Insights

Contextual alerting focuses on correlating signals from multiple sources before escalating an alert. Instead of alerting on a single sensor reading anomaly, it considers the broader operational context. This means:

- Multi-Sensor Correlation: Combining data from various sensors to confirm and enrich an anomaly. For example, a single temperature spike might be a false positive, but a temperature spike accompanied by increased vibration and motor current draw is a strong indicator of an impending problem.

- Historical Context: Comparing current conditions against historical baselines and known operational patterns. Is this spike truly unusual, or is it a normal fluctuation for this time of day or operational mode?

- Operational Context: Understanding the current state of the system or asset. Is the machine currently idle, or operating under heavy load? Alerts should be prioritized differently based on operational status.

- Business Impact: Assessing the potential impact of an anomaly on business operations. A deviation in a non-critical system might warrant a low-priority notification, while a similar deviation in a production-critical asset demands immediate escalation.

6.3 Designing Confidence Thresholds and Contextual Escalation Rules

To implement contextual alerting, the AIoT architecture must incorporate intelligence layers that go beyond simple rule-based thresholds:

6.3.1 Confidence Thresholds

AI models can often output a confidence score along with a prediction or anomaly detection. Instead of alerting on every anomaly, the system should only escalate alerts that exceed a predefined confidence threshold. This filters out low-confidence detections that might be false positives.

6.3.2 Rule Engines and Complex Event Processing (CEP)

Advanced rule engines or Complex Event Processing (CEP) systems are essential for correlating multiple events and applying business logic. These systems can process streams of events, identify patterns across time and different data sources, and trigger actions only when specific, complex conditions are met. For example, a rule might state: “IF (temperature > X AND vibration > Y AND motor_current > Z) for duration T, THEN escalate to Level 1 Alert.”
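The example rule has two parts that are easy to get wrong: all three conditions must hold together, and they must hold continuously for duration T. The sketch below implements that rule over a time-ordered stream. X, Y, Z, and T are filled with hypothetical values. A real deployment would more likely express this in a CEP engine or stream processor than in hand-written code.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional

@dataclass
class Reading:
    t: float            # seconds
    temperature: float
    vibration: float
    motor_current: float

# Hypothetical thresholds standing in for X, Y, Z and T in the rule.
X_TEMP, Y_VIB, Z_CURRENT, T_SECONDS = 80.0, 7.0, 15.0, 30.0

def sustained_rule(stream: Iterable[Reading]) -> List[float]:
    """Return the times at which a Level 1 alert fires.

    Fires once per episode, when all three conditions have held for
    T_SECONDS; any reading that breaks a condition resets the episode.
    """
    alerts: List[float] = []
    episode_start: Optional[float] = None
    fired = False
    for r in stream:
        breach = (r.temperature > X_TEMP and r.vibration > Y_VIB
                  and r.motor_current > Z_CURRENT)
        if not breach:
            episode_start, fired = None, False
            continue
        if episode_start is None:
            episode_start = r.t
        if not fired and r.t - episode_start >= T_SECONDS:
            alerts.append(r.t)
            fired = True  # do not re-alert until the episode ends
    return alerts
```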

6.3.3 Tiered Escalation Paths

Not all alerts are created equal. A tiered escalation strategy ensures that the right people are notified at the right time with the appropriate level of urgency. This might involve:

- Informational Notifications: Low-priority events that are logged for review but don’t require immediate human intervention.

- Warning Alerts: Events that indicate a potential issue but are not yet critical, perhaps notifying local operators or maintenance teams.

- Critical Alerts: High-priority events requiring immediate attention, escalating to supervisors, central control rooms, or even triggering automated shutdowns.

The escalation path should clearly define who is notified, through what channels (email, SMS, pager, dashboard), and what actions are expected.
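This sketch shows one way a confidence threshold (6.3.1) and an asset's business criticality might combine into the three tiers above. The cut-off values, the criticality flag, and the channel mapping are hypothetical policy choices, not recommendations.

```python
from enum import Enum
from typing import Dict, List

class Tier(Enum):
    INFO = "informational"
    WARNING = "warning"
    CRITICAL = "critical"

# Hypothetical channel policy per tier.
CHANNELS: Dict[Tier, List[str]] = {
    Tier.INFO: ["dashboard"],
    Tier.WARNING: ["dashboard", "email"],
    Tier.CRITICAL: ["dashboard", "sms", "pager"],
}

def classify(confidence: float, asset_critical: bool,
             min_confidence: float = 0.6, critical_confidence: float = 0.9) -> Tier:
    """Map a model's anomaly confidence and asset criticality to an alert tier."""
    if confidence < min_confidence:
        return Tier.INFO  # below threshold: log for review, do not page anyone
    if asset_critical and confidence >= critical_confidence:
        return Tier.CRITICAL
    return Tier.WARNING

def route(confidence: float, asset_critical: bool) -> List[str]:
    """Channels to notify for one detection."""
    return CHANNELS[classify(confidence, asset_critical)]
```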

6.3.4 Feedback Loops

The alerting system should incorporate feedback from operators. If an alert is consistently a false positive, the system should learn and adjust its thresholds or correlation rules. This continuous learning process refines the alerting logic, making it more intelligent and effective over time.
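Here is a minimal sketch of one such feedback loop. Operators label each alert as useful or as a false positive. A per-rule confidence threshold is nudged up when the recent false-positive rate is too high, and nudged down more slowly when it is very low. The target rate, step size, window, and bounds are assumptions. Any automatic adjustment like this should be bounded and audited so it cannot silently suppress genuine alarms.

```python
from collections import deque
from typing import Deque

class AdaptiveThreshold:
    """Per-rule confidence threshold tuned by operator feedback (bounded)."""

    def __init__(self, threshold: float = 0.6, target_fp_rate: float = 0.2,
                 step: float = 0.02, window: int = 50,
                 lower: float = 0.5, upper: float = 0.95):
        self.threshold = threshold
        self.target_fp_rate = target_fp_rate
        self.step = step
        self.lower, self.upper = lower, upper
        # True = operator marked the alert as a false positive.
        self.labels: Deque[bool] = deque(maxlen=window)

    def feedback(self, false_positive: bool) -> float:
        self.labels.append(false_positive)
        fp_rate = sum(self.labels) / len(self.labels)
        if fp_rate > self.target_fp_rate:
            # Too noisy: require more confidence before alerting.
            self.threshold = min(self.upper, self.threshold + self.step)
        elif fp_rate < self.target_fp_rate / 2:
            # Very quiet: relax slowly so real issues are not missed.
            self.threshold = max(self.lower, self.threshold - self.step / 2)
        return self.threshold
```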

By prioritizing contextual alerting, AIoT systems can transform from a source of overwhelming notifications into an intelligent assistant that highlights precisely what matters, when it matters. This clarity enables faster, more effective responses, builds trust with operators, and ultimately drives the successful adoption and value realization of AIoT initiatives.

7. Strategic Data Retention: From Liability to Asset

In the era of big data, the mantra often heard is “store everything.” While data is undoubtedly a valuable asset in AIoT, indiscriminately storing every single data point from every device, forever, is not a strategy; it’s a significant liability. Unmanaged data growth leads to escalating storage costs, complicates data governance, hinders query performance, and can even pose regulatory compliance risks. A successful AIoT architecture demands a strategic approach to data retention, categorizing data based on its value, frequency of access, and compliance requirements.

7.1 The Pitfalls of Indefinite Data Storage

Storing everything forever comes with several drawbacks:

- Exploding Costs: Storage costs accrue quietly but relentlessly. For large-scale AIoT deployments with millions of devices generating terabytes or petabytes of data daily, these costs can quickly become prohibitive and cripple scaling efforts.

- Performance Degradation: Querying and analyzing vast, undifferentiated datasets becomes slower and more resource-intensive, impacting the responsiveness of AI models and analytical applications.

- Compliance Risks: Retaining sensitive data beyond its necessary lifecycle can expose organizations to regulatory fines and reputational damage, especially concerning data privacy regulations (e.g., GDPR, CCPA).

- Management Complexity: Managing and securing huge data lakes without clear retention policies increases operational overhead and introduces potential security vulnerabilities.

7.2 Defining Hot, Warm, and Cold Data Layers Strategically

The key to strategic data retention is to categorize data into different tiers based on its intended use throughout its lifecycle. This multi-tiered approach optimizes costs, performance, and compliance.

7.2.1 Hot Data (Real-time/Near Real-time)

- Purpose: Data that is immediately needed for real-time AI inference, operational monitoring, and instantaneous decision-making.

- Characteristics: High-frequency access, low latency required.

- Storage: Typically in-memory databases, fast SSD-backed databases, or specialized time-series databases at the edge or in a high-performance cloud tier.

- Retention: Very short; perhaps seconds, minutes, or a few hours, just long enough for immediate processing and initial analysis.

7.2.2 Warm Data (Recent Historical)

- Purpose: Data used for recent trend analysis, short-term historical comparisons, debugging, and retraining of AI models.

- Characteristics: Accessed frequently but not constantly, moderate latency acceptable.

- Storage: Scalable cloud databases, data warehouses, or object storage with higher performance tiers.

- Retention: Days, weeks, or a few months, depending on the analytical needs and model retraining cycles.

7.2.3 Cold Data (Long-term Archive)

- Purpose: Data primarily retained for regulatory compliance, long-term historical analysis, auditing, or forensic investigations. It’s often used for periodic, deep-dive analysis or full model retraining.

- Characteristics: Infrequent access, high latency acceptable, lowest cost storage.

- Storage: Low-cost object storage (e.g., the Amazon S3 Glacier storage classes or the Azure Blob Storage archive tier) or tape archives.

- Retention: Months, years, or indefinitely, as mandated by compliance requirements.
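The three tiers translate naturally into an age-based policy. In the sketch below, the cut-offs (6 hours hot, 90 days warm, 7 years cold) are hypothetical values picked from within the ranges above. The real numbers should come from model-retraining cycles and the applicable retention regulations.

```python
from datetime import timedelta
from typing import Optional

# Hypothetical cut-offs chosen from within the ranges described above.
HOT_FOR = timedelta(hours=6)
WARM_FOR = timedelta(days=90)
COLD_FOR = timedelta(days=7 * 365)

def storage_tier(age: timedelta, legal_hold: bool = False) -> Optional[str]:
    """Return 'hot', 'warm', 'cold', or None (eligible for deletion)."""
    if age < HOT_FOR:
        return "hot"
    if age < WARM_FOR:
        return "warm"
    if age < COLD_FOR or legal_hold:
        return "cold"  # a legal hold keeps data archived past its schedule
    return None
```

Managed services often express the same idea declaratively, for example through Amazon S3 Lifecycle rules that transition objects between storage classes.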

7.3 Implementing Data Lifecycle Management Policies

Effective data retention requires robust data lifecycle management policies and automated processes. This involves:

- Automated Tiering: Rules that automatically move data from hot to warm to cold storage based on predefined criteria (e.g., data age, access patterns).

- Data Archiving and Deletion: Clear policies for what data needs to be archived and when it can be safely purged in accordance with retention schedules and compliance regulations.

- Data Governance: Establishing clear ownership, access controls, and auditing mechanisms for all data tiers.

- Cost Optimization: Regularly reviewing storage costs across tiers and optimizing policies to balance performance needs with budgetary constraints.

- Anonymization/Pseudonymization: For sensitive data, implementing anonymization or pseudonymization techniques before archiving reduces compliance risks while retaining analytical value.

The goal is to retain what truly adds value – what trains better models, provides critical insights, and proves compliance – and judiciously discard the rest. By adopting a strategic approach to data retention, AIoT architectures can transform data from a potential liability into a continuously valuable asset, ensuring cost-effectiveness, performance, and regulatory adherence throughout the system’s lifecycle.
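As an illustration of pseudonymizing sensitive fields before archiving, the sketch below replaces identifiers with keyed HMAC-SHA256 tokens using only Python's standard library. The field names and the `pseudonymize` helper are hypothetical, and key management is out of scope. Note that this is pseudonymization, not anonymization: anyone holding the key can recompute tokens for known identifiers and re-link records.

```python
import hashlib
import hmac
from typing import Any, Dict

# Hypothetical list of identifying fields; which fields qualify depends on the deployment.
IDENTIFYING_FIELDS = ("operator_id", "device_serial")


def pseudonymize(record: Dict[str, Any], key: bytes) -> Dict[str, Any]:
    """Return a copy of `record` with identifying fields replaced by HMAC-SHA256 tokens.

    The same identifier always yields the same token under the same key, so
    per-device or per-operator trends can still be analyzed in the archive.
    """
    out = dict(record)  # leave the caller's record untouched
    for field in IDENTIFYING_FIELDS:
        value = out.get(field)
        if value is not None:
            token = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256)
            out[field] = token.hexdigest()
    return out


reading = {"operator_id": "op-17", "device_serial": "SN-0042", "temp_c": 71.3}
archived = pseudonymize(reading, key=b"replace-with-a-managed-secret")
print(archived["temp_c"])  # 71.3 (measurements are kept as-is)
```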

8. Fail-Safe Mechanisms: Resilience Over Unquestioning Intelligence

The allure of artificial intelligence can tempt teams to treat it as infallible. However, in industrial AIoT systems, assuming the AI will always be right is a dangerous gamble. AI models, despite their sophistication, can make errors, encounter unforeseen scenarios, or drift in performance over time. In environments where physical assets, human safety, and critical operations are at stake, resilience matters more than intelligence alone. A robust AIoT architecture must integrate comprehensive fail-safe mechanisms, including clear human override paths and predefined fallback states.

8.1 The Reality of AI Imperfection

AI models are trained on historical data and operate within the bounds of what they have learned. They can struggle with:

- Edge Cases: Scenarios not adequately represented in their training data.

- Novel Conditions: Unprecedented events or environmental changes.

- Sensor Failures/Drift: Incorrect or degraded input data.

- Adversarial Attacks: Malicious inputs designed to disrupt model performance.

- Concept Drift: Changes in the underlying relationships between data points that render the model’s past learning obsolete.

In an AIoT system controlling a critical piece of machinery, a mistaken AI decision could lead to asset damage, production halts, or even human injury. Therefore, designing for resilience means acknowledging these imperfections and building layers of protection.

8.2 Designing Safe Fallback States

A fallback state is a predefined, safe operational mode that an AIoT system can revert to if its AI component encounters an error or an ambiguous situation, or fails outright. This could involve:

- Halting Operation: In critical safety scenarios, the safest fallback might be to immediately stop the controlled process or machinery.

- Manual Control: Shifting control from AI automation to human operators.

- Predefined Parameters: Operating equipment based on conservative, pre-programmed parameters rather than AI-driven optimizations.

- Redundant Systems: Switching to a backup AI model or an alternative control system.

- Degraded Mode: Reducing functionality but maintaining core operations, e.g., a smart factory reducing throughput rather than shutting down completely.

The selection of a fallback state depends entirely on the criticality of the system and the potential consequences of AI failure. It requires a thorough risk assessment during the architectural design phase.
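One way to capture the outcome of that risk assessment is a small, deterministic selection function, so the fallback choice is reviewed at design time rather than improvised during an incident. The Python sketch below is illustrative: the `Criticality` levels, the `select_fallback` name, and especially the priority order are assumptions, not a standard.

```python
from enum import Enum


class Fallback(Enum):
    HALT = "halt"                            # stop the controlled process
    BACKUP_MODEL = "backup_model"            # redundant model or control system
    MANUAL = "manual"                        # hand control to human operators
    PREDEFINED_PARAMS = "predefined_params"  # conservative pre-programmed setpoints
    DEGRADED = "degraded"                    # reduced throughput, core operations continue


class Criticality(Enum):
    LOW = 1
    MEDIUM = 2
    SAFETY_CRITICAL = 3


def select_fallback(criticality: Criticality,
                    backup_model_healthy: bool,
                    operator_available: bool) -> Fallback:
    """Pick a fallback state after the primary AI component fails.

    The ordering is an illustrative policy; in practice it comes from the
    design-time risk assessment for the specific system.
    """
    if criticality is Criticality.SAFETY_CRITICAL:
        # Where a wrong action can injure someone, stopping is the safest default.
        return Fallback.HALT
    if backup_model_healthy:
        return Fallback.BACKUP_MODEL
    if operator_available:
        return Fallback.MANUAL
    if criticality is Criticality.MEDIUM:
        # No healthy model and no operator: run on conservative setpoints.
        return Fallback.PREDEFINED_PARAMS
    # Low criticality: keep core operations running at reduced capacity.
    return Fallback.DEGRADED


print(select_fallback(Criticality.MEDIUM, backup_model_healthy=False, operator_available=True))
# Fallback.MANUAL
```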

8.3 Human Override Paths: The Ultimate Safety Net

Even the most sophisticated automated systems require human oversight, especially in industrial contexts. Providing clear, intuitive, and readily accessible human override paths is paramount. This ensures that operators can intervene quickly and effectively when:

- The AI makes an incorrect decision.

- The AI encounters a situation it cannot handle.

- Human judgment is deemed necessary due to unforeseen circumstances.

- The system malfunctions in a way the AI cannot self-correct.

Human override mechanisms must be:

- Prioritized: Human commands should take precedence over AI instructions.

- Easily Accessible: Physical buttons, emergency stop mechanisms, or clear UI controls must be available.

- Intuitive: Training and clear procedures are essential so that operators can confidently and correctly intervene.

- Monitored: Any human intervention should be logged for analysis, helping to improve the AI or the system design.

For instance, in vehicles with driver assistance or conditional automation, the driver must be able to take manual control. Similarly, for an autonomous industrial robotic arm, an emergency stop button or a manual joystick control interface is vital.
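The “prioritized” and “monitored” requirements can be sketched at the software layer as a command arbiter that sits between the AI and the actuator. This Python example is a hypothetical design (the `Command` and `CommandArbiter` names are illustrative). It complements, and does not replace, hardwired emergency stops, which are normally implemented in dedicated safety circuits rather than application code.

```python
import logging
import time
from dataclasses import dataclass
from typing import Any, Dict, List, Optional

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("override")


@dataclass
class Command:
    source: str                     # "human" or "ai"
    setpoint: float
    operator: Optional[str] = None  # who issued a human command


class CommandArbiter:
    """Decides which setpoint reaches the actuator; human commands always win."""

    def __init__(self) -> None:
        self.override_active = False
        self.applied: Optional[float] = None
        self.audit: List[Dict[str, Any]] = []

    def submit(self, cmd: Command) -> bool:
        """Return True if the command was applied."""
        if cmd.source == "human":
            # Prioritized: a human command is applied immediately and latches the override.
            self.override_active = True
            self.applied = cmd.setpoint
            self._record("override", cmd.source, cmd.operator, cmd.setpoint)
            return True
        if self.override_active:
            # AI output is ignored (but still logged) while a human is in control.
            self._record("ai_command_suppressed", cmd.source, None, cmd.setpoint)
            return False
        self.applied = cmd.setpoint
        return True

    def release_override(self, operator: str) -> None:
        """Hand control back to the AI; only an explicit human action does this."""
        self.override_active = False
        self._record("override_released", "human", operator, self.applied)

    def _record(self, event: str, source: str, operator: Optional[str],
                setpoint: Optional[float]) -> None:
        # Monitored: every intervention is kept for later analysis.
        entry = {"ts": time.time(), "event": event, "source": source,
                 "operator": operator, "setpoint": setpoint}
        self.audit.append(entry)
        log.info("%s", entry)


arbiter = CommandArbiter()
arbiter.submit(Command("ai", 60.0))
arbiter.submit(Command("human", 20.0, operator="op-17"))
print(arbiter.submit(Command("ai", 85.0)))  # False: the override is latched
print(arbiter.applied)                      # 20.0
```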

8.4 Building Resilience Beyond Intelligence

Beyond fallback states and human overrides, fail-safe mechanisms also encompass:

- Redundancy: Duplicating critical components (sensors, controllers, network links) to ensure continuous operation in case of individual component failure.

- Self-Healing Capabilities: Systems designed to automatically detect and recover from certain types of failures without human intervention.

- Real-time Diagnostics: Continuous monitoring of both physical components and AI model performance to detect anomalies early.

- Robust Error Handling: Comprehensive error detection and handling routines within the software to prevent cascading failures.

The objective is to build an AIoT system that is not only intelligent but also inherently resilient – a system that can gracefully handle unexpected events, mitigate risks, and ensure continuous, safe operation even when its AI capabilities are challenged. This focus on reliability and robustness underscores the principle that in industrial systems, resilience often matters more than raw intelligence alone.
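Real-time diagnostics and self-healing often start with something as simple as a heartbeat watchdog. The Python sketch below is a minimal, hypothetical example: the monitored component calls `kick()` on each healthy cycle, a supervisor calls `check()` periodically, a missed heartbeat triggers a bounded number of automatic restarts, and only after that does the watchdog escalate to humans. The clock is injectable so the logic can be tested without waiting.

```python
import time
from typing import Callable


class Watchdog:
    """Heartbeat watchdog with bounded automatic recovery, then escalation."""

    def __init__(self, timeout_s: float, recover: Callable[[], None],
                 escalate: Callable[[], None], max_restarts: int = 3,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s
        self.recover = recover          # e.g. restart the inference service
        self.escalate = escalate        # e.g. enter a fallback state and alert operators
        self.max_restarts = max_restarts
        self.clock = clock              # injectable for testing
        self.last_beat = clock()
        self.restarts = 0

    def kick(self) -> None:
        """Called by the monitored component on every healthy cycle."""
        self.last_beat = self.clock()
        self.restarts = 0  # healthy again: restore the restart budget

    def check(self) -> str:
        """Called periodically by a supervisor; returns the action taken."""
        if self.clock() - self.last_beat <= self.timeout_s:
            return "ok"
        if self.restarts < self.max_restarts:
            self.restarts += 1
            self.recover()                 # self-healing attempt
            self.last_beat = self.clock()  # give the restarted component a fresh window
            return "recovering"
        self.escalate()                    # budget exhausted: stop retrying, involve humans
        return "escalated"


wd = Watchdog(timeout_s=2.0,
              recover=lambda: print("restarting inference service"),
              escalate=lambda: print("entering fallback state, alerting operators"))
wd.kick()
print(wd.check())  # ok
```

Bounding the restarts matters: a component that crashes on every start should be handed to a fallback state and a human, not restarted forever.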

9. Fail-Safe Mechanisms for AI: Trust, But Verify, But Also Protect

This section delves deeper into the critical aspect of ensuring AI systems do not operate as black boxes, especially when they are controlling physical processes. The principle here is not just about human oversight, but also about building in systemic “safety valves” for the AI itself.

9.1 The Inherent Risks of Autonomous AI

As AI models become more sophisticated and autonomous, the risks associated with their potential failures grow. These failures could stem from:

- Data Poisoning: Malicious alteration of training data, leading to biased or incorrect model behavior.

- Adversarial Attacks: Carefully crafted inputs that intentionally fool an AI model, causing it to make incorrect predictions.

- Unforeseen Interactions: AI models operating in complex environments might interact with other systems or conditions in ways not anticipated during design.

In mission-critical AIoT applications, these risks cannot be ignored. The goal is to design systems that expect failure and are prepared for it.

9.2 The “Assume the AI Will Not Always Be Right” Principle

This is a fundamental shift in mindset. Instead of optimistically assuming flawless AI operation, a robust architecture proactively plans for scenarios where the AI’s recommendations or actions are incorrect, ambiguous, or even malicious. This principle guides the design of all fail-safe mechanisms.

9.3 Systemic Fallback States: Not Just for Humans

While human override is crucial, the system itself should have automated “tripwires” or fallback states that activate when the AI’s performance deviates significantly or when certain conditions are met. These could include:

- Confidence Scoring: If an AI model’s prediction confidence drops below a predefined threshold, the system automatically reverts to a safer, default mode or requests human review. For example, if a vision AI model detects an object but with only 30% confidence, it might trigger a warning rather than initiating a high-risk autonomous action.

- Anomaly Detection on AI Outputs: Monitoring the AI’s actual outputs or actions. If the AI starts generating commands that are statistically unusual or physically impossible (e.g., commanding a valve to open to 120% capacity), the system should detect this anomaly and halt or revert.

- Redundancy with Diversity: Employing multiple AI models (trained with different algorithms or datasets) and using a voting system or a weighted average. If one model deviates significantly from the others, its output can be flagged or ignored, preventing a single point of AI failure.

- Physical Constraints: Implementing physical barriers or governors that prevent the AI from issuing commands that would cause physical damage or exceed safe operating limits, regardless of what the AI “thinks.”
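These tripwires can be chained into a single guard that every AI output passes through before it reaches an actuator. The Python sketch below assumes a valve-position command in percent open, several diverse models voting on it, and a single confidence value (for example, the lowest confidence the models report); the thresholds, limits, and the `guard` function are hypothetical. The final clamp is only a software analogue of the physical constraints described above, which in a real plant would also exist as hardware governors or interlocks.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional, Sequence

# Hypothetical thresholds for a valve-position command, in percent open.
MIN_CONFIDENCE = 0.8           # below this, request human review instead of acting
PHYSICAL_RANGE = (0.0, 100.0)  # anything outside is physically impossible
MAX_SPREAD = 10.0              # max deviation (points) from the median vote
SAFE_LIMITS = (5.0, 90.0)      # engineering limits, tighter than the physical range


@dataclass
class Decision:
    action: str             # "apply", "warn" (request human review), or "revert"
    value: Optional[float]
    reason: str


def guard(predictions: Sequence[float], confidence: float) -> Decision:
    """Pass diverse model outputs through the tripwires before actuation."""
    if not predictions:
        return Decision("revert", None, "no model output")
    # Confidence scoring: low confidence triggers a warning, not an action.
    if confidence < MIN_CONFIDENCE:
        return Decision("warn", None, f"confidence {confidence:.2f} below threshold")
    # Anomaly detection on outputs: reject physically impossible commands.
    lo, hi = PHYSICAL_RANGE
    if any(not lo <= p <= hi for p in predictions):
        return Decision("revert", None, "command outside physical range")
    # Redundancy with diversity: ignore models that stray from the median vote.
    vote = median(predictions)
    agreeing = [p for p in predictions if abs(p - vote) <= MAX_SPREAD]
    if 2 * len(agreeing) <= len(predictions):
        return Decision("warn", None, "no majority agreement between models")
    # Constraint layer: clamp to safe limits regardless of what the models "think".
    safe_lo, safe_hi = SAFE_LIMITS
    return Decision("apply", min(max(median(agreeing), safe_lo), safe_hi), "ok")


print(guard([40.0, 42.0, 95.0], confidence=0.93))
# Decision(action='apply', value=41.0, reason='ok'): the 95.0 outlier is outvoted
```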

9.4 Human-in-the-Loop Architectures, Not Just Human Override

Beyond simply having an override button, a “human-in-the-loop” architecture means humans are actively involved in the AI’s decision-making process at strategic points. This could involve:

- AI for Recommendation, Human for Decision: The AI provides recommendations or predictions, but a human operator makes the final decision, especially for high-stakes actions.

- Supervisory Control: Humans monitor AI operations and intervene when necessary, effectively overseeing rather than directly controlling.

- Explainable AI (XAI) Interfaces: Providing operators with clear explanations of why the AI made a particular decision, enabling them to better understand, trust, and even correct the AI.

These approaches move beyond reactive override to proactive human involvement, fostering a symbiotic relationship between human and AI intelligence.
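A minimal way to implement “AI for Recommendation, Human for Decision” is to route recommendations by risk: low-risk actions execute automatically under supervision, while high-risk ones wait in a queue for an operator, together with the model's rationale. The Python sketch below is hypothetical; the `Recommendation` fields, the risk labels, and the `HumanInTheLoop` class are illustrative.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Recommendation:
    action: str
    risk: str        # "low" or "high": hypothetical labels from a risk assessment
    rationale: str   # explanation surfaced to the operator, XAI-style


class HumanInTheLoop:
    def __init__(self, execute: Callable[[str], None]) -> None:
        self.execute = execute
        self.pending: Dict[int, Recommendation] = {}
        self.decisions: List[Tuple[int, str, bool]] = []  # (id, operator, approved)
        self._ids = itertools.count(1)

    def propose(self, rec: Recommendation) -> str:
        if rec.risk == "low":
            # Supervisory control: routine actions run while humans monitor.
            self.execute(rec.action)
            return "executed"
        # High stakes: the AI only recommends; a human makes the final call.
        rec_id = next(self._ids)
        self.pending[rec_id] = rec
        return f"awaiting approval #{rec_id}"

    def review_queue(self) -> List[Tuple[int, str, str]]:
        """What an operator console would display: id, proposed action, rationale."""
        return [(i, r.action, r.rationale) for i, r in self.pending.items()]

    def decide(self, rec_id: int, approved: bool, operator: str) -> bool:
        rec = self.pending.pop(rec_id)
        self.decisions.append((rec_id, operator, approved))
        if approved:
            self.execute(rec.action)
        return approved


hitl = HumanInTheLoop(execute=lambda action: print("executing", action))
print(hitl.propose(Recommendation("shutdown_line_3", "high",
                                  "bearing temperature trend exceeds learned baseline")))
# awaiting approval #1
```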

9.5 Resilience as a Core Architectural Principle

In high-stakes industrial AIoT, building for resilience means accepting that unexpected situations will arise. The architecture must prioritize:

- Graceful Degradation: When parts of the system or AI fail, the entire system doesn’t collapse but continues to operate in a reduced or safer mode.

- Error Containment: Isolating issues to prevent them from spreading and causing systemic failures.

- Rapid Recovery: Mechanisms for quickly diagnosing and resolving problems and restoring full functionality.

These considerations are not add-ons; they are integral components of an AIoT architecture that aims for successful and safe long-term operation. By embracing the principle that AI, while powerful, is not infallible, and by proactively building in comprehensive fail-safe and human-in-the-loop mechanisms, organizations can deploy AIoT solutions with confidence, ensuring both intelligence and unwavering operational integrity.

Conclusion: Crafting the Future of Connected Intelligence

The journey to successful AIoT implementation is complex, demanding a meticulous approach that extends far beyond the development of sophisticated AI models. While groundbreaking algorithms and impressive model accuracy are undoubtedly components of the equation, they represent only a fraction of what is truly required to bring intelligent, connected systems to life in the real world. The ultimate success or failure of an AIoT initiative rests squarely on a handful of critical architectural decisions made early and rigorously adhered to throughout the development lifecycle.

These nine architectural decisions – from the strategic distribution of intelligence between edge and cloud to the absolute imperative of device-level security, the agility of event-driven architectures, the profound insights unlocked by sensor fusion and digital twins, the necessity of flexible OTA model updates with rollback, the clarity of contextual alerting, the efficiency of strategic data retention, and the non-negotiable importance of fail-safe mechanisms – collectively form the bedrock of robust, scalable, and resilient AIoT deployments.

They are the “hard calls” that differentiate a stalled pilot from a transformative, production-ready platform. They demand a deep understanding of operational environments, foresight about future challenges, and a commitment to building systems that prioritize reliability, security, and human oversight alongside artificial intelligence.

The future of industries, cities, and critical infrastructure will increasingly intertwine with AIoT. By focusing on these architectural fundamentals, organizations can move beyond the hype and build solutions that not only deliver on the promise of connected intelligence but also thrive under the unpredictable conditions of the real world. It’s about designing for scale, building for endurance, and, most importantly, shipping intelligence that truly makes a difference – not just data.

Ready to navigate these critical architectural decisions and unlock the full potential of your AIoT initiatives? We invite you to connect with the experts at IoT Worlds. Together, we can engineer a future where your intelligent systems don’t just survive, but truly succeed.

Contact us today to discuss your vision and challenges. Send an email to info@iotworlds.com.