The telecommunications landscape is on the cusp of its most significant transformation since the advent of 4G. This shift, driven by the integration of Artificial Intelligence directly into the Radio Access Network (RAN), is known as AI-RAN. While the term is gaining traction, many engineers and industry professionals are still grappling with its profound implications. This comprehensive cheatsheet aims to demystify AI-RAN, providing a detailed breakdown of its core concepts, architectural layers, use cases, key players, deployment status, and learning pathways.

AI-RAN is not merely an incremental upgrade or the application of AI to existing RAN infrastructure. It represents a fundamental paradigm shift where the base station itself evolves into a powerful AI computer. This revolutionary concept enables the simultaneous execution of traditional 5G baseband processing and advanced AI inference workloads on the same GPU-accelerated hardware, directly at the network edge. This integrated approach redefines the capabilities of wireless networks, paving the way for unprecedented efficiency, intelligence, and innovative services.

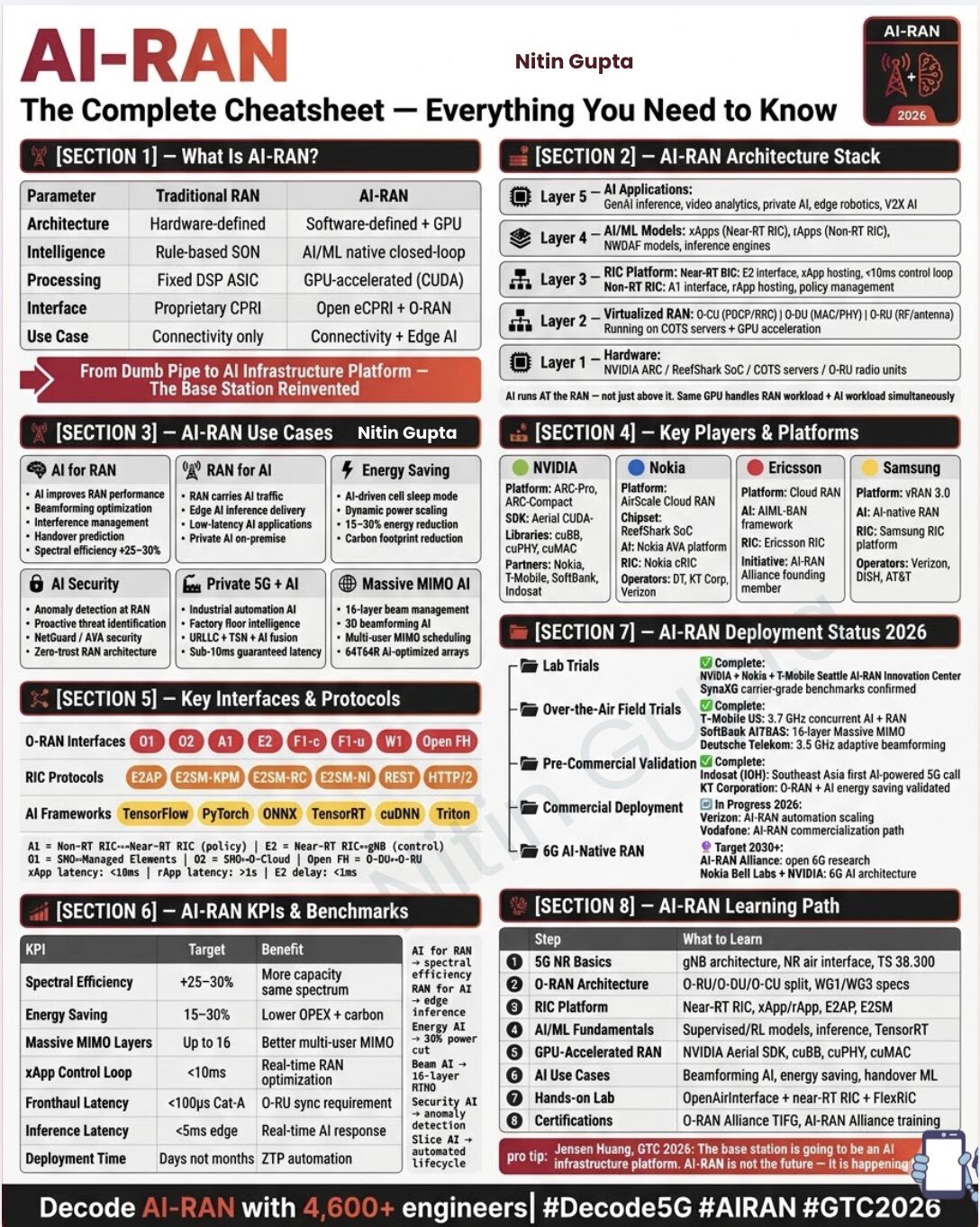

What IS AI-RAN Exactly?

At its core, AI-RAN transforms the traditional Radio Access Network, moving beyond a simple communication pipeline to become a sophisticated AI-driven infrastructure platform.

Beyond Traditional RAN: A Fundamental Shift

Traditionally, the RAN has been characterized by hardware-defined architectures, rule-based intelligence for Self-Organizing Networks (SON), and processing handled by fixed Digital Signal Processor (DSP) ASICs. The interface was typically proprietary CPRI (Common Public Radio Interface), and its primary use case was connectivity.

AI-RAN shatters these limitations. Its architecture is explicitly software-defined and heavily leverages Graphics Processing Units (GPUs). Intelligence is no longer rule-based but relies on AI/ML native closed-loop systems. Processing, a critical aspect, is GPU-accelerated, often utilizing CUDA. The interface shifts towards open eCPRI and the broader O-RAN framework, signifying a move towards open, disaggregated, and intelligent networks. Crucially, its use case extends beyond mere connectivity to encompass connectivity plus edge AI.

The Base Station Reinvented: An AI Computer

The fundamental breakthrough of AI-RAN lies in the transformation of the base station into an AI computer. Imagine a single GPU not only efficiently handling the complex calculations required for 5G baseband processing but also simultaneously executing sophisticated AI inference tasks. This parallel processing capability, directly at the edge, is what defines AI-RAN and unlocks its immense potential. It’s a move “From Dumb Pipe to AI Infrastructure Platform – The Base Station Reinvented,” as articulated by Jensen Huang at GTC 2026: “The base station is going to be an AI infrastructure platform. AI-RAN is not the future – It is happening.”

The AI-RAN Architecture Stack – 5 Layers

The AI-RAN stack is a layered architecture designed to harmoniously integrate communication and compute, enabling both traditional RAN functionalities and advanced AI workloads. This five-layer model illustrates how AI-RAN operates from the physical hardware up to sophisticated applications.

Layer 1: Hardware

This foundational layer comprises the physical infrastructure that underpins AI-RAN. It includes high-performance computing components essential for both RAN and AI workloads.

- NVIDIA ARC / ReefShark SoC / COTS servers / O-RU radio units: This layer incorporates specialized hardware like NVIDIA’s ARC platforms, Nokia’s ReefShark System-on-Chip (SoC), and commercial off-the-shelf (COTS) servers, all designed to host the demanding computations of the RAN and AI. O-RU (Open Radio Unit) radio units represent the RF front-end. The key here is that AI runs at the RAN, not just above it, with the same GPU handling both RAN and AI workloads simultaneously.
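One way to picture "the same GPU handling both RAN and AI workloads" is a priority split in which RAN processing is served first and AI inference uses whatever capacity remains. The sketch below is only an illustration of that idea: the function name, the fractional-capacity model, and the example demands are assumptions, not how any vendor's scheduler actually works.

```python
def split_gpu(ran_demand: float, ai_demand: float, capacity: float = 1.0) -> dict:
    """Serve RAN demand first, then give AI inference the leftover capacity.

    Demands and capacity are fractions of one GPU (hypothetical model).
    """
    if ran_demand < 0 or ai_demand < 0:
        raise ValueError("demands must be non-negative")
    ran_share = min(ran_demand, capacity)
    ai_share = min(ai_demand, capacity - ran_share)
    idle = max(0.0, capacity - ran_share - ai_share)
    return {"ran": ran_share, "ai": ai_share, "idle": idle}


# Busy hour: RAN needs 70% of the GPU, so AI is capped at the remaining 30%.
busy = split_gpu(ran_demand=0.7, ai_demand=0.5)

# Night: RAN needs only 20%, so AI gets its full 50% and 30% stays idle.
night = split_gpu(ran_demand=0.2, ai_demand=0.5)

print(busy)
print(night)
```

The design choice worth noting is the ordering: connectivity is treated as the non-negotiable workload, and AI capacity is whatever the radio does not need at that moment.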

Layer 2: Virtualized RAN

Building on the hardware, this layer virtualizes the traditional RAN functions, making them more flexible and software-driven.

- O-CU (PDCP/RRC) / O-DU (MAC/PHY) / O-RU (RF/antenna) running on COTS servers + GPU acceleration: This layer embodies the disaggregation principles of O-RAN. The Central Unit (O-CU) handles higher-layer protocols such as PDCP (Packet Data Convergence Protocol) and RRC (Radio Resource Control). The Distributed Unit (O-DU) handles the RLC (Radio Link Control), MAC (Medium Access Control), and upper PHY (Physical Layer) functions, while the O-RU handles the lower PHY, RF, and antenna functions. The O-CU and O-DU are virtualized and run on COTS servers, with GPU acceleration for the compute-heavy PHY processing.

Layer 3: RIC Platform

The RAN Intelligent Controller (RIC) is central to injecting intelligence and programmability into the RAN. It provides the framework for deploying AI/ML applications and policies.

- Near-RT RIC (10 ms–1 s) + Non-RT RIC (policy): The RIC is split into two primary components. The Near-Real-Time RIC (Near-RT RIC) runs control loops in the range of roughly 10 milliseconds to 1 second, making near-real-time adjustments to RAN functions possible via the E2 interface and xApps. The Non-Real-Time RIC (Non-RT RIC) runs control loops longer than 1 second, focuses on policy management, and provides guidance to the Near-RT RIC via the A1 interface, leveraging rApps.
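The split between the two RIC tiers is essentially a split by latency budget. A minimal sketch of that routing decision follows. The 10 ms and 1 s boundaries are assumptions based on the commonly cited O-RAN control-loop ranges, and the function name and tier labels are hypothetical.

```python
def control_loop_tier(latency_budget_ms: float,
                      near_rt_floor_ms: float = 10.0,
                      non_rt_floor_ms: float = 1000.0) -> str:
    """Map a control task's latency budget to the tier that would host it.

    Boundaries are assumptions: below ~10 ms the loop stays inside the
    O-DU/O-RU, 10 ms to 1 s is near-RT RIC territory, above that non-RT RIC.
    """
    if latency_budget_ms < 0:
        raise ValueError("latency budget must be non-negative")
    if latency_budget_ms < near_rt_floor_ms:
        return "real-time (O-DU/O-RU scheduler)"
    if latency_budget_ms < non_rt_floor_ms:
        return "near-RT RIC (xApp)"
    return "non-RT RIC (rApp)"


for task, budget in [("per-slot scheduling", 0.5),
                     ("handover steering", 50),
                     ("energy-saving policy", 60_000)]:
    print(f"{task}: {control_loop_tier(budget)}")
```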

Layer 4: AI/ML Models

This layer hosts the actual AI and Machine Learning models that drive intelligence within the RAN and enable new services.

- xApps (Near-RT RIC), rApps (Non-RT RIC), NWDAF inference engines: xApps and rApps are the intelligent applications that reside within the RIC. xApps are deployed on the Near-RT RIC for near-real-time control and optimization tasks. rApps are deployed on the Non-RT RIC for broader policy management, network optimization, and resource allocation. Network Data Analytics Function (NWDAF) inference engines, which belong to the 5G core rather than the RIC, complement them by using network data for analytics and prediction.

Layer 5: AI Applications

At the top of the stack are the diverse AI applications that are enabled and enhanced by the underlying AI-RAN infrastructure.

- GenAI, video analytics, private AI, edge robotics, V2X AI: This layer represents the culmination of AI-RAN’s capabilities. It includes applications like Generative AI (GenAI) for creating new content or optimizing network operations, real-time video analytics for security or traffic management, private AI solutions deployed on-premise for enterprises, edge robotics requiring ultra-low latency control, and Vehicle-to-Everything (V2X) AI for intelligent transportation systems. These applications directly benefit from the distributed compute and intelligence of AI-RAN.

6 Things AI-RAN Actually Does

AI-RAN’s capabilities span a wide range of critical functionalities, offering significant improvements to performance, efficiency, security, and the enablement of new use cases.

AI for RAN: Optimizing Network Performance

This category highlights how AI directly enhances the traditional functions of the Radio Access Network itself.

- Beamforming Optimization: AI algorithms can intelligently adjust and optimize antenna beamforming, ensuring signals are precisely directed to users. This leads to improved signal strength, reduced interference, and enhanced coverage.

- Interference Management: By predicting and mitigating interference, AI helps maintain signal quality and network stability, especially in dense urban environments or areas with multiple overlapping cells.

- Handover Prediction: AI can proactively predict when a user device will need to transfer from one cell to another, enabling smoother and more seamless handovers, reducing dropped calls and service interruptions.

- Spectral Efficiency +25–30%: Through these optimizations, AI-RAN can achieve a substantial increase in spectral efficiency, meaning more data can be transmitted over the same amount of spectrum, leading to higher network capacity and faster speeds.
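To see what a 25–30% spectral efficiency gain means in capacity terms, recall that cell throughput is roughly bandwidth multiplied by spectral efficiency. The short calculation below uses a 100 MHz carrier and a baseline of 5 bit/s/Hz; both figures are illustrative assumptions, not values from the deployments discussed here.

```python
def cell_capacity_mbps(bandwidth_mhz: float, se_bps_per_hz: float) -> float:
    """Capacity = bandwidth x spectral efficiency (MHz x bit/s/Hz = Mbit/s)."""
    return bandwidth_mhz * se_bps_per_hz


BANDWIDTH_MHZ = 100.0   # assumed carrier bandwidth
BASELINE_SE = 5.0       # assumed baseline spectral efficiency, bit/s/Hz

baseline = cell_capacity_mbps(BANDWIDTH_MHZ, BASELINE_SE)
with_25 = cell_capacity_mbps(BANDWIDTH_MHZ, BASELINE_SE * 1.25)
with_30 = cell_capacity_mbps(BANDWIDTH_MHZ, BASELINE_SE * 1.30)

print(f"baseline: {baseline:.0f} Mbit/s")
print(f"+25%:     {with_25:.0f} Mbit/s")
print(f"+30%:     {with_30:.0f} Mbit/s")
```

Under these assumptions the same spectrum carries roughly 125 to 150 Mbit/s more per cell, without any new spectrum being acquired.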

RAN for AI: Enabling Edge Intelligence

Beyond optimizing the RAN, AI-RAN also acts as a powerful platform for delivering AI-driven applications directly at the network edge.

- Edge AI Inference Delivery: The integrated GPU capabilities at the base station allow for real-time AI model inference closer to the data source, reducing latency and bandwidth requirements for AI applications.

- Private AI On-Premise: Enterprises can deploy private AI solutions directly within their localized RAN infrastructure, ensuring data privacy and dedicated computational resources for their specific needs.

- Low-Latency AI Applications at the Tower: Critical applications requiring immediate responses, such as augmented reality, real-time control of robotics, or smart city applications, can leverage the ultra-low latency provided by AI running at the cell tower.

Energy Saving: A Greener Network

AI-RAN significantly contributes to environmental sustainability and operational cost reduction through intelligent energy management.

- AI-Driven Cell Sleep Mode: AI algorithms can analyze traffic patterns and dynamically put cells into sleep mode during periods of low activity, reducing energy consumption without impacting service quality.

- Dynamic Power Scaling: Power consumption of network components can be dynamically adjusted based on demand, ensuring that only the necessary power is used at any given time.

- 15–30% Energy Reduction: Together, these AI-driven optimizations reduce energy consumption by 15–30%, which translates directly into lower operational expenses (OPEX) and a smaller carbon footprint.
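The cell sleep idea can be sketched as a simple rule: if the forecast load for an hour is below a threshold, the cell sleeps and draws less power. In a real system the forecast would come from a trained model; here it is a hard-coded list. The power figures, threshold, and traffic profile are all hypothetical values chosen only to make the arithmetic easy to follow.

```python
def daily_energy_kwh(hourly_load, active_kw=1.0, sleep_kw=0.3, threshold=0.1):
    """Sum hourly energy, using sleep power whenever forecast load < threshold."""
    return sum(sleep_kw if load < threshold else active_kw
               for load in hourly_load)


# Assumed forecast: 6 quiet night hours (5% load), 18 busier hours (50% load).
forecast = [0.05] * 6 + [0.5] * 18

always_on = len(forecast) * 1.0              # kWh with no sleep mode
with_sleep = daily_energy_kwh(forecast)      # kWh with sleep mode
saving_pct = 100.0 * (always_on - with_sleep) / always_on

print(f"always on:  {always_on:.1f} kWh")
print(f"with sleep: {with_sleep:.1f} kWh")
print(f"saving:     {saving_pct:.1f}%")
```

With these made-up inputs the saving is 17.5%. The result depends entirely on how many quiet hours exist and how much power a sleeping cell still draws.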

AI Security: Fortifying the Network Edge

Integrating AI at the RAN level enhances security posture, enabling proactive threat detection and robust protection.

- Anomaly Detection at RAN Level: AI can continuously monitor network traffic and behavior at the RAN, identifying unusual patterns that may indicate a security threat or attack.

- Proactive Threat Identification: By leveraging machine learning, AI-RAN can identify emerging threats and vulnerabilities in real-time, allowing for immediate countermeasures.

- Zero-Trust RAN Architecture: AI principles can underpin a zero-trust security model within the RAN, verifying every user and device before granting access, regardless of their location.

- NetGuard/AVA Security: Solutions such as Nokia’s NetGuard security portfolio and its AVA platform incorporate AI for enhanced security capabilities.
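Anomaly detection at the RAN level can be as simple, in its most basic form, as comparing a live KPI against a learned baseline. The sketch below uses a plain z-score test as a stand-in for the ML models a production system would use. The KPI (attach requests per minute), the baseline values, and the threshold of three standard deviations are assumptions for illustration.

```python
from statistics import mean, stdev


def make_detector(baseline, threshold=3.0):
    """Build a detector that flags values far from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)

    def is_anomalous(value: float) -> bool:
        # Flag values more than `threshold` standard deviations from the mean.
        return sigma > 0 and abs(value - mu) > threshold * sigma

    return is_anomalous


# Hypothetical baseline: attach requests per minute on one cell.
baseline = [100, 102, 98, 101, 99, 103, 97, 100]
is_anomalous = make_detector(baseline)

print(is_anomalous(104))   # within normal variation
print(is_anomalous(250))   # possible signalling storm
```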

Private 5G + AI: Industrial Transformation

The fusion of Private 5G networks with AI is a game-changer for industrial applications, promising unprecedented levels of automation and intelligence.

- Industrial Automation AI: AI-RAN facilitates advanced automation in industrial settings, enabling smart factories and connected manufacturing processes.

- Factory Floor Intelligence: Real-time data from sensors and devices on the factory floor can be analyzed by AI at the edge to optimize operations, predict machinery failures, and enhance safety.

- URLLC + TSN + AI Fusion: Ultra-Reliable Low Latency Communication (URLLC), Time-Sensitive Networking (TSN), and AI work in concert to deliver sub-10ms guaranteed latency, crucial for mission-critical industrial applications like autonomous guided vehicles and precision robotics.

- Sub-10ms Guaranteed Latency: This level of predictable, low latency is paramount for applications where even a slight delay can have significant consequences.
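A sub-10 ms guarantee is easiest to reason about as a budget that every hop must fit into. The sketch below adds up a set of per-hop latencies and reports the remaining margin. The hop names and values are hypothetical, chosen only to contrast inference at the tower with a round trip to a distant cloud.

```python
BUDGET_MS = 10.0


def latency_margin_ms(components: dict, budget_ms: float = BUDGET_MS) -> float:
    """Return budget minus total latency; a negative result means a miss."""
    return budget_ms - sum(components.values())


# Assumed per-hop latencies in milliseconds (illustrative only).
edge_path = {
    "air_interface": 2.0,
    "fronthaul_and_du": 1.0,
    "edge_inference": 5.0,
    "actuation": 1.0,
}
cloud_path = {
    "air_interface": 2.0,
    "fronthaul_and_du": 1.0,
    "transport_to_cloud_round_trip": 30.0,
    "cloud_inference": 5.0,
    "actuation": 1.0,
}

print(f"edge margin:  {latency_margin_ms(edge_path):+.1f} ms")
print(f"cloud margin: {latency_margin_ms(cloud_path):+.1f} ms")
```

In this made-up example the edge path fits the budget with 1 ms to spare, while the cloud path misses it by a wide margin, which is the core argument for running inference at the cell site.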

Massive MIMO AI: Advanced Antenna Management

Massive Multiple-Input Multiple-Output (MIMO) technology, a cornerstone of 5G, is further enhanced by AI.

- 16-Layer Beam Management: AI enables more sophisticated management of up to 16 independent data streams (layers) through advanced beamforming, significantly boosting capacity and throughput.

- 3D Beamforming AI: AI algorithms can create highly precise 3D beams, allowing for more efficient spectrum utilization and better coverage in complex environments.

- 64T64R AI-Optimized Arrays: AI optimizes antenna arrays with 64 transmit and 64 receive elements, maximizing the benefits of Massive MIMO for improved performance and reliability.

- Multi-User MIMO Scheduling: AI can intelligently schedule data transmissions to multiple users simultaneously on the same frequency and time resources, further increasing network efficiency.
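Underneath any AI-driven beam management sits the basic mechanics of steering: applying a phase shift per antenna element so that signals add up in the desired direction. The sketch below shows this for a small uniform linear array with half-wavelength spacing. This is a textbook simplification, not the 64T64R planar arrays or the AI optimization mentioned above, and the function names are hypothetical.

```python
import cmath
import math


def steering_weights(n_elements: int, steer_deg: float, spacing_wl: float = 0.5):
    """Per-element phase weights that point a uniform linear array at steer_deg."""
    step = 2 * math.pi * spacing_wl * math.sin(math.radians(steer_deg))
    return [cmath.exp(-1j * step * n) for n in range(n_elements)]


def array_gain(weights, look_deg: float, spacing_wl: float = 0.5) -> float:
    """Magnitude of the array response in direction look_deg."""
    step = 2 * math.pi * spacing_wl * math.sin(math.radians(look_deg))
    response = sum(w * cmath.exp(1j * step * n) for n, w in enumerate(weights))
    return abs(response)


weights = steering_weights(n_elements=8, steer_deg=30.0)

on_target = array_gain(weights, look_deg=30.0)    # contributions add in phase
off_target = array_gain(weights, look_deg=-30.0)  # contributions cancel

print(f"gain toward  30 deg: {on_target:.3f}")
print(f"gain toward -30 deg: {off_target:.3f}")
```

An AI-based beam manager would in effect choose the steering directions and weights per user and per layer, rather than using a single fixed angle as in this sketch.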

Key Players & Platforms Building AI-RAN Right Now

The development and deployment of AI-RAN are being spearheaded by major telecommunications and technology companies, each contributing unique platforms and expertise.

NVIDIA

NVIDIA stands at the forefront of AI-RAN enablement, leveraging its GPU technology and software ecosystem.

- Platform: ARC-Pro, ARC-Compact – These platforms are designed for high-performance computing at the edge, capable of running both RAN and AI workloads.

- SDK: Aerial CUDA-Libraries – A comprehensive suite of CUDA-accelerated libraries, including cuBB (for baseband processing), cuPHY (for physical layer functions), and cuMAC (for MAC layer processing).

- Partners: Nokia, T-Mobile, SoftBank, Indosat – NVIDIA is collaborating with leading operators and equipment vendors to bring AI-RAN to commercial deployment. T-Mobile U.S., for instance, demonstrated concurrent AI and RAN processing on the NVIDIA AI-RAN platform with Nokia’s CUDA-accelerated RAN software in an over-the-air field environment. SoftBank achieved an industry-first 16-layer massive MIMO using a fully software-defined 5G on NVIDIA’s platform.

Nokia

Nokia is a strong proponent of AI-RAN, integrating AI into its AirScale Cloud RAN offering.

- Platform: AirScale Cloud RAN – Nokia’s cloud-native RAN solution designed for flexibility and scalability.

- Chipset: ReefShark SoC – Nokia’s custom System-on-Chip provides powerful processing for RAN functions.

- AI: Nokia AVA platform + cRIC – The AVA platform leverages AI for network automation and optimization, integrated with their Cloud-native RIC (cRIC).

- Operators: Deutsche Telekom, KT Corp, Verizon – Nokia is working with these operators on various AI-RAN initiatives, including pre-commercial validations and energy-saving deployments.

Ericsson

Ericsson is actively involved in AI-RAN, focusing on its Cloud RAN architecture and RIC platform.

- Platform: Cloud RAN – Ericsson’s virtualized RAN solution.

- AI: AIML-RAN framework – Ericsson’s framework for integrating AI and Machine Learning into the RAN.

- RIC: Ericsson RIC platform – Its intelligent controller supports AI applications.

- Role: AI-RAN Alliance founding member – Ericsson plays a key role in shaping the industry’s direction for AI-RAN.

Samsung

Samsung is advancing AI-RAN through its vRAN 3.0 platform and AI-native approach.

- Platform: vRAN 3.0 – Samsung’s virtualized RAN solution.

- AI: AI-native RAN – Emphasizing that AI is a core component, not an add-on.

- RIC: Samsung RIC platform – Providing intelligence and programmability.

- Operators: Verizon, DISH, AT&T – Samsung is collaborating with these major operators on their AI-RAN deployments.

AI-RAN Deployment Status 2026

AI-RAN is rapidly transitioning from theoretical concept to tangible reality, with significant progress across lab trials, over-the-air field trials, pre-commercial validation, and commercial deployment, setting the stage for 6G.

Lab Trials: Foundations Laid

- Complete:

- NVIDIA + Nokia + T-Mobile Seattle AI-RAN Innovation Center: This collaboration has successfully completed lab trials, showcasing the foundational integration of AI and RAN.

- SynaXG carrier-grade benchmarks confirmed: Benchmarking results from partners like SynaXG confirm that AI-RAN on NVIDIA platforms delivers high-speed, carrier-grade performance with extreme reliability across multiple 5G spectrum bands.

Over-the-Air Field Trials: Real-World Testing

- Complete:

- T-Mobile US: 3.7 GHz concurrent AI + RAN: T-Mobile U.S. demonstrated concurrent AI and RAN processing on the NVIDIA AI-RAN platform using Nokia’s CUDA-accelerated RAN software in their over-the-air field environment. This included supporting commercial devices running applications like video streaming and generative AI alongside 5G.

- SoftBank AITRAS: 16-layer Massive MIMO: SoftBank achieved an industry-first 16-layer massive MIMO using fully software-defined 5G running on NVIDIA’s AI-RAN platform in live field trials.

- Deutsche Telekom: 3.5 GHz adaptive beamforming: Deutsche Telekom has successfully implemented adaptive beamforming utilizing AI-RAN principles in field trials.

Pre-Commercial Validation: Near-Market Readiness

- Complete:

- Indosat (IOH): Southeast Asia first AI-powered 5G call: Indosat Ooredoo Hutchison (IOH) moved from proof-of-concept to pre-commercial field validation, implementing software-defined 5G with Nokia’s vRAN software on NVIDIA AI-RAN platforms. This milestone was showcased at MWC with Southeast Asia’s first AI-powered 5G call, demonstrating seamless AI and network intelligence for secure, real-time cross-border connectivity and remote control of a robotic dog.

- KT Corporation: O-RAN + AI energy saving validated: KT Corp has validated O-RAN and AI-driven energy-saving solutions, showcasing the practical benefits of AI-RAN.

Commercial Deployment: Actively Rolling Out

- In Progress 2026:

- Verizon: AI-RAN automation scaling: Verizon is actively working on scaling AI-RAN automation across its network.

- Vodafone: AI-RAN commercialization path: Vodafone is progressing with its commercialization plans for AI-RAN, indicating a clear path to market adoption. Emerging reports suggest Nokia and NVIDIA are targeting commercial AI-RAN by 2027.

6G AI-Native RAN: The Future Horizon

- Target 2030+:

- AI-RAN Alliance: open 6G research: The AI-RAN Alliance is actively conducting open research into the principles and architecture of 6G, with AI as a fundamental component.

- Nokia Bell Labs + NVIDIA: 6G AI architecture: Collaboration between Nokia Bell Labs and NVIDIA is focused on defining and developing the AI architecture for future 6G networks. The vision for 6G is inherently AI-native, meaning AI is not an add-on but a core building block of the RAN, operating in new frequency bands and acting as distributed computers running AI models at radio speed.

Key Interfaces & Protocols

The open and disaggregated nature of AI-RAN relies on a set of standardized interfaces and protocols, particularly those defined by O-RAN, to enable interoperability and intelligent control.

O-RAN Interfaces

These interfaces define the communication pathways between different functional components within the O-RAN architecture.

- O1: The O1 interface connects the Service Management and Orchestration (SMO) framework to the O-RAN managed elements (Near-RT RIC, O-CU, and O-DU) for configuration, performance management, fault management, and software management.

- O2: This interface connects the Orchestration/OSS (Operational Support System) to the O-Cloud, enabling infrastructure lifecycle management.

- A1: The A1 interface provides communication between the Non-RT RIC and the Near-RT RIC. It is used for policy management, enrichment information, and operational guidance from the Non-RT RIC to the Near-RT RIC.

- E2: The E2 interface is critical for control within the Near-RT RIC domain. It connects the Near-RT RIC to the E2 nodes (the O-CU and O-DU, i.e. the gNB functions), allowing xApps to collect near-real-time data and send control commands. Control loops over E2 fall in the near-real-time range, which O-RAN defines as roughly 10 ms to 1 s.

- F1-c (Control Plane): Used for control plane signaling between the O-CU and O-DU.

- F1-u (User Plane): Used for user plane data transfer between the O-CU and O-DU.

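- E1: The E1 interface is between the Central Unit-Control Plane (CU-CP) and the Central Unit-User Plane (CU-UP). (W1, which is sometimes listed in this position, is the 3GPP interface between the CU and DU of an ng-eNB.)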

- Open FH (Fronthaul): Refers to the open fronthaul interface, connecting the O-DU to the O-RU. This typically uses eCPRI for efficient data transfer.

RIC Protocols

Within the RIC architecture, specific protocols facilitate communication and application development.

- E2AP (E2 Application Protocol): The main protocol used over the E2 interface for communication between the Near-RT RIC and the E2 Nodes (O-CU/O-DU).

- E2SM-KPM (E2 Service Model – Key Performance Measurement): An E2 Service Model for reporting key performance indicators (KPIs) from the E2 Nodes to the Near-RT RIC.

- E2SM-RC (E2 Service Model – RAN Control): An E2 Service Model for enabling direct control and re-direction of RAN functions by the Near-RT RIC.

- E2SM-NI (E2 Service Model – Network Interface): An E2 Service Model related to network interface management.

- REST (Representational State Transfer): Commonly used for communication over the A1 interface, particularly for policy management and data exchange between the Non-RT RIC and other network elements.

- HTTP/2 (Hypertext Transfer Protocol Version 2): Often used as the underlying transport for REST APIs, providing efficient and multiplexed communication.
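The division of labor between a reporting service model (E2SM-KPM) and a control service model (E2SM-RC) can be illustrated with a toy xApp-style decision function: a report comes in, and a control action may go out. The data classes and field names below are hypothetical simplifications. They are not the actual E2SM message structures, which are defined in ASN.1, and the thresholds are arbitrary.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class KpmReport:
    """Hypothetical stand-in for a KPM-style measurement report."""
    cell_id: str
    prb_utilization: float   # fraction of physical resource blocks in use, 0..1
    active_ues: int


@dataclass
class ControlAction:
    """Hypothetical stand-in for an RC-style control request."""
    cell_id: str
    action: str


def decide(report: KpmReport, high: float = 0.85, low: float = 0.15) -> Optional[ControlAction]:
    """Return a control action for congested or empty cells, else None."""
    if report.prb_utilization > high:
        return ControlAction(report.cell_id, "offload_traffic")
    if report.prb_utilization < low and report.active_ues == 0:
        return ControlAction(report.cell_id, "enter_sleep")
    return None


print(decide(KpmReport("cell-1", 0.92, 140)))
print(decide(KpmReport("cell-2", 0.05, 0)))
print(decide(KpmReport("cell-3", 0.40, 35)))
```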

AI Frameworks

AI-RAN leverages a variety of established AI frameworks and libraries for model development, training, and inference.

- TensorFlow: An open-source machine learning framework developed by Google, widely used for deep learning.

- PyTorch: An open-source machine learning library originally developed by Meta AI (formerly Facebook’s AI Research lab, FAIR) and now governed by the PyTorch Foundation, known for its flexibility.

- ONNX (Open Neural Network Exchange): An open standard for representing machine learning models, allowing models to be transferred between different frameworks.

- TensorRT: An NVIDIA library for high-performance inference on NVIDIA GPUs, optimizing models for deployment.

- cuDNN (CUDA Deep Neural Network library): An NVIDIA GPU-accelerated library for deep neural networks, providing highly optimized primitives for deep learning computations.

- Triton: NVIDIA’s open-source inference serving software (Triton Inference Server), which simplifies the deployment of AI models at scale.

AI-RAN KPIs & Benchmarks

The success of AI-RAN is measured by tangible improvements in key performance indicators (KPIs) and operational benchmarks. These metrics demonstrate the benefits of integrating AI into the RAN.

| KPI | Target | Benefit |
|---|---|---|
| Spectral Efficiency | +25–30% | More capacity via the same spectrum. Driven by AI for spectral efficiency. |
| Energy Saving | 15–30% | Lower OPEX & carbon footprint reduction. Achieved by Energy AI, leading to 30% power cut. |
| Massive MIMO Layers | Up to 16 | Better multi-user MIMO. Enabled by Beam AI for 16-layer Real-Time Network Optimization (RTNO). |
| xApp Control Loop | <10ms | Real-time RAN optimization. |
| Fronthaul Latency | <100µs Cat-A | O-RU sync requirement. |
| Inference Latency | <5ms edge | Real-time AI response. Driven by RAN for AI edge inference. |
| Deployment Time | Days not months | ZTP (Zero-Touch Provisioning) automation. Slice AI enables an automated lifecycle. Security AI for anomaly detection. |

These benchmarks highlight how AI-RAN leads to:

- Significantly increased network capacity and efficiency through optimized spectrum utilization.

- Substantial reductions in operational costs and environmental impact via intelligent energy management.

- Enhanced user experience and network reliability through superior Massive MIMO capabilities.

- Real-time and ultra-low latency responses, crucial for demanding AI applications and network control.

- Rapid and automated network deployment and management, ensuring agility and scalability.

- Improved security posture with proactive anomaly detection.
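Targets like these are most useful when measured values are checked against them automatically. A minimal sketch of such a check follows, using four of the numeric targets from the table above. The dictionary keys and the measured values are hypothetical.

```python
# (kind, limit): "min" means the value must reach the limit,
# "max" means the value must stay strictly below it.
TARGETS = {
    "spectral_efficiency_gain_pct": ("min", 25.0),
    "energy_saving_pct": ("min", 15.0),
    "inference_latency_ms": ("max", 5.0),
    "fronthaul_latency_us": ("max", 100.0),
}


def check_kpis(measured: dict) -> dict:
    """Return {kpi_name: True/False} for each target in TARGETS."""
    results = {}
    for name, (kind, limit) in TARGETS.items():
        value = measured[name]
        results[name] = value >= limit if kind == "min" else value < limit
    return results


# Hypothetical trial results.
trial = {
    "spectral_efficiency_gain_pct": 27.0,
    "energy_saving_pct": 12.0,
    "inference_latency_ms": 3.2,
    "fronthaul_latency_us": 80.0,
}

for kpi, ok in check_kpis(trial).items():
    print(f"{kpi}: {'PASS' if ok else 'FAIL'}")
```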

The AI-RAN Learning Path

For engineers and professionals looking to deepen their understanding and expertise in AI-RAN, a structured learning path is essential. This sequence provides a comprehensive roadmap, starting from fundamental concepts and progressing to advanced, hands-on applications.

Step 1: 5G NR Basics

A solid understanding of 5G New Radio (NR) is the foundation.

- What to Learn: gNB architecture, NR air interface, TS 38.300.

- Familiarize yourself with the overall architecture of the gNB (next-generation NodeB), which is the base station in a 5G network.

- Understand the nuances of the NR air interface, including waveform, numerology, and channel structures.

- Dive into 3GPP Technical Specification (TS) 38.300, the Stage 2 overall description of NR and NG-RAN, which covers the radio protocol architecture, the functional split between the NG-RAN and the 5G core, and the main procedures.

Step 2: O-RAN Architecture

As AI-RAN heavily leverages O-RAN principles, understanding its architecture is crucial.

- What to Learn: O-RU/O-DU/O-CU split, WG1/WG3 specs.

- Study the functional split of the O-RAN architecture: the Open Radio Unit (O-RU), Open Distributed Unit (O-DU), and Open Central Unit (O-CU), and their respective roles.

- Explore the specifications from O-RAN Alliance Work Group 1 (WG1) covering overall architecture and Work Group 3 (WG3) focusing on the Near-RT RIC.

Step 3: RIC Platform

The RAN Intelligent Controller (RIC) is the brain of an intelligent network, facilitating AI integration.

- What to Learn: Near-RT RIC, xApp/rApp, E2AP, E2SM.

- Understand the architecture and functions of both the Near-Real-Time RIC and Non-Real-Time RIC.

- Learn about xApps (for Near-RT RIC) and rApps (for Non-RT RIC), which are the applications that run on the RIC to provide intelligence and control.

- Study the E2 Application Protocol (E2AP) and E2 Service Models (E2SMs) which define how the RIC communicates with and controls the underlying RAN functions.

Step 4: AI/ML Fundamentals

A foundational knowledge of AI and Machine Learning is indispensable for working with AI-RAN.

- What to Learn: Supervised/RL models, inference, TensorRT.

- Gain an understanding of supervised learning and reinforcement learning (RL) models, which are commonly used in RAN optimization.

- Learn about AI inference – the process of using a trained AI model to make predictions or decisions.

- Familiarize yourself with NVIDIA TensorRT, a software development kit for high-performance deep learning inference.

Step 5: GPU-Accelerated RAN

This step focuses on how GPUs are utilized to enhance RAN processing and enable AI workloads.

- What to Learn: NVIDIA Aerial SDK, cuBB, cuPHY, cuMAC.

- Delve into the NVIDIA Aerial SDK, a comprehensive platform that combines software, hardware, and tools for building high-performance, software-defined 5G and 6G RANs.

- Understand the specific CUDA-accelerated libraries: cuBB for baseband processing, cuPHY for physical layer functions, and cuMAC for MAC layer processing, showcasing how GPUs accelerate these critical RAN tasks.

Step 6: AI Use Cases

Moving from theory to practical application, explore the diverse ways AI enhances RAN.

- What to Learn: Beamforming AI, energy saving, handover ML.

- Study how AI algorithms are applied to optimize beamforming for improved signal quality and capacity.

- Investigate AI-driven techniques for energy saving within the RAN, such as dynamic power scaling and cell sleep modes.

- Learn about machine learning models used for predictive handover, ensuring seamless user experience.
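As a warm-up for the handover topic, it helps to see the simplest possible predictor before reaching for ML: extrapolate the signal trend and estimate when the neighbor cell will beat the serving cell by a margin. This is similar in spirit to the 3GPP A3 measurement event (neighbor becomes offset better than serving). The sketch below uses plain linear extrapolation, not a trained model, and the RSRP samples and 3 dB margin are made-up values.

```python
import math
from typing import Optional


def steps_until_handover(serving_rsrp, neighbor_rsrp,
                         margin_db: float = 3.0,
                         max_steps: int = 20) -> Optional[int]:
    """Estimate how many future samples until neighbor exceeds serving by margin_db.

    Uses the average slope of (neighbor - serving) over the window.
    Returns 0 if the condition already holds, None if no crossing is expected.
    """
    diff = [n - s for s, n in zip(serving_rsrp, neighbor_rsrp)]
    if diff[-1] >= margin_db:
        return 0
    slope = (diff[-1] - diff[0]) / (len(diff) - 1)
    if slope <= 0:
        return None
    steps = math.ceil((margin_db - diff[-1]) / slope)
    return steps if steps <= max_steps else None


# UE moving away from the serving cell toward the neighbor (RSRP in dBm).
moving = steps_until_handover([-80, -82, -84, -86], [-95, -93, -91, -89])

# Stationary UE: no trend, so no handover is predicted.
stationary = steps_until_handover([-80, -80, -80, -80], [-95, -95, -95, -95])

print(moving, stationary)
```

An ML-based predictor replaces the straight-line extrapolation with a model that has learned mobility patterns, but the input (recent measurements) and output (time to handover) have the same shape.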

Step 7: Hands-on Lab

Practical experience is invaluable for cementing theoretical knowledge.

- What to Learn: OpenAirInterface + near-RT RIC + FlexRIC.

- Engage with OpenAirInterface (OAI), an open-source software implementation of 5G and LTE networks, to build and experiment with a software-defined RAN.

- Integrate and work with a Near-RT RIC implementation, such as FlexRIC or other open-source alternatives, to deploy and test xApps for RAN control and optimization.

Step 8: Certifications

Formal certifications can validate your expertise and provide industry recognition.

- What to Learn: O-RAN Alliance TIFG, AI-RAN Alliance training.

- Follow the work of the O-RAN Alliance Test & Integration Focus Group (TIFG), which defines the testing, certification, and badging processes used to validate the interoperability and performance of O-RAN components.

- Look for training and certification programs offered by the AI-RAN Alliance or relevant industry bodies as they become available.

This structured learning path will equip you with the knowledge and practical skills required to navigate and innovate within the rapidly evolving world of AI-RAN.

Conclusion: The Programmable Stack and the Future of Wireless

AI-RAN is not merely an evolution; it’s a revolution that transforms the very foundation of wireless communication. By seamlessly integrating AI and RAN workloads on a single, GPU-accelerated platform at the edge, it creates a “programmable stack” that converges industrial IoT, telecommunications, and autonomy. This shift enables unprecedented capabilities, from optimizing spectral efficiency and reducing energy consumption to securing networks with AI-driven anomaly detection and powering a new generation of low-latency edge AI applications for industries and smart cities.

The move from hardware-defined, proprietary systems to software-defined, open, and AI-native architectures is irreversible. As we look towards 6G, this AI-RAN foundation will be critical for supporting billions of connected devices, extending intelligently into new frequency bands, and enabling sensing and communication in the same waveform. The base station is no longer just for connectivity; it is becoming an AI infrastructure platform, a distributed computer running AI models at radio speed. This is not a future concept; it is happening now, as evidenced by the rapid progression from lab trials to commercial deployments by leading industry players.

Understanding AI-RAN is paramount for anyone involved in the telecommunications and IoT sectors. It represents the blueprint for future intelligent mobile networks and the myriad innovative services they will enable.
