The semiconductor industry stands at a pivotal juncture. As the foundational technology for virtually all modern electronics, silicon chips have consistently pushed the boundaries of innovation, enabling ever more powerful and compact devices. However, this relentless pursuit of advancement has led to an unprecedented surge in design complexity. Today’s leading-edge chips, the silent workhorses behind everything from smartphones to supercomputers, integrate tens of billions of transistors, heterogeneous compute blocks, and sophisticated packaging technologies. Ironically, orchestrating this intricate dance of microscopic components has become the primary bottleneck for the very industry the chips propel forward.

The challenge is multi-faceted. Manufacturing nodes continue to shrink, pushing the limits of physics, yet the design process itself struggles to keep pace. The “first-silicon success rate”—the percentage of chips that work correctly on their initial fabrication—has plummeted to a dismal 14% in 2023-2024, a two-decade low. This indicates a profound systemic issue: traditional design methodologies are no longer sufficient to navigate the labyrinthine complexities of modern chip architectures.

Enter Artificial Intelligence (AI). A new wave of innovation is sweeping through the chip design landscape, with startups and established EDA giants alike leveraging machine learning, generative AI, and autonomous agents to tackle these formidable challenges. This article delves into the escalating complexity of chip design across its various stages and explores how AI-driven automation is poised to revolutionize this critical industry, ushering in an era of enhanced efficiency, accelerated development, and unprecedented innovation.

The Escalating Complexity of Chip Design

The journey from a conceptual idea to a physical silicon chip is a long and arduous one, traditionally broken down into several distinct stages. Each stage, from high-level architecture to final physical implementation, has seen its complexity explode, creating bottlenecks that hinder progress and escalate costs.

The Architecture Stage: Navigating an Infinite Design Space

At the very beginning of the chip design process, the architecture stage sets the blueprint. Here, engineers define the chip’s high-level structure, including its compute units, memory hierarchy, and interconnects. This involves making critical decisions that dictate the chip’s Power, Performance, and Area (PPA) characteristics.

In the past, human ingenuity and experience guided this exploration. However, contemporary chips, especially those designed for AI workloads and edge computing, offer a virtually infinite number of microarchitectural combinations. Factors such as the number of processing cores, cache sizes, pipeline depths, and interconnect topologies all interact in complex ways, making manual exploration slow, expensive, and increasingly inefficient. Decades ago, a senior architect could intuit optimal configurations; today, with billions of states to consider, the search space is simply too vast for human intuition alone. This “design space exploration” has become a monumental task, with the slightest alteration triggering a cascade of downstream effects on PPA.

Design Verification: The Unseen Battleground

Once an architectural blueprint is established, the design must be rigorously verified to ensure its correctness and functionality. This stage, known as design verification, has historically been the most time-consuming and resource-intensive part of the entire chip development cycle.

Verification often consumes a staggering 60-70% of the total development timeline. This involves creating elaborate testbenches, writing assertions to define expected behaviors, and developing coverage models to ensure that every nook and cranny of the design has been thoroughly checked. The goal is to catch bugs and inconsistencies before the chip is sent for manufacturing, as errors discovered post-fabrication can lead to costly re-spins and significant delays.
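Real verification environments are written in SystemVerilog with UVM. The toy Python sketch below only illustrates how testbenches, assertions, and coverage models fit together. A hypothetical 8-bit saturating adder (`dut_sat_add`) stands in for the design under test, and a separate reference model (`ref_sat_add`) supplies the expected behavior. An assertion compares the two on every input, and named coverage bins record which input regions the stimulus has exercised. All names and bins are invented for this example.

```python
import random

def dut_sat_add(a: int, b: int) -> int:
    """Hypothetical design under test: an 8-bit saturating adder."""
    total = a + b
    return 255 if total > 255 else total

def ref_sat_add(a: int, b: int) -> int:
    """Independent reference model of the intended behavior."""
    return min(a + b, 255)

# Coverage model: named bins describing input regions worth exercising.
COVER_BINS = {
    "both_zero": lambda a, b: a == 0 and b == 0,
    "no_overflow": lambda a, b: a + b <= 255,
    "exact_max": lambda a, b: a + b == 255,
    "overflow": lambda a, b: a + b > 255,
}

def run_testbench(num_random: int, seed: int = 0) -> dict:
    """Drive stimulus, check every result, and count coverage-bin hits."""
    rng = random.Random(seed)
    hits = {name: 0 for name in COVER_BINS}
    # Directed corner cases first, then random stimulus.
    stimuli = [(0, 0), (255, 0), (128, 128)]
    stimuli += [(rng.randint(0, 255), rng.randint(0, 255)) for _ in range(num_random)]
    for a, b in stimuli:
        got, want = dut_sat_add(a, b), ref_sat_add(a, b)
        # Assertion: the DUT must agree with the reference model.
        assert got == want, f"mismatch: a={a} b={b} got={got} want={want}"
        for name, predicate in COVER_BINS.items():
            if predicate(a, b):
                hits[name] += 1
    return hits

if __name__ == "__main__":
    hits = run_testbench(1000)
    covered = sum(1 for count in hits.values() if count > 0)
    print(f"functional coverage: {covered}/{len(hits)} bins hit {hits}")
```

The directed corner cases guarantee that every bin is hit at least once. In practice, it is the bins that remain empty (coverage holes) that tell engineers, or AI tools, where new tests are needed.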

The complexity here grows even faster than the design itself: in the worst case, every additional bit of state doubles the number of states that may need to be checked. Ensuring correctness across billions of possible states in a modern system-on-chip (SoC) is akin to finding a needle in a colossal haystack, with the added challenge that the haystack itself is constantly reconfiguring. Traditional rule-based verification methods struggle to keep up with the exponential increase in design permutations and interactions, making verification one of the hardest problems in contemporary chip development.

Emulation and Prototyping: Bridging Hardware and Software

Modern chips are not standalone entities; they are designed to run complex software stacks. Before the physical silicon even exists, developers need to validate the interaction between hardware and software, a process critical for enabling early software development and debugging. This is where emulation and prototyping come into play.

Emulation systems mimic the behavior of the future chip in dedicated hardware, allowing software to run on a hardware model while engineers retain deep visibility for debugging. Prototyping, usually on FPGAs (Field-Programmable Gate Arrays), typically runs faster than emulation, which makes it well suited to software development, at the cost of less visibility into internal signals. With the increasing size and complexity of software, coupled with the need for multi-chiplet and heterogeneous designs, emulation and prototyping environments are under immense pressure. They must handle vast amounts of data, simulate complex interactions, and provide robust debugging capabilities, all at a speed that allows for meaningful hardware/software co-verification at scale. The demand for these systems to be available earlier in the design cycle, and to support more intricate scenarios, continues to grow.

Physical Design: The Nitty-Gritty of Silicon

Once the architectural and functional aspects have been verified, the design moves to the physical design stage. This is where the abstract logic is translated into a physical layout on silicon. This involves a series of intricate steps, including floorplanning, placement, routing, and timing closure.

At advanced process nodes (e.g., below 5nm), physical design introduces a host of excruciating challenges:

- Timing Closure: Ensuring that all signals arrive at their destination within specified time limits, critical for the chip’s operational speed.

- Routing Congestion: Finding pathways for billions of interconnections without overlapping or creating bottlenecks.

- Power Delivery: Distributing power efficiently across the chip while minimizing voltage drops and ensuring thermal stability.

- Signal Integrity: Preventing corruption or degradation of signals due to electromagnetic interference or crosstalk.

- Manufacturability Optimization: Designing the chip in a way that maximizes manufacturing yield and minimizes defects.
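Timing closure ultimately rests on arithmetic over a timing graph. The arrival time at each node is the latest arrival among its inputs plus the node's own delay. Slack is the required time (here simply the clock period) minus the arrival time, and negative slack means a path is too slow.

The minimal sketch below computes both on a small, made-up netlist. The node names, delays, and clock periods are hypothetical. Real static timing analysis also models setup and hold times, clock skew, and slew-dependent delays.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical timing graph: node -> (delay in ns, list of fan-in nodes).
GRAPH = {
    "in_a": (0.0, []),
    "in_b": (0.0, []),
    "and1": (0.3, ["in_a", "in_b"]),
    "xor1": (0.5, ["in_a", "and1"]),
    "mux1": (0.4, ["and1", "xor1"]),
    "ff_d": (0.1, ["mux1"]),  # data pin of the capturing flip-flop
}

def arrival_times(graph):
    """Latest arrival at each node = max(fan-in arrivals) + own delay."""
    deps = {node: fanin for node, (_, fanin) in graph.items()}
    arrival = {}
    for node in TopologicalSorter(deps).static_order():  # fan-in first
        delay, fanin = graph[node]
        arrival[node] = max((arrival[f] for f in fanin), default=0.0) + delay
    return arrival

def slack(graph, endpoint, clock_period_ns):
    """Setup slack at an endpoint: required time minus actual arrival."""
    return clock_period_ns - arrival_times(graph)[endpoint]

if __name__ == "__main__":
    print({n: round(t, 2) for n, t in arrival_times(GRAPH).items()})
    for period in (1.2, 1.5):
        print(f"slack at {period} ns clock: {slack(GRAPH, 'ff_d', period):+.2f} ns")
```

The critical path here runs through `xor1` and `mux1`. A timing-closure loop, manual or AI-driven, would try to shorten that path until slack is non-negative at the target clock period.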

These challenges are compounded by the advent of new technologies like heterogeneous integration, chiplets, and 3D stacking, which introduce new layers of complexity in managing inter-component communication, power distribution, and thermal management. The traditional manual and iterative “tweaking” by teams of engineers is no longer sustainable, leading to prolonged design cycles and increased risk of errors.

The AI Revolution in Chip Design Automation

The growing complexity across these stages has created fertile ground for disruption, and Artificial Intelligence is emerging as the transformative force. A new generation of AI-driven design automation tools is not just assisting engineers; it is fundamentally reshaping how chips are conceived, developed, and optimized. This “AI-for-AI” loop, where AI designs the hardware that powers more advanced AI, promises a self-reinforcing cycle of rapid hardware advancement.

According to industry analysts, the compound semiconductor market, one component of this technological evolution, is projected to grow from 66.10 billion in 2026 to almost 169.06 billion by 2035, a CAGR of 11.02%. Growth on this scale adds pressure on design capacity, which is one reason AI-driven design is increasingly seen as essential, rather than optional, for long-term competitiveness.
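As a quick consistency check on the quoted figures, the compound annual growth rate implied by the two endpoints can be computed directly. The period is the nine years from 2026 to 2035, and the standard formula is CAGR = (end / start)^(1 / years) - 1.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1

rate = cagr(66.10, 169.06, 2035 - 2026)  # nine compounding periods
print(f"implied CAGR: {rate:.2%}")
```

The result is about 11.0%, consistent with the quoted 11.02% once rounding of the endpoints is taken into account.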

AI in the Architecture Stage: Intelligent Design Space Exploration

AI is making a decisive impact at the architecture stage by enabling intelligent design space exploration. Instead of relying solely on deterministic rule-based systems, design environments now incorporate neural networks that learn from historical silicon data.

- Machine Learning (ML) Models as Surrogates: AI models can act as fast, accurate surrogates for time-consuming simulations. By learning the relationships between architectural parameters and PPA metrics, ML models can quickly predict the outcome of different design choices, drastically speeding up the evaluation process.

- Reinforcement Learning (RL) for Optimization: RL agents, built on the same technique behind systems that master games like Go and chess, are being trained to explore the vast architectural design space. By framing architectural design as a sequential decision-making game, RL agents can discover configurations that human engineers might overlook. Related learning-based optimizers are also in use: Google’s “Apollo” framework, for instance, applies transferable, sample-efficient black-box optimization to custom accelerator design, guiding the early architecture definition stage. These algorithms can adapt to user constraints (e.g., area or power limits) and quickly eliminate infeasible design points, focusing on promising regions of the architecture space.

- Foundation Models for Chip Design: Startups like Cognichip are developing “Foundation models for chip design.” These AI models are specialized for architecture exploration and design optimization, leveraging vast datasets of past designs and performance metrics to intelligently propose and refine architectural choices. This approach allows for the rapid identification of high-performing architectures for various AI workloads by learning which parameter combinations yield the best trade-offs in performance and energy.
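The surrogate idea can be illustrated with a minimal sketch built on several stated assumptions:

- `simulate_latency` is a hypothetical stand-in for a slow cycle-accurate simulator.
- `estimate_area` is a cheap analytic area model.
- A plain least-squares linear model stands in for the ML surrogate. Production tools use far richer models.

The surrogate is trained on a small sample of simulated points and then scores every configuration that fits an area budget. Only the few most promising candidates are confirmed with the "real" simulator. The design knobs and formulas are invented for illustration.

```python
import itertools

# Hypothetical design knobs: (core count, L2 cache in MB, pipeline depth).
SPACE = list(itertools.product([2, 4, 8, 16], [1, 2, 4, 8], [5, 8, 12]))

def simulate_latency(cores, cache_mb, depth):
    """Stand-in for a slow cycle-accurate simulation (made-up formula)."""
    return 100.0 / cores + 20.0 / cache_mb + 0.5 * depth

def estimate_area(cores, cache_mb, depth):
    """Cheap analytic area estimate (made-up coefficients)."""
    return 3.0 * cores + 1.5 * cache_mb + 0.2 * depth

def features(cfg):
    cores, cache_mb, depth = cfg
    # Inverse terms let a linear model express diminishing returns.
    return [1.0, 1.0 / cores, 1.0 / cache_mb, float(depth)]

def fit_linear(rows, targets):
    """Least squares via the normal equations, solved by Gauss-Jordan elimination."""
    n = len(rows[0])
    aug = [[sum(r[i] * r[j] for r in rows) for j in range(n)]
           + [sum(r[i] * t for r, t in zip(rows, targets))] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda row: abs(aug[row][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]

def predict(weights, cfg):
    return sum(w * f for w, f in zip(weights, features(cfg)))

def surrogate_search(area_budget, top_k=3):
    """Return (best config, number of expensive simulations used)."""
    train = SPACE[::4]  # small, deterministic training sample (12 points)
    weights = fit_linear([features(c) for c in train],
                         [simulate_latency(*c) for c in train])
    # Cheap screening: drop infeasible points, rank the rest by prediction.
    feasible = [c for c in SPACE if estimate_area(*c) <= area_budget]
    shortlist = sorted(feasible, key=lambda c: predict(weights, c))[:top_k]
    # Only the shortlist is confirmed with the expensive simulator.
    best = min(shortlist, key=lambda c: simulate_latency(*c))
    return best, len(train) + len(shortlist)

if __name__ == "__main__":
    best, n_sims = surrogate_search(area_budget=40.0)
    print(f"best config {best} after {n_sims} of {len(SPACE)} simulations")
```

The payoff is in the simulation count: 15 expensive runs instead of 48 in this toy space. In a realistic design space with millions of points, that ratio is what makes exploration tractable.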

These AI-driven approaches are reducing the time and effort required to converge on optimal architectural decisions, ensuring that the foundational elements of the chip are robust and efficient from the outset.

AI in Design Verification: Automating the Unautomatable

The verification bottleneck is perhaps where AI is offering the most profound immediate impact. AI is transforming verification from a manual, error-prone process into an automated, intelligent workflow.

- Generative AI for Testbench Creation: One of the most laborious tasks in verification is the creation of testbenches and assertions. AI tools are now capable of automatically generating verification environments from high-level specifications. Normal Computing, for instance, develops “AI tools for generating verification and design artifacts from specifications.” By understanding the intended behavior, these AI systems can create comprehensive test suites that cover a far wider range of scenarios than human-written tests.

- AI Agents for Verification: Several startups are focusing on AI agents specifically for verification workflows. ChipStack and MooresLabAI are developing AI agents that generate Universal Verification Methodology (UVM) testbenches and assertions, which are critical for thorough functional verification. These agents can learn from existing verification IP and design patterns to efficiently create accurate and effective test environments.

- Regression Failure Analysis and Debugging: Debugging is notorious for being time-consuming. Tools from Bronco AI leverage AI for “Regression failure analysis and DV (Design Verification) debugging.” By analyzing vast amounts of debug data, AI can quickly pinpoint the root cause of failures, significantly accelerating the debugging process.

- AI-driven Test Generation and Bug Discovery: VerifAI is developing “AI-driven test generation, stimuli creation, and bug discovery.” This involves using AI to create novel test cases that can expose hidden bugs and corner-case scenarios that might escape traditional verification methods. By exploring the state space intelligently, AI can discover vulnerabilities that human engineers might not anticipate.

- Copilots for Design Closure: Silimate offers “Copilot for bug detection and design closure acceleration,” providing AI assistance in identifying and resolving issues that hinder the finalization of a design. These AI copilots act as intelligent assistants, sifting through complex data to highlight potential problems and suggest solutions.
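The tools named above are proprietary, so the following is only a minimal sketch of one common first step in regression triage. Failure messages are normalized by masking hex values, simulation timestamps, and other numbers. Thousands of failing runs then collapse into a handful of signature buckets, each likely to share a root cause.

The log format and test names are invented for this example. An AI-based tool would go further, for example by correlating buckets with recent design changes or waveform data.

```python
import re
from collections import defaultdict

# Hypothetical regression results: (test name, first error line).
FAILURES = [
    ("tb_axi_burst_17", "UVM_ERROR @ 1520ns: scoreboard mismatch addr=0x1F00 exp=0x12 got=0x13"),
    ("tb_axi_burst_42", "UVM_ERROR @ 88010ns: scoreboard mismatch addr=0x2A40 exp=0xFF got=0x00"),
    ("tb_cache_evict_3", "UVM_FATAL @ 300ns: timeout waiting for ack on port 2"),
    ("tb_cache_evict_9", "UVM_FATAL @ 912ns: timeout waiting for ack on port 7"),
    ("tb_axi_narrow_5", "UVM_ERROR @ 77ns: scoreboard mismatch addr=0x0004 exp=0x01 got=0x03"),
]

def signature(message: str) -> str:
    """Mask run-specific values so that similar failures compare equal."""
    msg = re.sub(r"0x[0-9A-Fa-f]+", "<HEX>", message)
    msg = re.sub(r"\d+ns", "<TIME>", msg)
    msg = re.sub(r"\b\d+\b", "<N>", msg)
    return msg

def bucket_failures(failures):
    """Group failing tests by normalized signature, largest bucket first."""
    buckets = defaultdict(list)
    for test, message in failures:
        buckets[signature(message)].append(test)
    return sorted(buckets.items(), key=lambda kv: len(kv[1]), reverse=True)

if __name__ == "__main__":
    for sig, tests in bucket_failures(FAILURES):
        print(f"{len(tests):3d}  {sig}\n     e.g. {tests[0]}")
```

Five failures reduce to two buckets: a scoreboard mismatch and an acknowledgment timeout. An engineer can then debug one representative test per bucket instead of every failing run.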

By automating these crucial verification tasks, AI can significantly reduce verification cycle time, improve the quality of verification, and ultimately raise first-pass success rates. Industry projections suggest that AI-driven verification could reduce overall chip design time by 30-50%.

AI for Emulation and Prototyping: Accelerating Hardware/Software Co-design

While AI adoption so far has concentrated on design and verification, its implications for emulation and prototyping are clear. By accelerating the upstream design and verification processes, AI indirectly enables earlier and more robust emulation and prototyping.

- Faster and More Accurate Models: As AI improves the efficiency of architectural exploration and design synthesis, the underlying models for emulation and prototyping can be generated more quickly and with higher fidelity. This means software developers can get their hands on functional models earlier, compressing the overall development timeline.

- AI-driven Scenario Generation: AI could potentially generate more diverse and representative software workloads for emulation and prototyping, ensuring that the hardware-software interaction is thoroughly tested under a wide array of real-world conditions.

AI in Physical Design: Optimizing the Silicon Blueprint

The physical placement and routing of billions of transistors was once a task that consumed months of painstaking human effort. With the advent of AI, this process is being transformed into a highly optimized, automated workflow.

- Floorplanning as a Strategic Game: Google DeepMind’s AlphaChip framed floorplanning (the arrangement of components on a silicon die) as “a sequential decision-making game, similar to Go or Chess.” Using advanced Graph Neural Networks, AlphaChip can generate a tapeout-ready floorplan in under six hours, a task that previously took weeks for senior engineers. This technology was instrumental in the rapid deployment of Google’s TPU v5 and TPU v6 (Trillium), contributing to significant PPA gains.

- Reinforcement Learning for Placement and Routing: EDA leaders Synopsys and Cadence are deploying reinforcement learning models to automate the placement and routing of designs containing billions of transistors. Their tools, such as Synopsys DSO.ai (DSO stands for Design Space Optimization) and Cadence Cerebrus, treat chip implementation as a strategic game, exploring vast design spaces to optimize Power, Performance, and Area (PPA) simultaneously. These AI agents are finding unconventional layouts and efficiencies that human teams might not have considered, helping to extend the gains traditionally associated with Moore’s Law.

- GPU-accelerated Physical Design: Startups like Partcl are harnessing the power of GPUs for “GPU-accelerated placement, timing, and PD optimization.” GPUs, originally designed for graphics rendering, are highly efficient at parallel processing, making them ideal for the computationally intensive tasks involved in physical design, especially when paired with AI algorithms.

- AI-driven Layout Optimization: AI-driven layout optimization has been reported to reduce chip area by up to 20% without sacrificing performance. By analyzing thousands of design variations, machine learning models can identify the most efficient ways to arrange components, which is critical for mobile devices and other space-constrained applications.

- Power Efficiency Optimization: Energy efficiency is paramount in modern chip design. AI tools analyze power consumption patterns across design iterations and adjust the implementation to improve efficiency. Machine learning models can study thousands of design variations and suggest ways to lower power usage without sacrificing performance, with reported power-efficiency improvements of up to 40%.
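Placement quality is commonly scored with half-perimeter wirelength (HPWL): for each net, the width plus height of the bounding box around its pins. The toy sketch below places a few hypothetical cells on a small grid and improves HPWL with simulated annealing. Simulated annealing is a classical optimizer that predates the RL approaches described above; the sketch is not how DSO.ai, Cerebrus, or AlphaChip work internally. It only shows what "optimizing a layout" means as a search problem. The netlist, grid size, and cooling schedule are all invented.

```python
import math
import random

# Hypothetical netlist: each net lists the cells it connects.
NETS = [("a", "b"), ("b", "c", "d"), ("a", "d"), ("c", "e"), ("e", "f"), ("a", "f")]
CELLS = sorted({cell for net in NETS for cell in net})
GRID = 4  # 4x4 grid of legal slots, at most one cell per slot

def hpwl(placement):
    """Sum over nets of bounding-box half-perimeter (width + height)."""
    total = 0
    for net in NETS:
        xs = [placement[c][0] for c in net]
        ys = [placement[c][1] for c in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total

def anneal(seed=0, steps=5000, t_start=3.0, t_end=0.05):
    """Return (initial HPWL, best HPWL, best placement)."""
    rng = random.Random(seed)
    slots = [(x, y) for x in range(GRID) for y in range(GRID)]
    rng.shuffle(slots)
    placement = dict(zip(CELLS, slots))  # random legal starting placement
    cost = initial = hpwl(placement)
    best, best_cost = dict(placement), cost
    for step in range(steps):
        t = t_start * (t_end / t_start) ** (step / steps)  # geometric cooling
        cell, target = rng.choice(CELLS), rng.choice(slots)
        # Move the cell to the target slot, swapping with any occupant.
        occupant = next((c for c, p in placement.items() if p == target), None)
        old = placement[cell]
        placement[cell] = target
        if occupant is not None:
            placement[occupant] = old
        new_cost = hpwl(placement)
        delta = new_cost - cost
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            cost = new_cost  # accept; uphill moves are likelier while hot
            if cost < best_cost:
                best, best_cost = dict(placement), cost
        else:  # reject: undo the move
            placement[cell] = old
            if occupant is not None:
                placement[occupant] = target
    return initial, best_cost, best

if __name__ == "__main__":
    initial, final, _ = anneal()
    print(f"HPWL: {initial} -> {final}")
```

Accepting occasional uphill moves early lets the search escape poor local arrangements. Learned approaches such as RL aim to make a similar search far more sample-efficient on real designs with millions of cells.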

The Rise of AI-Native Tools and Collaborative Agents

Beyond automating individual tasks, a new paradigm is emerging: AI-native tools and autonomous AI agents that can collaborate with human engineers. This represents a significant shift from AI as a mere assistant to AI as an intelligent partner in the design process.

AI Agents in Action

The term “agentic” in the context of chip design signifies AI systems that can make sequential decisions, learn from their environment, and work towards a defined goal without constant human supervision.

- RTL Development and Verification: ChipAgents is developing “AI agents for RTL (Register-Transfer Level) development and verification workflows.” RTL is the abstraction level at which a chip’s behavior is described in terms of registers and the logic that moves data between them; RTL code is the input to logic synthesis. These agents can potentially generate and refine RTL code, ensuring it meets performance and power targets while being easily verifiable.

- Natural Language to HDL Design: PrimisAI is creating a “Natural language interface for chip design and verification” which aims to allow engineers to describe chip functionality in plain language, with AI translating it into Hardware Description Languages (HDL) like Verilog or VHDL. This could dramatically lower the barrier to entry for chip design and accelerate the initial specification phase.

- No-code SoC Design: ITDA Semiconductor is pushing the boundary further with “No-code SoC design.” Their AI automates power, clock, and Design for Testability (DFT) generation. This vision aims to abstract away much of the low-level coding, allowing engineers to focus on higher-level system architecture and functionality, with AI handling the intricate details of implementation.

- End-to-End Chip Project Orchestration: DSM Pro Engineering is working on “End-to-end chip project orchestration tools,” which suggests a holistic AI solution that manages the entire chip design flow from start to finish, optimizing processes and resources across all stages.
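Stripped of the AI model itself, the control flow of such an agent is a loop:

1. Propose an artifact.
2. Check it with an objective tool.
3. Feed the failures back.
4. Repeat until the checks pass or a budget runs out.

The sketch below shows only that loop. `propose_rtl` is a hypothetical stand-in for a call to a code-generating model; here it just applies a canned fix for the first reported issue. `run_checks` stands in for lint, simulation, or formal tools. None of these names corresponds to any company's actual API.

```python
from dataclasses import dataclass, field

@dataclass
class AgentResult:
    rtl: str
    passed: bool
    rounds: int
    history: list = field(default_factory=list)

# Hypothetical spec-derived checks: (description, predicate on the RTL text).
CHECKS = [
    ("module has an active-low reset port", lambda rtl: "input logic rst_n" in rtl),
    ("register uses an always_ff block", lambda rtl: "always_ff" in rtl),
    ("module is terminated", lambda rtl: rtl.rstrip().endswith("endmodule")),
]

def run_checks(rtl):
    """Stand-in for lint/simulation/formal tools: returns failing checks."""
    return [desc for desc, ok in CHECKS if not ok(rtl)]

# Canned repairs standing in for a code-generating model (hypothetical).
REPAIRS = {
    "module has an active-low reset port": lambda rtl: rtl.replace(
        "input logic clk,", "input logic clk,\n  input logic rst_n,"),
    "register uses an always_ff block": lambda rtl: rtl.replace(
        "always @(posedge clk) q <= q + 1;",
        "always_ff @(posedge clk or negedge rst_n)\n"
        "    if (!rst_n) q <= '0;\n    else q <= q + 1;"),
    "module is terminated": lambda rtl: rtl.rstrip() + "\nendmodule\n",
}

def propose_rtl(rtl, feedback):
    """Fix the first reported issue; a real agent would query a model here."""
    return REPAIRS[feedback[0]](rtl) if feedback else rtl

def agent_loop(draft, max_rounds=5):
    """Generate-check-refine until all checks pass or the budget is spent."""
    rtl, history = draft, []
    for rounds in range(1, max_rounds + 1):
        feedback = run_checks(rtl)
        history.append(feedback)
        if not feedback:
            return AgentResult(rtl, True, rounds, history)
        rtl = propose_rtl(rtl, feedback)
    return AgentResult(rtl, not run_checks(rtl), max_rounds, history)

DRAFT = (
    "module counter (\n"
    "  input logic clk,\n"
    "  output logic [7:0] q\n"
    ");\n"
    "  always @(posedge clk) q <= q + 1;\n"
)

if __name__ == "__main__":
    result = agent_loop(DRAFT)
    print(f"passed={result.passed} after {result.rounds} rounds\n{result.rtl}")
```

The important design choice is that pass/fail comes from deterministic tools, not from the model's own judgment. This grounding is what lets such agents run with limited supervision.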

These AI agents are designed to learn from previous designs, adapt to new constraints, and continuously improve productivity. They are not merely following instructions; they are making informed decisions, identifying opportunities for optimization, and autonomously executing tasks that would otherwise require extensive human intervention. This shift elevates engineers from tedious, repetitive tasks to more strategic and innovative roles.

The Human-AI Collaboration Model

The vision is not to replace human engineers but to augment their capabilities significantly. AI agents can handle the voluminous, complex, and iterative tasks, freeing up human expertise for creative problem-solving, strategic decision-making, and exploring novel architectural concepts. This symbiotic relationship promises to unlock unprecedented levels of efficiency and innovation in the semiconductor industry.

Instead of spending months on manual iterations, engineers can now use AI tools like Synopsys DSO.ai or Cadence Cerebrus to speed up their design workflows, completing tasks in a fraction of the usual time; reported productivity improvements range from 3x to 5x.

Impact and Future Outlook: A New Era for Silicon Innovation

The integration of AI into chip design workflows signals a profound shift that will have far-reaching implications for the entire technology landscape.

Accelerated Development Cycles

The most immediate and tangible benefit is the radical compression of design cycles. What once took months, or even years, can now be achieved in weeks or even days, particularly for certain stages like floorplanning. This acceleration is critical for keeping pace with the rapid evolution of technology and meeting market demands for faster, more efficient chips.

- Faster Time-to-Market: With shorter design cycles, companies can bring new products to market much more quickly, gaining a competitive edge and responding dynamically to emerging trends.

- Reduced Development Costs: By minimizing re-spins (the costly process of refabricating a chip due to design errors) and increasing engineering efficiency, AI significantly lowers the overall development cost of complex semiconductors.

Improved Engineering Efficiency and Innovation

AI allows engineers to offload repetitive and computationally intensive tasks, enabling them to focus on higher-level design, innovation, and strategic problem-solving. This shift empowers engineers to explore more complex features, experiment with novel architectures, and push the boundaries of what is possible.

- Focus on Creativity: With AI handling optimization and verification, human engineers can dedicate more time to truly innovative design choices rather than tedious manual adjustments.

- Higher Quality Designs: AI’s ability to explore vast design spaces and predict potential issues leads to more optimized and robust chips from the outset, reducing the likelihood of costly errors later in the development process.

Sustaining Moore’s Law

For decades, Moore’s Law, the observation that the number of transistors on a microchip doubles approximately every two years, has driven technological progress. However, as semiconductor technology approaches the atomic scale (e.g., 2nm and 1.6nm nodes with Gate-All-Around (GAA) transistors and Backside Power Delivery (BSPD)), traditional methods are hitting a “complexity wall.” AI is providing the tools needed to navigate these new frontiers, helping progress continue even as the physical challenges become more formidable. Autonomous design systems that optimize PPA with a precision exceeding human capability are rapidly becoming a standard part of production flows.
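Written as an idealized trend rather than a physical law, doubling every two years means the following. Here N_0 is the transistor count in a reference year and t is the elapsed time in years.

```latex
N(t) = N_0 \cdot 2^{t/2}, \qquad \frac{N(10)}{N_0} = 2^{10/2} = 2^{5} = 32
```

A decade of scaling at this pace therefore multiplies transistor count roughly 32-fold. That is the growth rate design tools must keep up with.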

The “AI-for-chips” revolution is not merely an incremental improvement: for some design tasks, it is compressing cycles that once spanned months into a matter of days, fundamentally altering the pace of global technological advancement.

Enabling Future Technologies

The rapid advancements in AI-driven chip design are crucial for the development of future technologies, particularly in areas like:

- Edge AI: Faster and more efficient chips are essential for deploying powerful AI capabilities directly on edge devices, reducing latency and enabling new applications in IoT, autonomous vehicles, and smart infrastructure.

- High-Performance Computing (HPC) and Data Centers: The demand for specialized AI accelerators in hyperscale cloud environments and enterprise data centers continues to grow exponentially. AI-driven design ensures that these critical components are optimized for performance, power, and cost.

- 5G/6G Infrastructure: The complex chips required for next-generation communication networks benefit immensely from AI-driven design, ensuring they can handle massive data rates and intricate signal processing.

- Generative AI: The very AI models used to design chips are themselves fueling an explosion in generative AI applications, creating a feedback loop where more powerful AI hardware enables even more sophisticated AI design tools.

Key Players Driving the Transformation

The landscape of AI in chip design is vibrant, with a mix of established Electronic Design Automation (EDA) giants and innovative startups leading the charge.

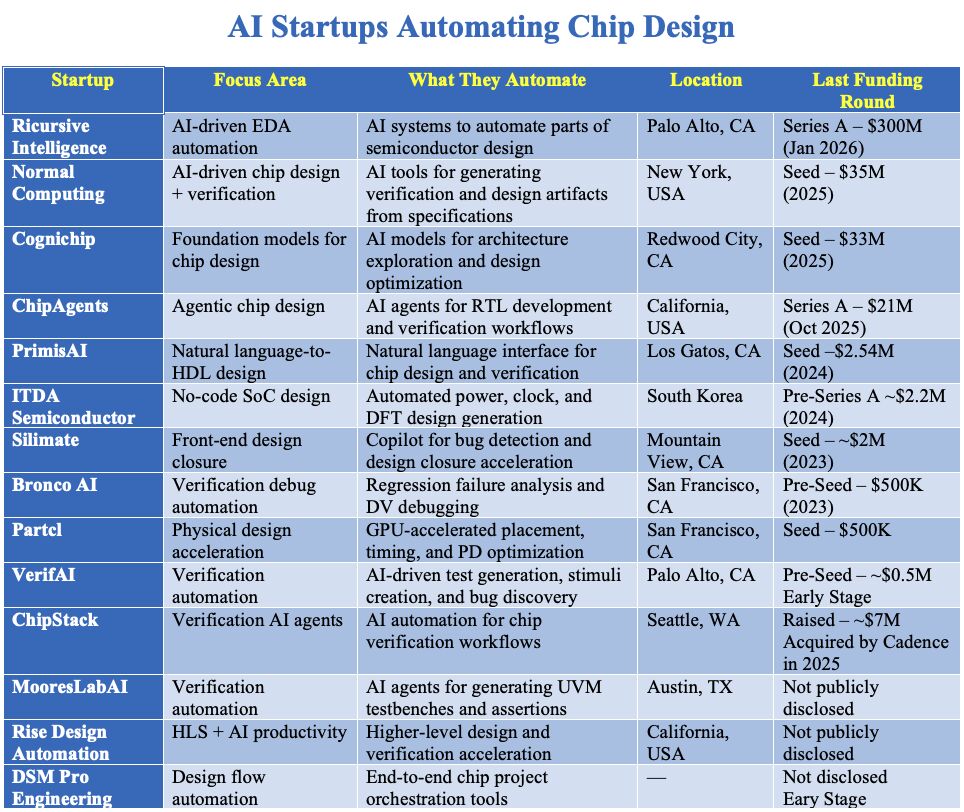

The image provides a snapshot of various AI startups contributing to this transformation:

| Startup | Focus Area | What They Automate | Location | Last Funding Round |
|---|---|---|---|---|
| Ricursive Intelligence | AI-driven EDA automation | AI systems to automate parts of semiconductor design | Palo Alto, CA | Series A – $300M (Jan 2026) |
| Normal Computing | AI-driven chip design + verification | AI tools for generating verification and design artifacts from specifications | New York, USA | Seed – $35M (2025) |
| Cognichip | Foundation models for chip design | AI models for architecture exploration and design optimization | Redwood City, CA | Seed – $33M (2025) |
| ChipAgents | Agentic chip design | AI agents for RTL development and verification workflows | California, USA | Series A – $21M (Oct 2025) |
| PrimisAI | Natural language-to-HDL design | Natural language interface for chip design and verification | Los Gatos, CA | Seed – $2.54M (2024) |
| ITDA Semiconductor | No-code SoC design | Automated power, clock, and DFT design generation | South Korea | Pre-Series A – $2.2M (2024) |
| Silimate | Front-end design closure | Copilot for bug detection and design closure acceleration | Mountain View, CA | Seed – ~$2M (2023) |
| Bronco AI | Verification debug automation | Regression failure analysis and DV debugging | San Francisco, CA | Pre-Seed – $500K (2023) |
| Partcl | Physical design acceleration | GPU-accelerated placement, timing, and PD optimization | San Francisco, CA | Seed – $500K (2023) |
| VerifAI | Verification automation | AI-driven test generation, stimuli creation, and bug discovery | Palo Alto, CA | Pre-Seed – ~$0.5M Early Stage |
| ChipStack | Verification AI agents | AI automation for chip verification workflows | Seattle, WA | Raised – ~$7M, Acquired by Cadence in 2025 |
| MooresLabAI | Verification automation | AI agents for generating UVM testbenches and assertions | Austin, TX | Not publicly disclosed |
| Rise Design Automation | HLS + AI productivity | Higher-level design and verification acceleration | California, USA | Not publicly disclosed |
| DSM Pro Engineering | Design flow automation | End-to-end chip project orchestration tools | — | Not disclosed Early Stage |

This list highlights the diversity of approaches and the specialization within the AI for chip design space, from automating specific tasks to developing comprehensive, end-to-end solutions. The significant funding rounds, such as Ricursive Intelligence’s $300M Series A in January 2026, underscore the massive market confidence in this disruptive technology. The acquisition of ChipStack by Cadence in 2025 further indicates that established players are rapidly incorporating these AI capabilities into their core offerings.

Challenges and Future Directions

While the promise of AI in chip design is immense, several challenges remain:

- Data Scarcity and Quality: AI models thrive on high-quality data. In chip design, historical data can be fragmented, proprietary, or not perfectly aligned with the needs of advanced AI training. Creating and curating vast, high-quality datasets for various design stages is crucial.

- Interpretability and Trust: “Black box” AI models, while effective, can be difficult for human engineers to understand and trust, especially in safety-critical applications. Developing interpretable AI models that can explain their decisions is an ongoing research area.

- Integration with Existing Workflows: Integrating new AI tools into established and often deeply entrenched EDA workflows requires careful planning, standardization, and interoperability.

- Computational Resources: Training and running advanced AI models for chip design can require significant computational resources, including specialized AI accelerators.

- Skill Gap: There is a growing need for engineers who possess expertise in both semiconductor design and AI/machine learning.

Despite these challenges, the trajectory is clear: AI will continue to deepen its integration into every stage of the chip design process. Future directions include:

- More Autonomous Agents: The evolution from AI-assisted tools to completely autonomous agents that can manage entire design blocks or even full chips with minimal human oversight.

- Generative Design Beyond Optimization: Moving beyond just optimizing existing designs to AI proactively generating entirely new and performant architectural concepts.

- Cross-Domain AI: AI that can optimize across multiple design stages simultaneously, such as architectural choices impacting physical design manufacturability.

- Explainable AI (XAI) in EDA: Developing AI systems that not only make optimal decisions but can also provide clear, human-understandable explanations for those decisions.

Conclusion: The Dawn of the AI-Architect

The semiconductor industry is undergoing a profound transformation, driven by the indispensable role of AI in overcoming the escalating complexity of chip design. From optimizing architectural exploration and automating verification to intelligently navigating the intricate landscape of physical implementation, AI is redefining the capabilities and pace of silicon innovation. The boundary between hardware and software design is blurring, and AI is increasingly acting as a co-architect alongside human engineers, turning some months-long processes into hours.

This revolution, spearheaded by innovative startups and major EDA players, is fundamental not just to the future of chip manufacturing but to the advancement of all technology. As AI-powered tools become the new standard, they are not only accelerating development cycles and improving efficiency but also enabling the creation of next-generation hardware that can power increasingly sophisticated AI systems. The self-reinforcing cycle of “AI designing AI” promises a future where technological progress continues at an exponential rate, pushing the boundaries of what we thought possible.

Elevate Your Organizational Intelligence with IoT Worlds

In this rapidly evolving landscape, understanding and leveraging cutting-edge technologies like AI in chip design is paramount for maintaining a competitive edge. At IoT Worlds, we specialize in providing insightful analysis, strategic guidance, and actionable intelligence to help your organization navigate the complexities of the connected world. Our expertise spans IoT deployments, AI integration, and semiconductor trends, equipping you with the knowledge to make informed decisions and accelerate your innovation.

Don’t let the future of technology leave your organization behind. Unlock the full potential of AI-driven innovation with the experts at IoT Worlds.

To learn more about how we can help you with AI-driven strategies and IoT solutions, contact us today.

Email us at info@iotworlds.com to schedule a consultation.