In an increasingly interconnected world, the demand for instant insights and autonomous operations has pushed the boundaries of traditional computing. Centralized cloud intelligence, while powerful, often faces formidable challenges when confronted with the sheer volume, velocity, and variety of data generated at the network’s periphery. This is where Edge AI emerges as a transformative paradigm – a fundamental shift from reliance on distant data centers to localized, real-time decision-making capabilities embedded directly at the device level.

Edge AI is not merely an optimization; it is an architectural imperative for next-generation IoT, smart manufacturing, autonomous systems, and intelligent infrastructure. It addresses critical limitations inherent in cloud-centric models, paving the way for unprecedented responsiveness, efficiency, and resilience across a multitude of applications. This article delves into the intricacies of Edge AI, exploring its core components, multifaceted benefits, and the profound impact it is having on diverse industries.

The Paradigm Shift: From Cloud to Edge

For years, the cloud has been the undisputed king of data processing and AI model training. Its seemingly infinite computational resources and storage capacity have enabled the development of sophisticated AI algorithms that power everything from natural language processing to complex image recognition. However, the escalating scale of data generation, particularly from the burgeoning Internet of Things (IoT), has exposed the Achilles’ heel of an exclusively cloud-based approach.

The Limitations of Cloud-Centric AI

The primary challenges posed by a purely cloud-based AI architecture include:

- Latency: Sending vast amounts of data to the cloud for processing and then awaiting a response introduces unavoidable delays. For applications requiring instantaneous action, such as autonomous vehicles or critical industrial control systems, even milliseconds of delay can have severe consequences.

- Bandwidth Constraints: The sheer volume of data generated by countless edge devices can overwhelm network bandwidth, leading to bottlenecks, increased costs, and unreliable data transfer. This is particularly problematic in remote or intermittently connected environments.

- Privacy and Security Concerns: Transmitting sensitive data to the cloud raises significant privacy and security concerns. Companies and individuals are becoming increasingly wary of sending proprietary or personal information off-premises, especially with evolving data protection regulations.

- Operational Resilience: Cloud dependency creates a single point of failure. If connectivity to the cloud is interrupted, edge devices lose their intelligence, potentially leading to operational outages and safety hazards.

- Cost Implications: Constantly streaming all raw data to the cloud for analysis can incur substantial data transfer and storage costs, especially as the number of connected devices and the volume of data they produce continue to grow.

Edge AI directly confronts these limitations by bringing intelligence closer to the source of data generation, fundamentally altering the architecture of intelligent systems.

Defining the Edge

The “edge” in Edge AI refers to the physical location where data is generated and processed, typically at or near the source rather than in a distant data center. This can encompass a wide range of devices and environments:

- IoT Devices: Smart sensors, cameras, wearables, and home appliances.

- Industrial Equipment: Robotics, manufacturing machinery, and predictive maintenance sensors.

- Vehicles: Autonomous cars, drones, and connected logistics vehicles.

- Infrastructure: Smart city components, traffic lights, and environmental monitoring stations.

- Local Servers/Gateways: Micro data centers or powerful gateways deployed on-premises, serving as an aggregation point for multiple edge devices.

The common thread is the decentralization of computational power and AI inferencing capabilities, enabling immediate action and reducing reliance on continuous cloud connectivity.

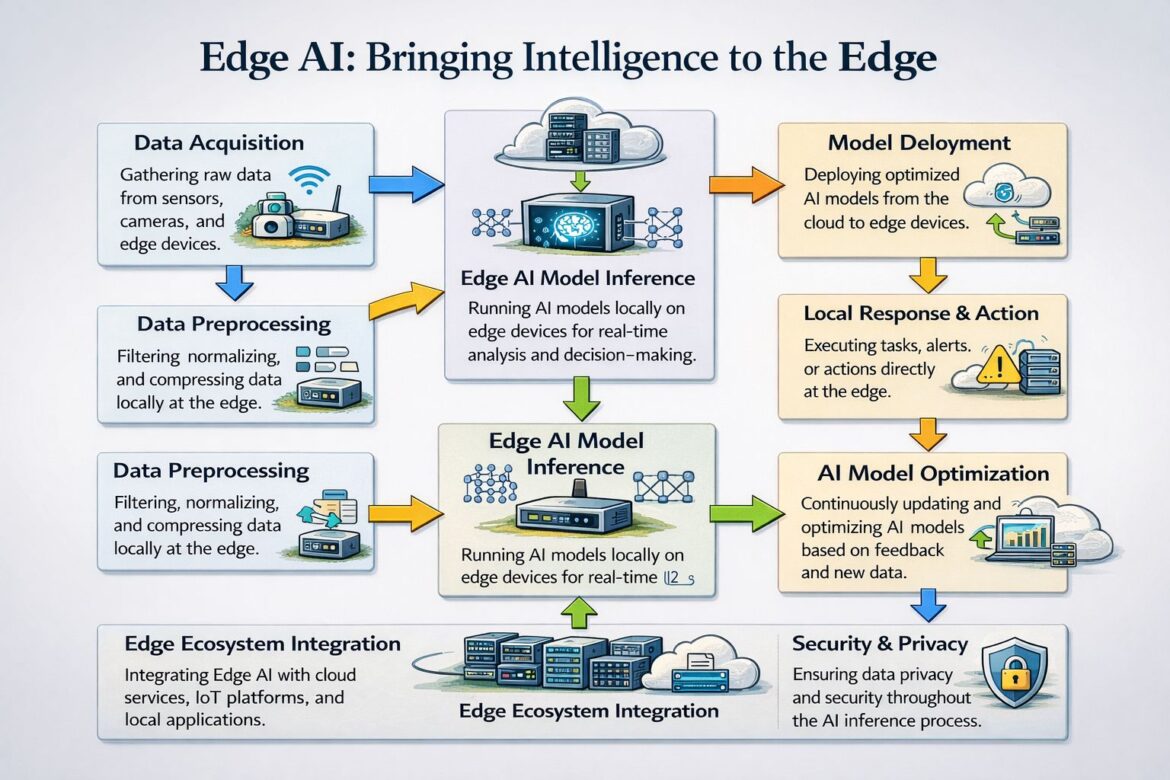

The Modern Edge AI Pipeline: A Journey of Intelligence

The implementation of Edge AI involves a sophisticated pipeline designed to optimize data flow, processing, and decision-making on resource-constrained hardware. This pipeline ensures that artificial intelligence can operate effectively and efficiently at the very frontier of the network.

Data Acquisition: The Foundation of Intelligence

The journey of intelligence at the edge begins with data acquisition. This critical initial step involves collecting raw information directly from a myriad of sensors and embedded devices deployed in the physical world.

- Sensor Diversity: Edge AI relies on a diverse array of sensors, including optical (cameras), acoustic (microphones), environmental (temperature, humidity, pressure), motion (accelerometers, gyroscopes), and specialized industrial sensors. Each sensor type captures unique facets of the operational environment.

- Embedded Devices: These are purpose-built computing systems often integrated directly into larger machines or environments. They are responsible for not only collecting data but also for initially preparing it for subsequent processing.

- Real-Time Streams: Data acquisition at the edge often involves continuous, real-time streams of information, demanding efficient capture mechanisms to prevent data loss or staleness.

- Data Volume: The sheer volume of raw data generated can be immense, highlighting the need for efficient handling from the outset. For instance, a single high-definition camera can generate gigabytes of data per hour.

Effective data acquisition is paramount as the quality and relevance of the collected data directly influence the accuracy and efficacy of downstream AI models.
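As a rough back-of-the-envelope check on the camera figure above, the sketch below estimates hourly data volume for a single camera. The resolution, frame rate, colour depth, and the 5 Mbit/s encoded bitrate are illustrative assumptions, not measurements from any particular device.

```python
# Rough estimate of how much data one camera produces per hour.
# All parameters below are illustrative assumptions.

def raw_bytes_per_hour(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Uncompressed video volume for one hour of capture."""
    return width * height * bytes_per_pixel * fps * 3600


def compressed_bytes_per_hour(bitrate_bits_per_s: float) -> float:
    """Volume of an encoded stream at a constant bitrate."""
    return bitrate_bits_per_s / 8 * 3600


raw = raw_bytes_per_hour(1920, 1080, 3, 30)      # 1080p, 24-bit colour, 30 fps
encoded = compressed_bytes_per_hour(5_000_000)   # assumed 5 Mbit/s encoded stream

print(f"raw:     {raw / 1e9:.0f} GB/hour")
print(f"encoded: {encoded / 1e9:.2f} GB/hour")
```

Under these assumptions the encoded stream alone amounts to a couple of gigabytes per hour, and the uncompressed sensor output is roughly 300 times larger, which is why handling data efficiently at the point of capture matters.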

Local Preprocessing: Refining Raw Data

Once acquired, raw data is rarely in a format immediately suitable for AI models. Local preprocessing is a crucial step that transforms raw data streams into a clean, normalized, and optimized format, right at the edge. This significantly reduces the burden on subsequent processing stages and minimizes the data volume that might need eventual cloud synchronization.

- Filtering: This involves removing noise, irrelevant information, or redundant data points from the raw streams. For example, in an audio application, background static might be filtered out.

- Normalization: Data normalization scales and adjusts data to a common range, preventing certain features from disproportionately influencing AI models. This is especially important for heterogeneous sensor data.

- Compression: Edge devices have limited storage and bandwidth. Data compression techniques reduce the size of the data without significant loss of critical information, facilitating more efficient storage and transmission.

- Feature Extraction: In some cases, preprocessing might also involve basic feature extraction, where raw data is transformed into more meaningful attributes that highlight patterns for the AI model. For example, detecting edges in an image or specific frequencies in an audio signal.

By performing these operations locally, Edge AI minimizes latency, conserves bandwidth, and reduces the computational load on the cloud.
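The filtering, normalization, and compression steps described above can be sketched with nothing more than the Python standard library. This is a minimal illustration, assuming a one-dimensional stream of sensor readings; the function names and the sample values are hypothetical, and a real deployment would likely use signal-processing routines suited to its sensor type.

```python
import json
import zlib
from typing import List


def moving_average(samples: List[float], window: int = 3) -> List[float]:
    """Filtering: smooth noise with a simple sliding-window mean."""
    if window < 1 or window > len(samples):
        raise ValueError("window must be between 1 and len(samples)")
    return [
        sum(samples[i:i + window]) / window
        for i in range(len(samples) - window + 1)
    ]


def min_max_normalize(samples: List[float]) -> List[float]:
    """Normalization: rescale values into the range [0, 1]."""
    lo, hi = min(samples), max(samples)
    if hi == lo:
        return [0.0 for _ in samples]  # a constant signal has no range to scale
    return [(x - lo) / (hi - lo) for x in samples]


def compress(samples: List[float]) -> bytes:
    """Compression: round to 3 decimals, then apply lossless zlib to the JSON."""
    payload = json.dumps([round(x, 3) for x in samples]).encode("utf-8")
    return zlib.compress(payload)


readings = [20.1, 20.3, 35.0, 20.2, 20.4, 20.3, 20.5]  # one spike at index 2
smoothed = moving_average(readings, window=3)
normalized = min_max_normalize(smoothed)
packed = compress(normalized)
```

The order of the steps is a design choice: smoothing before normalization keeps a single outlier from defining the scaling range on its own, although it does not remove the outlier's influence entirely.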

Optimized AI Models: Intelligence on a Diet

The core of Edge AI lies in deploying optimized AI models to resource-constrained edge hardware. Unlike their cloud counterparts, which can leverage high-performance GPUs and ample memory, edge devices often have limited processing power, memory, and energy budgets.

- Model Compression Techniques:

  - Quantization: This technique reduces the precision of the numerical representations that AI models use (e.g., from 32-bit floating-point numbers to 8-bit integers). This shrinks model size roughly in proportion to the bit width and typically speeds up inference, often with only a small loss of accuracy.

  - Pruning: Pruning removes redundant or less important connections (weights) within a neural network. This thins out the network architecture, resulting in smaller, faster models.

  - Knowledge Distillation: A smaller “student” model is trained to mimic the behavior and outputs of a larger, more complex “teacher” model. The student model, being smaller, is more suitable for edge deployment.

- Efficient Architectures: The development of specialized neural network architectures tailored for edge devices, such as MobileNets and EfficientNets, focuses on achieving high accuracy with fewer parameters and operations.

- Hardware Acceleration: Many modern edge devices integrate specialized hardware accelerators (e.g., NPUs – Neural Processing Units, TPUs – Tensor Processing Units, or custom ASICs) designed to rapidly execute AI inference tasks with high energy efficiency.

The goal is to strike a delicate balance between model accuracy, speed, size, and power consumption, ensuring effective AI operation within the constraints of edge hardware.
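To make quantization and pruning concrete, the sketch below applies symmetric 8-bit quantization and magnitude-based pruning to a short list of weights. It is a simplified illustration in plain Python: the helper names are hypothetical, and production toolchains typically work per tensor or per channel, use calibration data, and fine-tune the model afterwards.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer representation."""
    return [v * scale for v in q]


def prune_by_magnitude(weights: List[float], fraction: float) -> List[float]:
    """Magnitude pruning: zero out the smallest-|w| fraction of weights."""
    k = int(len(weights) * fraction)
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value.
    to_zero = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


weights = [0.42, -1.27, 0.03, 0.88, -0.05, 0.61]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
pruned = prune_by_magnitude(weights, fraction=0.5)
```

Each quantized weight fits in one byte instead of four, and the rounding error per weight is bounded by half the scale factor. Pruned weights only save memory or compute when the runtime stores or executes the model in a sparse-aware way.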

On-Device Inference: Millisecond Decisions

With optimized models deployed, on-device inference becomes the engine of real-time decision-making. This is where the AI model directly processes the preprocessed data on the edge device itself, enabling millisecond-level responses without relying on external cloud computation.

- Local Computation: All the necessary calculations for the AI model to make a prediction or classify data occur directly on the edge hardware.

- Reduced Latency: By eliminating the round trip to the cloud, inference time is drastically cut, making it suitable for applications demanding immediate action. This is crucial for safety-critical systems like autonomous navigation or real-time anomaly detection in industrial settings.

- Offline Capability: Edge devices can continue to operate and make intelligent decisions even when network connectivity is intermittent or entirely absent, enhancing system resilience and autonomy.

- Resource Efficiency: Performing inference locally avoids the energy and bandwidth consumption associated with continuous data transmission to the cloud.

This capability is what truly distinguishes Edge AI, empowering devices to act autonomously and intelligently based on their immediate environment.

Local Response Mechanisms: Immediate Action

The power of on-device inference culminates in local response mechanisms, which trigger immediate actions based on the AI’s real-time decisions, often without any cloud dependency.

- Actuator Control: AI models can directly control actuators like robotic arms, motors, valves, or lights based on their analysis. For example, a quality control AI on a production line might immediately trigger a robotic arm to remove a defective product.

- Alert Generation: If an anomaly or critical event is detected, the edge device can instantly generate alerts, either locally (e.g., an alarm) or through a local network to human operators.

- System Adjustments: In smart infrastructure, an Edge AI system might adjust traffic light timings in real-time based on local traffic flow analysis or optimize HVAC systems in a building based on occupancy and environmental sensor data.

- Data Prioritization: The edge device can decide which data is critical enough to be sent to the cloud for further analysis or storage, and which can be discarded or aggregated locally.

These immediate, localized responses are fundamental to the agility and responsiveness promised by Edge AI, driving efficiency and safety in dynamic environments.
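One way to picture the response mechanisms above is as a small decision function that maps a model output to local actions and an upload decision. The sketch below assumes a quality-control model that emits a defect probability; the action names and both thresholds are hypothetical placeholders rather than values from any real system.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Decision:
    actions: List[str]
    upload_to_cloud: bool


def decide(defect_score: float,
           reject_threshold: float = 0.9,
           review_threshold: float = 0.6) -> Decision:
    """Map a model's defect probability to local actions.

    Thresholds are illustrative; real values would come from validation data.
    """
    if not 0.0 <= defect_score <= 1.0:
        raise ValueError("defect_score must be a probability in [0, 1]")
    if defect_score >= reject_threshold:
        # Confident defect: act immediately and alert the operator locally.
        return Decision(["trigger_reject_arm", "raise_local_alert"], upload_to_cloud=True)
    if defect_score >= review_threshold:
        # Uncertain case: no actuation, but keep the sample for later review.
        return Decision(["flag_for_review"], upload_to_cloud=True)
    # Normal item: nothing to do and nothing worth transmitting.
    return Decision([], upload_to_cloud=False)
```

For example, `decide(0.95)` returns the reject and alert actions, while `decide(0.1)` returns no actions and marks the sample as not worth uploading, which is the data prioritization behaviour described in the last bullet.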

Secure Communication: The Bridge to the Cloud

While Edge AI prioritizes local processing, complete isolation from the cloud is rarely practical or desirable. Secure communication ensures encrypted data exchange when cloud synchronization is required for tasks like model retraining, aggregate data analysis, or remote monitoring.

- Encryption Protocols: Industry-standard encryption protocols (e.g., TLS, the successor to the now-deprecated SSL) are used to protect data in transit between edge devices and the cloud, safeguarding sensitive information from eavesdropping and tampering.

- Authentication and Authorization: Robust mechanisms verify the identity of both edge devices and cloud services, ensuring that only authorized entities can communicate and access data.

- Data Minimization: Only necessary and preprocessed data is transmitted to the cloud, adhering to the principle of data minimization and further reducing bandwidth requirements and privacy risks.

- Secure Boot and Firmware Updates: Edge devices require secure boot processes to prevent unauthorized software execution, and firmware updates must be delivered securely to patch vulnerabilities and improve functionality.

Secure communication acts as the vital bridge, balancing edge autonomy with the benefits of cloud oversight and global intelligence.
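As a minimal sketch of the encryption and authentication points above, the following uses Python's standard `ssl` module to build a client-side TLS context that verifies the server certificate and refuses legacy protocol versions. The function name and the optional `ca_file` parameter are assumptions for illustration; how certificates are provisioned on a device is outside the scope of this sketch.

```python
import ssl
from typing import Optional


def make_client_tls_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a client-side TLS context with certificate verification enabled."""
    # create_default_context() enables hostname checking and CERT_REQUIRED.
    context = ssl.create_default_context(cafile=ca_file)
    # Refuse legacy protocol versions (SSLv3, TLS 1.0, TLS 1.1).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_tls_context()
```

If the cloud service also needs to authenticate the device (mutual TLS), a device certificate and key can be attached with `context.load_cert_chain(...)`; the file locations would depend on the deployment.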

Continuous Monitoring: Maintaining Performance

The deployment of AI models at the edge is not a set-it-and-forget-it operation. Continuous monitoring is essential for evaluating performance, detecting anomalies, and ensuring the ongoing health and accuracy of the Edge AI system.

- Performance Metrics: Monitoring tracks key performance indicators (KPIs) relevant to the AI model, such as inference speed, accuracy against ground truth data (where available), and resource utilization (CPU, memory, power).

- Anomaly Detection: Edge devices or local gateways can monitor their own operational parameters and the behavior of the AI models to detect unusual patterns or deviations that might indicate malfunctions, data drift, or adversarial attacks.

- Data Drift Identification: Over time, the characteristics of the data flowing into an AI model can change (data drift), causing its performance to degrade. Continuous monitoring helps identify such drift, signaling the need for model retraining.

- Health Checks: Regular checks on hardware components, network connectivity, and software integrity ensure the overall stability and reliability of the Edge AI deployment.

Proactive monitoring allows for timely intervention, maintenance, and retraining, ensuring that the Edge AI system remains effective and robust throughout its lifecycle.
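A very simple form of the drift check described above compares the mean of a recent window against a baseline captured at deployment time. The sketch below is one possible approach under stated assumptions: a single numeric feature, a z-test on the window mean, and a threshold of three standard errors chosen purely for illustration. Real systems often track several features and use richer statistical tests.

```python
import math
import statistics
from typing import Sequence


def mean_shift_z(baseline: Sequence[float], recent: Sequence[float]) -> float:
    """Z-score of the recent window's mean against the baseline distribution."""
    mu = statistics.fmean(baseline)
    sigma = statistics.stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has zero variance")
    standard_error = sigma / math.sqrt(len(recent))
    return (statistics.fmean(recent) - mu) / standard_error


def drift_detected(baseline: Sequence[float],
                   recent: Sequence[float],
                   threshold: float = 3.0) -> bool:
    """Flag drift when the recent mean is more than `threshold` standard errors away."""
    return abs(mean_shift_z(baseline, recent)) > threshold


baseline = [20.0, 20.5, 19.5, 20.2, 19.8, 20.1, 19.9, 20.0]
stable = [20.1, 19.9, 20.0, 20.2]    # close to the baseline mean
shifted = [22.0, 22.3, 21.8, 22.1]   # about two units higher
```

With these sample values, the `stable` window stays well inside the threshold while the `shifted` window exceeds it, which is the signal that would prompt a retraining request.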

Cloud-Edge Integration: The Full AI Lifecycle

The true power of Edge AI often lies in its symbiotic relationship with the cloud. Cloud-edge integration enables comprehensive AI lifecycle management, from initial training to continuous improvement.

- Model Training and Retraining: The cloud, with its vast computational resources, remains the ideal environment for training complex AI models from scratch using large datasets. As data drift is detected at the edge or new data becomes available, the cloud facilitates efficient model retraining.

- Model Deployment and Updates: Optimized models are securely pushed from the cloud to individual edge devices or to groups of devices. This allows for seamless updates, bug fixes, and the deployment of new AI capabilities across distributed networks.

- Aggregated Data Analysis: While individual raw data streams are processed at the edge, aggregated and anonymized insights from countless edge devices can be sent to the cloud for broader trend analysis, business intelligence, and strategic decision-making.

- Centralized Management: Cloud platforms provide tools for managing large-scale Edge AI deployments, including device provisioning, monitoring dashboards, configuration management, and over-the-air (OTA) updates.

- Human-in-the-Loop: For complex scenarios or when edge insights require human validation, the cloud can serve as a conduit for human-in-the-loop processes, where expert judgment can augment or refine automated decisions.

This integrated approach harnesses the best of both worlds: the real-time responsiveness of the edge and the scalable intelligence and management capabilities of the cloud.
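When a model is pushed from the cloud, the device should at least confirm that what it received is what was published. The sketch below checks a downloaded model file against an expected SHA-256 digest; the function names are hypothetical, and the digest is assumed to arrive over a trusted channel.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def verify_model_update(model_bytes: bytes, expected_sha256: str) -> bool:
    """Return True only if the downloaded model matches the published digest."""
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(sha256_hex(model_bytes), expected_sha256.lower())


published = b"model-weights-v2"          # stand-in for the real model file
digest = sha256_hex(published)           # would normally come from the cloud
ok = verify_model_update(published, digest)
tampered = verify_model_update(b"model-weights-v2-modified", digest)
```

A plain digest only detects corruption or substitution if the digest itself can be trusted. Proving who produced the model requires a digital signature, which is not shown here.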

The Pillars of Edge AI: Key Advantages

The architectural shift to Edge AI is driven by a compelling set of advantages that address critical needs in modern intelligent systems. These benefits collectively underscore why decentralized intelligence is rapidly becoming a necessity.

Reduced Latency: The Need for Speed

One of the most significant and immediate benefits of Edge AI is the dramatic reduction in latency. In an increasingly real-time world, the speed of decision-making can be the difference between success and failure, or even safety and disaster.

- Immediate Response: By performing AI inference directly at the data source, the round-trip time to a remote cloud server is eliminated. This allows for instantaneous reactions to events.

- Time-Critical Applications: Industries such as autonomous vehicles, robotics, industrial automation, and surgical assistance rely on response times measured in milliseconds. Edge AI is fundamental to the safety and effectiveness of these applications: an autonomous car needs to identify an obstacle and initiate braking within a fraction of a second, not two seconds later because of network delay.

- Enhanced User Experience: For human-centric applications, lower latency translates to a more fluid, responsive, and natural user experience, whether it’s understanding voice commands or enabling augmented reality.

The ability to make decisions at the speed of the physical world transforms possibilities across numerous domains.
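The braking example can be turned into simple arithmetic: how far does a vehicle travel while it waits for a decision? The latency figures below are illustrative assumptions, not benchmarks of any particular network or device.

```python
def distance_travelled_m(speed_kmh: float, delay_s: float) -> float:
    """Distance covered at constant speed while waiting for a decision."""
    return speed_kmh / 3.6 * delay_s  # km/h -> m/s, then multiply by time


# Hypothetical delays for a vehicle moving at 100 km/h.
scenarios = [
    ("20 ms on-device inference", 0.020),
    ("150 ms cloud round trip", 0.150),
    ("2 s degraded network", 2.0),
]

for label, delay in scenarios:
    print(f"{label}: {distance_travelled_m(100, delay):.1f} m")
```

At 100 km/h a vehicle covers roughly 28 m every second, so a two-second delay corresponds to more than 55 m travelled before any reaction begins.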

Improved Bandwidth Efficiency: Alleviating Network Strain

The proliferation of IoT devices generates unprecedented volumes of data. Transmitting all this raw data to the cloud places immense strain on network infrastructure and incurs significant costs. Edge AI offers a powerful solution by drastically improving bandwidth efficiency.

- Intelligent Filtering and Aggregation: Instead of sending every raw data point, Edge AI processes data locally, filters out irrelevant information, and only transmits critical insights or aggregated summaries to the cloud. For instance, a surveillance camera might only send an alert and a short video clip when an intruder is detected, rather than streaming 24/7 footage.

- Reduced Data Traffic: This selective transmission drastically reduces the amount of data traversing network links, alleviating congestion, and freeing up bandwidth for other critical communications.

- Cost Savings: Lower bandwidth usage directly translates into reduced operational costs, particularly for deployments in remote areas reliant on expensive satellite or cellular data.

- Optimal Resource Utilization: Networks become more efficient by only carrying truly valuable information, rather than a torrent of undifferentiated raw data.

By acting as intelligent data gatekeepers, edge devices transform network utilization from a data-heavy deluge to a precise flow of actionable intelligence.
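The aggregation idea above can be illustrated by comparing the size of a raw window of readings with the size of a summary of that window. The sensor, its 10 Hz sampling rate, and the choice of summary statistics are all assumptions made for the sake of the example.

```python
import json
import statistics
from typing import Dict, List


def summarize(readings: List[float]) -> Dict[str, float]:
    """Collapse a window of raw readings into a few aggregate statistics."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(statistics.fmean(readings), 3),
    }


# One minute of a hypothetical 10 Hz temperature sensor (600 samples).
window = [20.0 + 0.01 * (i % 50) for i in range(600)]

raw_size = len(json.dumps(window).encode("utf-8"))
summary_size = len(json.dumps(summarize(window)).encode("utf-8"))

print(f"raw window: {raw_size} bytes, summary: {summary_size} bytes")
```

The summary is a small, fixed-size payload regardless of how many samples the window holds, so the saving grows with the sampling rate. The trade-off is that anything not captured by the chosen statistics is lost unless the raw window is also kept locally.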

Enhanced Privacy: Protecting Sensitive Information

In an era of heightened awareness surrounding data privacy and stringent regulations (like GDPR and CCPA), Edge AI provides a powerful mechanism for safeguarding sensitive information.

- Local Processing of Sensitive Data: Many applications deal with highly sensitive data, such as facial images captured by security systems, personal health metrics from wearables, or details of proprietary manufacturing processes. Edge AI allows this data to be processed locally, without ever leaving the device or premises.

- Anonymization at the Source: Before any data is potentially sent to the cloud, edge devices can be configured to anonymize or obfuscate sensitive identifiers, ensuring that personal or confidential information is never exposed beyond the immediate environment.

- Compliance with Regulations: By keeping data local, organizations can more easily comply with data residency requirements and privacy regulations, reducing the risk of data breaches and non-compliance fines.

- Reduced Attack Surface: The less sensitive data travels across networks or resides in centralized cloud repositories, the smaller the attack surface for cybercriminals.

Edge AI empowers organizations to leverage the power of AI while upholding the highest standards of data privacy and security.
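A common building block for protecting identifiers at the source is to replace them with a keyed hash and drop fields that are not needed upstream. The sketch below uses HMAC-SHA256 from the standard library; the record layout, field names, and secret are hypothetical. Strictly speaking this is pseudonymization rather than full anonymization, and pseudonymized data may still count as personal data under regulations such as GDPR.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, device_secret: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(device_secret, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_record(record: dict, device_secret: bytes) -> dict:
    """Keep only the fields needed upstream and pseudonymize the user ID."""
    return {
        "user": pseudonymize(record["user_id"], device_secret),
        "heart_rate_avg": record["heart_rate_avg"],
    }


secret = b"example-secret-kept-on-device"   # placeholder; never hard-code real keys
record = {"user_id": "alice@example.com", "name": "Alice", "heart_rate_avg": 62}
outbound = strip_record(record, secret)
```

Using a keyed hash rather than a plain hash means that someone without the secret cannot confirm a guessed identifier by hashing it themselves.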

Autonomous System Operation: Resilient and Independent

The ability of Edge AI to enable autonomous system operation is a game-changer, fostering resilience, reliability, and independence from constant cloud connectivity.

- Offline Functionality: Edge devices equipped with AI can continue to perform their functions and make intelligent decisions even if their connection to the central cloud is lost or unreliable. This is crucial for remote deployments, disaster recovery scenarios, or mobile assets.

- Increased Reliability: Local inference reduces reliance on external network infrastructure, making systems inherently more robust against network outages or intermittent connectivity.

- Distributed Intelligence: Rather than routing all decisions through a central brain, intelligence is distributed across the network, making the overall system more robust and less susceptible to single points of failure.

- Operational Continuity: In critical infrastructure, manufacturing, or healthcare, continuous operation is paramount. Edge AI ensures that essential functions can proceed uninterrupted, even in challenging network environments.

This autonomy is not just about convenience; it’s about building inherently more resilient and dependable intelligent systems.

Applications Across Industries: Where Edge AI is Making an Impact

The transformative potential of Edge AI is being realized across a diverse spectrum of industries, driving innovation and solving complex challenges.

Smart Manufacturing and Industry 4.0

In the realm of smart manufacturing, Edge AI is a cornerstone of Industry 4.0, ushering in an era of unprecedented efficiency, quality, and safety.

- Predictive Maintenance: Sensors on machinery collect data on vibration, temperature, and acoustics. Edge AI models analyze this data in real-time to predict equipment failures before they occur, enabling proactive maintenance and minimizing costly downtime.

- Quality Control: AI-powered cameras at the edge can inspect products on assembly lines for defects within milliseconds per item, ensuring consistent quality and reducing waste. These systems can immediately signal robotic arms to remove faulty items.

- Robotics and Automation: Edge AI enables industrial robots to operate more autonomously, adapt to dynamic environments, and collaborate more effectively with humans by processing sensor data locally for real-time navigation, object recognition, and task execution.

- Optimized Resource Management: Edge AI can monitor energy consumption, material flow, and production schedules, making real-time adjustments to optimize resource utilization and reduce operational costs within a factory.

By embedding intelligence directly into the production environment, Edge AI revolutionizes manufacturing processes.

Autonomous Systems: Vehicles, Drones, and Robotics

Perhaps no sector exemplifies the critical need for Edge AI more than autonomous systems, where immediate decision-making is a matter of life and safety.

- Autonomous Vehicles: Self-driving cars rely heavily on Edge AI for real-time perception (object detection, lane keeping, pedestrian identification), path planning, and immediate decision-making. The latency to the cloud is simply too high for safety-critical maneuvers.

- Drones and UAVs: Drones use Edge AI for navigation, obstacle avoidance, payload management, and real-time data analysis (e.g., in agriculture for crop health monitoring or in surveillance for anomaly detection).

- Field Robotics: Robots operating in remote or dangerous environments (e.g., search and rescue, hazardous materials handling, distant planetary exploration) leverage Edge AI for immediate environmental understanding and autonomous action without constant human or cloud intervention.

- Collaborative Robotics (Cobots): Edge AI allows cobots to safely interact with human workers, recognizing gestures, predicting movements, and adapting their tasks in real-time.

The ability to process vast streams of sensor data (lidar, radar, cameras) and make instantaneous decisions locally is non-negotiable for autonomous operations.

Smart Cities and Intelligent Infrastructure

Edge AI is foundational to the vision of smart cities, enabling intelligent and responsive urban environments that enhance quality of life and optimize resource utilization.

- Traffic Management: AI at the edge can analyze real-time traffic flow from cameras and sensors, dynamically adjusting traffic lights to ease congestion, respond to accidents, and optimize transit times.

- Public Safety and Surveillance: Edge devices can perform real-time video analytics to detect unusual activities, identify security threats, or assist in emergency response, while preserving privacy by processing sensitive data locally before aggregation.

- Waste Management: Smart bins with Edge AI can detect fill levels and types of waste, optimizing collection routes and schedules, reducing operational costs, and promoting recycling.

- Environmental Monitoring: Edge sensors equipped with AI can monitor air quality, water levels, and noise pollution, providing real-time data for environmental protection and alerting authorities to critical changes.

- Smart Utilities: Edge AI can monitor power grids, water networks, and gas pipelines for anomalies, predict equipment failures, and optimize distribution, leading to more resilient and efficient utility services.

By bringing intelligence to streetlights, surveillance cameras, and utility meters, Edge AI makes cities more responsive, sustainable, and safer.

Healthcare and Wearables

Within healthcare, Edge AI is transforming patient care, diagnostics, and personal wellness, emphasizing privacy and immediate insights.

- Remote Patient Monitoring: Wearable devices and home sensors powered by Edge AI can continuously monitor vital signs, activity levels, and other health metrics. The AI can detect anomalies or critical changes in real-time, alerting patients or caregivers immediately, without sending all raw biometric data to the cloud.

- Point-of-Care Diagnostics: Portable medical devices can integrate Edge AI for rapid analysis of samples, providing quick diagnostic results in clinics, emergency rooms, or remote locations, accelerating treatment decisions.

- Assisted Living: Edge AI can monitor the movements and activities of elderly individuals in their homes, detecting falls or unusual behavior and sending alerts, while avoiding the privacy intrusion of continuously streaming video to the cloud.

- Personalized Wellness: Smartwatches and fitness trackers use Edge AI to analyze activity patterns, sleep quality, and heart rate variability, offering personalized recommendations for health improvement.

Edge AI makes healthcare more proactive, personalized, and accessible, often with enhanced privacy.

Challenges and Considerations in Edge AI Deployment

While the benefits of Edge AI are substantial, its deployment comes with its own set of unique challenges that require careful consideration and innovative solutions.

Hardware Constraints: Power, Size, and Cost

Edge devices, by definition, are characterized by their resource limitations, posing significant hurdles for AI implementation.

- Computational Power: Many edge devices have low-power processors and limited computational horsepower compared to cloud servers. This necessitates highly optimized AI models and efficient inference engines.

- Memory and Storage: RAM and local storage are often severely constrained, meaning AI models must be compact, and data retention policies must be carefully managed.

- Energy Consumption: For battery-powered or energy-harvesting edge devices (e.g., remote sensors), energy efficiency is paramount. AI workloads must be designed to consume minimal power.

- Form Factor and Durability: Edge devices can operate in harsh environments, requiring ruggedized hardware that can withstand extreme temperatures, vibrations, dust, and moisture, all while maintaining a compact size.

- Cost: The cost per unit must be low enough to enable large-scale deployment, placing further pressure on hardware and software optimization.

Addressing these hardware constraints is central to scalable and effective Edge AI solutions.

Model Optimization and Deployment Complexity

Optimizing AI models for edge deployment and managing their lifecycle presents considerable complexity.

- Balancing Accuracy and Efficiency: The trade-off between model accuracy and its computational efficiency (size, speed, power consumption) is a constant challenge. Achieving sufficient accuracy within edge constraints requires specialized techniques.

- Model Compression Techniques: Implementing and fine-tuning techniques like quantization, pruning, and knowledge distillation can be intricate and require specialized expertise.

- Heterogeneous Hardware: Edge AI solutions often need to run on a diverse range of hardware architectures from different vendors, each with unique capabilities and toolchains, complicating deployment and maintenance.

- Over-the-Air (OTA) Updates: Securely and reliably updating AI models and device firmware on potentially millions of distributed edge devices presents significant logistical and technical challenges.

- Model Versioning and Rollbacks: Managing different versions of AI models deployed across various devices and having robust rollback strategies is crucial for maintaining system stability.

Specialized tools and platforms are emerging to streamline the intricate process of edge model optimization and deployment.
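The versioning and rollback requirement can be sketched as a small state holder on the device: activate a new model, run a health check, and revert if the check fails. The class and method names below are hypothetical, and the health check is left as a caller-supplied function because what counts as healthy depends on the application.

```python
from typing import Callable, List, Optional


class ModelSlot:
    """Tracks the active model version and keeps history for rollback."""

    def __init__(self, initial_version: str) -> None:
        self._history: List[str] = [initial_version]

    @property
    def active(self) -> str:
        """The version currently in use."""
        return self._history[-1]

    def update(self, new_version: str, health_check: Callable[[str], bool]) -> bool:
        """Activate new_version; revert if the post-update check fails."""
        self._history.append(new_version)
        if health_check(new_version):
            return True
        self.rollback()
        return False

    def rollback(self) -> Optional[str]:
        """Return to the previous version, or None if there is none."""
        if len(self._history) < 2:
            return None
        self._history.pop()
        return self.active
```

A call such as `slot.update("1.2", health_check=run_smoke_test)` would leave the previous version active if the hypothetical `run_smoke_test` returned `False`. Keeping the previous model stored on the device is what makes the rollback possible without a network connection.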

Security and Privacy at the Edge

While Edge AI enhances privacy by keeping data local, it also introduces new security vulnerabilities and challenges that must be meticulously addressed.

- Physical Security: Edge devices are often deployed in physically exposed locations, making them susceptible to tampering, theft, or sabotage. Secure enclosures and tamper-detection mechanisms are vital.

- Data Protection: Even if data stays local, it must be protected against unauthorized access, manipulation, or leakage, both at rest and during processing on the edge device.

- Supply Chain Security: Ensuring the integrity of the hardware and software throughout the supply chain, from manufacturing to deployment, is critical to prevent the introduction of vulnerabilities.

- Vulnerability of AI Models: Edge AI models themselves can be vulnerable to adversarial attacks, where subtle perturbations in input data can lead an AI to make incorrect decisions. Protecting against such attacks is an active area of research.

- Compliance: Meeting stringent data privacy regulations (e.g., GDPR, HIPAA) mandates robust security measures across the entire Edge AI pipeline.

A multi-layered security strategy, encompassing hardware, software, and operational procedures, is essential for robust Edge AI.

Data Management and Cloud-Edge Synchronization

Even with local processing, data management and the selective synchronization between the edge and the cloud remain critical for a complete Edge AI solution.

- Data Aggregation and Summarization: Deciding what data to keep locally, what to aggregate, and what to send to the cloud requires intelligent policies. Over-transmitting data defeats the purpose of edge processing; under-transmitting can lead to a loss of valuable insights.

- Data Drift Monitoring: As environmental conditions or operational parameters change, the data characteristics at the edge can “drift,” potentially degrading the performance of deployed AI models. Mechanisms to detect and signal this drift back to the cloud for model retraining are crucial.

- Intermittent Connectivity: Edge devices may operate in environments with unreliable or intermittent network connectivity. Data synchronization mechanisms must be resilient, capable of queuing data, and resuming transmission when connectivity is restored.

- Data Integrity and Consistency: Ensuring data integrity and consistency across the distributed edge-cloud architecture, especially during synchronization, is a complex task.

- Edge Data Lakes: Managing and querying the vast amounts of preprocessed or aggregated data that might reside at the edge or local gateways can pose challenges for traditional data management tools.
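The drift monitoring item above can be made concrete with a simple statistic computed on the device. The sketch below uses the Population Stability Index (PSI) to compare a window of live feature values against a reference sample captured at training time. PSI is one common choice, not the only one, and the bin edges and any alert threshold are assumptions that would need tuning for a given sensor. The helper names are hypothetical.

```python
import math
from typing import List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Return the fraction of values in each bin defined by sorted edges.

    Values below the first edge fall into the first bin and values at or
    above the last edge fall into the last bin.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    total = max(len(values), 1)
    return [c / total for c in counts]


def psi(reference: Sequence[float], live: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between a reference and a live sample."""
    ref = [max(p, eps) for p in histogram(reference, edges)]
    cur = [max(p, eps) for p in histogram(live, edges)]
    # Sum over bins of (live - reference) * ln(live / reference).
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))
```

A device could compute `psi` once per window and send only the resulting score to the cloud, raising a retraining signal when the score stays above a chosen threshold. That keeps the drift check itself consistent with the goal of not over-transmitting raw data.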

Effective data governance and smart synchronization strategies are vital for optimizing the entire Edge AI ecosystem.
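For the intermittent connectivity case described above, a common pattern is a store-and-forward buffer. The sketch below is illustrative only: the class name `StoreAndForwardQueue` and the `send` callback are hypothetical, and the buffer lives in memory, whereas a real device would usually persist it to flash or disk so that queued records survive a reboot.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForwardQueue:
    """Bounded buffer that keeps records until an upload succeeds."""

    def __init__(self, send: Callable[[Dict], bool], max_items: int = 1000):
        # send() is assumed to return True only when the cloud accepted the record.
        self._send = send
        # With maxlen set, appending to a full deque discards the oldest record.
        self._buffer: Deque[Dict] = deque(maxlen=max_items)

    def enqueue(self, record: Dict) -> None:
        """Store a record locally; never blocks on the network."""
        self._buffer.append(record)

    def flush(self) -> int:
        """Upload records in order, stopping at the first failure."""
        sent = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # link is down; keep the record and retry later
            self._buffer.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._buffer)
```

A record is removed only after `send` reports success, so delivery is at-least-once and the receiving side should tolerate duplicates. Dropping the oldest records when the buffer is full is one policy among several; a deployment that values early readings more could drop the newest instead.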

The Future of Edge AI: Evolution and Expansion

Edge AI is still at a relatively early stage, and continuous innovation is driving its evolution. The future promises more sophisticated capabilities and broader adoption.

Advanced Edge Processors and Accelerators

The relentless advance in semiconductor technology will continue to yield more powerful, energy-efficient, and specialized edge AI processors and accelerators.

- Neuromorphic Computing: Inspired by the human brain, neuromorphic chips offer ultra-low power consumption and highly efficient AI processing, ideal for battery-constrained edge devices.

- Domain-Specific Architectures (DSAs): Further specialization in chip design will lead to DSAs optimized for specific AI workloads (e.g., computer vision, natural language processing) at the edge, offering higher performance per watt than general-purpose processors on those workloads.

- AI-Enabled MCUs: Even tiny microcontrollers (MCUs) are gaining AI capabilities, allowing very low-power inference on the smallest edge devices and extending intelligence to sensors and appliances that previously had none.

- More Powerful Edge Gateways: The capabilities of edge gateways and micro data centers will grow, enabling them to host more complex AI models and manage larger fleets of subordinate edge devices.

These hardware advancements will unlock new possibilities for what can be achieved at the edge.

Federated Learning and Collaborative AI at the Edge

Traditional machine learning often requires centralizing data for training. Federated learning offers a privacy-preserving alternative, which is particularly well-suited for Edge AI.

- Preserving Privacy: In federated learning, edge devices collaboratively train a shared global model without sharing their raw, sensitive data. Each device sends only model updates (such as gradients or weight deltas) to a central server, which aggregates the updates from many devices before applying them to the global model.

- Decentralized Training: This approach enables AI models to learn from diverse datasets distributed across many edge devices, while maintaining data locality and privacy.

- Collaborative Intelligence: Through the shared global model, each device benefits from patterns learned on other devices, which tends to produce more robust and better-generalized AI models.

- Reduced Data Transfer: Only model updates, which are typically much smaller than raw datasets, are exchanged, improving bandwidth efficiency.

Federated learning will play a crucial role in building intelligent systems that respect privacy and leverage distributed data effectively.
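The server-side aggregation step described above can be sketched in a few lines. The example below uses federated averaging (FedAvg), a widely used aggregation rule in which each client's weights are averaged in proportion to its number of local training samples. It is a simplified illustration: the function name `federated_average` is hypothetical, models are represented as flat lists of floats, and the client-side training loop, secure aggregation, and communication are all omitted.

```python
from typing import List, Sequence, Tuple


def federated_average(
    updates: Sequence[Tuple[List[float], int]]
) -> List[float]:
    """Combine client models into one global model.

    Each update is (weights, num_samples). Clients with more local data
    contribute proportionally more to the average.
    """
    if not updates:
        raise ValueError("at least one client update is required")
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(updates[0][0])
    global_weights = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all clients must send same-sized weight vectors")
        share = n / total_samples
        for i, w in enumerate(weights):
            global_weights[i] += w * share
    return global_weights
```

For instance, a client with weights `[1.0, 2.0]` trained on 1 sample and a client with weights `[3.0, 4.0]` trained on 3 samples average to `[2.5, 3.5]`. Only these small vectors cross the network; the samples themselves never leave the devices.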

Edge AI for Spatial Computing and the Metaverse

As spatial computing, augmented reality (AR), and the metaverse gain traction, Edge AI will be indispensable for delivering immersive and responsive experiences.

- Real-time Environmental Understanding: AR glasses and other spatial computing devices will use Edge AI to instantaneously understand their physical surroundings, map spaces, and track user movements and intentions.

- Low-Latency AI for Immersive Interactions: For truly immersive and believable metaverse experiences, AI-powered characters, objects, and environments must respond with imperceptible latency, which demands local Edge AI processing.

- Personalization and Contextual Awareness: Edge AI can personalize AR experiences based on individual user preferences, location, and real-time context, all while preserving privacy by processing sensitive user data locally.

- Offloading Cloud Computation: While the cloud will still play a role, Edge AI will take a significant share of the computational burden off cloud servers, especially for rendering and real-time interaction logic, making complex spatial experiences viable.

The convergence of Edge AI and spatial computing will redefine how humans interact with digital information and the physical world.

Explainable AI (XAI) at the Edge

As AI models become more pervasive and more influential in critical decisions, the need for transparency and explainability (XAI) grows, and it extends to the edge.

- Trust and Accountability: For applications in healthcare, finance, or autonomous systems, understanding “why” an AI made a particular decision is crucial for building trust, ensuring accountability, and debugging issues.

- Edge-Native XAI: Developing XAI techniques that can run efficiently on resource-constrained edge devices will be essential, generating explanations locally without requiring cloud intervention.

- Debugging and Performance Tuning: Local explanations can help engineers and operators diagnose why an AI model might be underperforming or making incorrect predictions in specific edge scenarios.

- Regulatory Compliance: Future regulations may mandate explainability for AI systems, making XAI at the edge a compliance necessity.

Integrating XAI capabilities directly into edge deployments will enhance the reliability and trustworthiness of autonomous intelligent systems.
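As an illustration of an explanation that can be generated locally, the sketch below applies a simple occlusion-style attribution: each input feature is replaced in turn with a baseline value, and the change in the model's output is recorded as that feature's contribution. This is one basic technique among many, chosen here because it needs no gradients or extra libraries. The function name `occlusion_attribution` and the choice of baseline values are assumptions for the example.

```python
from typing import Callable, List, Sequence


def occlusion_attribution(
    predict: Callable[[List[float]], float],
    features: Sequence[float],
    baseline: Sequence[float],
) -> List[float]:
    """Score each feature by the output change when it is set to its baseline.

    A positive score means the feature's actual value pushed the prediction
    up relative to the baseline; a negative score means it pushed it down.
    """
    if len(features) != len(baseline):
        raise ValueError("features and baseline must have the same length")
    original = predict(list(features))
    scores = []
    for i in range(len(features)):
        perturbed = list(features)
        perturbed[i] = baseline[i]  # occlude one feature at a time
        scores.append(original - predict(perturbed))
    return scores
```

The cost is one extra model evaluation per feature, which is a useful reminder of the trade-off on constrained hardware: explanations for high-dimensional inputs may need coarser groupings of features, or may be computed only for decisions that are flagged for review.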

Conclusion: The Era of Distributed Intelligence

Edge AI represents more than just a technological advancement; it signifies a fundamental paradigm shift in how we conceive and deploy artificial intelligence. By bringing intelligence directly to the source of data generation, it addresses the critical limitations of centralized cloud computing – latency, bandwidth, privacy, and operational resilience. The modern Edge AI pipeline, from data acquisition and local preprocessing to optimized model deployment and real-time inference, forms the backbone of remarkably agile and powerful intelligent systems.

The undeniable advantages of Edge AI – reduced latency, improved bandwidth efficiency, enhanced privacy, and autonomous operation – are not mere optimizations; they are architectural necessities for the intelligent future. From predictive maintenance in smart factories to life-saving decisions in autonomous vehicles, and from privacy-preserving healthcare to responsive smart cities, Edge AI is the driving force behind the next generation of technological innovation.

While challenges remain in hardware constraints, model optimization, and ensuring robust security, the continuous evolution of processors, the emergence of federated learning, and the integration with burgeoning fields like spatial computing promise an even more impactful and pervasive role for Edge AI. Decentralized intelligence is not a fleeting trend; it is the cornerstone of a connected world where real-time insights and autonomous capabilities redefine industries and improve lives.

To explore how your organization can harness the power of Edge AI to drive innovation, enhance efficiency, and build truly intelligent systems, reach out to our experts. We can help you navigate the complexities and unlock the full potential of distributed intelligence for your specific needs.

Contact us today to learn more: info@iotworlds.com