The Internet of Things (IoT) has ushered in an era of unprecedented connectivity, transforming industries, homes, and cities. From tiny environmental sensors to complex industrial control systems, IoT devices are becoming ubiquitous. However, this growth brings forth a paramount challenge: power efficiency. The ability of IoT devices to operate reliably for extended periods, often in remote or inaccessible locations, hinges entirely on their power consumption. In the world of electronic and embedded system design, power optimization is no longer a luxury but a fundamental requirement.

This comprehensive article delves into the critical strategies and techniques engineers employ to minimize power consumption in modern hardware architectures, ensuring that IoT systems are not only energy-efficient but also high-performing and sustainable. We will explore both hardware-centric and software-driven approaches, providing a holistic view of the power optimization landscape.

The Imperative of Power Efficiency in IoT

The pervasive nature of IoT devices means they often operate on limited power budgets, drawing energy from batteries, energy harvesting solutions, or small power grids. The longevity and reliability of these devices are directly tied to their power consumption. A device that constantly needs battery replacement or recharging is impractical and economically unsustainable for large-scale deployments. Moreover, inefficient power usage can lead to excessive heat generation, impacting device performance, reliability, and lifespan.

Consider the diverse applications of IoT: smart agricultural sensors monitoring soil conditions for months on a single charge, wearable health trackers operating continuously for days, or remote industrial sensors relaying critical data from harsh environments. In each scenario, power efficiency is the bedrock of functionality and feasibility. Without robust power optimization, the promise of IoT—its ability to provide real-time insights and automate processes at scale—would remain largely unfulfilled.

Understanding Power Consumption in Embedded Systems

To effectively optimize power, it’s crucial to understand its sources within an embedded system. Power consumption generally arises from two main components: dynamic power and static power.

Dynamic Power Consumption

Dynamic power is consumed when transistors switch between states (from on to off, or vice versa). This power is directly proportional to the switching frequency, the square of the supply voltage, and the capacitance being switched.

$$P_{\text{dynamic}} \propto C \cdot V^{2} \cdot f$$

Where:

- C is the switching capacitance.

- V is the supply voltage.

- f is the switching frequency.

Minimizing any of these factors can significantly reduce dynamic power.
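To get a feel for the proportionality above, here is a minimal sketch that computes how dynamic power changes when the factors are scaled. The function name and the example operating points (1.2 V to 0.9 V, 100 MHz to 50 MHz) are illustrative assumptions, not figures for any particular chip.

```python
def dynamic_power_ratio(v_scale: float, f_scale: float, c_scale: float = 1.0) -> float:
    """Return P_new / P_old, assuming P is proportional to C * V^2 * f."""
    return c_scale * v_scale ** 2 * f_scale


# Hypothetical change of operating point: 1.2 V -> 0.9 V and 100 MHz -> 50 MHz.
ratio = dynamic_power_ratio(v_scale=0.9 / 1.2, f_scale=50e6 / 100e6)
print(f"Dynamic power falls to {ratio:.1%} of the original")
```

In this example, halving the frequency alone would halve dynamic power; the additional voltage reduction brings it down to roughly 28% of the original, which is why voltage is the most valuable lever of the three.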

Static Power Consumption

Static power, also known as leakage power, is consumed even when transistors are not actively switching. This current leaks through transistors due to various physical phenomena, even in their “off” state. As transistors scale down in size, static leakage becomes a more prominent concern, especially in deep submicron technologies. While dynamic power often dominates in devices with high activity, static power can become a significant contributor in idle or low-activity states, especially as technology nodes shrink.

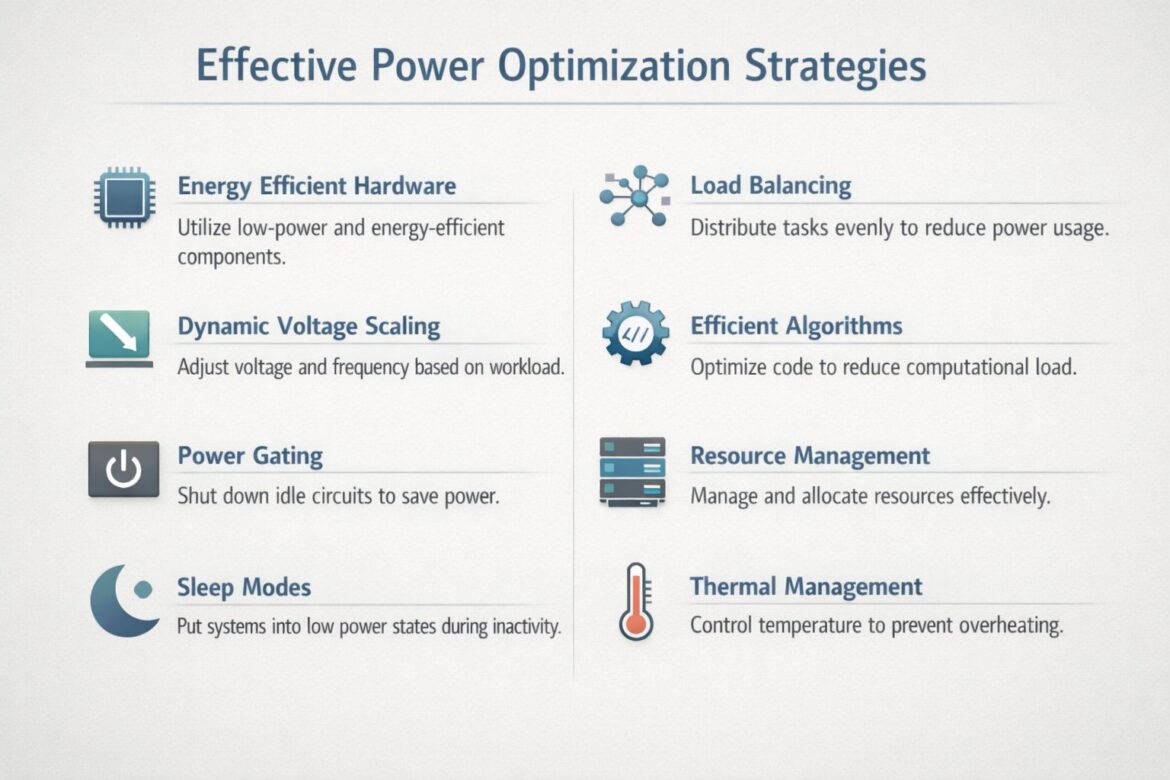

Hardware-Centric Power Optimization Strategies

Optimizing power consumption begins at the foundational level: the hardware. Selecting the right components and designing efficient circuits are critical first steps.

Energy-Efficient Hardware Design

The choice of hardware components plays a pivotal role in overall power consumption. Engineers must prioritize low-power, energy-efficient components from the outset of the design process.

Low-Power Microcontrollers and Processors

Modern microcontrollers (MCUs) and System-on-Chips (SoCs) designed for IoT applications often incorporate multiple low-power modes, optimized architectures, and specialized peripherals to reduce energy consumption. Features such as smaller process nodes (e.g., 28nm, 22nm, 14nm), specialized low-power cores (e.g., ARM Cortex-M series for microcontrollers, or RISC-V architectures optimized for power efficiency), and integrated power management units (PMUs) are crucial.

Designers should carefully evaluate the power consumption profiles of different MCUs under various operational loads, including active, idle, and sleep states. The trade-off between processing power and energy efficiency is a key consideration.

Optimized Memory Architectures

Memory can be a significant power consumer, especially embedded memory like SRAM and Flash. Using memory technologies with lower leakage currents and optimizing memory access patterns can yield substantial savings. Techniques include:

- Minimizing Memory Accesses: Reducing the frequency and volume of data transfers to and from memory.

- Using Lower Power Memory Types: Exploring emerging memory technologies with inherent lower power characteristics.

- Power Gating Memory Blocks: Independently shutting down unused memory blocks.

Efficient Communication Modules

Wireless communication (Wi-Fi, Bluetooth, LoRa, Zigbee, cellular) is often the most power-hungry component in many IoT devices. Selecting communication modules that are highly optimized for power efficiency is crucial.

- Low-Power Wireless Protocols: Utilizing protocols like Bluetooth Low Energy (BLE), LoRaWAN, and Zigbee, which are designed for low-power communication. BLE and Zigbee target short-range links, while LoRaWAN targets long range at low data rates.

- Duty Cycling: Transmitting data intermittently rather than continuously. This involves keeping the radio module in a low-power state for most of the time and only powering it up for short bursts to send or receive data.

- Optimized Antenna Design: Ensuring efficient antenna performance minimizes the power required for signal transmission and reception.
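The effect of duty cycling on battery life can be estimated with a few lines of arithmetic. The sketch below is a first-order model only: the current figures, the 1000 mAh capacity, and the function names are assumptions chosen for illustration, and the model ignores battery self-discharge and wake-up transients.

```python
def average_current_ma(active_ma: float, sleep_ma: float,
                       active_s: float, period_s: float) -> float:
    """Time-weighted average current for a radio that is active for
    active_s seconds out of every period_s seconds."""
    duty = active_s / period_s
    return active_ma * duty + sleep_ma * (1.0 - duty)


def battery_life_days(capacity_mah: float, avg_ma: float) -> float:
    """Idealized battery life (no self-discharge, full capacity usable)."""
    return capacity_mah / avg_ma / 24.0


# Hypothetical radio: 20 mA while transmitting for 0.1 s once a minute,
# 5 uA (0.005 mA) while asleep, powered from a 1000 mAh cell.
avg = average_current_ma(active_ma=20.0, sleep_ma=0.005, active_s=0.1, period_s=60.0)
print(f"average current: {avg:.4f} mA")
print(f"estimated life:  {battery_life_days(1000.0, avg):.0f} days")
```

With these assumed numbers the average current is well under 0.1 mA, compared with 20 mA for an always-on radio, so the sleep current and the transmit burst length become the figures worth optimizing.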

Specialized Sensors and Actuators

Sensors and actuators, while often consuming less power than the main processing unit or communication module, contribute to the overall power budget. Choosing low-power variants, optimizing their sampling rates, and employing intelligent data acquisition strategies can further reduce consumption. For instance, instead of continuous measurement, a sensor might wake up periodically, take a reading, and then return to a low-power state.

Dynamic Voltage Scaling (DVS) and Dynamic Frequency Scaling (DFS)

Dynamic Voltage and Frequency Scaling (DVFS), whose two halves are also known individually as DVS and DFS, is a cornerstone of modern power management. It allows the system to dynamically adjust the supply voltage and operating frequency of the processor and other components based on the current workload.

How DVS/DFS Works

As shown in the dynamic power consumption formula, power is proportional to the square of the voltage and directly proportional to the frequency. By reducing the voltage and frequency when the workload is light, power consumption can be drastically reduced.

- Voltage Scaling: Lowering the supply voltage significantly reduces dynamic power due to the quadratic relationship ($V^2$). However, reducing voltage necessitates a corresponding reduction in operating frequency, as lower voltages provide less headroom for transistors to switch quickly.

- Frequency Scaling: Reducing the clock frequency directly reduces dynamic power and typically allows for a lower operating voltage.

Modern processors and MCUs incorporate sophisticated power management units that monitor workload and dynamically adjust DVFS parameters. This ensures that the device only consumes the necessary power for the task at hand, preventing wasteful over-provisioning of power.

Implementation Considerations

Implementing DVS/DFS requires:

- Voltage Regulators: Efficient DC-DC converters or Low-Dropout (LDO) regulators that can dynamically adjust output voltage.

- Software Control: Operating systems or firmware that can sense workload and issue commands to change voltage and frequency settings.

- Careful Profiling: Understanding the power-performance trade-offs at different voltage and frequency points to ensure system stability and meet performance requirements.
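The software-control piece can be sketched as a lookup over a table of validated operating points. Everything in this example is hypothetical: the `OPP_TABLE` values, the `select_opp` helper, and the idea of expressing workload as a required clock rate are assumptions made for illustration. A real system would take its operating points from the chip vendor's characterization data.

```python
from typing import List, NamedTuple


class OperatingPoint(NamedTuple):
    freq_mhz: float
    volt: float


# Hypothetical voltage/frequency pairs, sorted by ascending frequency.
OPP_TABLE: List[OperatingPoint] = [
    OperatingPoint(24.0, 0.9),
    OperatingPoint(48.0, 1.0),
    OperatingPoint(96.0, 1.2),
]


def select_opp(required_mhz: float,
               table: List[OperatingPoint] = OPP_TABLE) -> OperatingPoint:
    """Pick the slowest operating point that still meets the workload.
    Falls back to the fastest point if the demand exceeds the table."""
    for opp in table:
        if opp.freq_mhz >= required_mhz:
            return opp
    return table[-1]


def relative_power(opp: OperatingPoint,
                   ref: OperatingPoint = OPP_TABLE[-1]) -> float:
    """Dynamic power relative to the reference point, using P ~ V^2 * f."""
    return (opp.volt / ref.volt) ** 2 * (opp.freq_mhz / ref.freq_mhz)


light_load = select_opp(10.0)
print(light_load, f"{relative_power(light_load):.3f}")
```

Choosing the slowest sufficient point is only one possible policy. Some designs instead run fast and then sleep ("race to idle"), and profiling decides which approach uses less energy on a given device.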

Power Gating

Power gating is a technique used to cut off the power supply to inactive blocks of a circuit, significantly reducing static (leakage) power consumption. Instead of just clock gating (which we’ll discuss next), power gating physically disconnects the power rails to parts of the chip that are not needed.

How Power Gating Works

Power gating typically involves inserting an “enable” switch (often a header or footer switch, usually a high-threshold voltage transistor) in the power path of a block. When the block is not in use, the switch is turned off, cutting off power to the block. This eliminates its dynamic power and reduces its leakage to the small residual current that still flows through the switch itself.

Types of Power Gating

- Coarse-Grain Power Gating: Applied to large functional blocks (e.g., entire processing cores, memory modules, peripherals). The power-down and power-up sequences can take longer, making it suitable for blocks that remain idle for extended periods.

- Fine-Grain Power Gating: Applied to smaller sub-blocks or even individual gates within a functional unit. This offers more precise control but incurs higher overhead in terms of control logic and area.

Challenges and Considerations

- Wake-up Latency: Bringing a power-gated block back online takes time as power needs to be restored, and the block might need to be reinitialized.

- State Retention: The state of the power-gated block is lost. Mechanisms like state retention flip-flops or saving state to non-gated memory are required if the state needs to be preserved.

- In-rush Current: When a large block is powered up, it can draw a significant in-rush current, potentially causing voltage drops on the power rails, which needs careful design consideration.
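Because powering a block down and back up costs energy and time, power gating only pays off when the block stays idle long enough. The sketch below captures that break-even reasoning. The parameter names and numbers are illustrative assumptions, and the model treats the power-cycle overhead as a single lumped energy figure.

```python
def break_even_idle_s(overhead_uj: float, leakage_saved_uw: float) -> float:
    """Idle time at which the leakage saved equals the energy spent on
    powering down, restoring state, and powering up (uJ / uW = s)."""
    return overhead_uj / leakage_saved_uw


def should_power_gate(expected_idle_s: float, overhead_uj: float,
                      leakage_saved_uw: float, wake_latency_s: float,
                      max_latency_s: float) -> bool:
    """Gate only if the wake-up latency is tolerable and the expected
    idle period exceeds the break-even time."""
    if wake_latency_s > max_latency_s:
        return False
    return expected_idle_s > break_even_idle_s(overhead_uj, leakage_saved_uw)


# Hypothetical block: 50 uJ per power cycle, 10 uW of leakage saved.
print(break_even_idle_s(50.0, 10.0))  # break-even idle time in seconds
print(should_power_gate(10.0, 50.0, 10.0, wake_latency_s=0.001, max_latency_s=0.01))
```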

Sleep Modes and Low-Power States

Virtually all modern embedded systems and IoT devices heavily rely on various sleep modes to achieve ultra-low power consumption when inactive. These modes allow the device to enter a low-power state, consuming minimal energy, and then quickly wake up when an event occurs or a task needs to be performed.

Common Sleep Modes

- Idle Mode: The CPU stops processing instructions, but peripherals and memory remain powered and possibly clocked. This allows for quick wake-up with minimal latency.

- Sleep Mode (Light Sleep): More components are shut down than in idle mode, often including the main CPU clock. Peripherals might still be active and capable of generating interrupts to wake up the system.

- Deep Sleep Mode (Standby/Hibernate): This is the lowest power state short of switching the device off. Most of the system, including the CPU, memory, and many peripherals, is powered down. Only vital components (e.g., a real-time clock, external interrupt lines) remain active. Wake-up from deep sleep is typically the slowest of the sleep modes but offers the greatest power savings.

- Off Mode: The device is essentially powered off, consuming almost no power (except for potentially a small amount in a power button or battery management unit). Restarting from this state is equivalent to a cold boot.

Smart Wake-up Mechanisms

Effective use of sleep modes requires intelligent wake-up mechanisms. These can include:

- Real-time Clock (RTC) Alarms: Waking up the device at predetermined intervals for periodic tasks (e.g., sensor readings, data transmission).

- External Interrupts: Responding to events from external sensors, buttons, or communication modules.

- Internal Peripherals: Timers, Analog-to-Digital Converters (ADCs), or other integrated peripherals can be configured to generate interrupts upon specific conditions.

The key is to minimize the time spent in active mode and maximize the time spent in the lowest possible power state while still meeting application requirements.
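One way to express "the lowest possible power state that still meets requirements" is as a selection over the available modes. The mode names, currents, and wake-up latencies below are invented for illustration and do not describe any specific microcontroller; the selection rule is a simple sketch, not a complete power manager.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class SleepMode:
    name: str
    current_ua: float       # current drawn while in this mode
    wake_latency_ms: float  # time needed to return to active operation


# Hypothetical figures, ordered from shallowest to deepest.
MODES: List[SleepMode] = [
    SleepMode("idle", 1500.0, 0.01),
    SleepMode("light_sleep", 200.0, 0.5),
    SleepMode("deep_sleep", 5.0, 5.0),
]


def pick_sleep_mode(idle_ms: float, max_wake_ms: float,
                    modes: List[SleepMode] = MODES) -> Optional[SleepMode]:
    """Return the lowest-current mode whose wake-up latency fits both the
    application's latency limit and the expected idle window."""
    candidates = [
        m for m in modes
        if m.wake_latency_ms <= max_wake_ms and m.wake_latency_ms < idle_ms
    ]
    if not candidates:
        return None  # idle window too short: stay active
    return min(candidates, key=lambda m: m.current_ua)


print(pick_sleep_mode(idle_ms=1000.0, max_wake_ms=10.0))
print(pick_sleep_mode(idle_ms=1000.0, max_wake_ms=1.0))
```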

Clock Gating

Clock gating is a power-saving technique used in synchronous digital circuits to reduce dynamic power dissipation by disabling the clock signal to parts of the circuit that are not currently in use.

How Clock Gating Works

Instead of constantly toggling the clock for all flip-flops and registers in a design, clock gating inserts AND or OR gates into the clock path. In practice, the gate is usually paired with a latch (an integrated clock-gating cell) so that changes on the enable signal cannot produce glitches on the gated clock. When a particular functional block is idle, the clock signal to that block is gated (disabled), preventing the flip-flops within that block from switching. This effectively stops the dynamic power consumption in that logic block without affecting its stored state.

Benefits of Clock Gating

- Significant Power Reduction: Eliminates dynamic power consumption associated with clocking inactive sequential elements.

- Relatively Simple Implementation: Can be inferred by synthesis tools or explicitly designed into the RTL.

- Preserves State: Unlike power gating, clock gating does not cut off power, so the state of the circuit is retained.

Considerations

- Clock Skew: Improperly implemented clock gating can introduce clock skew, potentially leading to functional errors. Careful design and verification are essential.

- Gating Logic Overhead: The gating logic itself consumes a small amount of power and adds gate delay. The power savings must outweigh this overhead.
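The behavior described above can be illustrated with a small software model. This is a behavioral sketch only, not RTL: the `GatedRegister` class is a hypothetical stand-in that counts how many clock edges actually reach a register when its clock is gated by an enable signal.

```python
class GatedRegister:
    """Behavioral model of a register behind a clock gate."""

    def __init__(self, value: int = 0) -> None:
        self.value = value
        self.clocked_edges = 0  # edges that reached the register

    def tick(self, enable: bool, data: int) -> None:
        if not enable:
            # Clock is gated: no edge arrives, no switching, state is held.
            return
        self.clocked_edges += 1
        self.value = data


# Simulate 100 clock cycles in which the block is busy one cycle in ten.
reg = GatedRegister()
for cycle in range(100):
    reg.tick(enable=(cycle % 10 == 0), data=cycle)

print(reg.clocked_edges, reg.value)
```

In this run only 10 of the 100 clock edges reach the register, and the last value written is still held afterwards, which mirrors the two properties listed above: reduced clock activity and preserved state.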

Thermal Management

While often overlooked in direct power optimization discussions, effective thermal management is intrinsically linked to power efficiency. A hot chip leaks more current, so it draws more power for the same work, and it may trigger thermal throttling, where the system reduces its clock speed or voltage to cool down at the cost of performance.

The Relationship Between Temperature and Power

- Increased Leakage Current: As temperature rises, the static leakage current in transistors increases exponentially, leading to higher static power consumption.

- Reduced Reliability and Lifespan: Prolonged operation at high temperatures significantly degrades the reliability and shortens the lifespan of electronic components.

- Performance Throttling: Many devices implement thermal management systems that automatically reduce clock frequency or voltage (effectively applying DVFS) when a certain temperature threshold is crossed to prevent damage. While this saves the device, it comes at the cost of reduced performance.
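Threshold-based throttling is commonly implemented with hysteresis so that the system does not flip rapidly between states near the limit. The sketch below shows that idea in isolation. The 85 °C trip point and 75 °C release point are arbitrary example values, and `next_throttle_state` is a hypothetical helper rather than part of any real thermal framework.

```python
def next_throttle_state(temp_c: float, throttled: bool,
                        trip_c: float = 85.0, release_c: float = 75.0) -> bool:
    """Return whether the system should be throttled after this reading.
    Throttling starts at trip_c and only ends once the temperature has
    fallen to release_c, which avoids oscillating around one threshold."""
    if temp_c >= trip_c:
        return True
    if temp_c <= release_c:
        return False
    return throttled  # inside the hysteresis band: keep the current state


state = False
history = []
for reading in [70.0, 80.0, 86.0, 80.0, 76.0, 74.0]:
    state = next_throttle_state(reading, state)
    history.append(state)
print(history)
```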

Strategies for Thermal Management

- Efficient Heat Dissipation: Using heat sinks, fans (for larger devices), and efficient PCB layouts to facilitate heat transfer away from hot spots.

- Component Placement: Arranging heat-generating components strategically to avoid localized hot spots and allow for better airflow.

- Operating Environment: Considering the ambient temperature of the deployment environment during design.

- Power Optimization as a Thermal Strategy: By minimizing power consumption through other methods (DVS, clock gating, efficient hardware), less heat is generated in the first place, simplifying thermal management.

Software and System-Level Power Optimization Strategies

Beyond hardware, intelligent software design, system architecture, and resource management play an equally vital role in achieving optimal power efficiency.

Efficient Algorithms and Code Optimization

The way software is written and algorithms are designed has a profound impact on power consumption. Inefficient code leads to more clock cycles, more memory accesses, and longer active times for the processor, all of which translate to higher power draw.

Key Principles

- Algorithm Selection: Choose algorithms that have lower computational complexity and better asymptotic time and space efficiency. For example, selecting an $O(n \log n)$ sorting algorithm over an $O(n^2)$ algorithm for large datasets can drastically reduce processing time and energy.

- Code Optimization:

- Minimize CPU Cycles: Write concise and efficient code that performs critical tasks in the fewest possible instructions. Avoid unnecessary computations, loops, and function calls.

- Reduce Memory Accesses: Memory operations are energy intensive. Optimize data structures and algorithms to minimize fetching data from memory, especially off-chip memory.

- Avoid Busy-Waiting: Instead of continuously polling for an event (busy-waiting), use interrupt-driven programming or sleep modes with appropriate wake-up mechanisms.

- Compiler Optimizations: Utilize compiler optimization flags (e.g., -O2, or -Os for size optimization, which often leads to power optimization) to generate more efficient machine code.

- Data Type Selection: Use the smallest data types possible that can hold the required information to reduce memory footprint and bus traffic.

- Fixed-Point vs. Floating-Point Arithmetic: Where precision allows, fixed-point arithmetic can be significantly more power-efficient than floating-point arithmetic, as specialized floating-point units consume more power.
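As an illustration of the fixed-point principle, here is a minimal sketch of Q15 arithmetic, a common format that stores values in the range [-1, 1) as 16-bit integers scaled by 2^15. The helper names are assumptions for this example. Python integers do not overflow, so the sketch omits the saturation handling and the 32-bit intermediate that a C implementation on a microcontroller would need.

```python
Q = 15  # number of fractional bits in the Q15 format


def to_q15(x: float) -> int:
    """Convert a real value in [-1, 1) to its Q15 integer representation."""
    return int(round(x * (1 << Q)))


def from_q15(q: int) -> float:
    """Convert a Q15 integer back to a real value."""
    return q / (1 << Q)


def q15_mul(a: int, b: int) -> int:
    """Multiply two Q15 values using integer operations only.
    Adding half an LSB before the shift rounds the result to nearest."""
    return (a * b + (1 << (Q - 1))) >> Q


product = q15_mul(to_q15(0.5), to_q15(0.25))
print(product, from_q15(product))
```

The multiplication uses one integer multiply, one add, and one shift, all of which are available on cores without a floating-point unit.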

Example: Edge Computing Optimization

In edge computing scenarios, where data is processed closer to its source, efficient algorithms are paramount. Instead of sending raw sensor data to the cloud for analysis (which consumes power for communication), local processing can filter out redundancies, aggregate data, or perform initial analytics, sending only relevant information. This reduces transmission time and frequency, thereby saving significant power.
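A simple form of such local filtering is "report on change": the device transmits a reading only when it differs from the last transmitted value by more than a threshold. The function below is a sketch of that idea. The name `report_on_change` and the sample temperature values are illustrative assumptions.

```python
from typing import List, Optional


def report_on_change(readings: List[float], threshold: float) -> List[float]:
    """Return only the readings worth transmitting: the first one, plus any
    reading that differs from the last transmitted value by >= threshold."""
    sent: List[float] = []
    last: Optional[float] = None
    for value in readings:
        if last is None or abs(value - last) >= threshold:
            sent.append(value)
            last = value
    return sent


samples = [20.0, 20.1, 20.2, 21.0, 21.1, 19.9]
to_send = report_on_change(samples, threshold=0.5)
print(to_send)
print(f"transmissions avoided: {len(samples) - len(to_send)} of {len(samples)}")
```

Here three of the six readings are suppressed. The right threshold depends on the application's accuracy requirements, so it is a tuning parameter rather than a fixed value.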

Resource Management

Effective resource management involves intelligently controlling and allocating computational and peripheral resources to ensure that only necessary components are active and that they operate at their optimal power-performance point.

Dynamic Resource Allocation

- Peripheral Management: Only power up peripherals (e.g., ADC, SPI, I2C, UART) when they are actively needed. Many microcontrollers allow individual peripherals to be enabled or disabled via register-level control.

- Task Scheduling: Implement intelligent task schedulers that prioritize critical tasks and allow non-critical tasks to be deferred or performed during periods of lower system activity. This enables the system to spend more time in lower power states.

- Workload Balancing: Distribute computational tasks across available processing units (e.g., multiple CPU cores, specialized accelerators) in a way that optimizes for power. For instance, offloading specific tasks to a low-power digital signal processor (DSP) or dedicated hardware accelerator can be more energy-efficient than executing them on the main CPU.
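Peripheral management is often structured so that a peripheral is enabled only while some piece of code is using it. The sketch below models this with a reference count. The `Peripheral` class is hypothetical, and the `enabled` flag stands in for whatever clock-enable or power-enable register bit the real hardware provides.

```python
from contextlib import contextmanager


class Peripheral:
    """Tracks users of a peripheral and disables it when nobody needs it."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.users = 0
        self.enabled = False  # stand-in for a hardware enable bit

    @contextmanager
    def in_use(self):
        self.users += 1
        self.enabled = True  # on real hardware: set the enable bit
        try:
            yield self
        finally:
            self.users -= 1
            if self.users == 0:
                self.enabled = False  # last user gone: switch it off


adc = Peripheral("adc")
with adc.in_use():
    enabled_during = adc.enabled  # peripheral is powered while in use
enabled_after = adc.enabled       # and released afterwards
print(enabled_during, enabled_after)
```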

Power Profiles and Modes

Operating systems and embedded frameworks often provide mechanisms to define and switch between different power profiles or modes (e.g., “Performance Mode,” “Balanced Mode,” “Power Save Mode”). These profiles configure various parameters like CPU frequency, display brightness, peripheral activity, and sleep mode aggressiveness to match the current user or application requirements with optimal power consumption.

Load Balancing

Load balancing, traditionally associated with server farms and network traffic, also finds relevance in the context of power optimization for distributed IoT systems and multi-core embedded devices.

Distributing Tasks in Multi-Core Systems

In devices with multiple processing cores (e.g., a system-on-chip with an application processor and a low-power microcontroller), intelligently distributing tasks can lead to power savings.

- Dedicated Low-Power Cores: Offloading simple, periodic tasks (e.g., sensor monitoring, basic communication) to a dedicated low-power core allows the main, more powerful core to remain in a deep sleep state for longer periods.

- Dynamic Task Migration: Migrating tasks between cores based on their power profiles and the current system load can ensure that cores are operating efficiently. For example, a computationally intensive burst might be handled by the high-performance core, which then goes to sleep, while background tasks are handled by a more energy-efficient core.

Distributing Tasks in Distributed IoT Networks

In a network of IoT devices, load balancing can involve:

- Relay Node Optimization: In mesh networks, efficiently routing data through nodes to minimize each node’s transmission power and avoid over-burdening specific nodes.

- Sensor Network Balancing: Distributing sensing and data collection tasks among multiple redundant sensors to extend the overall network lifetime. If one sensor is overused and drains its battery, others can take over.

- Edge-Cloud Workload Distribution: Deciding which computations are performed at the edge device and which are offloaded to the cloud. This balancing act needs to consider data volume, processing power available at the edge, network bandwidth, and the energy cost of communication versus local computation.
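The edge-versus-cloud decision can be framed as a comparison of two energy costs: sending the raw data, or computing locally and sending only the result. The sketch below makes that comparison explicit. The per-byte radio cost, the fixed per-transmission overhead, and the compute energy are all assumed inputs that would have to come from measurements on the actual device.

```python
def transmit_energy_mj(payload_bytes: int, energy_per_byte_uj: float,
                       overhead_mj: float = 0.0) -> float:
    """Energy to send a payload: fixed radio overhead plus a per-byte cost."""
    return overhead_mj + payload_bytes * energy_per_byte_uj / 1000.0


def cheaper_to_process_locally(raw_bytes: int, result_bytes: int,
                               compute_mj: float, energy_per_byte_uj: float,
                               overhead_mj: float = 0.0) -> bool:
    """True if computing at the edge and sending the result costs less
    energy than sending the raw data for cloud processing."""
    offload = transmit_energy_mj(raw_bytes, energy_per_byte_uj, overhead_mj)
    local = compute_mj + transmit_energy_mj(result_bytes, energy_per_byte_uj, overhead_mj)
    return local < offload


# Hypothetical costs: 2 uJ per byte, 1 mJ per transmission, 5 mJ to compute.
print(cheaper_to_process_locally(10_000, 20, 5.0, 2.0, 1.0))  # large raw payload
print(cheaper_to_process_locally(100, 20, 5.0, 2.0, 1.0))     # small raw payload
```

With these assumed numbers, local processing wins for the 10 kB payload and loses for the 100-byte one. This model covers energy only; latency, bandwidth, and the processing power available at the edge would also factor into a real decision.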

Power Management ICs (PMICs)

Power Management Integrated Circuits (PMICs) are specialized chips designed to manage power flow within an electronic device. They integrate various power management functions into a single IC, playing a crucial role in improving power efficiency and battery life.

Key Functions of PMICs

- Voltage Regulation: PMICs incorporate various forms of voltage regulators, including:

- Buck Converters (Step-Down DC-DC): Efficiently convert a higher input voltage to a lower output voltage.

- Boost Converters (Step-Up DC-DC): Convert a lower input voltage to a higher output voltage.

- Low-Dropout (LDO) Regulators: Provide very stable and clean output voltage but are less efficient than buck/boost converters when there’s a large voltage difference.

- Dynamic Voltage Frequency Scaling (DVFS) Support: Many PMICs are designed to work in conjunction with the main processor to dynamically adjust supply voltages based on workload.

- Battery Charging and Management: For battery-powered devices, PMICs manage the charging process, monitor battery health, and protect against overcharge or over-discharge.

- Power Sequencing: Control the order in which different power rails are turned on and off during system startup and shutdown to ensure stable operation and prevent component damage.

- Load Switching: Enable or disable power to specific functional blocks or peripherals, supporting power gating techniques.

- Power Monitoring: Provide telemetry on voltage, current, and power consumption, which can be used by the system for intelligent power management decisions.
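The efficiency gap between an LDO and a switching converter follows from how an LDO works: it drops the excess voltage across a pass element, so its efficiency cannot exceed the ratio of output to input voltage. The sketch below applies that bound. The 3.7 V input, 1.8 V rail, and 90% buck efficiency are assumed example figures, and the LDO's quiescent current is ignored.

```python
def ldo_efficiency(v_in: float, v_out: float) -> float:
    """Upper bound on LDO efficiency, ignoring quiescent current."""
    if v_out > v_in:
        raise ValueError("an LDO can only step the voltage down")
    return v_out / v_in


def input_power_mw(load_mw: float, efficiency: float) -> float:
    """Power drawn from the source to deliver load_mw at a given efficiency."""
    return load_mw / efficiency


# Hypothetical case: a 3.7 V cell feeding a 1.8 V rail with a 90 mW load.
ldo_eff = ldo_efficiency(3.7, 1.8)
buck_eff = 0.90  # assumed figure for a switching converter
print(f"LDO:  {ldo_eff:.1%} efficient, draws {input_power_mw(90.0, ldo_eff):.0f} mW")
print(f"Buck: {buck_eff:.1%} efficient, draws {input_power_mw(90.0, buck_eff):.0f} mW")
```

When input and output voltages are close, the LDO bound approaches 100%, which is one reason LDOs remain attractive for small voltage drops and noise-sensitive rails.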

Benefits of Using PMICs

- Improved Efficiency: Optimized power conversion leads to less energy waste.

- Reduced Footprint: Consolidating multiple power management functions into one chip saves board space.

- Enhanced Battery Life: Intelligent power management extends the operational time of battery-powered devices.

- Simplified Design: PMICs provide a ready-made solution for complex power management requirements.

Advanced Power Optimization Techniques and Future Trends

The field of power optimization is continuously evolving, driven by the increasing demand for longer battery life and higher performance in increasingly compact form factors.

Energy Harvesting

For many IoT devices, particularly those in remote locations, replacing batteries is impractical or impossible. Energy harvesting offers a compelling solution by converting ambient energy into usable electrical power.

Types of Energy Harvesting

- Solar Energy: Photovoltaic cells convert light into electricity. Highly effective outdoors or in well-lit indoor environments.

- Thermal Energy: Thermoelectric generators (TEGs) convert temperature differences into electricity (e.g., from machinery exhaust, body heat).

- Vibration/Kinetic Energy: Piezoelectric materials convert mechanical vibrations or movement into electricity.

- Radio Frequency (RF) Energy: Harvesting energy from ambient RF signals (e.g., Wi-Fi, cellular). This typically provides very low power but can be sufficient for ultra-low power devices.

Challenges and Considerations

- Low Power Density: Ambient energy sources often have low power density, meaning only small amounts of power can be harvested.

- Intermittency: Harvesters are dependent on environmental conditions (e.g., sunlight, vibrations) and may not provide continuous power.

- Energy Storage: Requires efficient energy storage solutions (e.g., supercapacitors, small rechargeable batteries) to bridge periods when harvested energy is unavailable.

- Power Management: Specialized power management circuitry is needed to efficiently extract, convert, and store harvested energy and to manage power delivery to the device.
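A useful first check for a harvesting design is whether the average harvested power can sustain the intended duty cycle. The sketch below solves for the largest sustainable duty cycle under a simple average-power model. All inputs, including the 80% conversion efficiency default, are assumptions for illustration, and the model presumes storage is large enough to smooth out intermittency.

```python
def max_duty_cycle(harvest_uw: float, active_uw: float, sleep_uw: float,
                   efficiency: float = 0.8) -> float:
    """Largest fraction of time the device can be active such that average
    consumption does not exceed the usable harvested power."""
    if active_uw <= sleep_uw:
        raise ValueError("active power must exceed sleep power")
    usable_uw = harvest_uw * efficiency
    if usable_uw <= sleep_uw:
        return 0.0  # harvest cannot even cover sleep consumption
    duty = (usable_uw - sleep_uw) / (active_uw - sleep_uw)
    return min(duty, 1.0)


# Hypothetical node: 100 uW harvested, 30 mW active, 10 uW asleep.
duty = max_duty_cycle(harvest_uw=100.0, active_uw=30_000.0, sleep_uw=10.0)
print(f"sustainable duty cycle: {duty:.3%}")
```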

Non-Volatile Memory (NVM) and Computation-in-Memory

Traditional memory technologies (like SRAM and DRAM) consume static power even to retain data. Emerging non-volatile memory (NVM) technologies offer the promise of retaining data without continuous power, which can lead to significant power savings, especially in data-intensive IoT applications.

NVM Benefits

- Zero Standby Power: NVM retains data even when power is removed, eliminating static power consumption for data retention.

- Faster Wake-up: Systems can resume operation almost instantly without needing to reload data from slower, higher-power storage.

Computation-in-Memory (CIM) / Processing-in-Memory (PIM)

This paradigm shifts computation closer to or directly within memory, reducing the energy-intensive data movement between processor and memory. By performing certain operations (e.g., matrix multiplications for AI/ML inferences) directly within the memory fabric, the “memory wall” bottleneck and associated power consumption for data transfers can be significantly reduced. While still in research phases, CIM/PIM holds immense potential for ultra-low-power edge AI applications.

Artificial Intelligence (AI) and Machine Learning (ML) for Power Management

AI and ML algorithms are increasingly being used to optimize power consumption in complex embedded systems.

Predictive Power States

ML models can analyze historical usage patterns, environmental conditions, and application requirements to predict future workloads and intelligently transition the system into optimal power states proactively, rather than reactively. For example, an IoT device monitoring air quality might learn to anticipate periods of low activity and autonomously enter deep sleep, only to wake up for crucial data points or expected events.
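As a deliberately simple stand-in for a learned model, the sketch below predicts the next idle period with an exponentially weighted moving average of past idle periods. This is a basic statistical estimator, not a trained ML model, and the `IdlePredictor` class and its parameters are assumptions made for illustration. It does show the proactive pattern, though: the prediction is available before the idle period begins, so the system can pick a sleep depth up front.

```python
class IdlePredictor:
    """Predicts the next idle duration from recent history (EWMA)."""

    def __init__(self, alpha: float = 0.5, initial_s: float = 0.0) -> None:
        self.alpha = alpha            # weight given to the newest observation
        self.estimate_s = initial_s   # current prediction in seconds

    def observe(self, idle_s: float) -> float:
        """Fold a measured idle period into the estimate and return it."""
        self.estimate_s = self.alpha * idle_s + (1.0 - self.alpha) * self.estimate_s
        return self.estimate_s


predictor = IdlePredictor(alpha=0.5, initial_s=10.0)
first = predictor.observe(20.0)
second = predictor.observe(5.0)
print(first, second)
```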

Adaptive DVFS

AI can enable more sophisticated and finer-grained adaptive DVFS. Instead of simple threshold-based adjustments, ML models can learn complex relationships between workload characteristics, performance requirements, and optimal voltage/frequency settings, even across different temperature ranges, leading to more precise and efficient power management.

Anomaly Detection for Power Faults

ML can also be used to detect anomalous power consumption patterns that might indicate a hardware fault, a software bug, or even a cybersecurity threat, allowing for proactive intervention.

Ultra-Low Power Design Methodologies

The pursuit of extreme power efficiency has led to the development of specialized design methodologies:

- Subthreshold Computing: Operating transistors at voltages below their threshold voltage. This drastically reduces dynamic power (due to $V^2$) but comes with challenges related to speed, variability, and noise immunity. Ideal for ultra-low-power, low-speed applications.

- Near-Threshold Computing (NTC): Operating just at or above the threshold voltage. NTC offers a better balance between power savings and performance compared to subthreshold, making it suitable for a broader range of applications where modest performance is acceptable.

- Asynchronous Design: Clockless circuits, where components communicate using handshaking signals rather than a global clock. Asynchronous designs can potentially eliminate the power consumed by driving a global clock and only consume power when active, though their design complexity is higher.

The Interplay of Strategies: A Holistic Approach

It is important to emphasize that no single power optimization technique stands in isolation. The most effective power management strategies involve a holistic approach, intelligently combining hardware design choices with software optimization and system-level management.

For instance, an energy-efficient chip (hardware) still requires well-optimized firmware (software) to utilize its low-power modes effectively. Dynamic Voltage Scaling capabilities on a processor are only useful if the operating system or application can dynamically adjust voltage and frequency based on the actual workload. Similarly, an energy harvesting solution (advanced technique) needs a sophisticated PMIC (hardware) to manage the intermittent power and an intelligent algorithm (software) to schedule tasks when sufficient power is available.

The interplay is dynamic and synergistic:

- Hardware Foundation: Start with the most energy-efficient components and architectures.

- Architectural Optimization: Implement techniques like DVS, power gating, and clock gating at the design level.

- Software Intelligence: Develop efficient algorithms, optimized code, and intelligent resource management to leverage the hardware capabilities.

- System-Level Control: Use PMICs and operating system power managers to coordinate interactions and transitions between power states.

- Advanced Techniques: Integrate energy harvesting or AI-driven management for long-term self-sufficiency and predictive optimization.

Measuring and Validating Power Consumption

Effective power optimization requires continuous measurement, analysis, and validation throughout the design and development cycle.

- Power Profiling Tools: Specialized hardware tools (e.g., power analyzers, current probes, oscilloscopes) are essential for accurately measuring power consumption at various points in the circuit and under different operational scenarios.

- Software-Based Estimation: Tools that estimate power consumption based on code execution and architectural models can provide early insights.

- Simulation and Emulation: During the design phase, simulators and emulators can help predict power behavior before physical silicon is available.

- Real-World Testing: Ultimately, devices must be tested in their target environment to validate power consumption against design goals and expected battery life. This includes testing under varying environmental conditions and diverse workloads.

Iterative refinement, driven by accurate measurement, is key to achieving optimal power efficiency. Identify the “power hogs” in your system, implement optimization techniques, measure the impact, and repeat.
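Once a power analyzer or current probe has captured a trace, the energy used by an operation can be estimated by integrating current over time. The sketch below does this with the trapezoidal rule. It assumes evenly spaced samples and a constant supply voltage, and the function name and sample values are illustrative.

```python
from typing import Sequence


def energy_mj(current_ma: Sequence[float], voltage_v: float, dt_s: float) -> float:
    """Estimate energy in millijoules from evenly spaced current samples.
    Trapezoidal integration gives charge in mA*s (mC); multiplying by the
    supply voltage converts it to mJ."""
    charge_mc = sum(
        (a + b) / 2.0 * dt_s for a, b in zip(current_ma, current_ma[1:])
    )
    return charge_mc * voltage_v


# Hypothetical trace: a short transmit burst between two sleep periods,
# sampled every 10 ms on a 3.0 V supply.
trace_ma = [0.01, 0.01, 20.0, 20.0, 0.01, 0.01]
print(f"{energy_mj(trace_ma, voltage_v=3.0, dt_s=0.01):.3f} mJ")
```

Comparing this figure before and after an optimization gives a direct measure of its impact for a given scenario.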

Conclusion

The journey towards truly efficient and sustainable IoT is fundamentally intertwined with the mastery of power optimization. As billions of devices connect to the digital fabric of our world, the cumulative energy consumption becomes a substantial global concern. Engineers are at the forefront of this challenge, employing a diverse toolkit of strategies ranging from selecting cutting-edge, low-power hardware to crafting highly efficient software and pioneering advanced techniques like energy harvesting and AI-driven power management.

Understanding power optimization techniques such as dynamic voltage scaling, clock gating, intelligent power management, and the crucial role of efficient algorithms empowers engineers to build systems that are not only high-performing but also remarkably energy-efficient. This enables devices to operate longer, reduce maintenance overhead, minimize environmental impact, and ultimately unlock the full potential of the Internet of Things across every facet of our lives. The continuous innovation in this field promises a future where IoT devices seamlessly integrate into our environment, powered by ingenuity and efficiency.
